This week, just after Hot Chips 34, we attended the first of Intel’s new Chalk Talk series. The focus of this event was to discuss the benefits of adding acceleration into CPUs, and Intel’s plans to do just that. If this sounds familiar, about a month ago we discussed how server vendor philosophies have changed and Intel is focused on “More Acceleration, More Better” with its next-generation Sapphire Rapids Xeon. You can learn more about that in More Cores More Better AMD Arm and Intel Server CPUs in 2022-2023.

Since Intel is now actively pushing this theme, we wanted to walk through the slides from the event.

Intel Accelerates Messaging on Acceleration Ahead of Sapphire Rapids Xeon

Using accelerators for commonly used tasks is something Intel implements in a few ways. It has cards and other devices such as FPGAs, ASICs, eASICs, and more for specific domains or ultimate flexibility. In the Intel Xeon Sapphire Rapids generation, and even Ice Lake, acceleration generally falls into two categories: dedicated accelerator blocks, or new instructions added to the processors that greatly accelerate common tasks. AES-NI for crypto acceleration is probably the best known of these today, but Intel has a number of these technologies focusing on AI, HPC, and networking.
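For readers who want to see which instruction-based accelerators a given Linux server exposes, here is a minimal sketch that reads the CPU flags from `/proc/cpuinfo`. The helper names (`parse_cpu_flags`, `detect_features`) and the feature grouping are our own for illustration, and the flag names are the ones we understand the Linux kernel to report. Accelerator blocks such as QuickAssist are not instruction set extensions, so this check does not cover them.

```python
from pathlib import Path

# Linux /proc/cpuinfo flag names grouped by the feature they indicate.
# The grouping is an illustrative assumption, not an Intel-defined list.
FEATURE_FLAGS = {
    "AES-NI": ["aes"],
    "AVX-512": ["avx512f"],
    "AVX-512 VNNI": ["avx512_vnni"],
    "AMX": ["amx_tile", "amx_int8", "amx_bf16"],
}


def parse_cpu_flags(cpuinfo_text):
    """Return the set of flags from the first 'flags' line of cpuinfo text."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            return set(value.split())
    return set()


def detect_features(flags):
    """Map each feature name to True only if all of its flags are present."""
    return {
        name: all(flag in flags for flag in needed)
        for name, needed in FEATURE_FLAGS.items()
    }


if __name__ == "__main__":
    cpuinfo = Path("/proc/cpuinfo")
    # On systems without /proc/cpuinfo, every feature reports "no".
    text = cpuinfo.read_text() if cpuinfo.exists() else ""
    for name, present in detect_features(parse_cpu_flags(text)).items():
        print(f"{name}: {'yes' if present else 'no'}")
```

On an Ice Lake Xeon we would expect AES-NI and the AVX-512 entries to show up while AMX does not, since AMX arrives with Sapphire Rapids.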

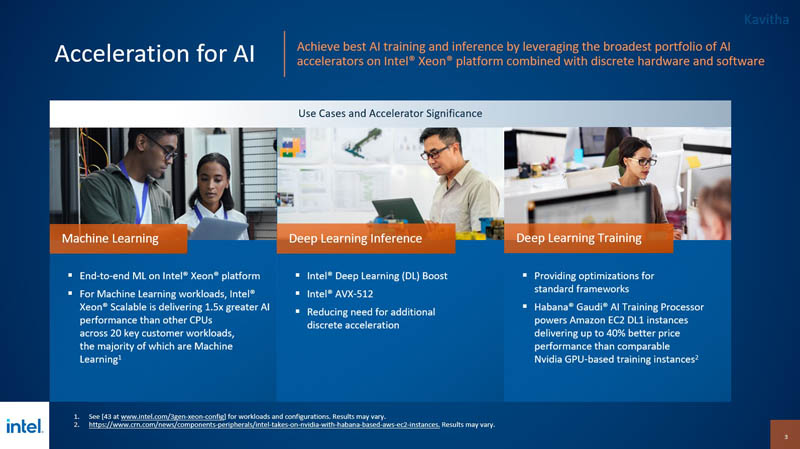

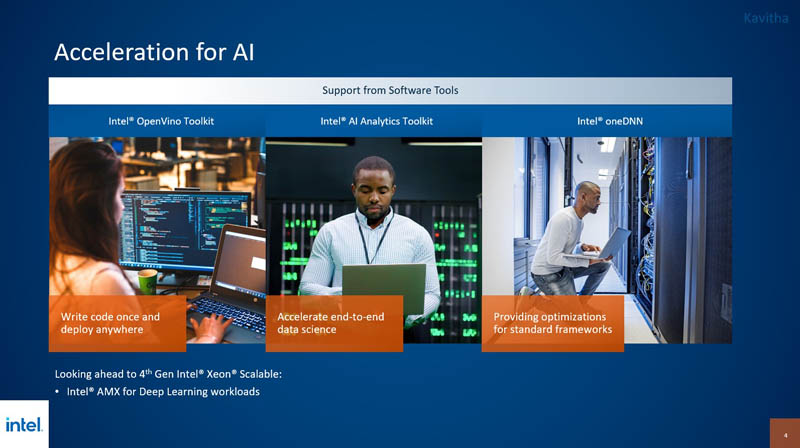

On the AI side, Intel is focused on adding new features into its chips. Intel has new products like the Intel Habana Gaudi2 to compete directly in the AI training market. It has the upcoming Intel Ponte Vecchio GPU, but it also has features built into its client and server CPUs. With AMX in the next generation of chips, Intel is not just focused on adding inference support. It is also looking at accelerating deep learning workloads on massive datasets that cannot fit into GPU memory.

In the future, if one thinks of applications, there will be parts of applications that run traditional workloads such as generating UI (e.g. web pages), storing and accessing data in databases, and more. Part of that flow in the future will be doing AI inference for a portion of the workload. Intel is focused on providing AI inference for that without having to add an accelerator. It is also looking at the opportunity to provide training with excess CPU capacity. Intel AMX is a technology we have heard a lot of buzz about.

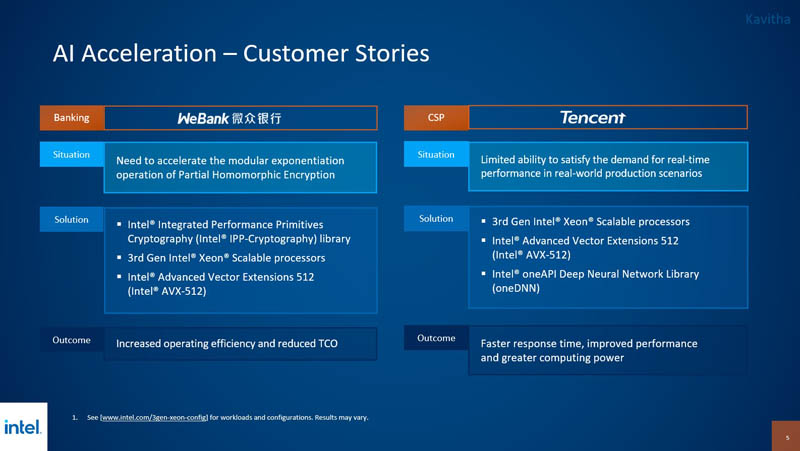

Intel is saying it has customers in China focused on using Intel CPUs for AI acceleration today.

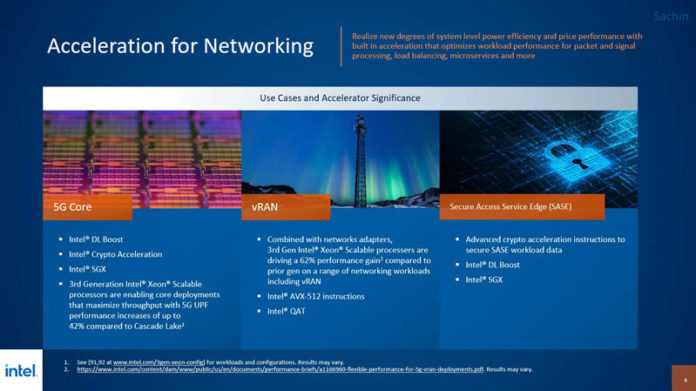

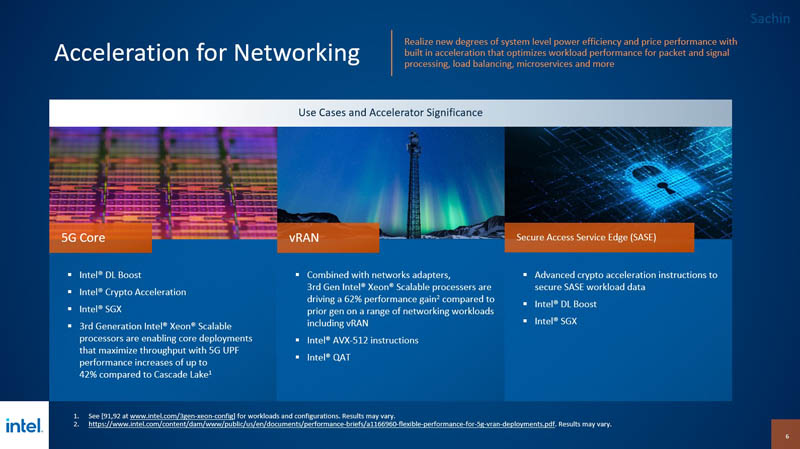

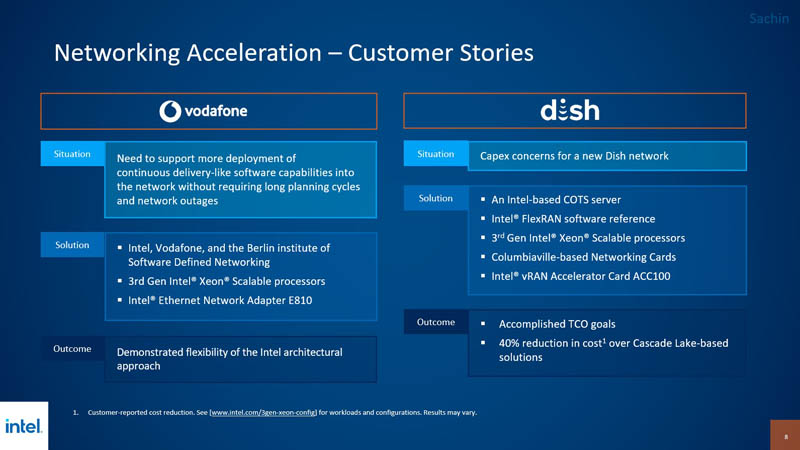

On the networking side, Intel has a number of features it has been building for years. These include the crypto acceleration built into its chips as well as QuickAssist.

We just did a piece on Intel QuickAssist Parts and Cards by QAT Generation, and have started updating our 2016–2017 QAT work with the Netgate 4100 pfSense Plus Review where the chip used can be lower power because it is using QAT acceleration.

Beyond QAT, Intel also built new instructions to assist common functions in areas like telco for vRAN and ORAN.

In this space, Intel is widely used because of this work. When you walk or drive down the street, much of the telecom infrastructure that you pass is based on Intel Xeon because of this market focus.

Beyond the Sapphire Rapids chips, Intel also has the Atom and Xeon D series, as well as NICs, FPGAs, IPUs, and more to accelerate this space.

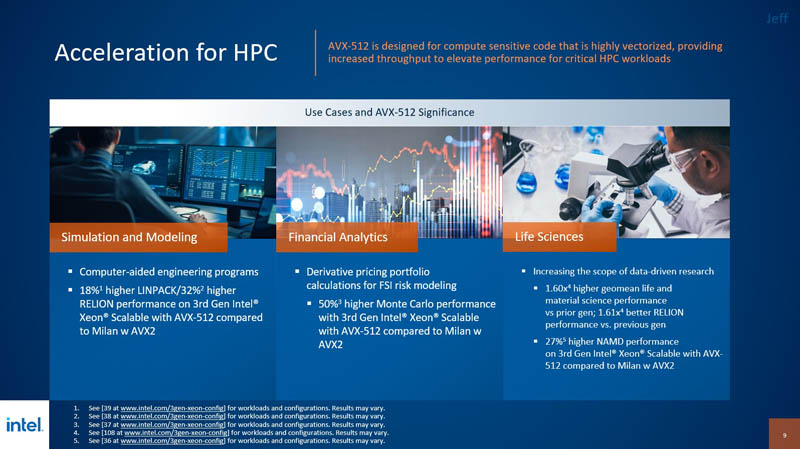

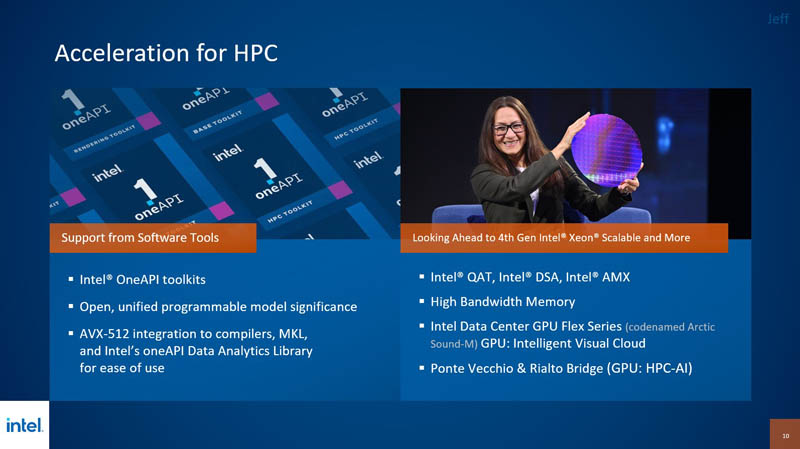

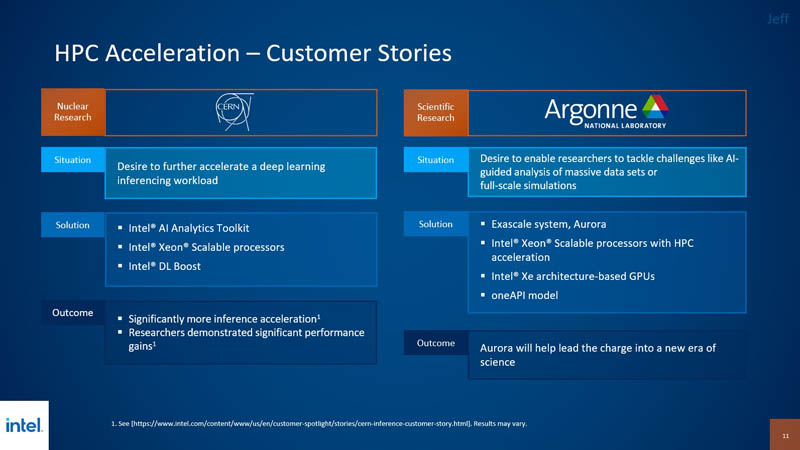

On the HPC side, Intel has been touting AVX-512 for years. AMD will add AVX-512 with its upcoming Genoa chip. Still, AVX-512 is a fairly well-known technology at this point in the HPC space.

Intel discussed not just Sapphire Rapids, but also that it has Ponte Vecchio coming and Rialto Bridge after that. Rialto Bridge, again, is the successor to Ponte Vecchio. It is an upgrade, but not the big architectural shift that we will get with Falcon Shores.

The Intel Flex Series, previously “Arctic Sound-M,” felt like a bit of a stretch to include in the HPC section, but perhaps it was something that Intel wanted to talk about given the launch this week and needed a place to put it.

Again, in this space, Sapphire Rapids and Sapphire Rapids with HBM will bring next-generation performance along with Ponte Vecchio.

Final Words

Intel is going to be pushing the acceleration storyline a lot over the next few months. We have been showing why acceleration matters for quite some time now, for example in AWS EC2 m6 Instances Why Acceleration Matters. STH content is built with a longer-term view, so we are

In September, we are going to look at Intel QuickAssist Technology, or Intel QAT, and show a few aspects of the technology that many have never seen. We are also going to show just how much of an impact the current generation Intel QAT has in both mainstream servers and Xeon D. In Sapphire Rapids, Intel will greatly expand the scope and span of QuickAssist, and we are laying the foundation for that on STH today.