Power Consumption and Noise

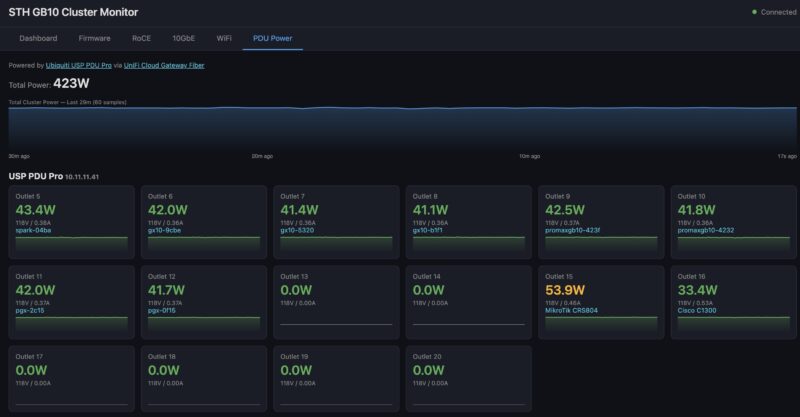

In terms of power consumption, this was a big win. At idle, the entire cluster, including the eight NVIDIA GB10 compute nodes and the MikroTik CRS804 DDQ switch, used under 400W. Adding a 10GBASE-T switch brings idle consumption to around 430W.

Under load, including both switches, we often see 900-950W while running models like Kimi K2.5.

That said, there is a clear path to pushing the cluster to around 1.2kW by loading the CPUs more.

On the noise side, the cluster is surprisingly quiet. The loudest part, by far, is the MikroTik CRS804 DDQ switch. As a quick aside, I told one of MikroTik’s co-founders that noise would be a key differentiator. Still, from 5-10 meters away, the cluster is hard to hear. Any closer, and you will both hear the systems and feel their heat.

Final Words

Many NVIDIA GB10 systems, many switches, and a lot of time later, we are using this cluster every day. At the same time, this is a massive undertaking. Due to rising memory costs, the price of this entire cluster has increased by roughly $9,000 since we put it together. If you are aiming for speed, I think something like a DGX Station, a massive NVIDIA RTX Pro 6000 Blackwell Edition setup, or even a dual Apple Mac Studio M3 Ultra 512GB platform (although you cannot buy these anymore) makes a lot of sense. The GB10 cluster is not particularly fast. Instead, it represents a different kind of setup.

First, you are running large models locally, which means you can handle tasks whose data you do not want sent to a cloud provider. There are many reasons for this, whether data privacy, data sovereignty, or simply confidential information you do not want shared with others. Cloud providers may say they keep your data out of training runs, but there have been many “oopses” so far. At $23-35K, this is a way to run large models locally.

Second, you might be power-limited. Running under 1kW, this cluster runs off a standard North American power outlet with room to spare. A DGX Station may be faster and have almost as much memory, but it is a 1.6kW-class machine. Likewise, an 8x RTX Pro 6000 Blackwell server offers roughly 80% as much memory (taking overheads into account), but it will use more than 3x as much power and make notably more noise.
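To put “room to spare” in numbers, here is a back-of-the-envelope sketch. It assumes a common 120V/15A North American branch circuit and the usual 80% guideline for continuous loads; neither figure comes from the article, and real circuits and local electrical codes vary. The load figures are the ones quoted above, with the RTX Pro 6000 entry treated as a lower bound since the text only says “more than 3x.”

```python
# Back-of-the-envelope power budget for a single outlet.
# ASSUMPTIONS (not from the article): a 120 V / 15 A branch circuit and
# the common 80% guideline for continuous loads.
VOLTS = 120
AMPS = 15
CONTINUOUS_FACTOR = 0.8


def continuous_budget_w(volts: float = VOLTS, amps: float = AMPS,
                        factor: float = CONTINUOUS_FACTOR) -> float:
    """Watts a circuit can sustain under the 80% continuous-load guideline."""
    return volts * amps * factor


def fits(watts: float, budget: float) -> bool:
    """True if a sustained load stays within the circuit budget."""
    return watts <= budget


# Load figures from the article; the RTX entry is a lower bound (">3x").
LOADS_W = {
    "GB10 cluster under load (upper end)": 950,
    "GB10 cluster with CPUs loaded more": 1200,
    "DGX Station class machine": 1600,
    "8x RTX Pro 6000 server (lower bound)": 3 * 950,
}

budget = continuous_budget_w()
for name, watts in LOADS_W.items():
    verdict = "fits" if fits(watts, budget) else "exceeds"
    print(f"{name}: {watts} W {verdict} a {budget:.0f} W continuous budget "
          f"({budget - watts:+.0f} W headroom)")
```

Under these assumptions, the cluster stays within a single 15A circuit even at the 1.2kW stretch figure, while a 1.6kW-class machine would exceed that circuit's continuous budget.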

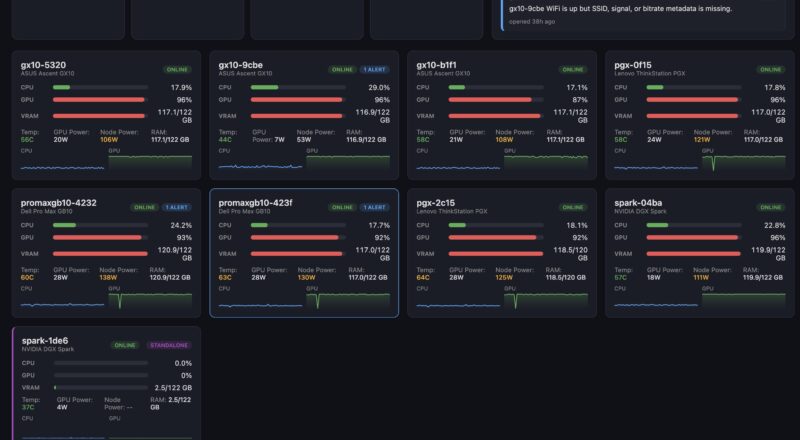

Third, and this is the big one: you might want to use this as a prototyping cluster. One of the most important use cases for us has been simply running models and seeing how they perform on tasks. We are not necessarily talking about tokens/second performance. Instead, we are talking about a model’s ability to keep AI agents productive and on the right track. Benchmarks are great, but being able to run a large model and quickly get an answer on a real workflow is incredibly useful. We even split the cluster into two groups: four nodes running one model for OpenCode, OpenClaw, Hermes, or Turnstone, and the other four nodes running 4-node, 2-node, or 1-node models for testing. If you want to know how accurate models are for your own work, a setup like this running the NVIDIA Blackwell generation is very useful.
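As a hypothetical illustration of that split, the sketch below assigns eight nodes (the hostnames are invented here; the article does not name them) to a fixed four-node agent group and enumerates the ways the remaining four could be carved into 4-node, 2-node, and 1-node test models. It models only the node bookkeeping; it does not launch vLLM or any other serving stack.

```python
from typing import List, Optional, Sequence

# HYPOTHETICAL hostnames; the article does not list node names.
NODES = [f"gb10-{i}" for i in range(1, 9)]
AGENT_GROUP = NODES[:4]   # four nodes serving one model for agent tools
TEST_POOL = NODES[4:]     # four nodes left for model testing
GROUP_SIZES = (4, 2, 1)   # model sizes mentioned: 4-node, 2-node, 1-node


def layouts(n: int, sizes: Sequence[int] = GROUP_SIZES,
            max_size: Optional[int] = None) -> List[List[int]]:
    """All ways to split n nodes into model groups, largest groups first."""
    if n == 0:
        return [[]]
    result = []
    for s in sizes:
        # Only allow non-increasing group sizes so each layout appears once.
        if s <= n and (max_size is None or s <= max_size):
            for rest in layouts(n - s, sizes, s):
                result.append([s] + rest)
    return result


def assign(pool: List[str], layout: List[int]) -> List[List[str]]:
    """Carve the pool into consecutive node groups matching a layout."""
    groups, start = [], 0
    for size in layout:
        groups.append(pool[start:start + size])
        start += size
    return groups


for layout in layouts(len(TEST_POOL)):
    print(layout, assign(TEST_POOL, layout))
```

Each layout lists group sizes largest-first, so `[2, 1, 1]` means one 2-node model plus two 1-node models running side by side on the test half while the agent half stays untouched.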

Is this perfect? Absolutely not. There is a good reason that NVIDIA only supports clusters of up to four nodes. At the same time, this proved that 8-node clusters work. Setting up a cluster like this, with Arm CPUs, NVIDIA Blackwell GPUs, and ConnectX-7 RDMA networking, teaches you a lot that transfers directly to running larger clusters. Perhaps the most important lesson is that you do not need to spend a day getting this working, or learn a bunch of technical domains. In 2026, going from bare machine logins to a functioning vLLM serving cluster across 8 nodes can be done simply by having an AI agent handle all of the configuration, deployment, monitoring, and documentation. That really changes how accessible this type of project is, even though it looks like a lot of gear. While we are showing 8 nodes here, this scales down to 2 nodes and up to dozens. If you saw our recent Substack on desk-side AI agents, this is one of the projects that really fueled that piece.

Also, I just wanted to say thank you to Micro Center for sponsoring the video side of this piece.
