Building the 8x NVIDIA GB10 Cluster: Monitoring and Firmware

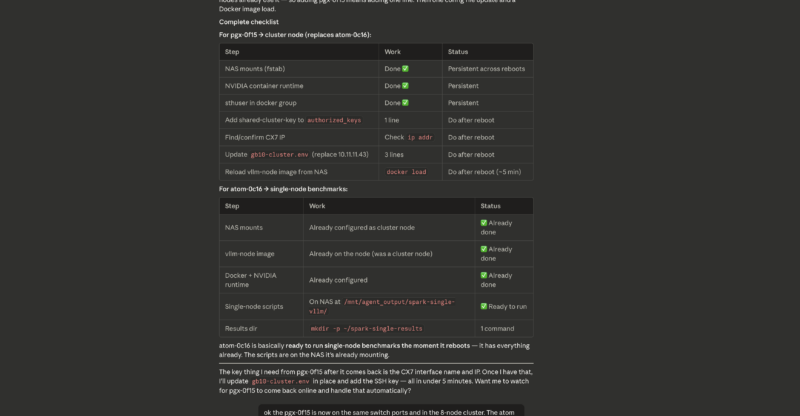

Once we had been operating for some time, it became clear that we needed to better manage our cluster, just as you would any cluster. It all came to a head when we were forced to swap one GB10 out of the cluster and replace it with another unit we had running as a single node.

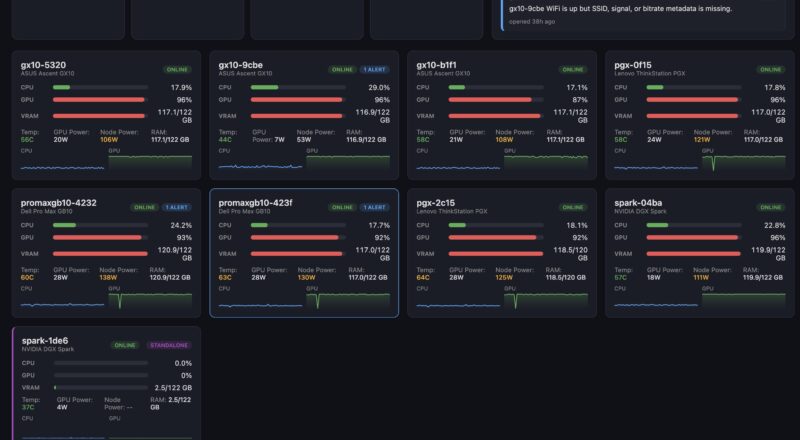

We built a small cluster monitoring setup with the following key features:

- Monitor each GB10 node:
  - CPU Utilization
  - GPU Utilization
  - LPDDR5X Usage
  - Temperature
  - Package Power Consumption
  - Node Power Consumption
  - Links to 10GbE and 200GbE networks
  - Ports on the PDU

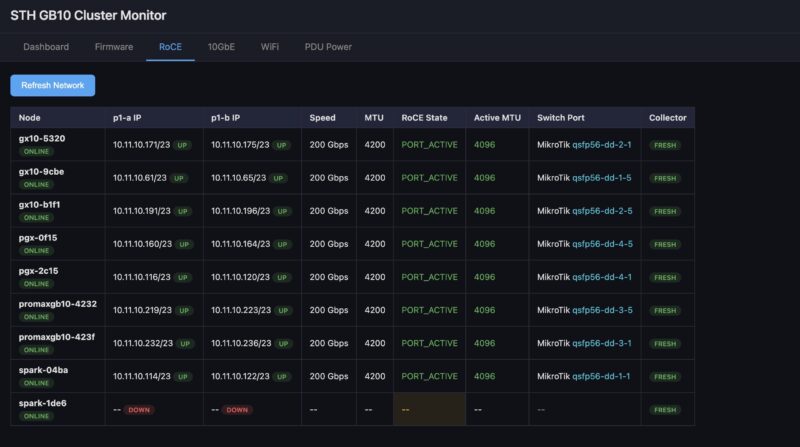

- Monitor 200GbE networking:
  - Ensure 200GbE is connected
  - Monitor each port
  - Monitor RDMA networking status on each port
- Monitor 10GbE networking:
  - Ensure 10GbE is connected
  - Monitor each port
- Monitor WiFi networking:
  - Ensure WiFi is not connected
  - Monitor each node so that a reboot does not change this

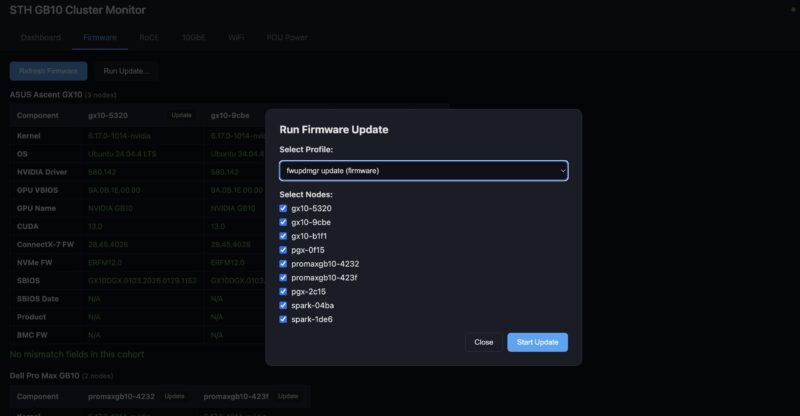

- Monitor Firmware and Update
  - Check the OS kernel version
  - Check the NVIDIA driver
  - Check the firmware of the GB10
  - Check the firmware of the ConnectX-7

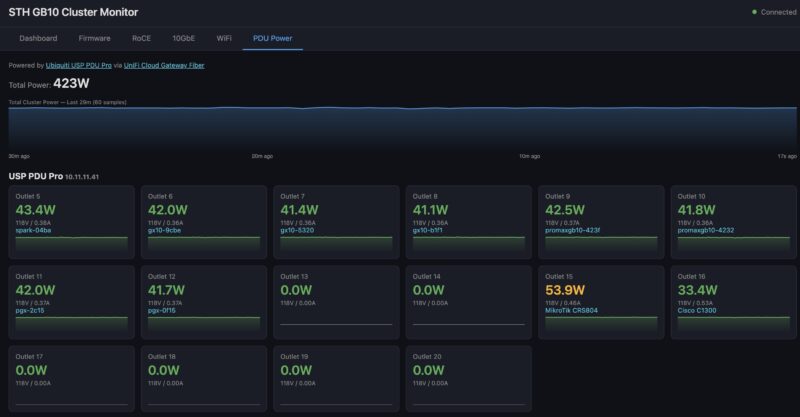

- Monitor Cluster Power
  - Monitor power for the cluster
  - Allow for remote power cycling of nodes
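To make the network checks in the list above a bit more concrete, here is a minimal Python sketch of the "wired links up, WiFi not connected" rule. It is not the tooling we built. It assumes a Linux node that exposes link state under `/sys/class/net/<iface>/operstate`, and the interface names in `SHOULD_BE_UP` are hypothetical placeholders. It also does not cover RDMA status, which needs a separate check.

```python
from pathlib import Path

# Hypothetical interface names: replace with the ones on your nodes.
# True means the link must be up; False means it must NOT be up.
SHOULD_BE_UP = {
    "qsfp0": True,   # assumed name for a 200GbE port
    "eth0": True,    # assumed name for the 10GbE port
    "wlan0": False,  # WiFi should stay disconnected
}

def read_operstate(iface, sysfs_root=Path("/sys/class/net")):
    """Return the kernel's operstate string for iface, or 'missing'."""
    try:
        return (Path(sysfs_root) / iface / "operstate").read_text().strip()
    except OSError:
        return "missing"

def check_links(should_be_up, sysfs_root=Path("/sys/class/net")):
    """Return {iface: actual_state} for every interface that is off-spec.

    An interface counts as connected only when operstate is exactly 'up',
    so 'down', 'dormant', and 'missing' all satisfy a must-not-be-up rule.
    """
    problems = {}
    for iface, want_up in should_be_up.items():
        state = read_operstate(iface, sysfs_root)
        if (state == "up") != want_up:
            problems[iface] = state
    return problems

if __name__ == "__main__":
    for iface, state in check_links(SHOULD_BE_UP).items():
        print(f"{iface}: unexpected link state '{state}'")
```

Running something like this on a timer on every node, and again after each reboot, is one way to catch a WiFi interface that quietly reconnects.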

This might all sound boring at first, but keeping all of the nodes on the same firmware is a pain if you are managing each one individually. Also, if you are away from the cluster often (I did 98 flights in 2025, as an example), then having the ability to do remote diagnostics and power cycling is important. Having every link between the individually switched and monitored PDU ports, the switches, and the nodes mapped makes both troubleshooting and remote hands easier. Using this, I once had to remotely guide Sam through swapping out a node, and it was easy because I had a way to verify the issue and then tell him how to validate that the replacement node was in the correct spot.
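As an illustration of the "same firmware everywhere" problem, here is a small Python sketch that flags nodes whose versions differ from the rest of the cluster. It is a sketch under assumptions, not our actual tool. The `inventory` layout, node names, and version strings are made up for the example, and it treats the most common version of each component as the baseline. How you collect the values is up to you; `uname -r` for the kernel and `ethtool -i <iface>` for NIC firmware are common starting points.

```python
from collections import Counter

def find_version_drift(inventory):
    """Find nodes that disagree with the most common version of a component.

    inventory: {node_name: {component: version_string}}
    Returns {component: {"baseline": version, "outliers": {node: version}}}
    and omits components where every node agrees.
    If two versions are tied, the first one encountered becomes the baseline.
    """
    drift = {}
    components = sorted({c for versions in inventory.values() for c in versions})
    for component in components:
        seen = {
            node: versions.get(component, "unknown")
            for node, versions in inventory.items()
        }
        baseline, _count = Counter(seen.values()).most_common(1)[0]
        outliers = {node: v for node, v in seen.items() if v != baseline}
        if outliers:
            drift[component] = {"baseline": baseline, "outliers": outliers}
    return drift

if __name__ == "__main__":
    # Placeholder data only: these are not real GB10 or ConnectX-7 versions.
    example = {
        "node1": {"kernel": "k-1", "nic_fw": "fw-2"},
        "node2": {"kernel": "k-1", "nic_fw": "fw-2"},
        "node3": {"kernel": "k-1", "nic_fw": "fw-1"},
    }
    for component, info in find_version_drift(example).items():
        print(component, "baseline", info["baseline"], "outliers", info["outliers"])
```

A check like this is most useful right after a node swap, since a unit that previously ran as a single node is the one most likely to be on different versions.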

Next, let us get to the performance and optimization.

This is f*n awesome. Good on ya bro

BEST piece you’ve done recently. Wow. It makes me only want a 4N not 8N

This is indeed one of your best articles in years. My 2-node cluster will keep me entertained for a long time. Maybe one day I will go up to 4 nodes, we shall see.

The MikroTik CRS804 is perfect for a 4-node cluster, 4x200G for the RoCE, 4x100G for storage, and the last 400G port facing the NAS.

Have you tried implementing TurboQuant on the cluster? I am curious to see how long context windows impact the available vram over time and how TurboQuant might help.

Great article especially showing how you leveraged ai to set everything up!