Performance and Optimization

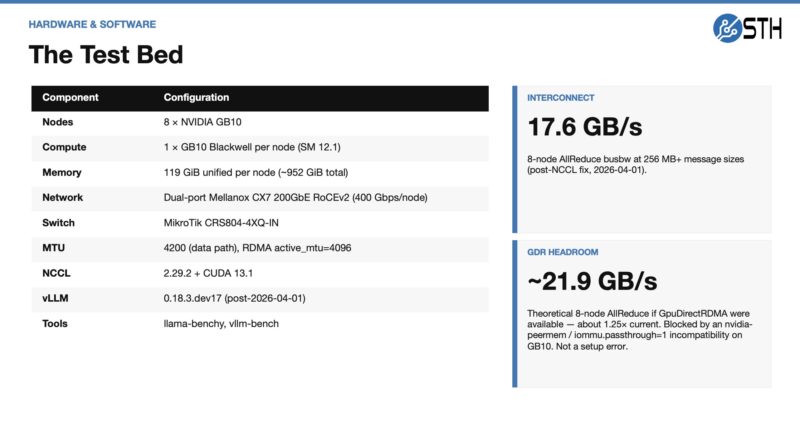

Let us quickly discuss the performance of this setup. The goal was to run Kimi K2.5, but the cluster has since run dozens of models, often with many quants per model. Here is the test bed, along with something that we noticed:

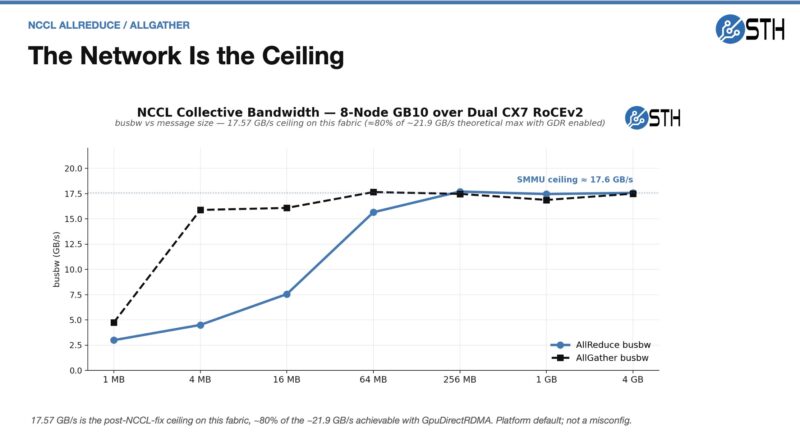

A quick word on those notes. We were only getting around 140Gbps on the network side. It looks like this is an SMMU ceiling for the platform.

The kernel default on the GB10 is iommu.passthrough=0, and NVIDIA’s own RDMA setup guide tells you to leave it alone. The 12.7 Gbps per-port direct-DMA cap is inherent to that mode, not a misconfiguration. NCCL on GB10 does not use GPUDirect RDMA; it uses CPU-staged copies, which sidestep the SMMU DMA-FQ cap. That is why the 8-node AllReduce hits 17.57 GB/s despite the per-port direct-DMA number being so much lower. Even so, the ceiling constrains our NCCL performance somewhat, so we are probably getting around 80% of the scaling we could if this were not something we ran into.
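Since the figures above mix gigabits per second (Gbps) and gigabytes per second (GB/s), it can help to put them on one scale. The short sketch below only does the unit conversion on the numbers quoted in the text; the function names are made up for illustration. The 17.57 GB/s AllReduce figure works out to roughly 140.6 Gbps, which sits right at the ~140Gbps network-side ceiling mentioned earlier, although the two measurements are not necessarily the same thing.

```python
def gbps_to_gigabytes_per_s(gbps: float) -> float:
    """Convert gigabits per second to gigabytes per second (8 bits per byte)."""
    return gbps / 8.0


def gigabytes_per_s_to_gbps(gb_per_s: float) -> float:
    """Convert gigabytes per second to gigabits per second."""
    return gb_per_s * 8.0


if __name__ == "__main__":
    # All three inputs are the figures quoted in the article text.
    per_port_cap_gbps = 12.7       # per-port direct-DMA cap
    network_ceiling_gbps = 140.0   # observed network-side ceiling
    allreduce_gb_per_s = 17.57     # 8-node AllReduce result

    print(f"Per-port cap:    {gbps_to_gigabytes_per_s(per_port_cap_gbps):.2f} GB/s")
    print(f"Network ceiling: {gbps_to_gigabytes_per_s(network_ceiling_gbps):.2f} GB/s")
    print(f"AllReduce:       {gigabytes_per_s_to_gbps(allreduce_gb_per_s):.2f} Gbps")
```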

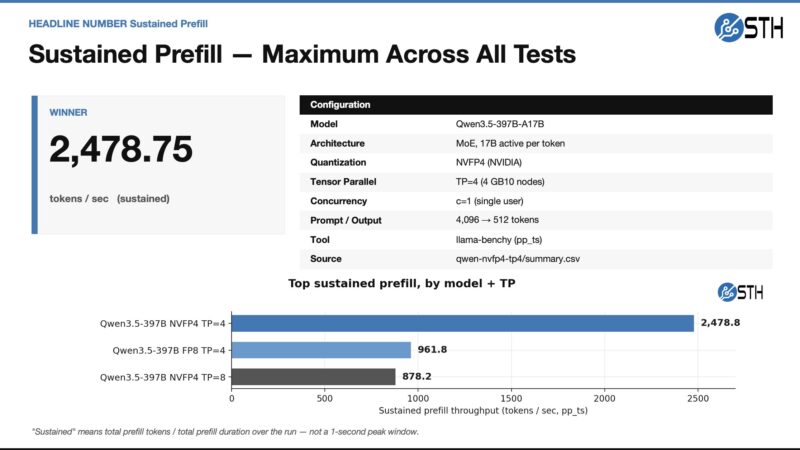

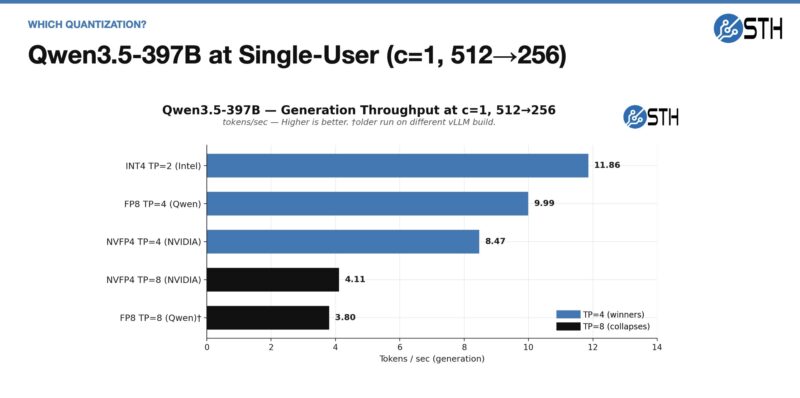

Taking a quick look at the sustained prefill, here is what we saw just using Qwen3.5-397B as an example:

NVFP4 really helped the GB10 cluster rip through these, although this was only at TP=4, so it used half of the nodes.

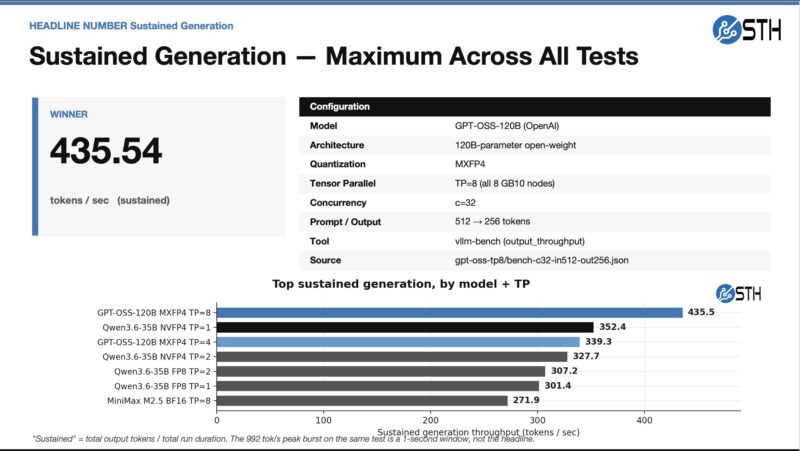

On the generation side, GPT-OSS-120B did really well:

Of course, all of these numbers will change with new software versions, and there are other quantizations available, so take them as a point-in-time snapshot of what is happening. Let us move on to some of the more focused results.

Kimi K2.5 and Kimi K2.6 Results

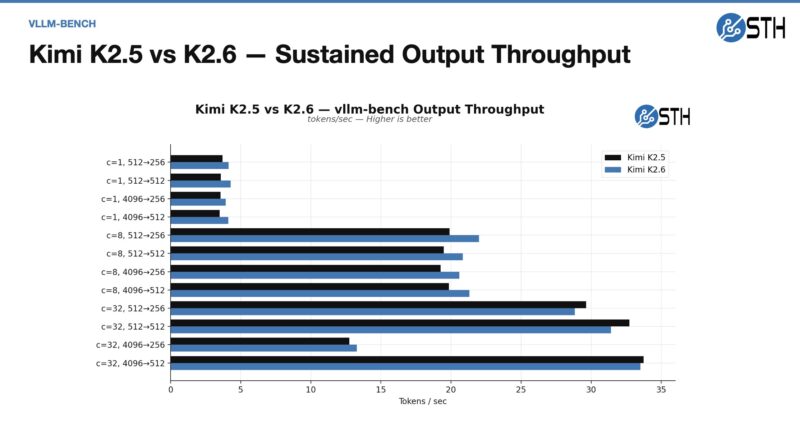

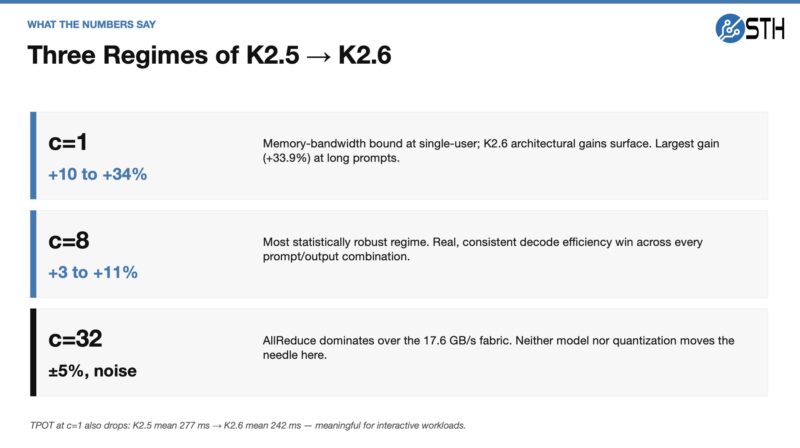

Something that kept this from going live is that we ended up in a cycle: we would be about ready to publish, then a new model would come out, we would run it through benchmarks for a day, record the results, and send it to the editing queue. By the time that was done, another model would be out. Here is an idea of Kimi K2.5 and K2.6 performance using the Moonshotai INT4 quant on HuggingFace:

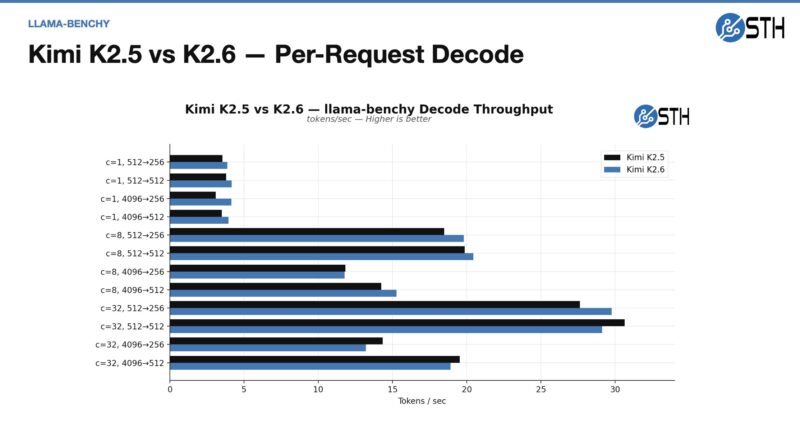

Here is the decode performance:

In theory, these should have been the same, or at least closer, but here is a summary of what we saw across concurrency levels.

That was an interesting finding and, combined with model advancements, is one reason we will use K2.6 over K2.5 on this cluster, even though the original goal was to run K2.5.

Qwen3.5-397B-A17B Insights

I think folks know I am a big fan of Qwen3.5-397B-A17B. Here are the single-user results, which are not super-fast since there are only 17B active parameters.

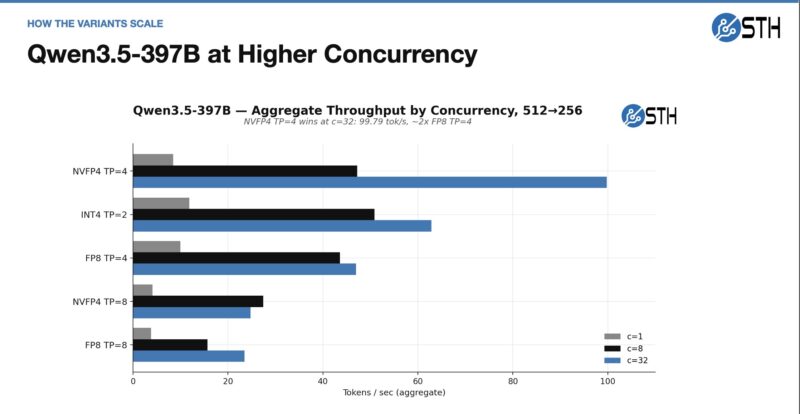

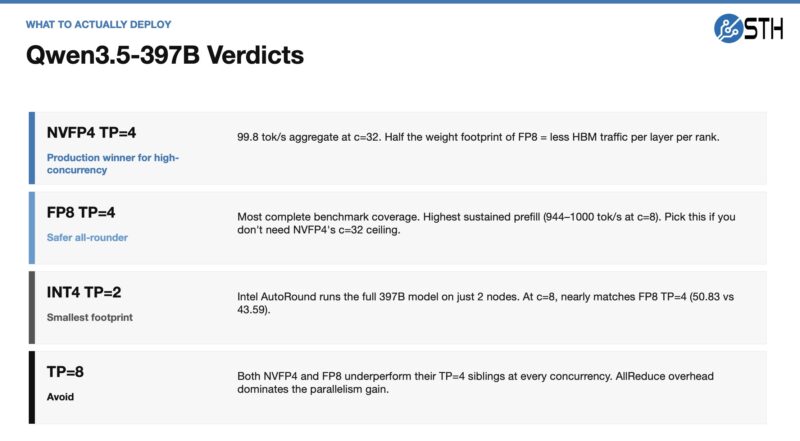

When we crank up the concurrency, however, we can also significantly increase speed.

Something we have heard that is not necessarily true is the idea that moving a model that fits on one node to eight nodes gives you 8x 273GB/s of memory bandwidth and more GPUs, so it will run much faster on eight nodes than on one. That is false. The penalty for going from a single node to coordinating over allreduce, even on the fast 200Gbps networking, is non-trivial. Indeed, the best guidance I can give is that denser models tend to run better on the cluster, and that you should keep models running on as few nodes as possible to avoid the communication performance hit.

Our basic summary here is that since this can fit on four nodes, run it on four nodes, not eight.
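To make that reasoning concrete, here is a toy model, not a measurement. It assumes the memory-bound work of one decode step splits evenly across nodes, while every multi-node step also pays a fixed synchronization cost for the allreduce traffic. Both timing constants are hypothetical placeholders chosen only to show the shape of the curve; they are not numbers from our testing.

```python
def decode_step_time_ms(nodes: int, single_node_ms: float, sync_ms: float) -> float:
    """Toy per-step time: evenly split work plus a fixed multi-node sync cost."""
    if nodes < 1:
        raise ValueError("nodes must be >= 1")
    # A single node has no cross-node communication to pay for.
    comm_ms = 0.0 if nodes == 1 else sync_ms
    return single_node_ms / nodes + comm_ms


def speedup(nodes: int, single_node_ms: float, sync_ms: float) -> float:
    """Speedup relative to running the same step on one node."""
    return single_node_ms / decode_step_time_ms(nodes, single_node_ms, sync_ms)


if __name__ == "__main__":
    SINGLE_NODE_MS = 40.0  # hypothetical time for one step on one node
    SYNC_MS = 15.0         # hypothetical fixed sync cost once work spans nodes

    for n in (1, 2, 4, 8):
        step = decode_step_time_ms(n, SINGLE_NODE_MS, SYNC_MS)
        gain = speedup(n, SINGLE_NODE_MS, SYNC_MS)
        print(f"{n} node(s): {step:.1f} ms per step, {gain:.2f}x vs one node")
```

Even in this simplified picture, the fixed communication term dominates as the per-node share of work shrinks, so eight nodes land far short of an 8x gain. Real behavior depends on the model, the parallelism scheme, and the software stack.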

GPT-OSS-120B Insights

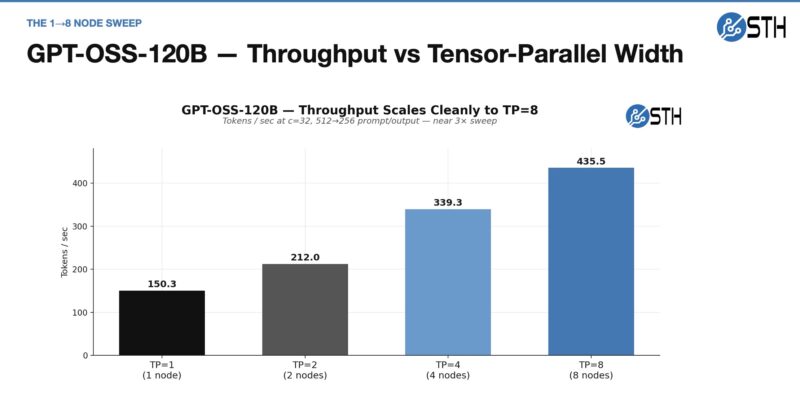

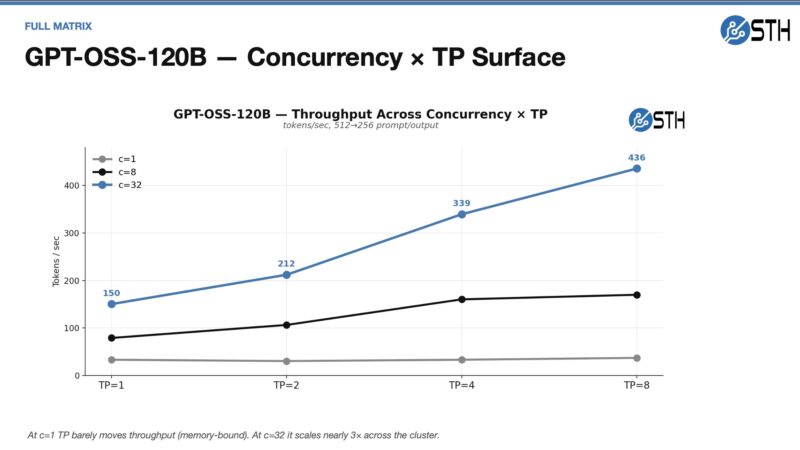

GPT-OSS-120B is not the biggest model and, frankly, is one that we were using a lot at the end of 2025, but newer models have since eclipsed it. Still, we wanted to check what would happen if we scaled from a single node to multiple nodes.

You can see the immediate impact of scaling from the single node to the second node, as we are not getting anywhere near a 100% increase. This is because we are going over the network and taking that performance penalty of moving off-node and coordinating work. We also wanted to see the impact of scaling on concurrency.
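One simple way to put a number on "not anywhere near a 100% increase" is scaling efficiency: measured throughput divided by the throughput you would get if adding nodes scaled perfectly. The sketch below uses a hypothetical helper and hypothetical throughput values purely to show the calculation; substitute your own measurements.

```python
def scaling_efficiency(base_tps: float, scaled_tps: float,
                       base_nodes: int, scaled_nodes: int) -> float:
    """Fraction of ideal linear scaling actually achieved (1.0 means perfect)."""
    if base_tps <= 0 or base_nodes < 1 or scaled_nodes < 1:
        raise ValueError("throughput must be positive and node counts >= 1")
    ideal_tps = base_tps * (scaled_nodes / base_nodes)
    return scaled_tps / ideal_tps


if __name__ == "__main__":
    # Hypothetical example values in tokens/second, not results from the charts.
    one_node_tps = 100.0
    two_node_tps = 130.0

    eff = scaling_efficiency(one_node_tps, two_node_tps, 1, 2)
    gain_pct = (two_node_tps / one_node_tps - 1.0) * 100.0
    print(f"Throughput gain: {gain_pct:.0f}%, scaling efficiency: {eff:.0%}")
```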

As a quick note, if you just ran eight separate instances on GB10 nodes at 32 concurrency, that would be around 1200 tokens/second. For some, that may be a much better option.
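As a rough breakdown of that figure, the sketch below reads the ~1200 tokens/second as the aggregate across all eight instances, with each instance serving 32 concurrent requests. That reading is an assumption, and the helper names are made up for illustration.

```python
def per_instance_tps(aggregate_tps: float, instances: int) -> float:
    """Throughput each independent instance contributes to the aggregate."""
    return aggregate_tps / instances


def per_request_tps(instance_tps: float, concurrency: int) -> float:
    """Average tokens/second seen by one request on an instance."""
    return instance_tps / concurrency


if __name__ == "__main__":
    AGGREGATE_TPS = 1200.0  # approximate figure from the text
    INSTANCES = 8           # one instance per GB10 node
    CONCURRENCY = 32        # concurrent requests per instance (assumed reading)

    node_tps = per_instance_tps(AGGREGATE_TPS, INSTANCES)
    request_tps = per_request_tps(node_tps, CONCURRENCY)
    print(f"Per instance: {node_tps:.1f} tokens/s")
    print(f"Per request:  {request_tps:.2f} tokens/s")
    print(f"Total requests in flight: {INSTANCES * CONCURRENCY}")
```

The trade-off is that independent instances maximize total throughput for models that fit on one node, while each individual request still runs at single-node speed.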

My best advice for performance is to check out Spark-Arena. Folks in the NVIDIA GB10 community are working on performance every day. Frankly, because not all Blackwell GPUs are equal, new optimizations sometimes come along specifically for the GB10 platform and can significantly increase performance. That project is great and will keep you better informed on overall performance.

Next, let us get to the power consumption and noise.
