Today we have the long-awaited launch of the AMD Instinct MI300 series. Frankly, we thought this series was launching back in June 2023, but today is officially the day. The MI300 series has two family members. The MI300A is the APU, combining CPU and GPU IP in a single package. AMD has fewer CPU cores in its APU than something like NVIDIA Grace Hopper, but the integration is on another level. Perhaps a few levels ahead.

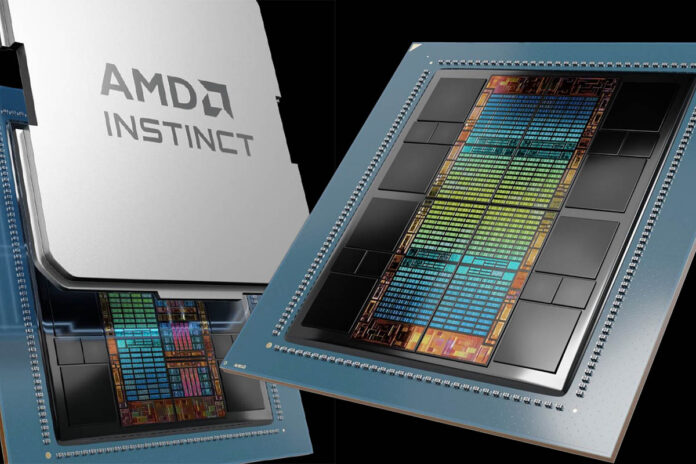

On the more traditional GPU side, we have the AMD Instinct MI300X. The MI300X is the GPU model designed to take on the NVIDIA H100 head-on. Perhaps the easiest way to remember the difference is that the MI300A looks almost like an EPYC Genoa CPU, while the MI300X looks like an OAM/SXM assembly (it is OAM, not SXM, of course).

In this article, we are going to get into the chips. First, though, let us jump ahead to the specs.

AMD MI300X and MI300A vs NVIDIA H100 and MI250X

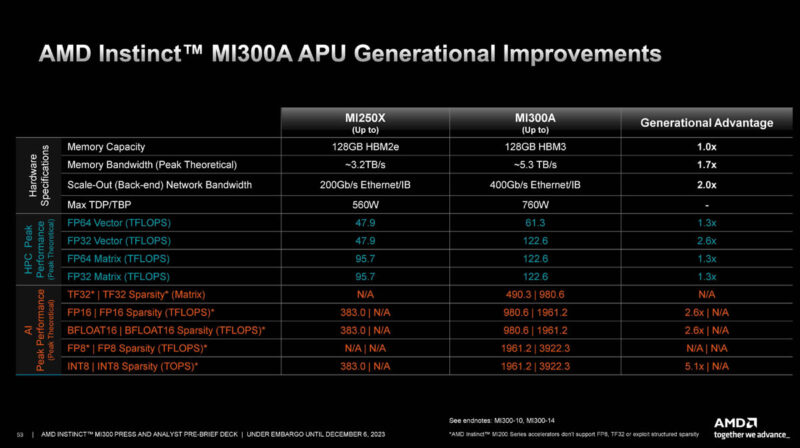

Here is AMD’s slide comparing its previous-generation GPU-only MI250X product to the MI300A APU. Even after adding CPU cores to the package, AMD is still showing some massive generational gains.

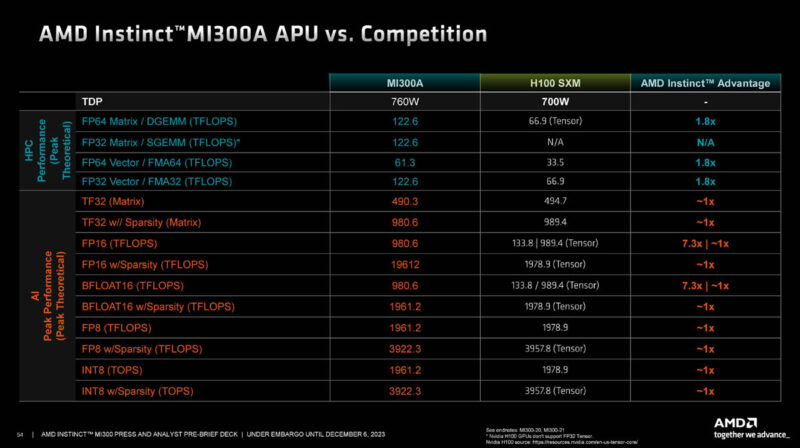

Taking a look at the MI300A versus the H100 SXM, again an APU (CPU + GPU) versus a GPU-only part, AMD thinks its chip is in the same ballpark while also including a CPU.

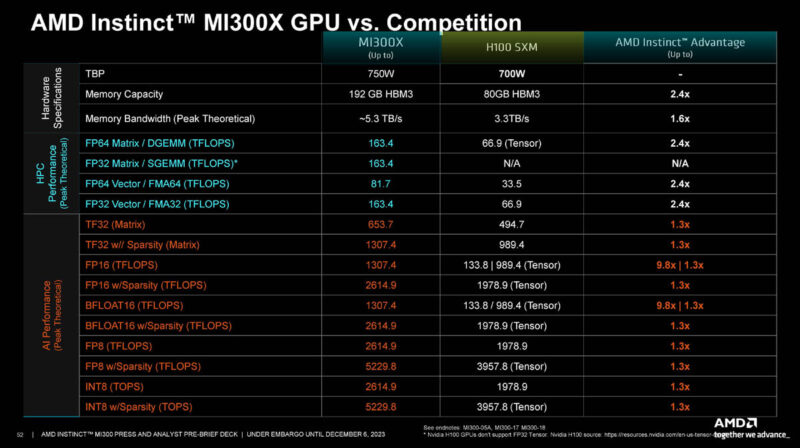

Then comes the big one: the MI300X versus the H100 SXM. On many top-end numbers, AMD is showing more than 2x the H100's performance. On the other hand, in many AI metrics the advantage AMD shows is closer to 1.3x.

Some may notice that the MI300X has a 50W higher TDP, and frankly, not many folks will care if they are getting a performance benefit. The other storyline is that the NVIDIA H200 with 141GB of HBM3e will be coming next year. Realistically, it feels like AMD built a big chip to be a supercomputing monster, then the AI market took off and AMD realized it is very competitive in AI as well.
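As a rough back-of-the-envelope check on why the extra 50W matters little, assume the commonly cited 700W maximum TDP for the H100 SXM (which puts the MI300X at 750W) and take AMD's own claimed speedups $S$ of 1.3x and 2x at face value. These are vendor figures, not measured results, so the numbers below are only illustrative:

$$
\frac{(\text{perf}/\text{W})_{\text{MI300X}}}{(\text{perf}/\text{W})_{\text{H100}}} = S \cdot \frac{700\,\text{W}}{750\,\text{W}} \approx 0.933\,S,
\qquad
S = 1.3 \Rightarrow \approx 1.21,
\qquad
S = 2 \Rightarrow \approx 1.87
$$

In other words, if AMD's claims hold, the TDP increase only trims about 7% off the raw speedup, so the performance-per-watt picture would still favor the MI300X.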

Next, we are going to look at the AMD Instinct MI300X, first at a high level and then at its architecture. We will follow that with the MI300A.

STH testing when?

Great article as always, Patrick and Team STH.

It looks like a couple of MI300A systems are available: https://www.gigabyte.com/Enterprise/GPU-Server/G383-R80-rev-AAM1 and https://www.amax.com/ai-optimized-solutions/acelemax-dgs-214a/

Couldn’t find prices, but if it’s supposed to compete with Grace Hopper, then it’ll be around US$30K.

It’s a good question which of the MI300A or MI300X is going to be more popular. As a GPU, could the MI300X be paired with Intel or even IBM Power CPUs?

I personally find the APU more interesting. Not so much because the design is new, but because real problems are often solved using a mixture of algorithms, some of which work well on GPUs and others of which are better suited to CPUs.

Do you know if the MI300A supports CXL memory?

I hope to see some uniprocessor MI300A systems hit the market. As of today, there are only quad and octo configurations.

Maybe a sort of cube form factor: PSU on the bottom, then the motherboard, and a gigantic cooler on top. An SoC compute monster.

In the spirit of all the tiny Ryzen 7940HS desktops. Just, you know, more.