Among the servers of squalor, the AMD EPYC 7232P is king. There is an almost surprisingly large market for servers that have very low CPU capabilities and often run those CPUs at a very low utilization rate. Here, saving $400 on the CPU, or 15% of a server's cost, can be worth getting less than half the performance. Intel has long had a stranglehold on this market with processors like those we touched on in A Look at 7 Years of Advancement Leading to the Xeon Bronze 3204, as well as in our recent Intel Xeon Silver 4208 and Intel Xeon Silver 4210 Benchmarks and Review pieces. In this market, the AMD EPYC 7251 was acceptable but did not receive our recommendation.

With the AMD EPYC 7002 series, we wanted to focus on the lower-end CPUs first, so we went out and purchased an AMD EPYC 7232P via an Ingram Micro reseller. AMD did not sample us this part because it is not their best-performing SKU. Still, we know this is a large segment of the market, and we wanted to see what the newest P part has to offer. Unlike the previous generation, this is an 8-core EPYC part we can get behind. In this review, we are going to show why.

Key stats for the AMD EPYC 7232P: 8 cores / 16 threads with a 3.1GHz base clock and 3.2GHz turbo boost. There is 32MB of onboard L3 cache. The CPU features a 120W TDP. These are $450 list price parts.

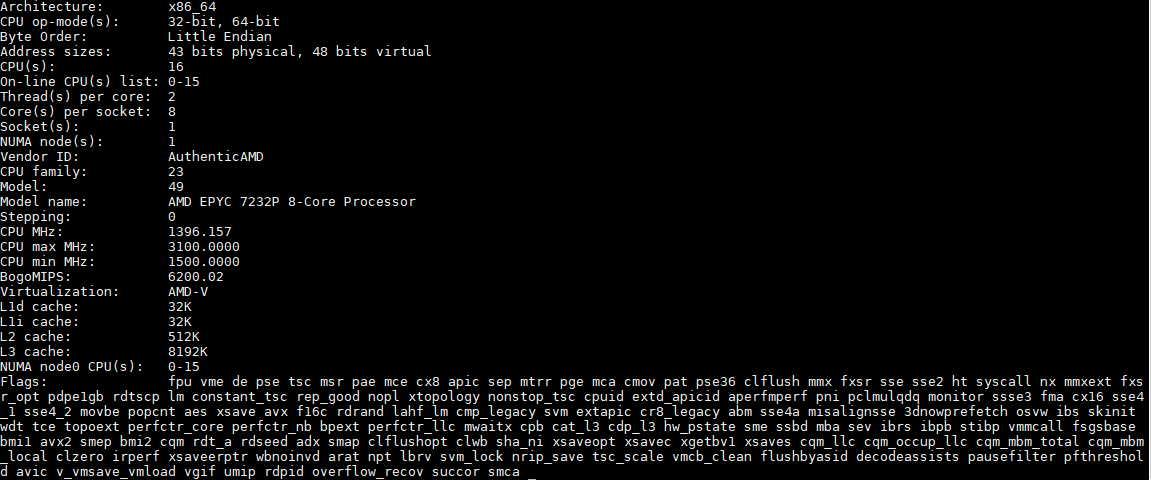

Here is what the lscpu output looks like for an AMD EPYC 7232P:

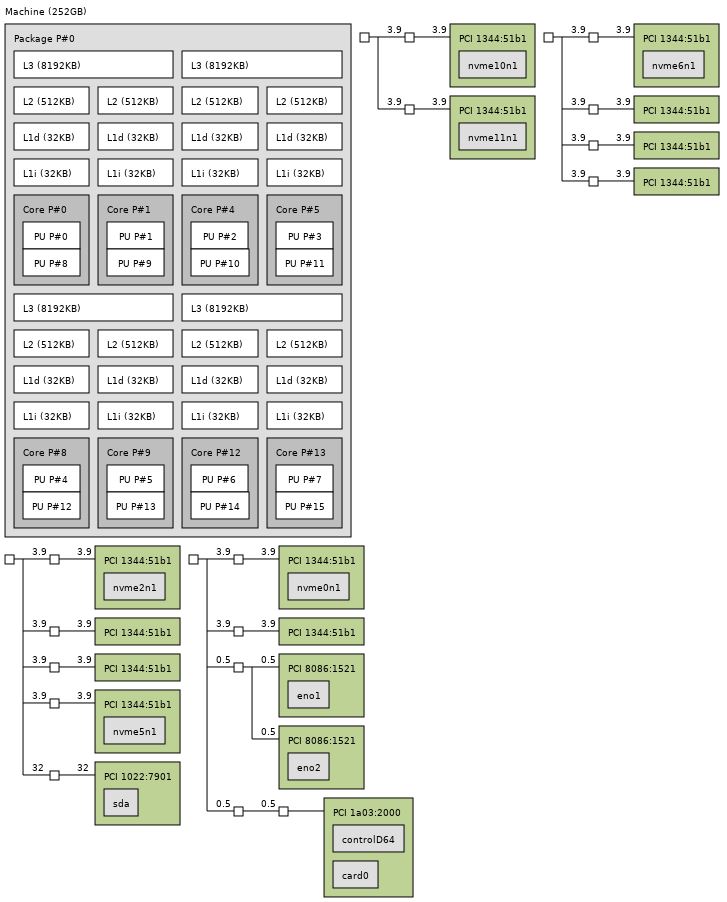

32MB of cache is relatively low for the AMD EPYC 7002 series. With 8 cores, that works out to 4MB of L3 cache per core on average. That is still significantly more than current-generation Intel Xeon CPUs have. Here is the topology of one of our test servers with the AMD EPYC 7232P:
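The per-core figure is simple division of total L3 by core count. A minimal sketch of that arithmetic follows, comparing against an 8-core Intel Xeon Silver 4208. The 11MB L3 figure for the Xeon is taken from Intel's published specifications, not from our testing, and the function name is just illustrative.

```python
def l3_per_core_mb(l3_mb: float, cores: int) -> float:
    """Average L3 cache per core in MB (total L3 divided evenly across cores)."""
    return l3_mb / cores


epyc_7232p = l3_per_core_mb(32, 8)       # 32MB L3, 8 cores (from the spec above)
xeon_silver_4208 = l3_per_core_mb(11, 8)  # 11MB L3, 8 cores (assumed from Intel's spec sheet)

print(f"EPYC 7232P:       {epyc_7232p:.3f} MB/core")
print(f"Xeon Silver 4208: {xeon_silver_4208:.3f} MB/core")
print(f"Ratio:            {epyc_7232p / xeon_silver_4208:.2f}x")
```

That gives 4MB versus 1.375MB per core, roughly 2.9x. Treat it as an average only: on Zen 2, L3 is shared within each CCX, so a core can only use its own CCX's slice directly, and how the 32MB is split across CCXs affects what a single thread actually sees.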

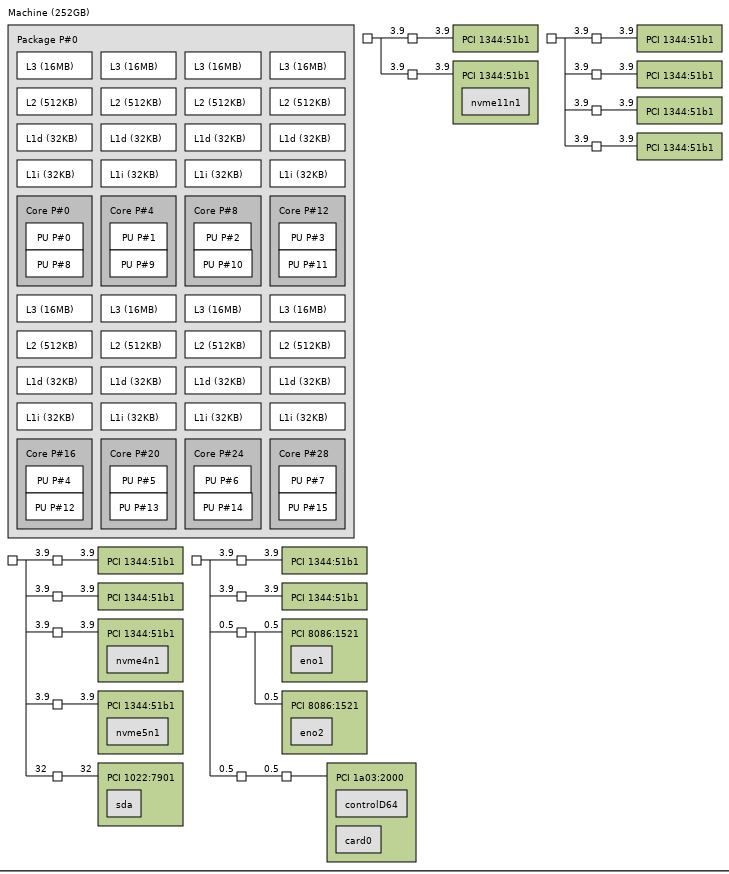

Here is that same server with the AMD EPYC 7262 CPU from the same Ingram reseller:

We are going to look at the performance impacts of this later in our article.

The bigger takeaway from these views is that the AMD EPYC 7232P, at 120W, can handle 128 lanes of PCIe Gen4. Compared to Intel Xeon Scalable CPUs, 120W may at first seem like a lot of power. On the other hand, a single AMD EPYC 7232P has more PCIe lanes and bandwidth than a dual Intel Xeon Scalable system, and two Intel Xeon Bronze CPUs cost more and consume more power.

For systems like the Supermicro AS-1014S-WTRT and the Gigabyte R272-Z32, one can use a single AMD EPYC 7232P to create a low-cost platform with top-tier expandability. AMD still does not have a sub-100W TDP part designed to take a single Xeon Bronze’s place as a sub-$400 CPU, but in a way, that is AMD’s strategy. With a higher-performing chip and plenty of expansion for storage appliances, the EPYC 7232P allows one to move from two or more chips to one. By going past 96 PCIe lanes, or more precisely past the bandwidth of 96 PCIe Gen3 lanes, the EPYC 7232P starts getting into greater than 2:1 socket consolidation ratios, which makes its $450 price tag almost irrelevant.
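The consolidation argument comes down to lane count multiplied by per-lane throughput. Here is a rough sketch of theoretical one-direction bandwidth, assuming 48 PCIe Gen3 lanes per Xeon Scalable socket and counting only the 128b/130b line encoding; packet and protocol overhead are ignored, so real-world numbers will be lower for both platforms.

```python
# Raw rate per lane (GT/s) and line-encoding efficiency for each PCIe generation.
PCIE = {
    3: (8.0, 128 / 130),
    4: (16.0, 128 / 130),
}


def pcie_gbps(gen: int, lanes: int) -> float:
    """Approximate bandwidth in GB/s, one direction, after line encoding only."""
    gt_per_s, encoding = PCIE[gen]
    # One transfer carries one bit per lane; divide by 8 to convert bits to bytes.
    return gt_per_s * encoding / 8 * lanes


epyc = pcie_gbps(4, 128)      # single EPYC 7232P: 128 PCIe Gen4 lanes
dual_xeon = pcie_gbps(3, 96)  # dual Xeon Scalable: 2 x 48 PCIe Gen3 lanes (assumed)

print(f"EPYC 7232P, 128x Gen4: {epyc:.1f} GB/s")
print(f"2x Xeon,     96x Gen3: {dual_xeon:.1f} GB/s")
print(f"Ratio: {epyc / dual_xeon:.2f}x")
```

This works out to about 252 GB/s versus about 94.5 GB/s, or roughly 2.67x. Put another way, 128 Gen4 lanes carry about as much as 256 Gen3 lanes, which is where a greater than 2:1 consolidation ratio over dual-socket Xeon systems comes from.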

There is one caveat: the AMD EPYC 7232P is a 4-channel memory-optimized SKU. That means one can still populate all eight channels and sixteen DIMMs of DDR4-3200, but one will only see half the memory bandwidth of higher-end parts. For a market that is more sensitive to cost than to performance, that trade-off makes sense.
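For a sense of scale, here is a back-of-the-envelope sketch of theoretical peak DDR4-3200 bandwidth. It models "half the memory bandwidth" simply as four channels' worth, which is a simplification of how the limit arises; measured STREAM-style results land below these theoretical peaks on any platform.

```python
def ddr4_peak_gbps(mt_per_s: int, channels: int, bus_bytes: int = 8) -> float:
    """Theoretical peak DRAM bandwidth in GB/s (decimal).

    Each DDR4 channel has a 64-bit (8-byte) data bus, so the per-channel peak
    is transfers per second multiplied by 8 bytes.
    """
    return mt_per_s * 1e6 * bus_bytes * channels / 1e9


full = ddr4_peak_gbps(3200, 8)  # all eight channels of DDR4-3200
half = ddr4_peak_gbps(3200, 4)  # simplification: "half the bandwidth" as 4 channels' worth

print(f"8-channel DDR4-3200 peak:       {full:.1f} GB/s")
print(f"4-channel-equivalent ceiling:   {half:.1f} GB/s")
```

That is 204.8 GB/s for a full eight-channel configuration versus about 102.4 GB/s for the four-channel-equivalent ceiling.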

A Word on Power Consumption

We tested these in a number of configurations. The lowest-spec configuration we used is a Supermicro AS-1014S-WTRT. This had two 1.2TB Intel DC S3710 SSDs along with 8x 32GB DDR4-3200 RAM. One could get power consumption a bit lower, since this system used an onboard Broadcom BCM57416-based 10GBase-T connection, but there were no add-in cards.

Even so, here are a few data points for the AMD EPYC 7232P in this configuration, with the sliders pushed all the way to performance mode:

- Idle Power (Performance Mode): 89W

- STH 70% Load: 132W

- STH 100% Load: 177W

- Maximum Observed Power (Performance Mode): 207W
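One way to read the list above is to compare each point against the idle baseline. A small sketch using only the measured readings from this configuration:

```python
# Wall-power readings (watts) from the Supermicro AS-1014S-WTRT configuration above.
readings = {
    "Idle (Performance Mode)": 89,
    "STH 70% Load": 132,
    "STH 100% Load": 177,
    "Maximum Observed": 207,
}

idle = readings["Idle (Performance Mode)"]
for label, watts in readings.items():
    delta = watts - idle  # additional draw over the idle baseline
    print(f"{label:<24} {watts:>4} W  (+{delta} W over idle, {watts / idle:.2f}x)")
```

Idle already accounts for roughly half of the STH 100% load figure (89W of 177W), so the step from idle to full load in this 1U box is under 90W.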

As a 1U server, this does not have the most efficient cooling. Still, we are seeing absolutely great power figures here. The impact is simple: if one can consolidate smaller nodes onto an AMD EPYC 7232P system, there are power efficiency gains to be had as well.

Next, let us look at our performance benchmarks before getting to market positioning and our final words.

I get what you’re saying. For $375 more, the 7302P is straight up a better deal.

Squalor was one of the funniest ones you’ve had since the channel cobbler line. It’s spot on. The El cheapo servers that are sold in volumes but nobody cares about.

We use two Xeon Silvers per box for ZFS. We’re going to try a 7232P and a 7302P now and see how they work. If we can use the 7232P, that’ll save us so much. Getting more PCIe lanes means more drives attached per controller node.

I like the phrase “light the platform” for CPUs like this. You don’t buy it because you need crazy amounts of CPU performance, you buy it because it’s the cheapest CPU that’ll go in the socket and enable all the other platform features you do need, like massive PCIe.

I can see this CPU being used in a lot of NVMe storage nodes.

How did you determine the 7232P has a 4*(2c + 8MB) configuration vs. 2*(4c + 16MB)? This would be the first confirmed Zen part with fractionally disabled L3, and people are bickering about it on Twitter, etc.