STH started when the industry was in the middle of the transition between the first and second generations of SAS interconnects, and a lot has changed since. In 2007-2010, SAS was the faster alternative to lower-speed SATA. Today, SAS is being positioned as the scale-out interface. With 24G SAS, we get more speed, so it seemed like time to do a quick introduction piece. 24G SAS is out, but deployment is still gaining momentum, so we wanted to discuss what the new standard is, since our readers are going to see it more often in the next few quarters.

What is 24G SAS and Why?

First, let us quickly discuss some of the changes in the new generation of SAS. For reference, some are calling this SAS4 or SAS-4, but most are simply using 24G SAS. 24G SAS seems like the more correct name, but after SAS2 and SAS3, it is natural for folks to want a SAS4.

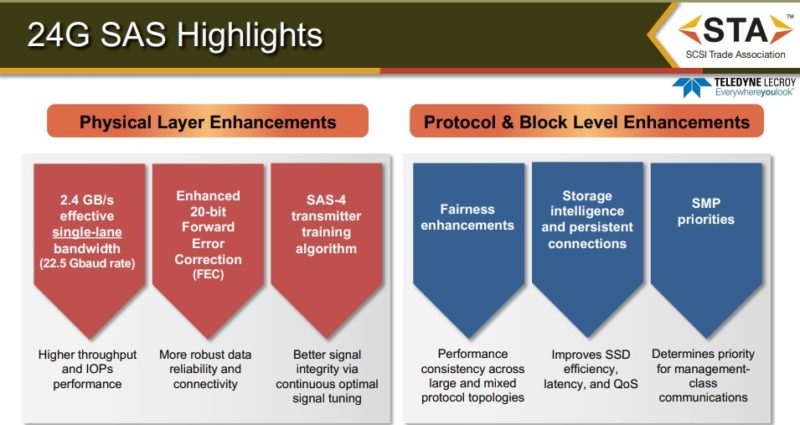

On the physical layer, we get up to 2.4GB/s per lane. Effectively one can think of this as twice the speed of the 12G SAS (SAS3) generation. We should quickly note here that SAS drives are usually dual-ported so there can be two lanes from the device to the SAS infrastructure.

Alongside the increased bandwidth, 24G SAS adds 20-bit forward error correction (FEC), 128b/150b encoding, and a new transmitter training algorithm that helps with things like noisy lines. Together, these mean the physical layer has been improved for this new generation.
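As a rough check on the 2.4GB/s figure, here is a minimal Python sketch of how line rate and encoding overhead combine into payload bandwidth. The 22.5Gbaud line rate for 24G SAS and the 12Gbaud, 8b/10b figures for 12G SAS are not stated above; they are the commonly cited values and should be treated as assumptions here, as should the helper name `payload_gb_per_s`.

```python
def payload_gb_per_s(line_rate_gbaud, data_bits, coded_bits):
    """Payload bandwidth of one lane, in GB/s, in one direction.

    line_rate_gbaud: raw signaling rate on the wire (assumed value)
    data_bits / coded_bits: encoding efficiency, e.g. 128/150
    """
    payload_gbit = line_rate_gbaud * data_bits / coded_bits
    return payload_gbit / 8  # 8 bits per byte


# Assumed figures: 12G SAS uses 8b/10b at 12 Gbaud,
# 24G SAS uses 128b/150b at 22.5 Gbaud.
sas3 = payload_gb_per_s(12.0, 8, 10)
sas4 = payload_gb_per_s(22.5, 128, 150)

print(f"12G SAS: {sas3:.1f} GB/s per lane")
print(f"24G SAS: {sas4:.1f} GB/s per lane")
print(f"Speedup: {sas4 / sas3:.1f}x")
```

Under these assumptions, the wire rate does not quite double, but the more efficient encoding (about 85% versus 80%) makes up the difference, which is why the usable per-lane bandwidth still works out to twice that of 12G SAS.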

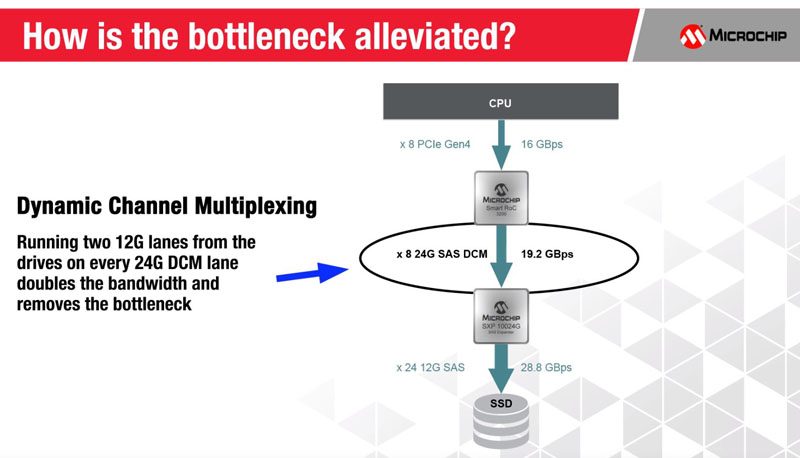

With new 24G SAS HBAs and RAID cards from companies like Broadcom and Microchip, we get the ability to link to not just faster individual devices, but also to expanders. The new 24G SAS expanders have twice the bandwidth to use for backhaul links, and that can help alleviate some performance bottlenecks, even when using 12G SAS (SAS3) devices on the newer infrastructure.
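To illustrate the backhaul point, here is a minimal sketch of an oversubscription calculation for an expander uplink. Everything about the shelf is hypothetical: the 24-drive count, the 1.0GB/s sustained per-drive figure, and the four-lane wide-port uplink are assumptions chosen for illustration, not numbers from a specific product.

```python
def oversubscription(n_drives, drive_gb_s, uplink_lanes, lane_gb_s):
    """Ratio of aggregate drive throughput to uplink bandwidth.

    A value above 1.0 means the uplink, not the drives, is the bottleneck.
    """
    return (n_drives * drive_gb_s) / (uplink_lanes * lane_gb_s)


# Hypothetical shelf: 24 SSDs on 12G SAS links, each sustaining about
# 1.0 GB/s, behind a four-lane uplink to the HBA.
on_12g = oversubscription(24, 1.0, uplink_lanes=4, lane_gb_s=1.2)
on_24g = oversubscription(24, 1.0, uplink_lanes=4, lane_gb_s=2.4)

print(f"12G uplink: {on_12g:.1f}x oversubscribed")
print(f"24G uplink: {on_24g:.1f}x oversubscribed")
```

In this made-up configuration the drives are unchanged, yet moving only the uplink to 24G halves the oversubscription ratio.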

While hard drives are progressing much more slowly than the SAS interface, SSDs are on another path. Many will change to NVMe for SSDs as the next generation of 2022 servers like Intel Sapphire Rapids and AMD EPYC Genoa come online. Still, some will want to have a single interface for storage, especially with HA topologies, and that is where the new interface provides a lot more bandwidth for SSDs.

You will likely see SlimSAS internal cables as well as MiniSAS HD cables used for 24G SAS. We have seen a number of SlimSAS designs in the past 2-3 years, and that is due to the compatibility with 24G SAS as well as NVMe. Both Broadcom and Microchip are offering Tri-mode adapters at this point to support SATA, 24G SAS, and NVMe on the same adapter. While in the 6Gbps era SATA was a more competitive interface, these days it is less than a quarter of the speed of 24G SAS when looking at bi-directional bandwidth.
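The bi-directional comparison can be sketched with some quick arithmetic. This assumes SATA III delivers roughly 0.6GB/s of payload (6Gbps with 8b/10b encoding) and is half-duplex, while 24G SAS is full-duplex at the 2.4GB/s per lane quoted earlier. The duplex behavior is the key assumption, and the variable names are illustrative only.

```python
# Per-lane payload rates in GB/s, one direction.
SATA3_GB_S = 0.6  # assumed: 6 Gb/s line rate with 8b/10b encoding
SAS4_GB_S = 2.4   # 24G SAS figure quoted in the article

# Assumption: SATA is half-duplex, so traffic in both directions shares
# one 0.6 GB/s pipe. SAS is full-duplex, so each direction gets 2.4 GB/s.
sata_bidir = SATA3_GB_S * 1
sas4_bidir = SAS4_GB_S * 2

ratio = sata_bidir / sas4_bidir
print(f"SATA III bi-directional: {sata_bidir:.1f} GB/s")
print(f"24G SAS bi-directional:  {sas4_bidir:.1f} GB/s")
print(f"SATA is {ratio:.3f} of 24G SAS")
```

Under these assumptions, SATA lands at about one eighth of a single 24G SAS lane, comfortably under the "less than a quarter" mark, and that is before counting the second port on a dual-ported SAS drive.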

These days, the breakdown is basically NVMe for mainstream and performance SSDs, 24G SAS for scale-out, and SATA for lower-end and smaller hard drive arrays.

Final Words

We have had a few readers over the past month asking about 24G SAS and how it is different from SAS-4/ SAS4. It seemed like it was time to do a quick piece clearing that up, while also talking about where it will be used going forward.

Hopefully, this helps those readers and others with the same questions. We have been doing a lot more coverage on the NVMe side, and will be looking a lot more at NVMe-oF in the next quarter, but we wanted to at least cover this before we get too far down that path.

I am eager to read/follow more news along these lines. Thanks!

Even though I work in data-centers I’ve always just missed working on the exotic hardware. The day I started my most recent position, the company had decommissioned FOUR 48-drive fiber-channel (i-SCSI) Gluster storage arrays! So as I say, I keep missing the cool stuff.

Every server that I work on these days uses SATA drives instead of SAS (always configured as JBOD, using FreeBSD & ZFS).

When/Where can SATA drives be used in place of SAS? I know that (some of) the connectors are backwards compatible, but not all of them.

I’m wondering about the convergence of transport methods. At some point nearly everything becomes serialised and lane-split enough to simply become PCIe-transportable.

Once low-cost high density PCIe interconnects and the chipsets to go with them become available you essentially end up with “Thunderbolt for Datacenters”, which is somewhat similar to mainframe technologies but commoditised.

I really don’t see the market for SAS4 except for SSDs that need redundant interfaces. NVMe is the industry standard, and I don’t see spinners scratching SAS3 limits anytime soon (except for these crazy 10k RPM drives, which don’t have a market anymore afaik).