Step 5: Scaling the Dell Pro Max with GB10

That brought my thinking around to applying this to other companies. Frankly, there are many companies that do not have the capital or facilities to buy and house $200K+ 8-GPU servers, or even one of them. On the other hand, two of these are only around $8100.

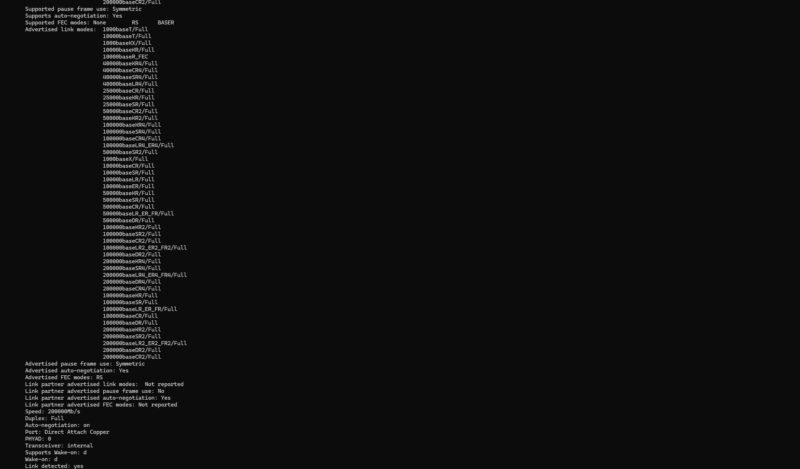

Recently we did an in-depth piece using these, showing why The NVIDIA GB10 ConnectX-7 200GbE Networking is Really Different. The networking is actually similar to what we saw when we did bi-directional 800Gbps traffic through the NVIDIA ConnectX-8 NIC in our review. NVIDIA pairs its (fast) Arm processor and Blackwell GPU with a lot of memory and high-speed networking so that more than one of these can be used at a time. Doing that ConnectX-8 piece really helped give us the perspective that these are probably the best low-cost development tools for NVIDIA’s Arm, Blackwell, and RDMA networking stack. The RDMA networking is important because it provides a path to scale to larger models.

When I first saw the MikroTik CRS812-8DS-2DQ-2DDQ-RM and upcoming MikroTik CRS804 DDQ in Latvia last summer, I told John Tully, MikroTik’s co-founder, that these were perfect for the GB10 clusters. Indeed, if you are buying a few GB10 units, these switches have become the de facto standard for low-power and lower-cost switching.

In the video, we showed you how to connect these not just to those smaller switches, but also to a larger Dell Z9332F-ON 32-port 400GbE switch. We also tested these with the NVIDIA DGX Spark. These days we would strongly suggest getting a Dell Pro Max with GB10 over the DGX Spark because you get the ability to customize the storage and you can get Dell’s warranty and support services. That includes options for ProSupport Plus for drops and spills if you are planning to travel with these setups.

This is a somewhat strange way to think about it, but the thought I had while doing this was: what if we treated the Dell Pro Max with GB10 as an “employee”? Using that mental model, we could then have several of these as a team. That team can do individual tasks (hosting different models) or be used together via RDMA networking to accomplish a larger task, such as hosting larger models.

Final Words

There is certainly a lot of benefit to getting higher-end systems instead of multiple Dell Pro Max with GB10’s. At the same time, these allow you to scale to your business needs if you think of each one as 2 hours per week of time savings at $40 per hour for a year. That works out to 2 × $40 × 52 = $4,160, which is roughly the price of a single unit when two are around $8100. Many business owners, managers, and so forth may want to have a local AI solution, but coming up with a business case to host a super-fast 10kW server that costs $200,000 is difficult. On the other hand, everyone does reporting. Reporting is done on data that most organizations do not want to share with many cloud services, and there are likely countless organizations out there that have someone doing a 2 hour per week, $40 per hour reporting role.
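The business case above can be sketched as a quick payback calculation. This is only a rough illustration: the function names are made up for this example, the per-unit price is simply half of the roughly $8100 two-unit figure quoted earlier, and it assumes the reporting work happens all 52 weeks of the year with no energy or setup costs included.

```python
# Rough payback sketch for one Dell Pro Max with GB10.
# Assumptions (not from a price list): unit price is half of the
# ~$8100 two-unit figure, and the task recurs 52 weeks per year.

HOURS_PER_WEEK = 2       # time saved per week, from the article
HOURLY_RATE = 40.0       # dollars per hour, from the article
WEEKS_PER_YEAR = 52      # assumption: no weeks off
PAIR_PRICE = 8100.0      # approximate price for two units


def annual_labor_value(hours_per_week, hourly_rate, weeks=WEEKS_PER_YEAR):
    """Dollar value of the time saved over one year."""
    return hours_per_week * hourly_rate * weeks


def payback_weeks(hardware_cost, hours_per_week, hourly_rate):
    """Weeks of saved labor needed to cover the hardware cost."""
    return hardware_cost / (hours_per_week * hourly_rate)


unit_price = PAIR_PRICE / 2
yearly = annual_labor_value(HOURS_PER_WEEK, HOURLY_RATE)
weeks = payback_weeks(unit_price, HOURS_PER_WEEK, HOURLY_RATE)

print(f"Assumed unit price:  ${unit_price:,.0f}")
print(f"Yearly labor value:  ${yearly:,.0f}")
print(f"Payback period:      {weeks:.1f} weeks")
```

Changing `HOURS_PER_WEEK` or `HOURLY_RATE` shows how quickly the math shifts for a different reporting role.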

I know that many folks will read this and have ideas of what can be changed. Some may think “I could do this better with an NVIDIA RTX Pro 6000 Workstation” or “I would just use a cloud service to generate tokens faster.” All of these are true statements, but that is perhaps the point of this. It was a fairly easy workflow to set up, going from a flow chart on a notepad to a functioning AI-powered reporting tool a few hours later. Using this is something that frees up my time without having to hire for an additional part-time role. Instead, we were able to get a well-known individual as STH’s new managing editor.

Our other key takeaway was a big one. What enabled this was not running a smaller model fast. It was running a larger model, with higher accuracy, even if it was much slower. We are already budgeting 2-4 hours per week for this, so I am not expecting, nor do we need, answers within five seconds. What I need is to be able to trust the result of the workflow. Ideally, I would have an extremely fast result that I could trust, but in a sub-$10,000 setup these days, that is hard to do using a different tool.

I’ve got 4 FTEs doing reporting full-time. For those who don’t get it, they’re using the LLM to convert a very unstructured request into a structured request format. There’s a lot of words around that, but that’s what employs people.

Eye-opening to say the least. We’re going to order a few this quarter just to try this.

What I like is that since email is asynchronous, interactive performance doesn’t matter, and one can run a capable large language model with everything to gain on cheaper hardware.

You haven’t included energy cost in your business case. How does that play out?

H Lowe – Yes

Eric O – Realistically, when these come in, if we get them out that week, that is usually OK. Sometimes folks need them that day or the next. You are right, the SLA is not tight, which makes this easier.

Martin – Power in Arizona is relatively cheap, which is why there are so many data centers and fabs here. These are sub-$5/month to run. On the other hand, what would 0.05-0.10 of a person’s energy cost be to offset that?

@Martin it should be noted in the analysis that energy costs do need to be added but figures are going to be variable. Different regions can have vastly different power rates.
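To make the regional variability concrete, here is a minimal sketch of a monthly energy cost estimate. The average power draw and the electricity rates below are placeholder values chosen for illustration, not measurements from the article, so substitute your own numbers.

```python
# Monthly energy cost sketch. All inputs are hypothetical placeholders:
# the average draw is NOT a measured figure for the GB10 systems, and
# the regional rates are illustrative only.

HOURS_PER_MONTH = 730  # 8760 hours per year / 12 months


def monthly_energy_cost(avg_watts, usd_per_kwh, hours=HOURS_PER_MONTH):
    """Cost in dollars of running a device at avg_watts for one month."""
    kwh = avg_watts / 1000 * hours
    return kwh * usd_per_kwh


ASSUMED_AVG_WATTS = 50  # hypothetical average draw, mostly idle

# Hypothetical electricity rates in dollars per kWh.
example_rates = {
    "lower-cost region": 0.10,
    "mid-cost region": 0.18,
    "higher-cost region": 0.30,
}

for region, rate in example_rates.items():
    cost = monthly_energy_cost(ASSUMED_AVG_WATTS, rate)
    print(f"{region}: ${cost:.2f} per month")
```

Multiplying the result by 12 gives a yearly figure that can be added to the hardware cost in a business case.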

An analysis comparing this to larger rack-based systems run locally at the same cost of energy would be interesting. Rack solutions are supposed to be more efficient in terms of performance/watt but have much, much higher power consumption as well. In other words, those larger systems need to be loaded for those performance/watt improvements to translate into a return on investment. For projects like this, whose load is cyclical and where job completion is not time sensitive, ROI should arrive much sooner due to the lower initial investment. The nice thing about the math in the small cluster vs. larger rack comparison is that the per-unit power cost gets factored out of both sides of the equation, as the result is a load factor difference.
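The load factor point can be illustrated with a small sketch. Every power and throughput figure here is a hypothetical placeholder (only the 10kW active draw echoes the server size mentioned in the article), and the simple idle/active model is an assumption for illustration. It shows that the electricity rate cancels out of the rack-to-small-system comparison, leaving only how heavily each system is loaded.

```python
# Energy per job for a lightly loaded system, using a simple
# idle/active power model. All wattage and throughput numbers are
# hypothetical placeholders, not benchmarks.


def kwh_per_job(idle_watts, active_watts, jobs_per_hour, jobs, period_hours):
    """Energy per job when `jobs` are spread over `period_hours`."""
    active_hours = jobs / jobs_per_hour
    if active_hours > period_hours:
        raise ValueError("system cannot finish the jobs within the period")
    idle_hours = period_hours - active_hours
    watt_hours = active_watts * active_hours + idle_watts * idle_hours
    return watt_hours / 1000 / jobs


def cost_per_job(kwh, usd_per_kwh):
    """Energy cost in dollars for one job."""
    return kwh * usd_per_kwh


JOBS_PER_WEEK = 20     # hypothetical ad hoc request volume
HOURS_PER_WEEK = 168

# Hypothetical small system: low idle draw, slow per job.
small_kwh = kwh_per_job(30, 150, 2, JOBS_PER_WEEK, HOURS_PER_WEEK)
# Hypothetical rack server: 10 kW when active, fast, but high idle draw.
rack_kwh = kwh_per_job(2000, 10000, 400, JOBS_PER_WEEK, HOURS_PER_WEEK)

for rate in (0.08, 0.30):
    ratio = cost_per_job(rack_kwh, rate) / cost_per_job(small_kwh, rate)
    print(f"${rate:.2f}/kWh: rack uses {ratio:.1f}x the energy cost per job")
```

The ratio printed is the same at both rates, because the price per kWh multiplies both sides. With these placeholder numbers the rack would only win on energy per job if it were kept busy most of the time.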

The difference when comparing to cloud offerings or hosted data centers is that power is often included in their figures. It may not be an explicit line item, but it does explain some of the region-to-region pricing differences cloud providers can have for the same compute. In addition, the rate that hyperscalers pay is often less than the commercial or residential rates for power in an area. Electric companies like consistent load and consistent, predictable income from large data centers. The cloud solutions can be competitive on a cost basis because they can run multiple customer jobs across the same hardware to generate the loads necessary to hit ROI in a similar time frame, even including the differences in power cost, though that is more abstracted. That performance/watt difference of the larger rack systems is where the profit is generated for cloud providers, as they can operate at a scale and at loads that are not feasible in-house.

You really don’t need AI to do this.

Easier to set up some basic workflow automation around your content analytics/metrics and have automated reporting set up for key stakeholders/clients that blasts out reports every week/month/quarter.

Make it part of the package of what they get when they sponsor content/run ads, etc.

MR – That would be great, except that is not for the standard reporting flows we have. This is to address the ad hoc requests that come in and need to be serviced.

Your proposal is to use a different process than what folks are asking for/need. You are right, the challenge AI is solving is the non-standardized reporting.

It is nice to see a real-world use case for AI at this level. The growing concern with AI is that there is a lot of spend on the build-out and a lot of folks playing with “slop”. There are few practical use cases, especially outside of the large enterprises.

As simple as the use case in this article may seem, it is one of the few times that I have seen N8N being used in a way that benefits small businesses.

It also highlights the nuance of how AI hw can be used in this type of business. It is not training at 100% utilization. You are able to create the app with relatively little effort, test and then set and forget.

Now I need to move beyond creating an animated image of Jensen Huang with ComfyUI!

It’s a thousand dollars more than the HP and the exact same configuration.

@Sridhar: Wasn’t the Dell released earlier?

You were not presenting a fictional “GB20” next step on purpose?

If a future GB20 ever shows up, it should come with 128–512 GB of RAM and around 800 GB/s of memory bandwidth, not just a nicer spec sheet.

GB10 looks great, but on some key specs it was already obsolete on paper the day it launched. Prioritizing 2×200 Gb/s networking over seriously juiced‑up memory bandwidth is a great way to build a “workstation” that looks amazing in a rack but makes a lot less sense on a desk at home, where extra RAM and bandwidth would be far more useful. And given that Apple has been shipping roughly 800 GB/s on a Mac Studio with an M1 Ultra since 2022, it’s hard to pretend that 273–300 GB/s is still “high‑end” in 2026.

GB300 DGX Station is the next step, but that will be $100K+ now.

Remember, you can also scale these out easily. We have an 8x unit cluster already.