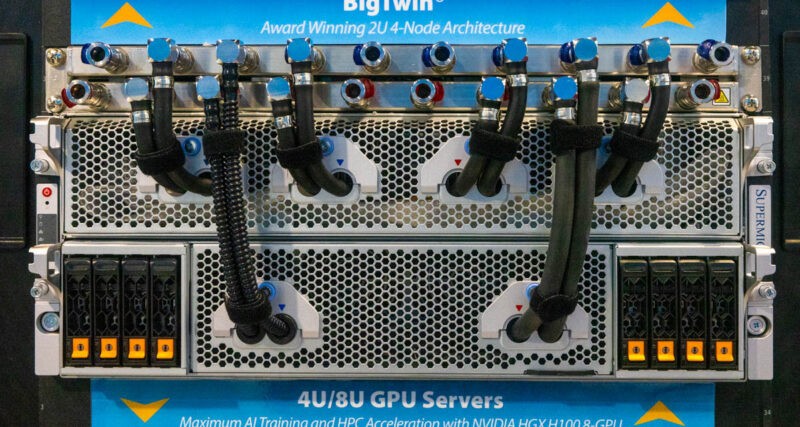

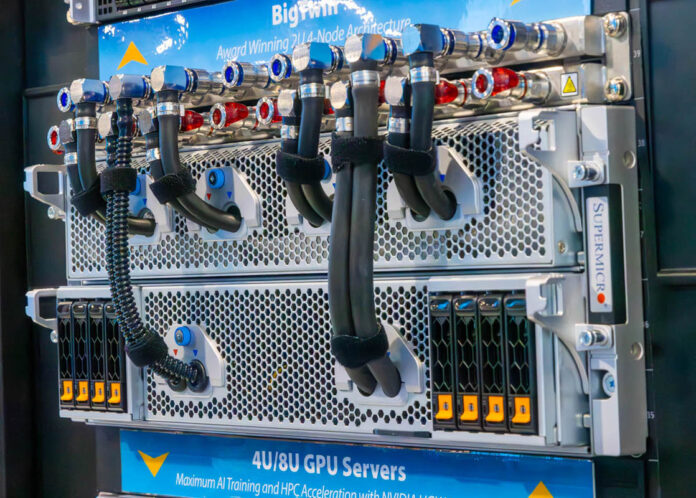

At SC23, we spotted the new Supermicro 4U Universal GPU system. This is a liquid-cooled system designed for the densest deployments. Since we have been doing a lot with liquid cooling, we figured we would show this off as the team is going through photos from the show.

Supermicro 4U Universal GPU System for Liquid Cooled NVIDIA HGX H100 and HGX H200

At SC23, we took a look at the new Supermicro 4U Universal GPU system. Supermicro has a number of 8U models that are optimized for either air or liquid cooling, but this design is built specifically to take advantage of liquid cooling to dramatically increase density.

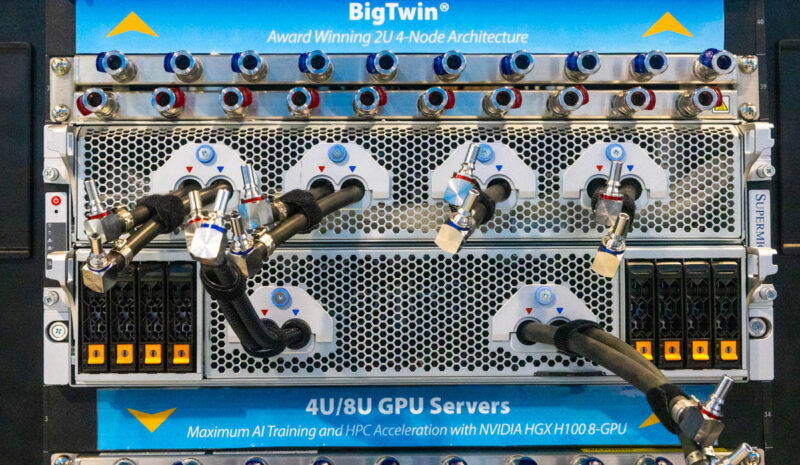

The liquid cooling manifold is a horizontal solution, as we showed previously on STH. That allows the system’s cooling nozzles to be quickly disconnected from the manifold.

In this system, the top tray is the NVIDIA HGX H100 8-GPU with NVSwitch tray. In the future, Supermicro says it will support the HGX H200 GPUs.

We could not move the rack, but behind the unit, there are four power supplies (two installed) and a massive set of full-height and low-profile I/O expansion card slots. We also get the BMC’s out-of-band management port, two USB 3 ports, and a VGA port.

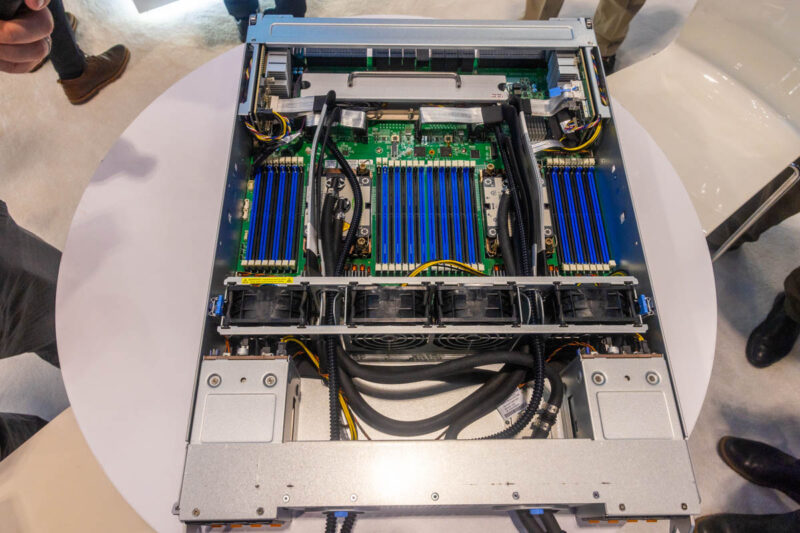

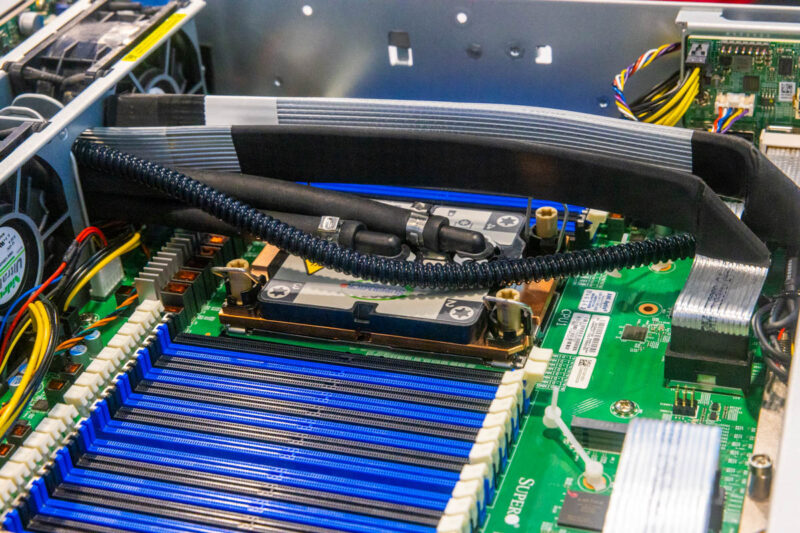

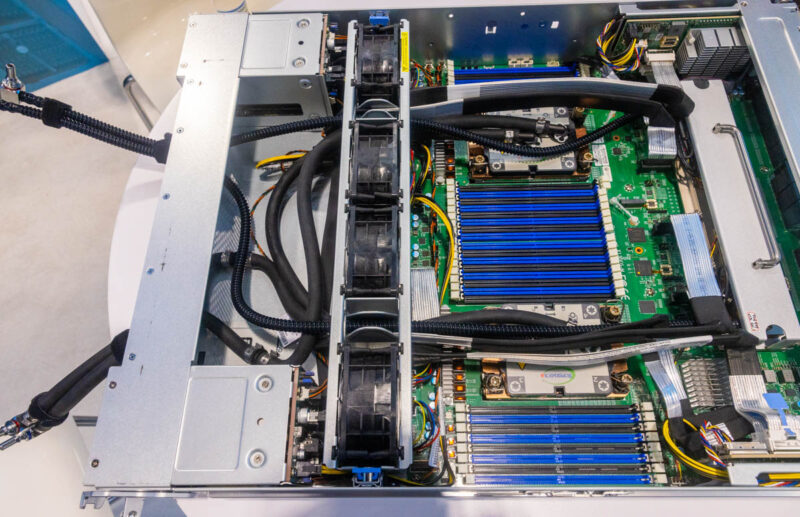

Pulling the CPU tray out, we see a dual Intel Xeon server that supports either Sapphire Rapids (4th Gen Intel Xeon Scalable) or the upcoming 5th Gen Intel Xeon Scalable (Emerald Rapids) CPUs. Each CPU has a full set of 16 DDR5 DIMM slots for 32 total.

The CPUs in the system are liquid-cooled since Intel’s socket is designed for up to 385W TDP and typically higher-end CPUs are used in these GPU servers.

Something our readers will notice is that there are fans in this chassis. The fans allow Supermicro to cool the DIMMs, M.2 SSDs, 2.5″ SSDs, and the rear I/O cards without needing cold plates on all of those devices.

One can see the two cages on the front of the system here, which hold a total of 8x 2.5″ NVMe SSDs.

Final Words

Overall, this new system follows Supermicro’s design philosophy for AI servers, except that it is primarily liquid-cooled. 4U GPU servers present a cooling challenge since each can use ~10kW of power. Ten of these in a 45U rack would be 100kW. Using liquid cooling usually removes 10-15% of the power requirement, but that is still 85-90kW in the rack before adding switches.
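For those who want to play with the rack math, here is a minimal sketch of the estimate above. The ~10kW per server, ten servers per rack, and 10-15% liquid cooling savings are the rough figures from this article, not measured values, and the function name `rack_power_kw` is just something we made up for illustration.

```python
def rack_power_kw(servers: int, kw_per_server: float, savings_fraction: float) -> float:
    """Estimate total rack power in kW after a fractional power savings."""
    if not 0.0 <= savings_fraction < 1.0:
        raise ValueError("savings_fraction must be in [0, 1)")
    return servers * kw_per_server * (1.0 - savings_fraction)


# Rough figures from the article (assumptions, not measurements).
SERVERS_PER_RACK = 10   # ten 4U systems use 40U of a 45U rack
KW_PER_SERVER = 10.0    # ~10kW per 4U GPU server

baseline_kw = rack_power_kw(SERVERS_PER_RACK, KW_PER_SERVER, 0.0)
low_kw = rack_power_kw(SERVERS_PER_RACK, KW_PER_SERVER, 0.15)   # 15% savings
high_kw = rack_power_kw(SERVERS_PER_RACK, KW_PER_SERVER, 0.10)  # 10% savings
rack_units_left = 45 - SERVERS_PER_RACK * 4  # space left for switches, etc.

print(f"Baseline: {baseline_kw:.0f} kW")
print(f"With liquid cooling: {low_kw:.0f}-{high_kw:.0f} kW")
print(f"Rack units remaining: {rack_units_left}U")
```

Under these assumptions, the rack lands at roughly 85-90kW with 5U left over, and switches would add to that total.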

Supermicro has several large-scale GPU customers that can use liquid cooling, are building out more power capacity, and need more density. That is the type of customer this system is built for.

If you want to learn more about Supermicro liquid cooling, we previously looked at the 8U Liquid Cooled Supermicro SYS-821GE-TNHR 8x NVIDIA H100 AI server and Supermicro’s custom liquid cooling rack.

Impressive! But who comes up with the names? A universal server for the specific case of two models of liquid cooled GPU.

Where does support for 100kW racks exist? In my world it typically tops out at 12kW per rack. Usually it’s a cooling limit but definitely power as well.