At GTC China 2019, NVIDIA made a number of announcements. The first is the launch of TensorRT 7. We were also treated to a set of new automotive-related announcements from the company. What is clear is that NVIDIA is putting significant effort into extending its dominance in the AI training market into inferencing.

NVIDIA TensorRT 7 Launch

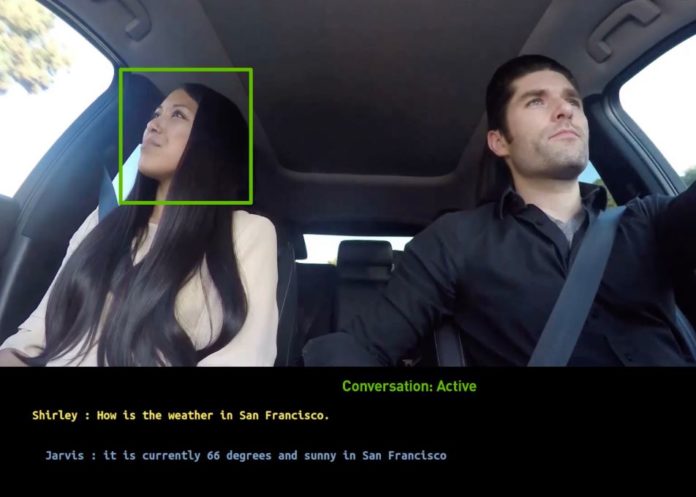

Perhaps the most immediate launch was that of TensorRT 7. NVIDIA TensorRT is the company’s solution for running inferencing across a range of devices out to the edge. The new version has features to accelerate the real-time conversational AI pipeline, supporting speech recognition, natural language understanding, and text-to-speech models.

Here are the highlights of TensorRT 7:

- New compiler to accelerate recurrent neural networks commonly used in speech and anomaly detection

- Support for over 20 new ONNX operations to accelerate key speech models such as BERT, Tacotron 2 and WaveRNN

- Expanded support for Dynamic Shapes to enable key conversational AI models (Source: NVIDIA)

TensorRT 7 and the associated plugins, parsers, and new samples for BERT, Mask-RCNN, Faster-RCNN, NCF, and OpenNMT are already rolling out on NVIDIA's developer platforms. One can see from the NVIDIA-supplied cover image for this article that the company is positioning this for use even in autonomous vehicles and their conversational assistants.

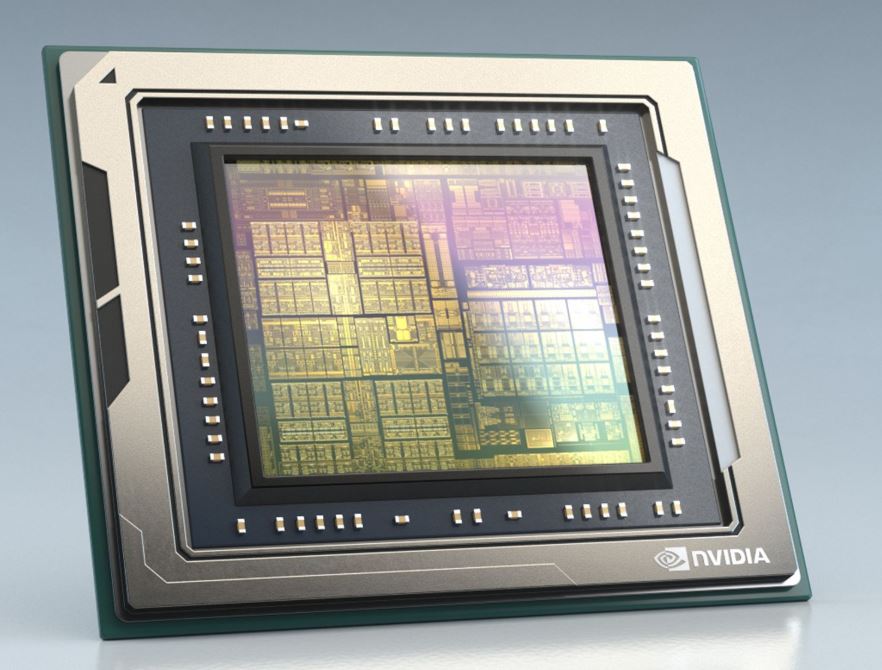

NVIDIA Drive AGX Orin

NVIDIA made a number of announcements related to automotive at GTC China 2019. These included the availability of pre-trained models for tasks such as traffic light and signage detection on NGC. NVIDIA is also pushing a concept of federated learning that allows teams to share models across IP regime boundaries without having to share data and specific IP. Perhaps the biggest announcement was of the NVIDIA Drive AGX Orin.
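To make the federated learning idea above more concrete, here is a minimal sketch of federated averaging, one common way to combine models without pooling data. This is not NVIDIA's implementation; the function name `federated_average` and the flat list-of-floats weight format are assumptions chosen for illustration. Each participant trains locally and shares only its model weights and sample count, never the underlying data.

```python
from typing import List, Sequence


def federated_average(
    client_weights: Sequence[Sequence[float]],
    client_sizes: Sequence[int],
) -> List[float]:
    """Combine per-client model weights into one global model.

    Hypothetical helper for illustration only: each client's weights are
    averaged in proportion to how many samples that client trained on.
    Only weights and sample counts are exchanged, not training data.
    """
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need one sample count per client")
    total = sum(client_sizes)
    if total <= 0:
        raise ValueError("total sample count must be positive")
    dim = len(client_weights[0])
    if any(len(w) != dim for w in client_weights):
        raise ValueError("all clients must share the same model shape")

    averaged = [0.0] * dim
    for weights, size in zip(client_weights, client_sizes):
        share = size / total  # this client's contribution to the average
        for i, w in enumerate(weights):
            averaged[i] += share * w
    return averaged


# Two sites with different amounts of local data contribute to one model.
global_model = federated_average([[1.0, 2.0], [3.0, 4.0]], [1, 3])
print(global_model)
```

In a real deployment the weights would be full neural network tensors and the exchange would be repeated over many training rounds, but the principle of sharing parameters rather than data is the same.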

While the NVIDIA Drive Xavier is currently shipping for automotive, NVIDIA is showing a chip that is set to launch in 2022. The new chip will be capable of over 200 trillion INT8 operations per second (200 TOPS), up from 30 TOPS in today’s Xavier, or more than six times the throughput.

The NVIDIA Orin platform is said to house over 17 billion transistors. That encompasses functionality for all of the hardware video encode/decode, networking, and other specialty features as well as the main CPU and GPU blocks. NVIDIA is ditching custom-designed cores and settling on next-gen Arm Hercules cores. On the GPU side, this is being billed as a next-gen architecture, although NVIDIA is not specifying which one. This may be 2-3 architectures ahead given the timing and how NVIDIA decides to introduce new GPUs for different markets. It will have features like tensor cores.

Between the extremely early pre-announcement of new hardware and the focus on the AI development pipeline, NVIDIA is pushing its expertise in automotive AI. The real eyebrow-raiser is that NVIDIA is pre-announcing a product that is 2-3 years from the market. It almost seems as though it is trying to counter products that came out after Xavier and show that anyone playing in the space is going to need a much more powerful solution in the near future to remain competitive.

I am assuming Tesla’s custom chip is exactly what is meant by “countering products that came out after Xavier”?