When we last covered the Kioxia RM5, Kioxia was still called Toshiba Memory. The line is designed to be priced at SATA levels in the configurators of major OEMs such as HPE, Dell EMC, and Lenovo while offering a SAS3 interface. Kioxia believes there is still a market that wants SAS3 RAID controllers for in-server storage. Given that server architecture, the Kioxia RM5 is designed to beat SATA SSDs on features and performance at the same price. If you want to know how to pronounce Kioxia, we have a How to Pronounce Kioxia guide.

Kioxia RM5 Overview

We are pulling some of the overview materials from our previous pieces, such as Toshiba RM5 Answers the Call of Replacing SATA with SAS3. Because of that, those materials still carry the Toshiba name.

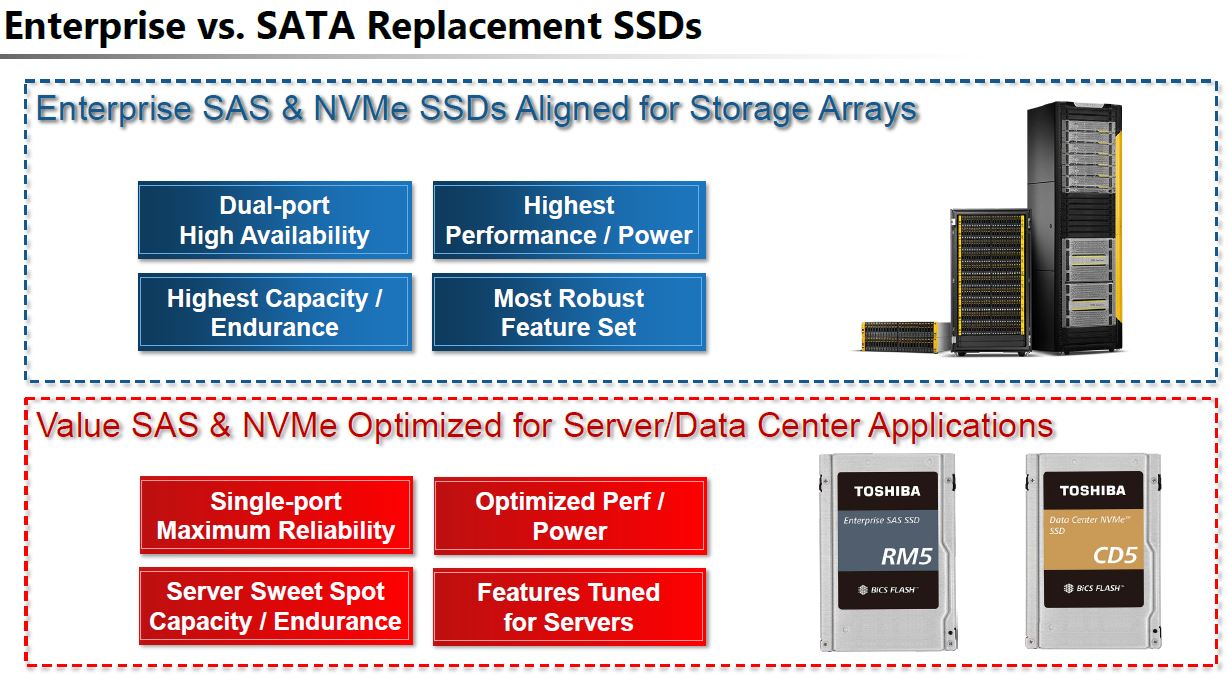

Kioxia’s basic premise is that SATA is dying in servers. Storage is moving to SAS3 because of its robust installed base and higher-end features such as dual-port drives. It is also moving to NVMe because of the raw performance available.

Although the company still sells SATA SSDs, it is pitching “Value SAS” as an alternative. SATA is a legacy 6Gbps interface, while SAS3 is a 12Gbps interface. What is more, SAS has a roadmap to SAS4. Thanks to the higher-performance interface, the Kioxia RM5 is not bound by some of the performance constraints that SATA SSDs contend with.

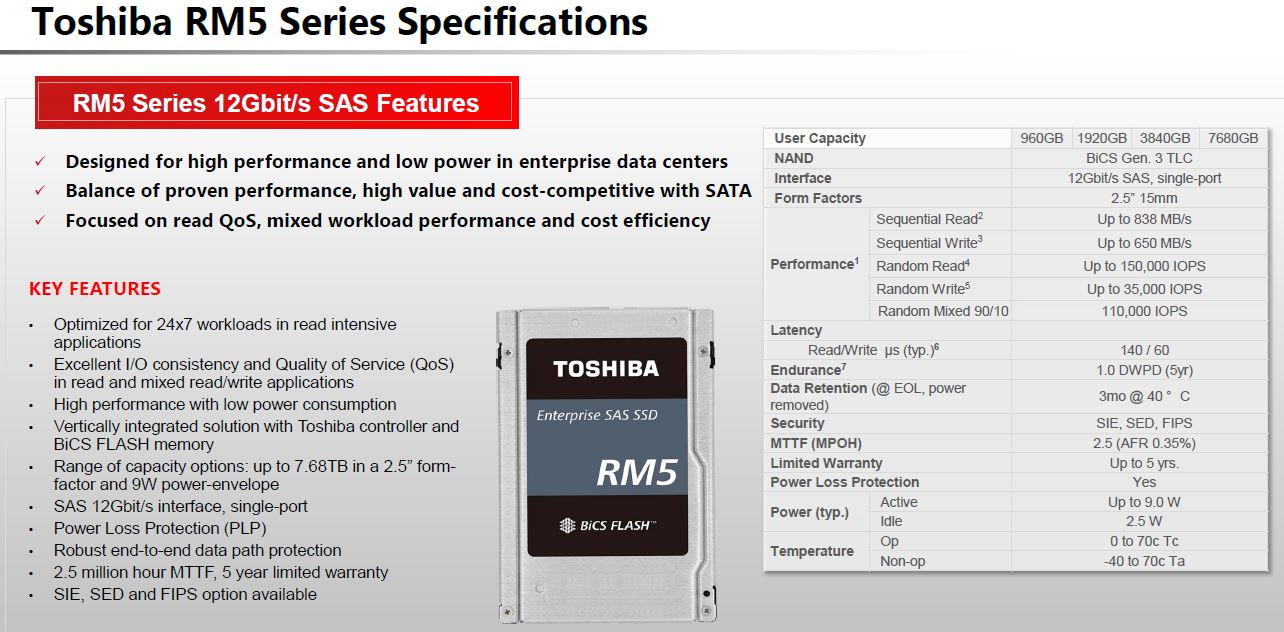

As an enterprise SSD, the Kioxia RM5 is designed to have features such as Power Loss Protection and a 2.5M hour MTTF. Those are features that one would expect from enterprise SAS drives.

There is one SAS3 feature that one might expect but Kioxia omitted: dual-port support. Dual-port SAS3 drives are used in large storage arrays with Active-Active controllers. The two ports on each drive allow the SSD to sit on two independent datapaths, one to each controller. If one controller fails, there is still a datapath to access the SSD.
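The failover argument can be sketched in a few lines of Python. This is a minimal, hypothetical model (the `Drive` class, the `reachable` function, and the controller names are illustrative, not taken from any vendor tool): a drive stays reachable as long as at least one of its ports is cabled to a controller that has not failed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Drive:
    """Hypothetical drive model: one controller name per cabled port."""
    name: str
    ports: tuple[str, ...]


def reachable(drive: Drive, failed: set[str]) -> bool:
    """A drive is reachable if any of its ports leads to a healthy controller."""
    return any(ctrl not in failed for ctrl in drive.ports)


# Active-Active array: each port goes to a different controller.
dual = Drive("dual-port SAS3 SSD", ("controller_a", "controller_b"))
# Typical 1U server: a single RAID controller feeds the front bays.
single = Drive("single-port SSD", ("controller_a",))

failed = {"controller_a"}
print(reachable(dual, failed), reachable(single, failed))  # True False
```

The same model shows why a second port buys nothing when the drive hangs off a single RAID controller: with only one controller in the picture, losing it removes every path to the drive.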

The Kioxia RM5 is targeted somewhere slightly different. Instead of large storage arrays, the RM5’s market is inside compute nodes. Take typical 1U servers such as the Dell EMC PowerEdge R640 or HPE ProLiant DL325 Gen10; both have front hot-swap bays. Both configurations we reviewed had the vendor’s SAS3 RAID controller (PERC and SmartArray, respectively), and those controllers were the only links to the front panel SSDs. Essentially, these servers had a single SAS3 datapath to each device.

The Kioxia RM5 is designed for exactly that use case. If only a single controller connects to a drive, the drive can shed dual-port support for lower power and cost, driving better TCO. By using a SAS3 interface instead of SATA, which is also single-port, one doubles the effective link bandwidth, which translates to better performance.
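To put numbers on that doubling, here is a back-of-the-envelope Python sketch. It assumes both SATA III and SAS3 use 8b/10b line encoding (8 data bits for every 10 bits on the wire) and ignores framing and protocol overhead, so the results are per-link ceilings rather than drive throughput. `payload_mb_per_s` is a hypothetical helper.

```python
def payload_mb_per_s(line_rate_gbps: float, data_bits: int = 8, line_bits: int = 10) -> float:
    """Convert a raw line rate (Gbit/s) into a payload ceiling in MB/s (10^6 bytes).

    Assumes 8b/10b encoding by default; framing and protocol overhead are ignored.
    """
    payload_bits_per_s = line_rate_gbps * 1e9 * data_bits / line_bits
    return payload_bits_per_s / 8 / 1e6


SATA3_GBPS = 6.0   # SATA III line rate
SAS3_GBPS = 12.0   # SAS3 line rate, single port as on the RM5

sata = payload_mb_per_s(SATA3_GBPS)
sas3 = payload_mb_per_s(SAS3_GBPS)
print(f"SATA III: {sata:.0f} MB/s, SAS3: {sas3:.0f} MB/s ({sas3 / sata:.1f}x)")
# → SATA III: 600 MB/s, SAS3: 1200 MB/s (2.0x)
```

Because both links use the same encoding, the 2x ratio of line rates carries straight through to the payload ceilings.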

Kioxia RM5 Performance

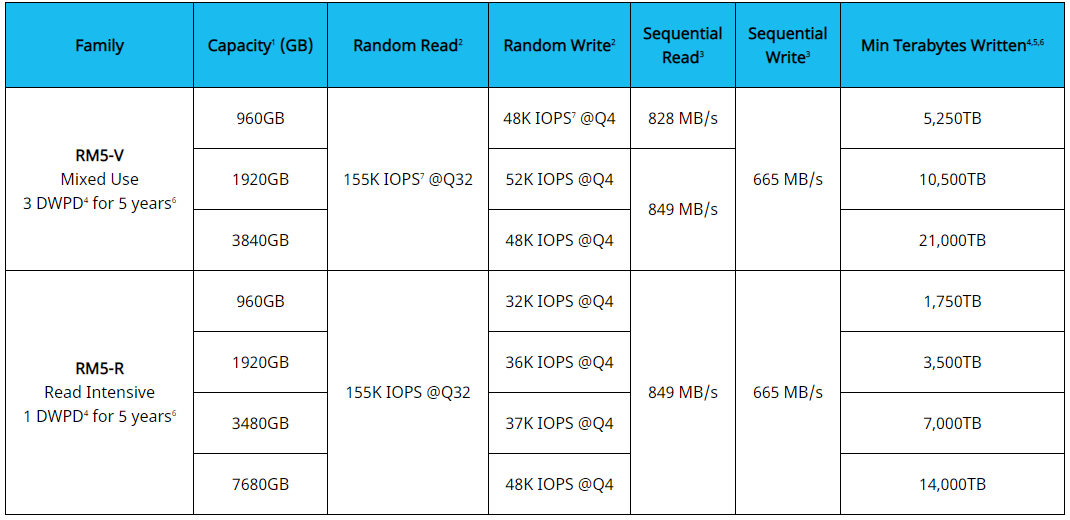

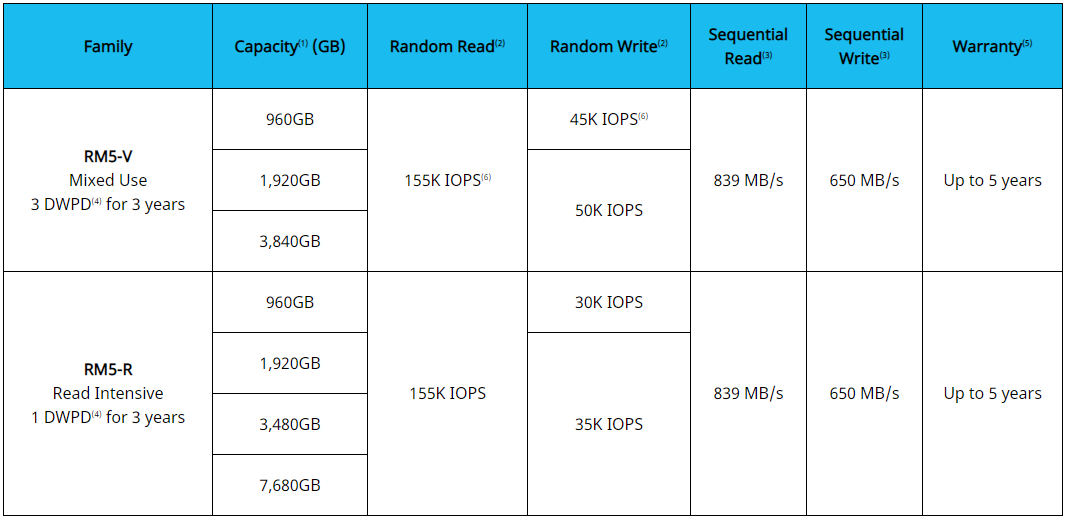

In 2020, if you want high-performance in-server storage, you should buy NVMe. That is the performance option. Still, we wanted to give some sense of what we were seeing from the RM5 in real-world applications. Before getting there, we wanted to discuss some of the more standard numbers. We checked the specs for the HPE version on Kioxia’s website, and our results were close to, but not exactly aligned with, those numbers.

It turns out that we were using Kioxia RM5 drives with Dell EMC firmware, and those have ever so slightly different specs.

Kioxia works with HPE, Dell EMC, and others to provide custom SSD firmware that also supports each vendor’s management suite. That firmware also includes some performance tuning. Instead of making our readers scroll through dozens of charts to show the above, we wanted to take a higher-level look at the real-world performance of the drives versus SATA SSDs.

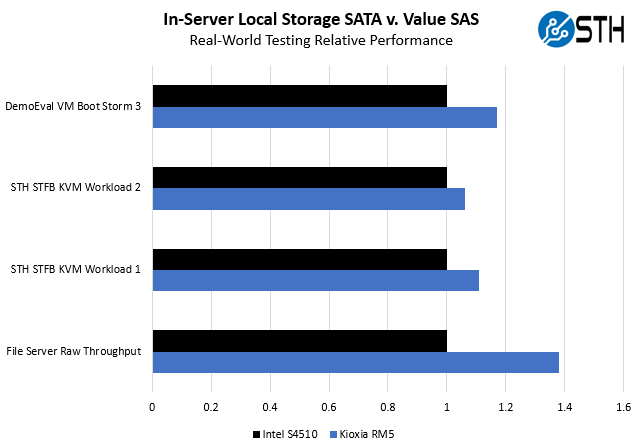

Here is a quick overview of what we tested using 4x Kioxia RM5 drives versus 4x Intel DC S4510 SSDs:

- File Server – we use a 500GB data set spread across the drives and let a series of KVM virtual machines pull data from the file server.

- STH STFB KVM Workload 1 @ Medium VM size – This test uses workers that ingest a data feed, store what is needed, and process the information looking for patterns that can be used in future interactions with a user. This is essentially a more classical machine learning set of workloads. It is heavily CPU bound but becomes storage bound when data is stored on disk, which we do not do during our CPU benchmarks (e.g. our recent quad Intel Xeon Gold 6252 Benchmarks and Review).

- STH STFB KVM Workload 2 @ Medium VM size – This is our more balanced virtualization workload that we run servers and server CPUs through. Workload 2 uses a PHP and JavaScript front end. Data is pulled from a cached database, and server-side processing generates elements that are displayed to a user via the nginx web server, which encrypts data served via HTTPS. Normally, we run data from RAM disks, but in this case we are putting it on SSDs in a dual Intel Xeon Platinum 8180 PowerEdge R740xd server.

- VM Boot Storm 3 – This is a workload we run for one of our DemoEval clients that simply times how long it takes to power-on a set of VMs and have the infrastructure respond to requests. The total data set is around 427GB between OS, application, and data loaded.

Overall, the RM5 posted better numbers, which makes intuitive sense. Sequential performance is much better than on SATA SSDs simply due to the SAS3 interface. Parts of these workloads are CPU bound as well, but seeing different constraints is fairly normal. The Xeon Platinum 8180s are high-end 28-core chips, so one could argue this minimized CPU constraints and put more stress on storage. This is by no means a radical performance improvement, but there is value in providing something incrementally better. Also, all of these workloads are significantly faster on NVMe drives, but that requires changing server configurations, so we are focusing on what one can get in a SAS3/SATA bay.

If one can get better performance using the same server hardware (i.e. without switching to NVMe versions), the next question is how much one pays for that performance. That is what we aim to answer in our market analysis.

Kioxia RM5 Market Analysis

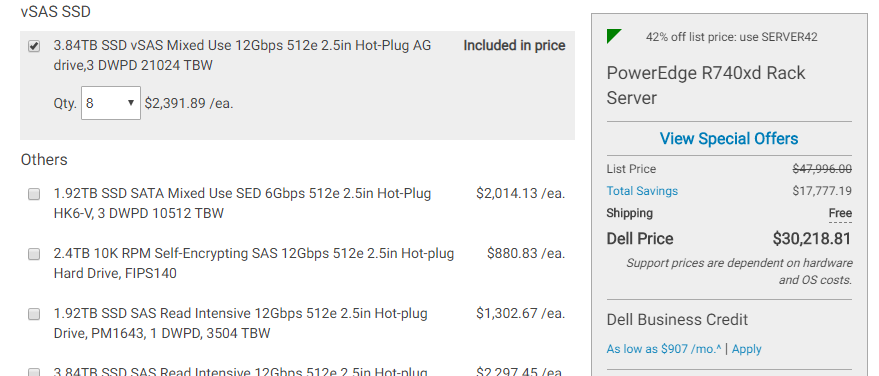

Looking at the Kioxia RM5 in the market, one can find it in places such as the HPE and Dell configurators. In the online configurator for the Dell EMC PowerEdge R740xd we reviewed, the RM5 sits in its own category. It is not under SATA, SAS, or others, but instead under vSAS:

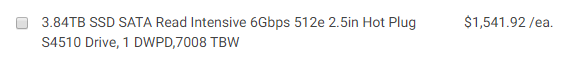

Note that these list prices are meant to have the 42% off coupon applied before negotiating any further discounts. Prices vary, but on the day we are publishing this there are no 3 DWPD SATA drives listed. Instead, all we can find is the 3.84TB 1 DWPD option:

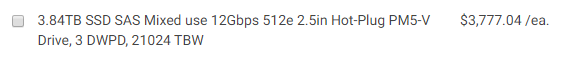

Under SAS3, the 3.84TB 3 DWPD option is the following:

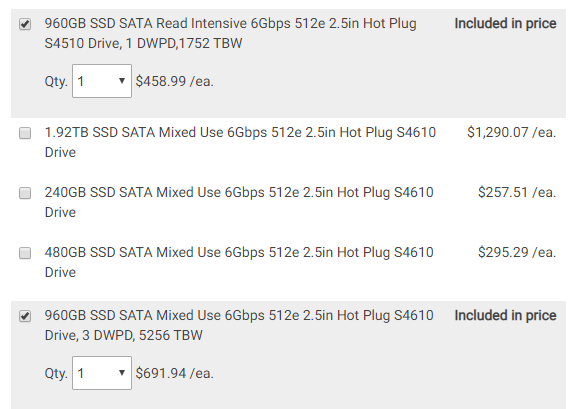

This puts the Kioxia RM5 much closer to SATA pricing than SAS3 pricing. Still, without a 3 DWPD SATA option, we do not have a directly comparable drive to the RM5. Moving to a smaller 960GB capacity, we can get a sense of the delta:

As one can see, the 3 DWPD drive is about 50% more expensive. Using that scaling, a SATA drive comparable to a 3 DWPD Kioxia RM5 would cost roughly $1,541.92 × 1.5 = $2,312.88. That puts the RM5 at about 3-4% more expensive than this theoretical SATA variant.
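The estimate above is simple arithmetic, sketched here in Python. The $1,541.92 list price and the roughly 1.5x uplift for 3 DWPD come from the text; the RM5 price itself is not reproduced, so `premium_pct` takes it as an argument. The helper names are hypothetical, and `apply_coupon` assumes the 42% coupon applies uniformly to every drive.

```python
SATA_1DWPD_3_84TB = 1541.92  # USD list price of the 3.84TB 1 DWPD SATA option (from the text)
DWPD_UPLIFT = 1.5            # ~50% premium for 3 DWPD, as observed at 960GB


def estimated_sata_3dwpd(price_1dwpd: float, uplift: float = DWPD_UPLIFT) -> float:
    """Scale a 1 DWPD price by the observed endurance uplift, rounded to cents."""
    return round(price_1dwpd * uplift, 2)


def premium_pct(rm5_price: float, sata_estimate: float) -> float:
    """Percentage by which the RM5 price exceeds the estimated SATA price."""
    return (rm5_price / sata_estimate - 1) * 100


def apply_coupon(list_price: float, coupon: float = 0.42) -> float:
    """Assumption: the configurator coupon is a flat percentage off every drive."""
    return list_price * (1 - coupon)


est = estimated_sata_3dwpd(SATA_1DWPD_3_84TB)
print(f"Estimated 3 DWPD SATA 3.84TB: ${est:,.2f}")  # → Estimated 3 DWPD SATA 3.84TB: $2,312.88
```

Because a uniform coupon scales both prices by the same factor, it cancels out of `premium_pct`: the percentage premium is the same whether it is computed on list prices or on discounted prices.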

Single-port SAS3 is a better interface than SATA, but the question becomes by how much. At a 3-5% premium, it makes a lot of sense, especially in servers that already include SmartArray, PERC, or other SAS3 adapters. If the server is an ultra-low-cost model, it may use onboard SATA, in which case the RM5’s value proposition is diminished. Intel and AMD servers have SATA onboard but require SAS3 RAID cards and HBAs for higher-end connectivity. Major vendors such as HPE, Dell EMC, and Lenovo push to have their SAS3 controllers in most systems, since those cards integrate with their iLO, iDRAC, and XClarity management portfolios.

Final Words

The Kioxia RM5 is a bold move. As the industry forges ahead with a transition to NVMe for performance workloads, we see SATA being relegated to spinning disks and boot SSDs. SAS3 sits somewhere in the middle. It is easier to scale SAS3 than NVMe, and SAS3 has a mature dual-port infrastructure that will be around for years in higher-end storage arrays. Kioxia saw an opportunity in the superior 12Gbps SAS3 interface already inside servers from major vendors and is pitching a low-cost single-port drive as an alternative to the 6Gbps SATA III ecosystem. That is a great idea. So long as the costs are similar, this bold move by Kioxia is a win for server buyers.

What am I missing? Why would I buy a more expensive (~25+% premium) single-port SAS device if I could use a cheaper 3 DWPD SATA device like an Intel S4610?

SAS backplanes support SATA devices, so there are no compatibility issues.

Finally, don’t you think it is misleading on the part of Dell to mix single and dual port devices in options, without calling out the difference?

I think the point was that for an equivalent SATA drive it is more around a 3-5% premium. Dell sells the S4610 for a 50% list price premium and that is a better comparison point.

I do like that they have a distinct section for the vSAS drives.

Sorry I’m late to comment, but I’m distilling the essays I originally penned because I couldn’t believe that everyone missed the Elephant in the Room significances (plural big deals. Plural big deals for horizontal industry no less), which is how this announcement will probably save SAS itself, the protocol, from sublimation into history, eliminated by repackaged resellers in the volume storage business and hegemonies imposed on anyone whose account isn’t a prize for Oracle or whoever is gouging 90’s Oracle size commissions presently.

You only need to ask what Storage Spaces is on about to get everything that I would take several thousand words to explain, from how much our economy has depended on serious computing platforms like SCSI (the I is the word that matters here and needs to be replaced in the next acronym evolution: Scalable Serial Availability Architecture American Synchronised Symbiosis Platform S2AS2P or (SA)2 ASP I know I’ve hashed that because my mind is stuck on American Sympatico Platform but I’m shooting for a fit for SAS-ASP extending the deserving of better recognition work of the SCSI into our wider computing needs where storage is required to play a much expanded critical role without sufficient thought given to any of it directly.

Anyone remember the QUOTRON machine?

SCSI was the LAN

LAN does it a disservice: it was a distributed system.

This product announced here is defining a category that’ll be misjudged by the UNIX/Linux primary shops, possibly by far most of the heterogeneous shops we know, too, unfortunately:

Storage Spaces requires SAS drives.

Give Windows Server a modestly generous SAS JBOD, and it will happily spin up about any network storage protocol, block or object, which anybody needs short of who ought to know about DDN or learn to love parallel fs design.

This market effectively just came into existence with this announcement.

Erratum:

I meant reinstated, not replaced, in my first comment. Recall the Initiative!