With the launch of the Intel Xeon Scalable Processor Family, we wanted to take a moment to discuss the platform at a high level. We have a ton of coverage of the new platform, which you can access via our Skylake-SP Coverage Central. Many of those articles are in-depth analysis pieces, so here we wanted to step back and look at the overall platform implications.

Intel Xeon Scalable Processor Family Platform Overview

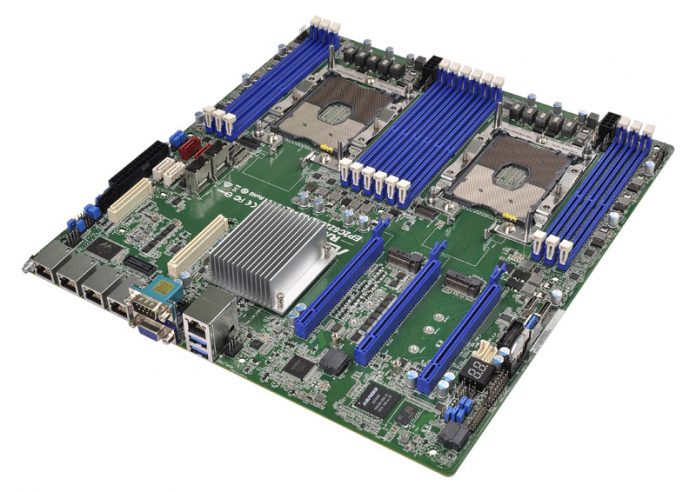

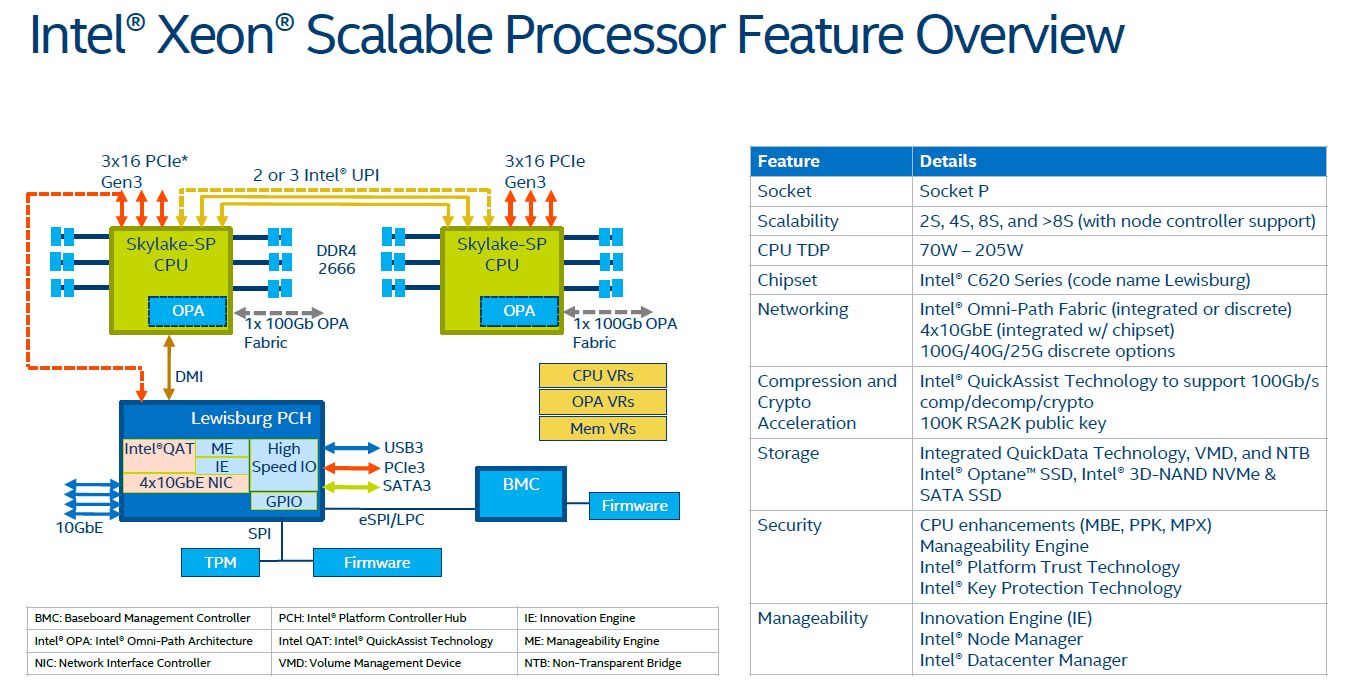

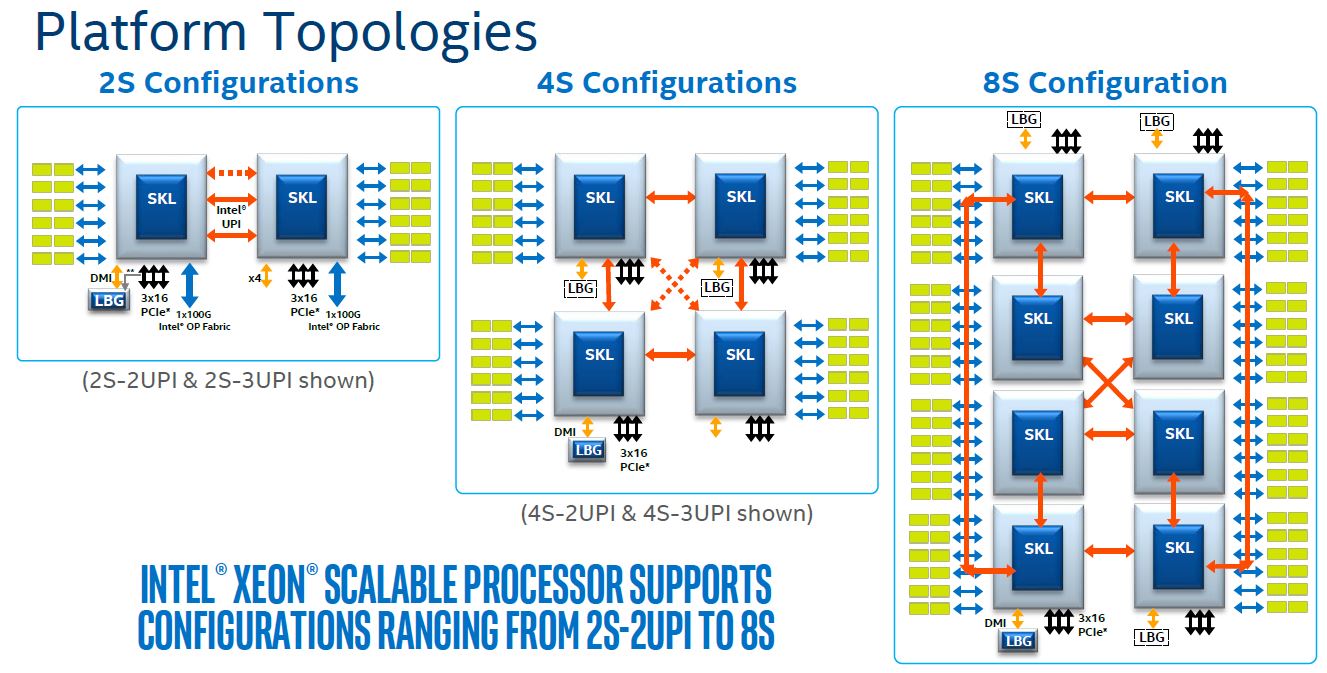

Intel provided this overview slide, which we found extremely useful for describing the platform. Intel has removed the Xeon E5 versus Xeon E7 differentiation, and the platform now scales to 4P and larger configurations with relative ease. There is a new socket, a new chipset, new NVMe storage support, an optional integrated fabric, more cores, more memory channels, and new security features. Suffice it to say, the platform is meant to be all-around bigger than the Intel Xeon E5 family ever was.

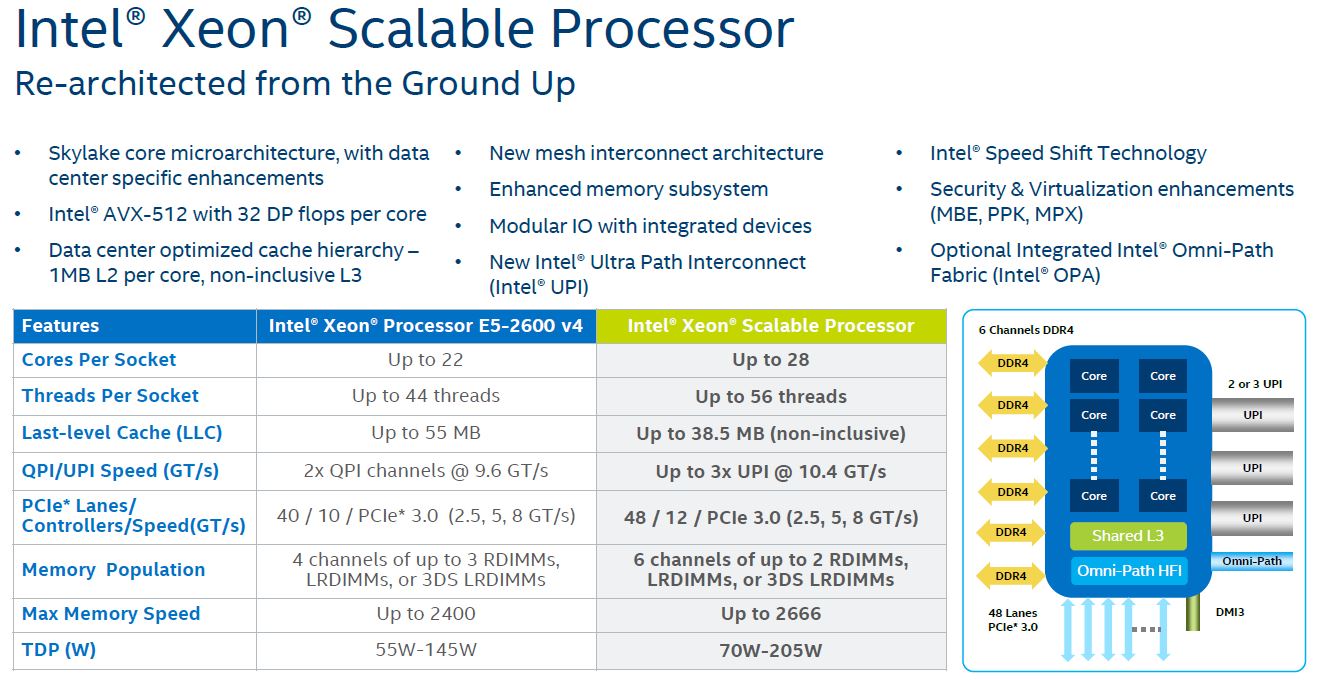

The Intel Xeon Scalable Processor Family improves upon the previous-generation Intel Xeon E5 V4 and E7 V4 families in just about every metric. Here is Intel’s slide on the talking points:

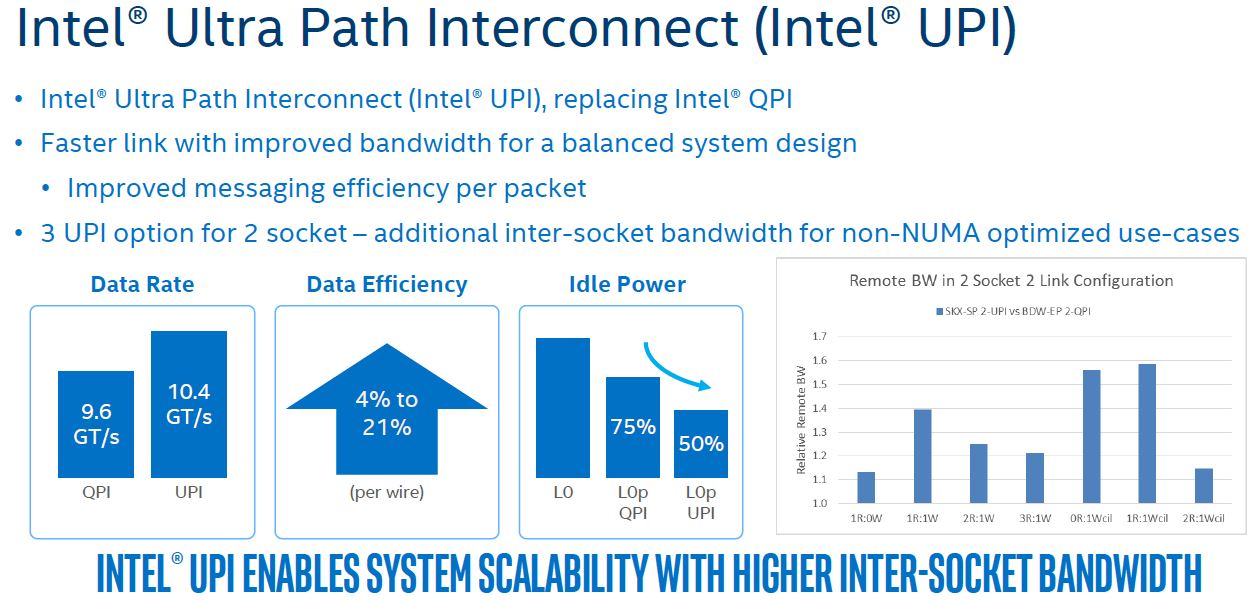

With the platform scaling to 4 and 8 sockets (with high-end SKUs) and adding more I/O and memory bandwidth, it was time to replace QPI. QPI was the main inter-socket link Intel used to move data between CPUs in the Intel Xeon E5 and E7 era. Replacing QPI is the Intel Ultra Path Interconnect (Intel UPI).

The key here is that Intel is using a new encoding and higher link speeds to deliver more inter-socket bandwidth than QPI offered.
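To put rough numbers on the link speed difference, here is a minimal back-of-the-envelope sketch. It assumes commonly quoted top speeds of 9.6 GT/s for QPI on the Xeon E5 V4 generation and 10.4 GT/s for UPI, plus an assumed 2 bytes of data per transfer in each direction. It deliberately ignores encoding and protocol overhead, so it only shows the raw speed bump, not the efficiency gains from the new encoding.

```python
# Back-of-the-envelope raw link bandwidth for QPI vs. UPI.
# ASSUMPTIONS (not from the article): 9.6 GT/s top QPI speed (Xeon E5 V4),
# 10.4 GT/s top UPI speed, and 2 bytes of data per transfer per direction.
# Encoding/protocol overhead is ignored entirely.
BYTES_PER_TRANSFER = 2  # assumed payload width per transfer, per direction


def link_gbs(gt_per_s: float, bytes_per_transfer: int = BYTES_PER_TRANSFER) -> float:
    """Raw per-direction bandwidth of one link in GB/s."""
    return gt_per_s * bytes_per_transfer


def link_gbs_bidirectional(gt_per_s: float) -> float:
    """Raw bandwidth of one link counting both directions, in GB/s."""
    return 2 * link_gbs(gt_per_s)


qpi = link_gbs(9.6)
upi = link_gbs(10.4)
print(f"QPI link: {qpi:.1f} GB/s per direction, {link_gbs_bidirectional(9.6):.1f} GB/s total")
print(f"UPI link: {upi:.1f} GB/s per direction, {link_gbs_bidirectional(10.4):.1f} GB/s total")
print(f"Raw per-link uplift: {(upi / qpi - 1) * 100:.1f}%")
```

Because both figures scale linearly with the transfer rate, the raw per-link gain here is simply the ratio of the two speeds; any additional real-world benefit from the new encoding would come on top of that.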

The new Intel Xeon Scalable CPUs support either 2 or 3 UPI links per CPU. Intel can support a lower-performance, lower-cost 4S design with 2 UPI links per CPU (Intel Xeon Gold 5100 series), or higher-performance 4S and up to 8S designs with CPUs that feature 3 UPI links. We have more details on how these links interface with the CPUs in our Intel Mesh Interconnect Architecture piece.
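One way to see why the third UPI link matters in a 4S system is to count socket-to-socket hops. The sketch below assumes that a 2-link 4S design wires its sockets in a ring and that a 3-link 4S design connects every socket directly to every other socket. These layouts are illustrative assumptions for this example, and 8S topologies are not modeled.

```python
from collections import deque

# ASSUMED 4S topologies (illustrative, not taken from Intel documentation):
# 2 UPI links per CPU -> sockets form a ring; 3 UPI links -> fully connected.
RING_4S = {0: [1, 3], 1: [0, 2], 2: [1, 3], 3: [2, 0]}
FULL_4S = {s: [t for t in range(4) if t != s] for s in range(4)}


def max_hops(adjacency: dict[int, list[int]]) -> int:
    """Worst-case socket-to-socket hop count, found with a BFS from every socket."""
    worst = 0
    for start in adjacency:
        dist = {start: 0}
        queue = deque([start])
        while queue:
            node = queue.popleft()
            for neighbor in adjacency[node]:
                if neighbor not in dist:
                    dist[neighbor] = dist[node] + 1
                    queue.append(neighbor)
        worst = max(worst, max(dist.values()))
    return worst


def links_per_socket(adjacency: dict[int, list[int]]) -> int:
    """Highest number of links any single socket uses."""
    return max(len(neighbors) for neighbors in adjacency.values())


for name, topo in (("2-link ring", RING_4S), ("3-link full", FULL_4S)):
    print(f"{name}: {links_per_socket(topo)} links/socket, worst case {max_hops(topo)} hop(s)")
```

Under these assumptions, the ring forces traffic between opposite sockets through an intermediate CPU, while the fully connected layout reaches every socket in a single hop, which is the kind of trade-off that separates the lower-cost and higher-performance 4S designs.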