Intel Xeon Platinum 9200 Performance and DL Boost

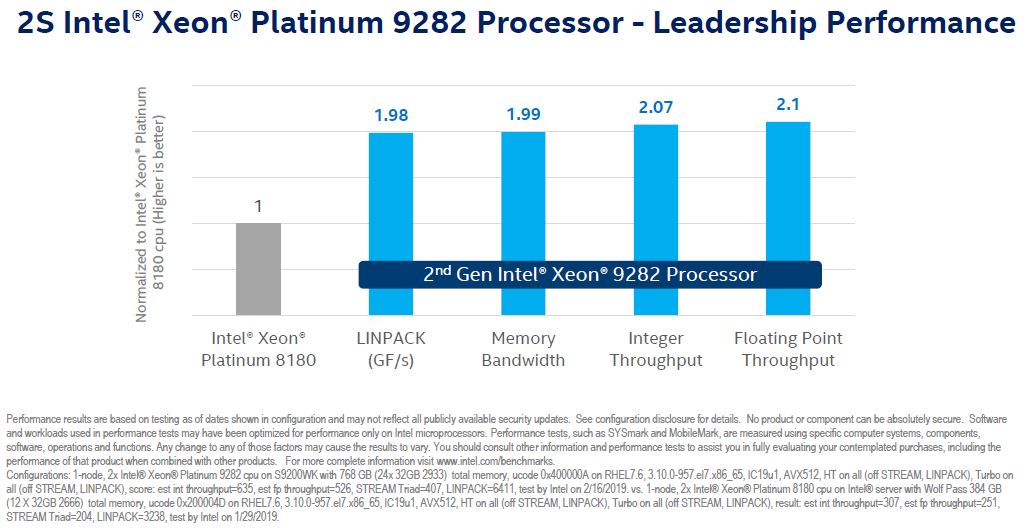

Intel showed off a number of comparisons for the new chips, and we are going to show a few. First is the Intel Xeon Platinum 9282 versus the Intel Xeon Platinum 8180. Here, the Platinum 9282 delivers roughly double the performance of the Platinum 8180, give or take 5%. From what we have seen with the Platinum 8180 versus the 8280, one does lose some performance by moving to the denser configuration. At some point, power and thermals come into play.

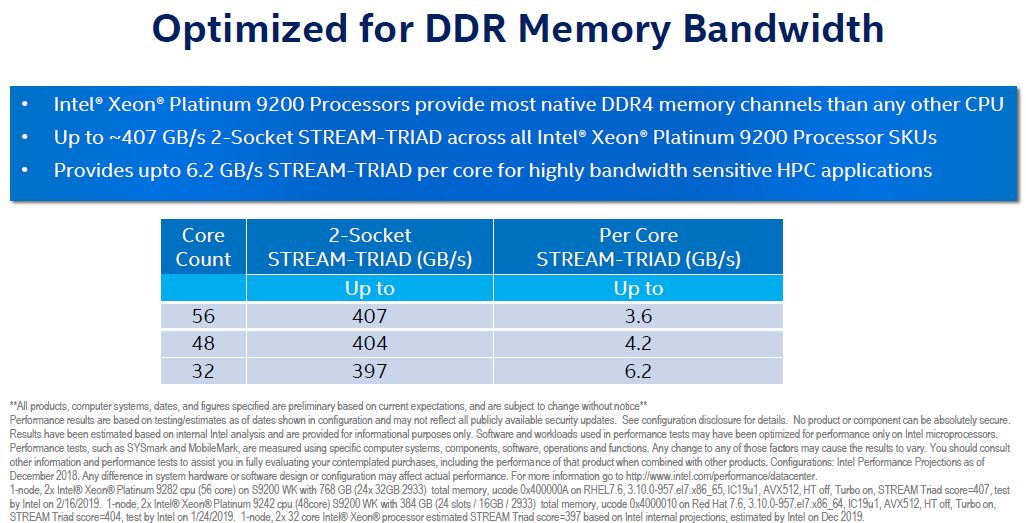

Intel likes to highlight that it has excellent memory bandwidth with its new chips. Indeed, memory bandwidth is a major limiting factor in real HPC workloads, so it makes sense that Intel is optimizing here.

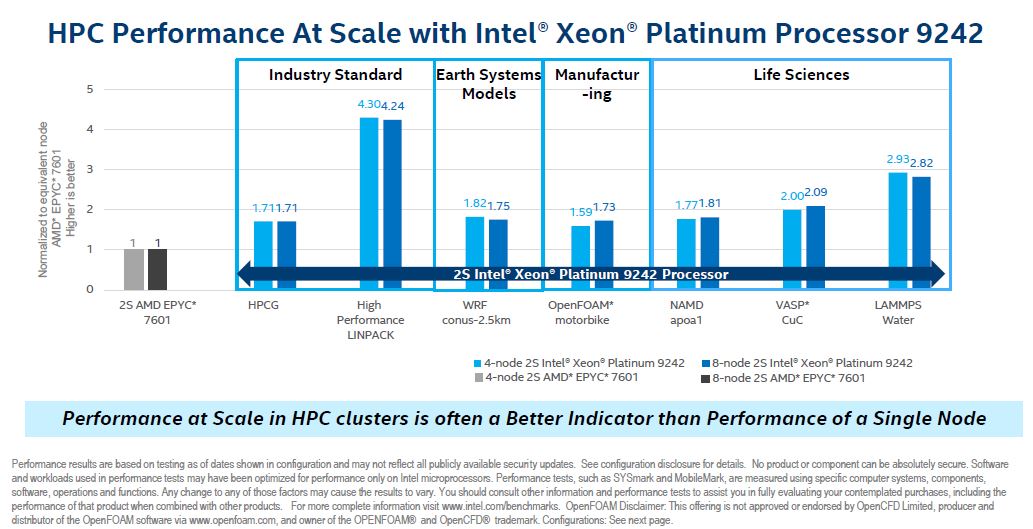

One chart that was less exciting was the comparison of the Intel Xeon Platinum 9242 versus the AMD EPYC 7601. To read this correctly, Intel is essentially comparing its dual 48-core platform to the AMD EPYC 32-core platform launched seven quarters ago. There are a few major omissions here:

- The AMD EPYC is a socketed solution available from many vendors and the Intel Xeon Platinum 9242 must be purchased on a PCB, likely from Intel.

- The 2S AMD EPYC 7601 platforms can have 32 DDR4 DIMMs across 8 memory channels per socket, while the Platinum 9242 platforms launched have 24 DDR4 DIMMs across 12 memory channels per socket.

- The AMD EPYC 7601 is a 180W TDP part while the Xeon Platinum 9242 is almost double that at 350W.

- The AMD EPYC 7601 platforms have 128 PCIe lanes while the Intel Xeon Platinum 9242 has only 80 available on Intel’s PCB.

- From a power, and likely price, perspective, Intel is essentially comparing a de-tuned version of its 4-socket platforms to a 2-socket AMD platform by calling the two-die, two-NUMA-domain Platinum 9242 a socket.

- Intel is keeping the number of nodes constant.
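To put the caveats above in numbers, here is a minimal sketch that compares the two 2S platforms using only the figures quoted in the list. The dictionary keys and the `ratios` helper are illustrative names, and the sketch assumes the quoted TDPs are per CPU package while the DIMM and PCIe lane counts are per 2S platform.

```python
# Per-platform (2S) figures taken from the list above.
# Assumption: TDP is per CPU package, so a 2S node doubles it.
epyc_7601_2s = {"cores": 2 * 32, "dimms": 32, "cpu_tdp_w": 2 * 180, "pcie_lanes": 128}
xeon_9242_2s = {"cores": 2 * 48, "dimms": 24, "cpu_tdp_w": 2 * 350, "pcie_lanes": 80}


def ratios(a, b):
    """Return a[k] / b[k] for every key, rounded to three decimals."""
    return {k: round(a[k] / b[k], 3) for k in a}


intel_vs_amd = ratios(xeon_9242_2s, epyc_7601_2s)
for name, value in intel_vs_amd.items():
    print(f"{name}: {value}x")
```

Under those assumptions, the Intel platform has 1.5x the cores at about 1.94x the CPU TDP, with 0.75x the DIMM slots and 0.625x the PCIe lanes.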

With those caveats, here is the chart.

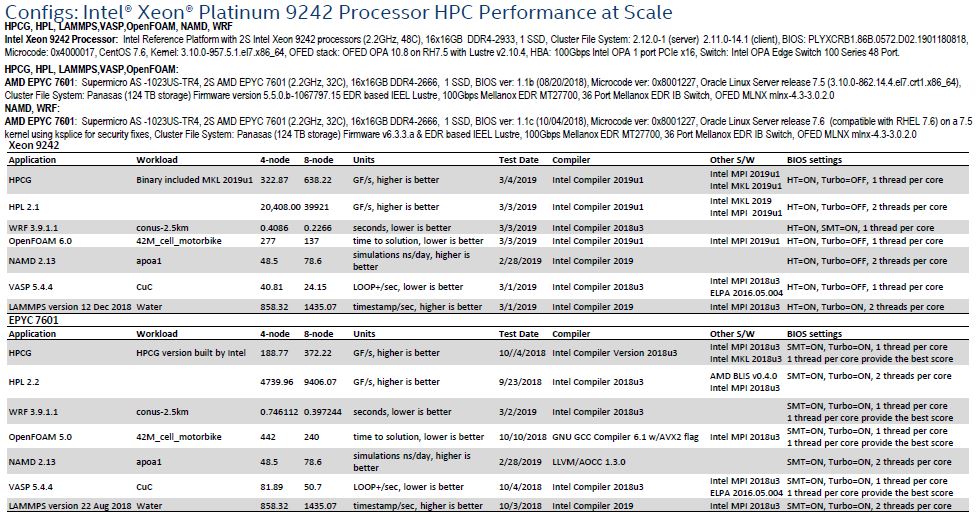

Here is the backup on the configurations:

This is one of those comparisons that is true and has some merit. Fewer nodes mean fewer Mellanox (soon to be NVIDIA) 200Gbps InfiniBand cards, 100GbE/200GbE cards, or even Intel’s 100Gbps OPA adapters. It also means fewer cables, fewer switch ports, and less power consumed moving data to nodes. At the same time, this is comparing a mainstream platform to a narrow-scope HPC platform designed for CPU compute.
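As a rough illustration of the node-count argument, the sketch below works out how many 2S nodes a cluster needs to reach a fixed core count. The 9,216-core target and the one-fabric-adapter-per-node rule are hypothetical values chosen for the example, not figures from Intel's slides.

```python
import math


def nodes_needed(target_cores, cores_per_node):
    """Smallest number of whole nodes that reaches the target core count."""
    return math.ceil(target_cores / cores_per_node)


TARGET_CORES = 9216   # hypothetical cluster size
ADAPTERS_PER_NODE = 1  # assumption: one fabric adapter (and cable) per node

xeon_nodes = nodes_needed(TARGET_CORES, 2 * 48)  # 2S Platinum 9242 node
epyc_nodes = nodes_needed(TARGET_CORES, 2 * 32)  # 2S EPYC 7601 node

adapters_saved = (epyc_nodes - xeon_nodes) * ADAPTERS_PER_NODE
print(xeon_nodes, epyc_nodes, adapters_saved)
```

With these assumed inputs, the denser platform needs 96 nodes instead of 144, which removes 48 adapters, cables, and switch ports from the fabric.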

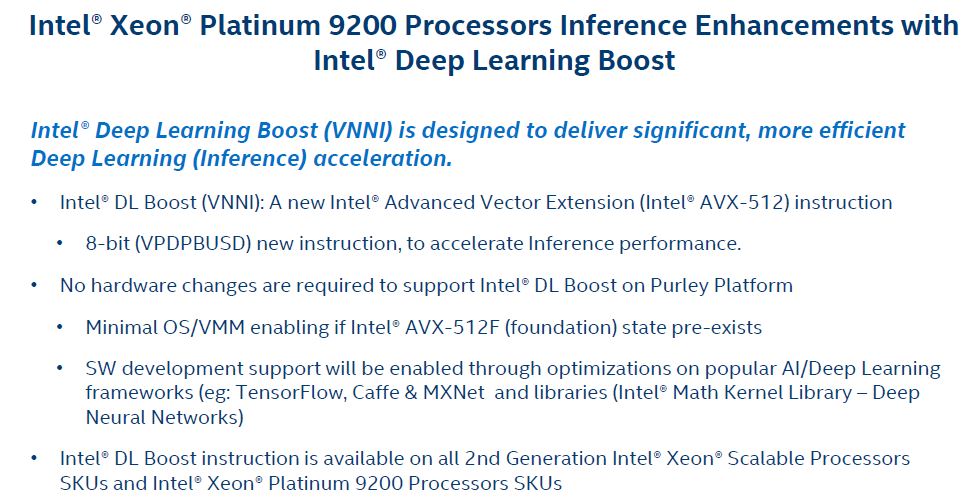

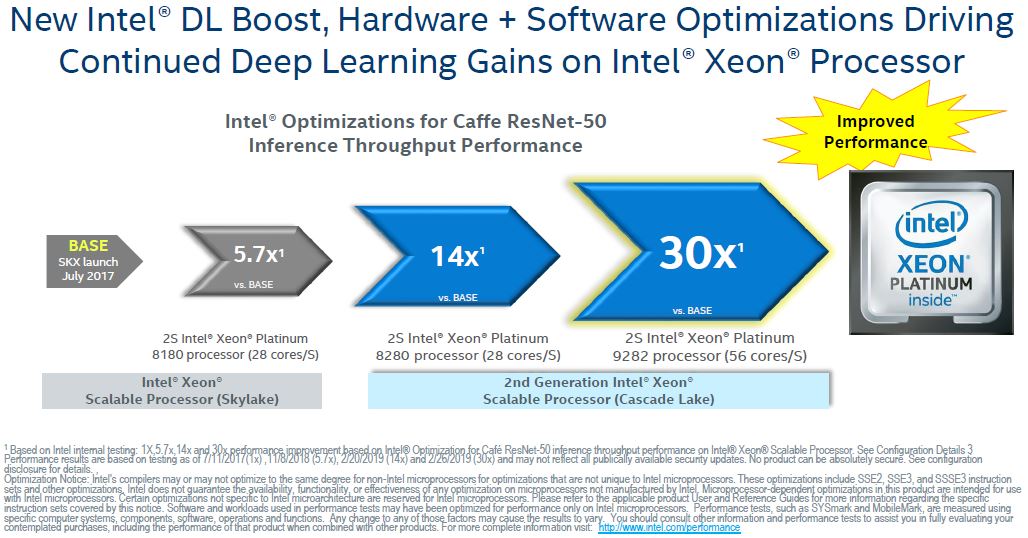

Like the rest of the second generation Intel Xeon Scalable family, the Xeon Platinum 9200 supports Deep Learning Boost or VNNI. Even though Intel is calling this a “deep learning” feature, it is really an inferencing instruction at this point.
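To make the "inferencing instruction" point concrete, here is a minimal pure-Python model of what one 32-bit lane of the VNNI `VPDPBUSD` instruction computes: four unsigned 8-bit values are multiplied by four signed 8-bit values, and the summed products are accumulated into a signed 32-bit integer. This is only a sketch of the arithmetic, not how the instruction is invoked in practice, and the function names `to_int32` and `vpdpbusd_lane` are made up for illustration.

```python
def to_int32(x):
    """Wrap a Python int to signed 32-bit (the non-saturating form wraps)."""
    x &= 0xFFFFFFFF
    return x - (1 << 32) if x & 0x80000000 else x


def vpdpbusd_lane(acc, a_u8, b_s8):
    """Model one 32-bit lane: acc += sum(a[i] * b[i]) over four byte pairs.

    a_u8 holds unsigned 8-bit values (e.g. quantized activations),
    b_s8 holds signed 8-bit values (e.g. quantized weights).
    """
    assert len(a_u8) == len(b_s8) == 4
    assert all(0 <= a <= 255 for a in a_u8)
    assert all(-128 <= b <= 127 for b in b_s8)
    dot = sum(a * b for a, b in zip(a_u8, b_s8))
    return to_int32(acc + dot)


# 10 + (1*5 + 2*-6 + 3*7 + 4*-8) = 10 - 18 = -8
result = vpdpbusd_lane(10, [1, 2, 3, 4], [5, -6, 7, -8])
print(result)
```

The low-precision integer inputs are why this helps inferencing on quantized INT8 models rather than typical floating-point training.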

Accelerating inferencing with Intel DL Boost is important. There is a lot of work in the HPC community today around using inferencing to enhance observations and to parse the results of those observations.

This is certainly a great feature that the HPC community will use.

Final Words

The Intel Xeon Platinum 9200 series is designed first and foremost as an HPC platform. Selling the chips soldered to an Intel PCB means we will not see a broad partner ecosystem making platforms. More than a few vendors have commented to STH that they are not supporting the Xeon Platinum 9200 series because they cannot provide value in the ecosystem beyond cooling.

One thing is certain: the Intel Xeon Platinum 9200 is not a mainstream part. It is a part for certain HPC applications where compute density is the primary constraint, rather than memory capacity or accelerator density. In that corner of the market, the Intel Xeon Platinum 9200 will be the chip to beat for at least Q2 2019.
