Innovium Teralynx 7-based 32x 400GbE Switch Performance

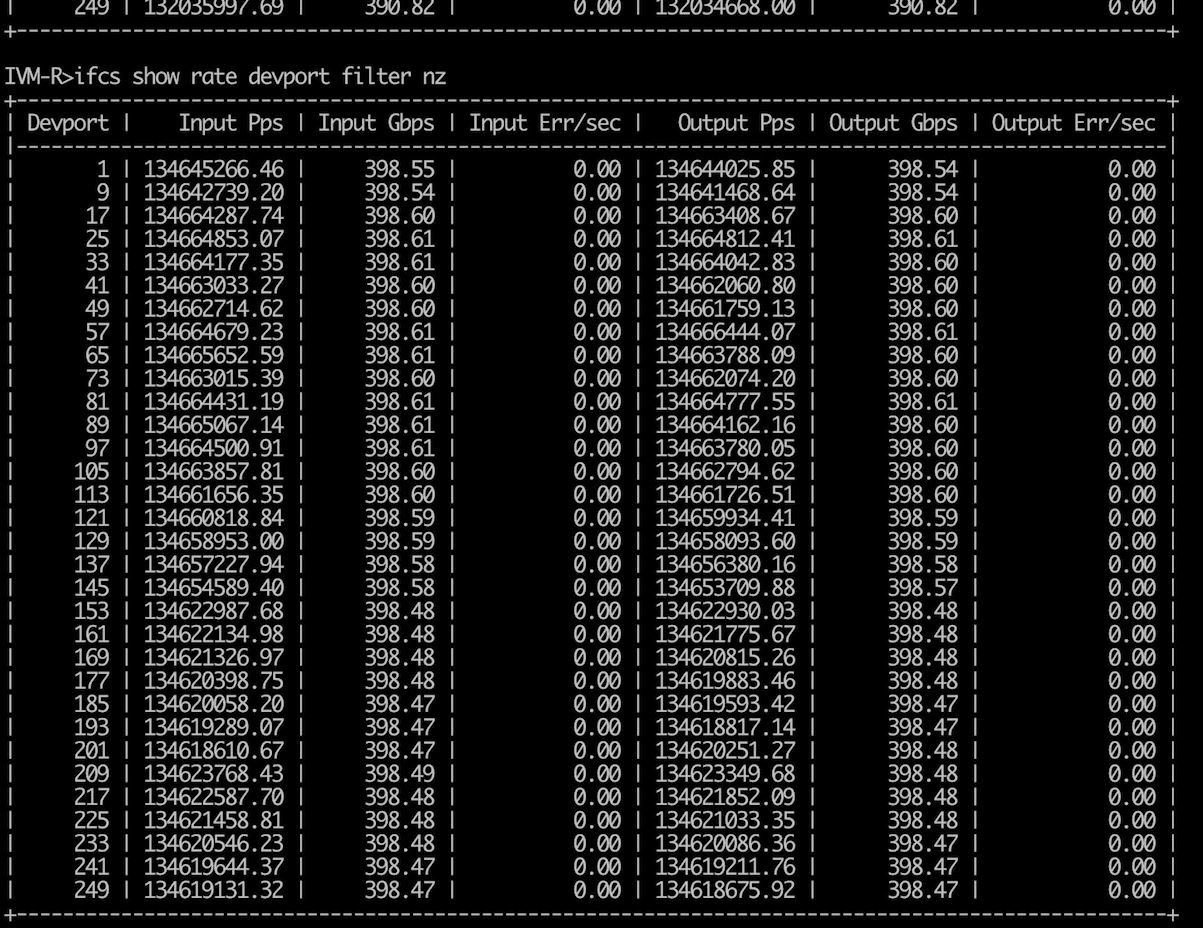

In terms of performance, STH visited the Innovium lab (fully masked and distanced, with only three people on the entire floor that day). We were able to use the Spirent test equipment to drive 400Gbps of traffic on both ends of a snake configuration. The screenshot above is from SONiC (again, there is a bit more with the Spirent UI in the video).

As you can see, each switch port shows 400Gbps on both the input and output side because this test drives bi-directional traffic. Billions of packets per second are being passed, with around 12.8Tbps of traffic in each direction.
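As a rough sanity check on those figures, the arithmetic can be sketched in a few lines of Python. The port count and port speed come from the switch itself; the frame sizes are assumptions for illustration only, since the frame size used in the Spirent run is not stated here. The 20 bytes of per-frame wire overhead are the standard Ethernet preamble, start-of-frame delimiter, and inter-frame gap.

```python
PORTS = 32                 # 32x 400GbE front-panel ports
PORT_GBPS = 400            # line rate per port, per direction
WIRE_OVERHEAD_BYTES = 20   # preamble (7) + SFD (1) + inter-frame gap (12)


def aggregate_tbps(ports=PORTS, port_gbps=PORT_GBPS):
    """Total switch bandwidth in one direction, in Tbps."""
    return ports * port_gbps / 1000


def line_rate_pps(frame_bytes, port_gbps=PORT_GBPS):
    """Packets per second one port carries at line rate for a given frame size."""
    bits_on_wire = (frame_bytes + WIRE_OVERHEAD_BYTES) * 8
    return port_gbps * 1e9 / bits_on_wire


if __name__ == "__main__":
    print(f"Aggregate: {aggregate_tbps():.1f} Tbps per direction")
    # Frame sizes below are illustrative assumptions, not the tested values.
    for size in (64, 512, 1518):
        total_gpps = PORTS * line_rate_pps(size) / 1e9
        print(f"{size:>5}B frames: {total_gpps:.2f} billion packets/s across all ports")
```

Even with large frames, 32 ports at line rate land in the billions of packets per second, which is consistent with what the counters show.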

We checked eBay immediately afterward, and there were no 400GbE Spirent test units available for the STH lab. Frankly, at this level of performance, one needs dedicated test hardware like this.

Innovium Teralynx 7-based 32x 400GbE Power

In terms of power consumption, we mentioned the 1.3kW 80Plus Platinum power supply. The key here is that the PSU is designed to handle the switch ASIC, cooling, and all of the QSFP-DD pluggables.

We were told that typical power consumption is around 600W. That is significantly higher than we see in the 3.2T generation, but it is not necessarily quadrupling what one would see with a Broadcom Tomahawk, as an example. One gets power savings both from scaling up the switch and from the larger switch radix.

Final Words

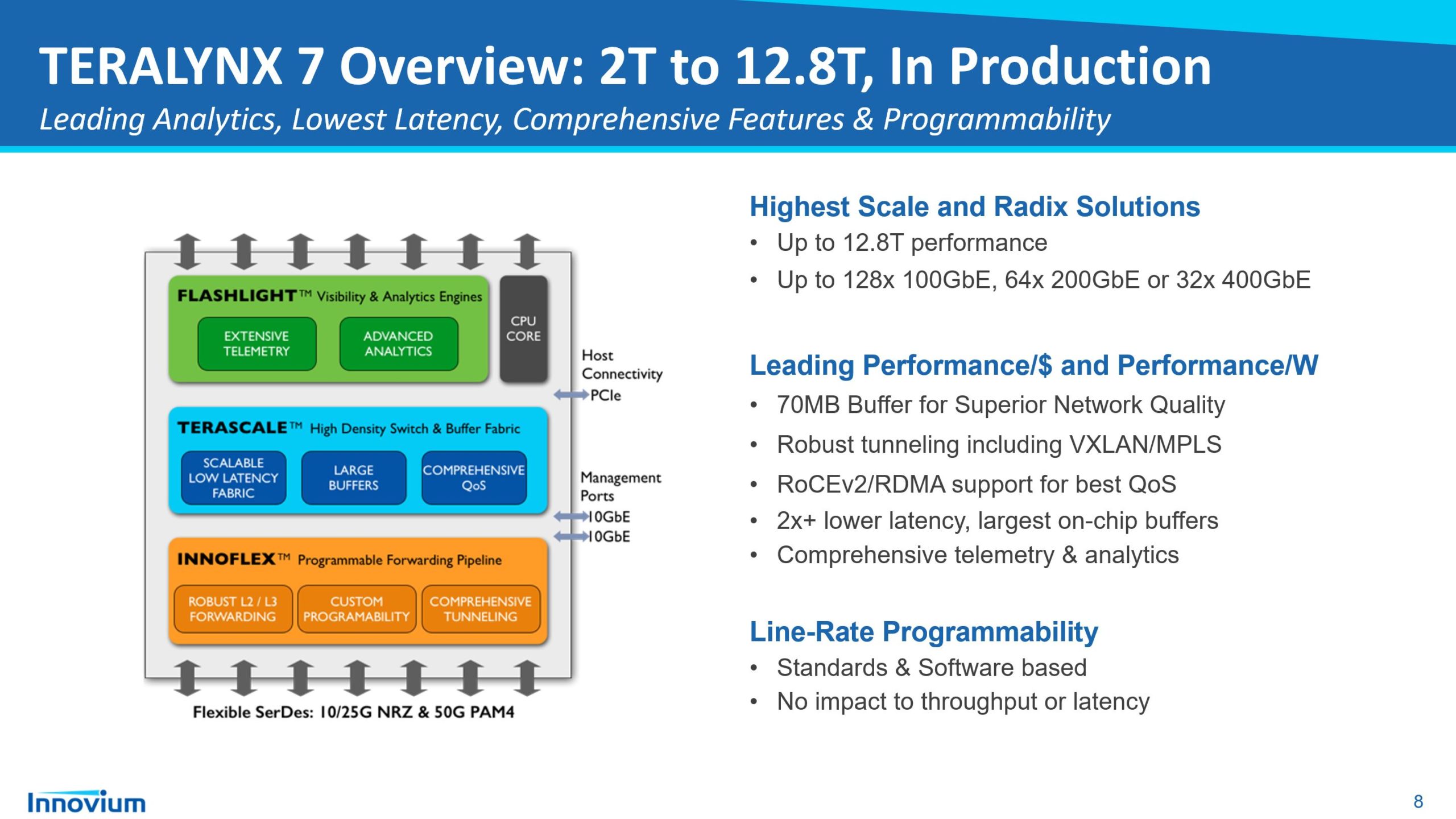

Taking a step back, the radix point is extremely important. Moving from the 32x 100GbE to the 32x 400GbE form factor means we can also break out those 400GbE ports to 64x 200GbE or 128x 100GbE. Combinations are also possible. For our readers, the HPE-Cray Slingshot is an example of a 64x 200GbE solution in the supercomputer market, so it is in a similar 12.8T generation to this switch. Servicing more bandwidth and potentially more 100GbE ports in a single switch means we have a more robust radix. This has two main impacts.
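The breakout arithmetic is simple enough to sketch. The helper names below are hypothetical, and the snippet assumes that every 400GbE port splits evenly into lower-speed logical ports. Which breakout modes a given platform actually supports depends on the ASIC, the optics, and the NOS configuration.

```python
def breakout_ports(physical_ports, port_gbps, breakout_gbps):
    """Logical port count when every physical port is split to breakout_gbps."""
    if port_gbps % breakout_gbps != 0:
        raise ValueError("breakout speed must divide the port speed evenly")
    return physical_ports * (port_gbps // breakout_gbps)


def mixed_breakout(plan, port_gbps=400):
    """plan maps a breakout speed (Gbps) to the number of physical ports using it.

    Returns a dict of breakout speed -> logical port count.
    """
    return {speed: breakout_ports(count, port_gbps, speed)
            for speed, count in plan.items()}


if __name__ == "__main__":
    print(breakout_ports(32, 400, 200))   # 64
    print(breakout_ports(32, 400, 100))   # 128
    # One possible combination: 8 ports left at 400G, 8 split to 200G, 16 to 100G.
    print(mixed_breakout({400: 8, 200: 8, 100: 16}))
```

Whatever the mix, the total stays at 12.8Tbps. Breakout only changes how that bandwidth is divided among logical ports.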

First, we can flatten the network and use fewer switches. Using fewer switches reduces the power consumed by networking in a data center, allowing more to be dedicated to compute/storage. Flattening the network also means we can get better latency since there are fewer hops in the layers of aggregation sitting above a top-of-rack switch. The counterpoint is that fewer switches mean that each can have a larger impact if there is an issue, which is why Innovium stresses its Flashlight telemetry solution.

Second, a data center can use the same number of switches but provide more bandwidth to nodes or support more of them.
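To put rough numbers on both impacts, here is a minimal sketch using the textbook non-blocking two-tier leaf-spine model, where every switch has the same radix and each leaf splits its ports evenly between hosts and uplinks. This is an idealized assumption for illustration. Real deployments usually oversubscribe uplinks and mix port speeds, so actual counts will differ.

```python
def two_tier_capacity(radix):
    """Size a non-blocking two-tier leaf-spine fabric built from identical switches.

    Each leaf uses half its ports for hosts and half for uplinks, with one
    uplink to every spine. Each spine port connects to a different leaf.
    """
    if radix < 2 or radix % 2:
        raise ValueError("radix must be a positive even number")
    hosts_per_leaf = radix // 2
    spines = radix // 2
    leaves = radix
    return {
        "hosts": leaves * hosts_per_leaf,
        "leaves": leaves,
        "spines": spines,
        "switches": leaves + spines,
    }


if __name__ == "__main__":
    # 100GbE ports per switch: 32 for the older generation, 128 with breakout.
    for radix in (32, 128):
        fabric = two_tier_capacity(radix)
        print(f"radix {radix}: up to {fabric['hosts']} hosts "
              f"using {fabric['switches']} switches")
```

Under this model, a radix of 32 tops out at 512 hosts across 48 switches, while a radix of 128 reaches 8,192 hosts in the same two tiers. A single 128-port switch can also serve 128 hosts with no second tier at all.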

In either case, one can understand why larger switches are important. It will still be some time until we see 400GbE adapters in mainstream servers, simply because we need PCIe Gen5 for an x16 slot to handle that bandwidth without resorting to multi-host adapters or something similar. Still, taking a look at a solution like this is always a great window into not just what is available, but also what is driving the change in the networking industry.
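The PCIe point can be checked with a quick calculation. The sketch below uses the published per-lane transfer rates and 128b/130b line encoding for PCIe Gen3 through Gen5. It deliberately ignores packet-level protocol overhead, so usable throughput is somewhat lower than these figures. The function names are hypothetical.

```python
# Per-lane raw transfer rate in GT/s for each PCIe generation.
GT_PER_LANE = {3: 8.0, 4: 16.0, 5: 32.0}
ENCODING_EFFICIENCY = 128 / 130   # 128b/130b encoding used by Gen3 and later


def pcie_gbps(gen, lanes=16):
    """Approximate one-direction slot bandwidth in Gbps, before protocol overhead."""
    return GT_PER_LANE[gen] * lanes * ENCODING_EFFICIENCY


def can_feed(gen, nic_gbps=400, lanes=16):
    """True if the slot's raw bandwidth is at least the NIC's line rate."""
    return pcie_gbps(gen, lanes) >= nic_gbps


if __name__ == "__main__":
    for gen in (3, 4, 5):
        verdict = "enough" if can_feed(gen) else "not enough"
        print(f"PCIe Gen{gen} x16: {pcie_gbps(gen):.1f} Gbps, {verdict} for 400GbE")
```

A Gen4 x16 slot comes in at roughly 252Gbps per direction, well short of a single 400GbE port, while Gen5 x16 provides roughly 504Gbps.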

Looks like it is 1M SERDES shipped and not 1M 400G ports.

I’d say this is one of the best high-end switch reviews I’ve ever seen. I’d like to see more on SONiC installs and ops. Good work though.

How STH kills time before the AMD Milan launch ——-> Oh here’s some ultra amazing switch.

Second the request for articles on SONiC installs and ops. These switch teardowns are fantastic.

OMG, 32x 400Gbps. And in the meantime small business/office users are waiting for fanless 10Gbps switch with more than just 2-4 10GigEs.

That market share number for Innovium feels a little too high. Any idea why?

If you are looking closely, it is not total switch market share.

This is really cool. I can help with the interconnections. We should talk if you are interested in QSFP-DD 400G connectors, cables and optics.

That switch consumes more power than my entire rack at home lol. Great review though!