At SC22, Ingrasys showed off an updated version of a product line we showed last year and got to demo earlier in 2022. The basic premise is putting SSDs directly onto an Ethernet network via NVMeoF so the storage can be accessed and composed elsewhere, alongside components like DPUs.

Ingrasys Has an Updated Ethernet SSD Switch for Kioxia SSDs at SC22

If you want to learn more about the Ingrasys-Kioxia solution, we are going to simply point you to our Ethernet SSDs Hands-on with the Kioxia EM6 NVMeoF SSD.

At SC22, we saw a few updates to the Ingrasys solution.

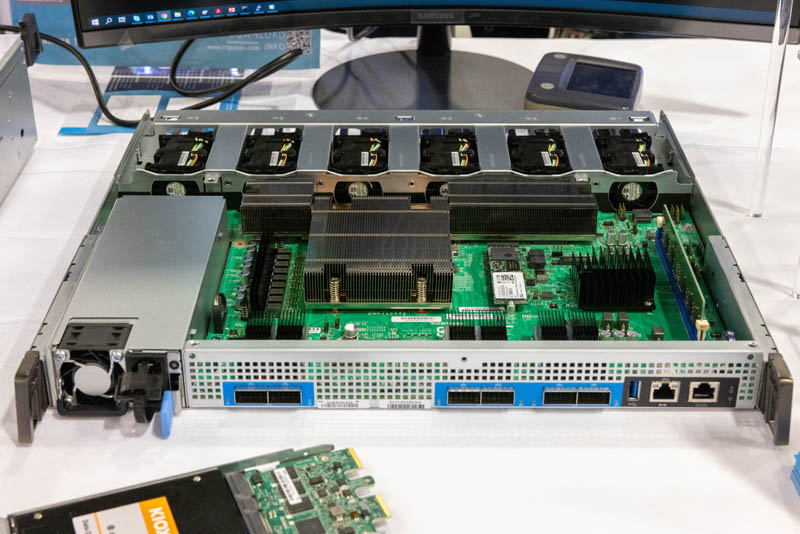

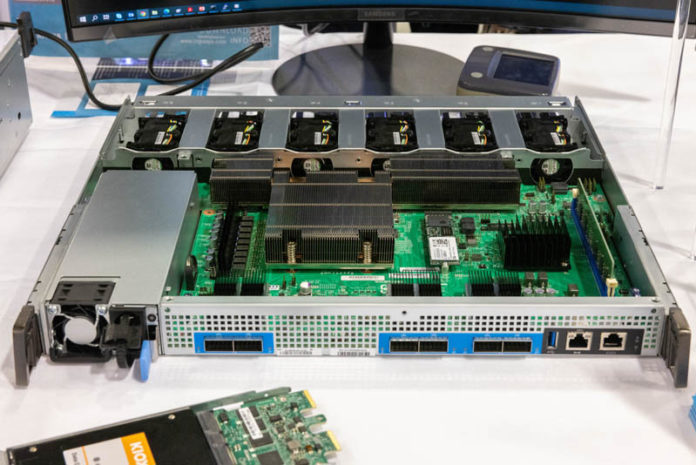

First is the new switch sled. This version is called the Ingrasys ES2100. The main difference between the ES2100 shown at SC22 and the ES2000 we looked at earlier is that this version swaps the switch silicon from a Marvell 98EX5630 chip to an NVIDIA Spectrum-2 part. That swap allows the rear uplink port bandwidth to hit 200GbE/400GbE speeds.

The other change was around the drive sleds. We still have the Kioxia EM6, but we also now have an E3.S option with NVMe over TCP and a U.2 option with NVMe over RoCE.

The E3.S adapter is fun because Ingrasys is using the EDSFF connector(s) in its chassis anyway. Effectively, this adapter interfaces via NVMe on the drive side and via Ethernet/NVMe over TCP on the chassis side.
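To make the NVMe over TCP versus NVMe over RoCE distinction a bit more concrete, here is a minimal sketch of how a Linux host might address each kind of drive with the standard `nvme-cli` tool. This is not something Ingrasys showed. It assumes the drives are reachable as ordinary NVMe-oF targets, and the IP address and NQN are made-up placeholders. The helper name `nvme_connect_argv` is ours, and it only builds the command line rather than running it.

```python
import shlex

# Map the fabric names used above to the transport names nvme-cli expects.
# NVMe over RoCE is reached through the "rdma" transport.
TRANSPORTS = {"NVMe over TCP": "tcp", "NVMe over RoCE": "rdma"}


def nvme_connect_argv(fabric, address, nqn, port=4420):
    """Build (but do not run) an `nvme connect` command line.

    4420 is the conventional NVMe-oF port; a real target may use another.
    """
    transport = TRANSPORTS[fabric]
    return [
        "nvme", "connect",
        "--transport", transport,
        "--traddr", address,
        "--trsvcid", str(port),
        "--nqn", nqn,
    ]


if __name__ == "__main__":
    # Hypothetical address and NQN, for illustration only.
    for fabric in TRANSPORTS:
        argv = nvme_connect_argv(
            fabric, "192.0.2.10", "nqn.2014-08.org.example:drive0"
        )
        print(f"{fabric}: {shlex.join(argv)}")
```

From the host's point of view, the only difference between the two drive options in this sketch is the transport argument. Once connected, either one would show up as a regular NVMe block device.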

New drive options, higher speeds, and new switch silicon make this even more interesting.

Small Bonus: AMD EPYC 9004 Genoa

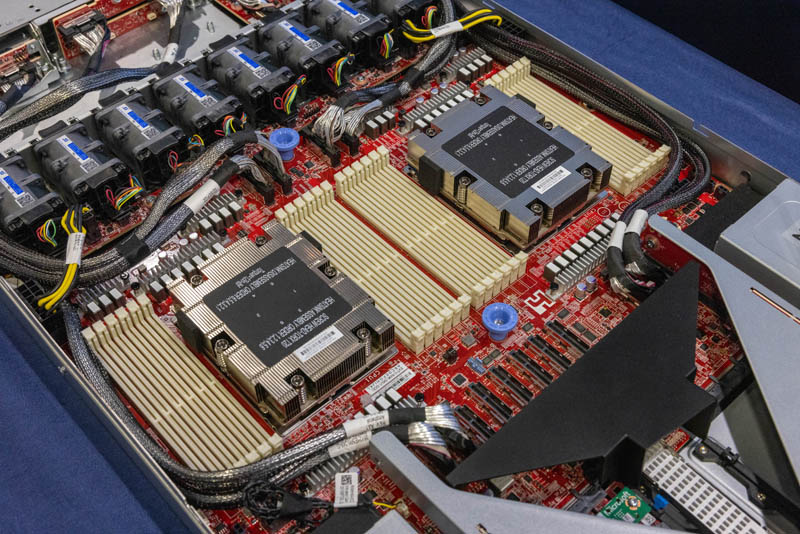

Since it is a theme of our SC22 coverage, we will note that Ingrasys also has an AMD EPYC 9004 Genoa solution.

This platform is either 1U (SV1120A) or 1OU (SV1121A) and is a 1DPC dual-socket design.

Final Words

Hopefully, this kind of solution takes off. Our sense is that the lower-end NVMe SSD to 25GbE adapter boards that we saw at FMS years ago would help. The Ingrasys solution is something that can be deployed today, but it feels like a case where a smaller, lower-cost option would get more folks interested. As we work with more DPUs, it is not hard to see how these technologies could be paired together in future infrastructure.

Update: We just published our video, which has more views of this solution.

This is good stuff. NVMeOF is a really useful thing. I’ve done comparisons of PCIe3 NVMe SSDs served via NVMeOF using older ConnectX-2 IB cards (QDR), better-quality Samsung SSDs, and a Linux kernel circa 4.20. Compared to locally attached SSDs, the NVMeOF method suffered from slightly degraded POSIX file open/close/create speed but was about as performant for reads and writes. When sharing SSDs via NVMeOF to servers doing Caffe training with a pair of 2080 Ti cards, the image rate was the same either way. Compared to NFS, even NFS over RDMA, NVMeOF was markedly superior.