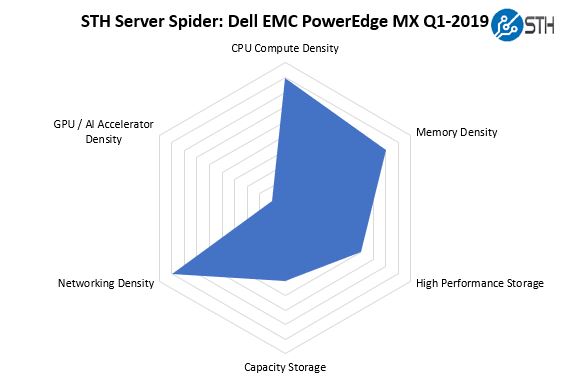

STH Server Spider: Dell EMC PowerEdge MX 2019

In the second half of 2018, we introduced the STH Server Spider as a quick reference to where a server system’s aptitude lies. Our goal is to start giving a quick visual depiction of the types of parameters that a server is targeted at.

With the ability to combine up to four switches, two SAS fabric modules, and either eight dual-socket nodes or four quad-socket nodes, the Dell EMC PowerEdge MX is indeed dense. One can optimize for higher density along a number of vectors, but the flexibility is where the Dell EMC PowerEdge MX shines. Please note, we are consciously giving a de minimis aptitude for GPU and FPGA compute to the PowerEdge MX in this STH Server Spider. Although we have a feeling Dell EMC will eventually launch GPU/FPGA nodes, they were not on the market at the time this review was published.

Final Words

After some hands-on time with the Dell EMC PowerEdge MX, delving into its management software layers, the current generation of hardware, and what this PowerEdge MX will enable in the future, we feel safe calling it the WowerEdge.

The PowerEdge MX is not the solution for everyone and every application. Dell EMC’s marketing makes the solution sound like the silver bullet that can cure all IT needs. Instead, it would be fair to call the PowerEdge MX a solid solution for a segment of the market that wants the integration, flexibility, and deployment ease of blade servers.

For buyers today, the Dell EMC PowerEdge MX has all of the basics one would expect for management and ease of maintenance. Everything is integrated into Dell OpenManage tools. Each node has the familiar iDRAC, which provides the security, access, and telemetry controls up and down the software stack. From a hardware perspective, everything is hot swap. Lacking a midplane means that the system is more serviceable and not prone to a single device failure requiring the entire chassis to go offline.

Where the PowerEdge MX shines is perhaps not in what it is today, but instead in what it enables in the future. Using a PowerEdge MX with longer-lasting chassis components means that the PowerEdge MX can immediately be the start of a green IT initiative at a company. A CIO can make a commitment to reduce IT e-waste by 10-15% and fulfill that commitment simply by deploying PowerEdge MX. The ability to re-use the chassis, fans, and power supplies, along with the no-midplane design, means that the PowerEdge MX is ready to take on the next generation of interconnect technologies, compute, and storage solutions.

If you are interested in the Dell EMC PowerEdge MX, we hope this review was helpful. These systems tend to be so large, and so complex, that most of the third-party information on them is either paid for by the vendor or is at best using survey response input for evaluation. Our independent Dell EMC PowerEdge MX review shows what this solution can do today, and the potential it has over its lifecycle. As a result of our hands-on process, we are awarding the Dell EMC PowerEdge MX our Editor’s Choice Award.

Ya’ll are on some next level reviews. I was expecting a paragraph or two and instead got 7 pages and a zillion pictures — ok I didn’t count.

I think Dell needs to release more modules. AMD EPYC support? I’m sure they can. Are they going to have Cascade Lake-AP? I’m sure Ice Lake right?

“we now call the PowerEdge MX the ‘WowerEdge.’”

Please don’t.

Can you guys do a piece on Gen-Z? I’d like to know more about that.

It’s funny. I’d seen the PowerEdge MX design, but I hadn’t seen how it works. The connector system has another profound impact you’re overlooking. There’s nothing stopping Dell from introducing a new edge connector in that footprint that can carry more data. It can also design motherboards with high-density x16 connectors and build in a PCIe 4.0 fabric next year.

Really thorough review @STH

I’d like to see a roadmap of at least 2019 fabric options. Infiniband? They’ll need that for parity with HPE. It’s early in the cycle and I’d want to see this review in a year.

Of course STH finds fabric modules that aren’t on the product page… I thought ya’ll were crazy, but then you have a picture of them. Only here

Give me EPYC blades, Dell!

Fabric B cannot be FC, it has to be fabric C, A and B are networking (FCOE) only.

“Each fabric can be different, for example, one can have fabric A be 25GbE while fabric B is fibre channel. Since the fabric cards are dual port, each fabric can have two I/O modules for redundancy (four in total.)”

what a GREAT REVIEW as usual patrick. Ofcourse, ONLY someone who has seen/used the cool HW that STH/pk over the years would give this system a 9.6! (and not a 10!) ha. Still my favorite STH review of all time is the dell 740xd.

btw, i think you may be surprised how many views/likes you would get on that raw 37min screen capture you made, posted to your sth youtube channel.

I know i for one would watch all 37min of it! Its a screen capture/video that many of us only dream of seeing/working on. Thanks again pk, 1st class as always.

you didnt mention that this design does not provide nearly the density of the m1000e. going from 16 blades in 10 ru to 8 blades in 7ru… to get 16 blades I would now be using 14 RU. Not to mention that 40GB links have been basically standard on the m1000e for what 8 years? and this is 25 as the default? Come ON!

Hey Salty you misunderstood that. The external uplinks have 4x25Gb / 100Gb at least for each connector. Fabric links have even 200Gb for each connector (most of them are these) on the switches. ATM this is the fastest shit out there.

How are the drives “hot-swappable” if the storage sleds have to be pulled out? Am I missing something?

Higher-end gear has internal cable management that allows one to pull out sleds and still maintain drive connectivity. On compute nodes, there are also traditional front hot-swap bays.

400Gbps/ 25Gbps = 8 is a mistake. When NIC with dual ports are used, only one uplink from MX7116n fabric extender is connected 8x25G = 200G. The second 2x100G is used only for Quad port NIC.

Good review, Patrick! I was just wondering if that Fabric C, the SAS sled that you have on the test unit, can be used to connect external devices such as a JBOD via SAS cables? It’s not clear on the datasheets and other information provided by Dell if that’s possible, and the Dell reps don’t seem to be very knowledgeable on this either. Thanks!

I don’t have more money. Can I use 1 switch MX508n and 1 switch MXG610s?