Today we have a quick tutorial on getting the NVIDIA BlueField-2 DPU running on Windows. We are using Windows 11 Pro here, but one could just as easily use Windows Server 2022 or another Windows OS. Finding drivers for former Mellanox products is harder than finding GeForce drivers, so we figured we would add a little guide to get people up and running. The awesome part is that when this is done, you will have a hardware Arm Linux system running inside your x86 Windows system.

How to Get NVIDIA BlueField-2 DPU Running on Windows 11 Pro

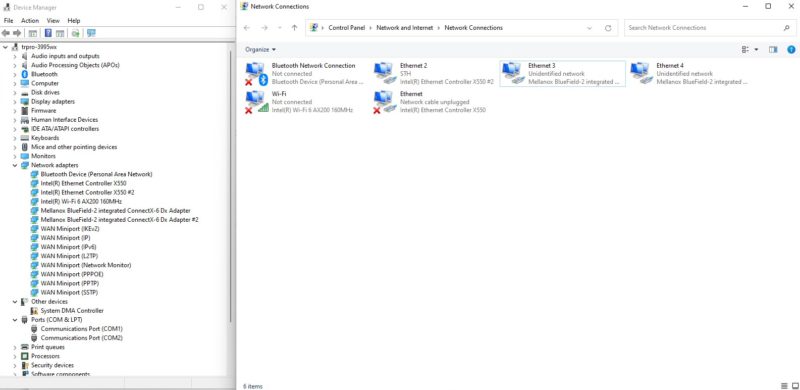

Our default Windows 11 Pro installation did not work with the BlueField-2 DPU out of the box. Luckily, we had another NIC. Once the installation was complete, updates were applied, and drivers were found over the lower-speed NIC, the BlueField-2 DPU was somewhat functional. The card’s ConnectX-6 Dx ports were recognized, but the RShim driver, which allows for easy communication between the host system and the DPU over the PCIe bus, was not working.

One can still get to the card using the out-of-band management port, but we wanted the full experience.

At this point, we had the 100GbE connections and could see the card’s ports in Device Manager, but the BlueField management adapter was not showing up.

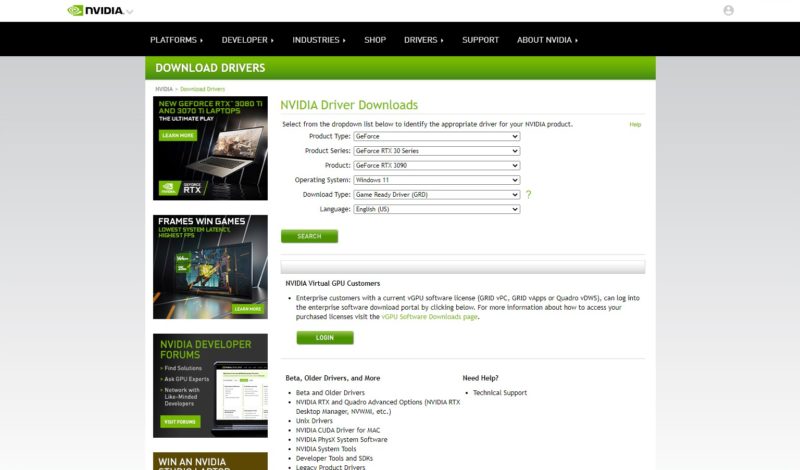

NVIDIA’s site was not a lot of help on this one. If you click on “Drivers” at the top of NVIDIA’s website, you go to the GeForce GPU driver downloads. It is interesting that NVIDIA is becoming a data center-focused company, yet the drivers for its server products are hidden.

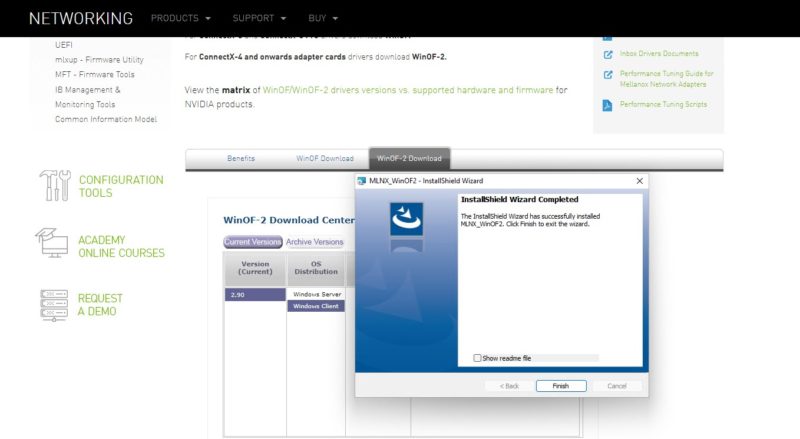

Instead, you need to get WinOF-2. The name may sound familiar: WinOF is the driver package for ConnectX-3 and earlier adapters, while WinOF-2 is for ConnectX-4 and newer adapters. To save you some time searching, here is the current page at NVIDIA as of this writing.

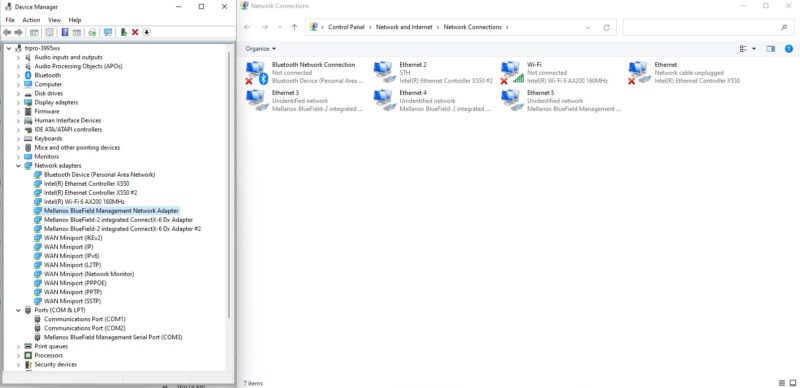

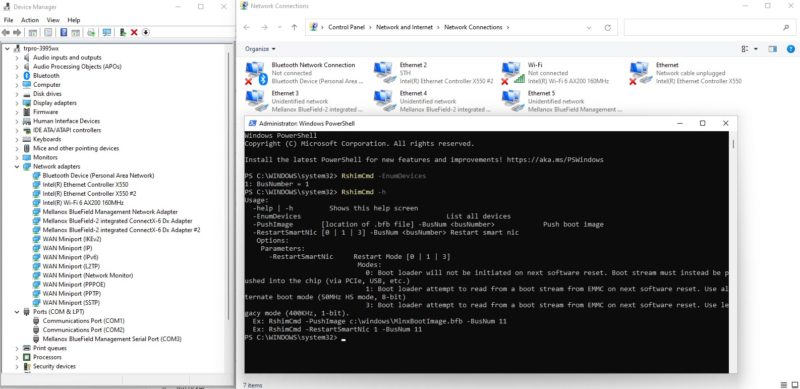

Once WinOF-2 is installed, the Mellanox BlueField Management Network Adapter shows up in Device Manager, and as Ethernet 5 below. A COM3 port is also added as the Mellanox BlueField Management Serial Port. The “System DMA controller” that showed up after the automatic Windows 11 driver installation now has a proper driver.

With this complete, we can use the RShim tools to communicate with the DPU, for example to push images to the onboard Arm CPU or to restart the DPU.

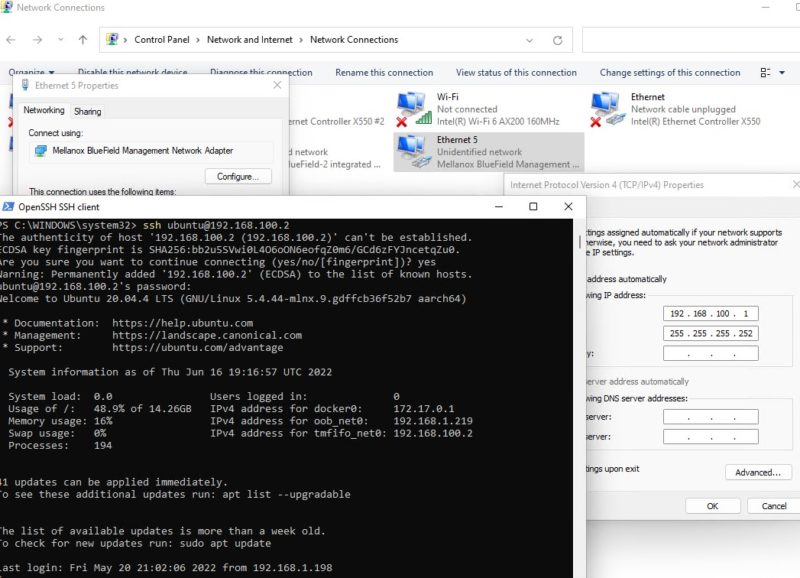

Now that that is done, you can also set up Ethernet 5 to connect to the DPU’s Arm CPU complex. First, set the IP address of Ethernet 5 to 192.168.100.1. The BlueField-2 DPU defaults to 192.168.100.2 on its side of this interface. After this is set, you can ssh into the DPU directly.
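As a minimal sketch of these host-side steps, the Python below builds the two commands rather than running them. It assumes the adapter is still named `Ethernet 5`, uses Windows’ built-in `netsh` to set a static address with a 255.255.255.0 mask (our assumption; any mask that covers both addresses works), and assumes the DPU’s Ubuntu image accepts logins as `ubuntu`, which may differ on your image. The helper name `tmfifo_host_commands` is hypothetical.

```python
import ipaddress


def tmfifo_host_commands(
    interface: str = "Ethernet 5",    # name Windows gave the BlueField management adapter in our setup
    host_ip: str = "192.168.100.1",   # address we assign on the Windows side
    dpu_ip: str = "192.168.100.2",    # the DPU's default address on its side of the link
    mask: str = "255.255.255.0",      # assumption: any mask that covers both addresses works
    user: str = "ubuntu",             # assumption: default login on the DPU's Ubuntu image
) -> list[str]:
    """Build (but do not run) the Windows commands needed to reach the DPU's Arm cores."""
    host = ipaddress.IPv4Address(host_ip)
    dpu = ipaddress.IPv4Address(dpu_ip)
    # strict=False lets us derive the network from a host address plus mask.
    network = ipaddress.IPv4Network(f"{host_ip}/{mask}", strict=False)
    if host == dpu:
        raise ValueError("host and DPU must use different addresses")
    if dpu not in network:
        raise ValueError(f"{dpu} is outside {network}; ssh would need a router")
    set_ip = (
        f'netsh interface ipv4 set address name="{interface}" '
        f"source=static address={host} mask={mask}"
    )
    ssh = f"ssh {user}@{dpu}"
    return [set_ip, ssh]


if __name__ == "__main__":
    # Print the commands; run the netsh line from an elevated (Administrator) prompt.
    for command in tmfifo_host_commands():
        print(command)
```

Running the script prints the `netsh` line followed by `ssh ubuntu@192.168.100.2`. The subnet check catches a mistyped address before you are left waiting on an ssh timeout.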

Once logged into the DPU, we can see that it is at 192.168.100.2 on the tmfifo_net0 interface. We could instead connect through an external address on the out-of-band management network.
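To run the same check from a script on the DPU side, a minimal sketch is to ask iproute2 for JSON output (`ip -j addr show dev tmfifo_net0`) and pull out the IPv4 entries. This assumes the DPU’s Linux image ships an iproute2 recent enough to support `-j`; the helper names `ipv4_addresses` and `tmfifo_addresses` are our own.

```python
import json
import subprocess


def ipv4_addresses(ip_json: str, ifname: str = "tmfifo_net0") -> list[str]:
    """Return 'address/prefix' strings for the IPv4 entries `ip -j addr` reports on ifname."""
    found = []
    for link in json.loads(ip_json):
        if link.get("ifname") != ifname:
            continue
        for addr in link.get("addr_info", []):
            # family is "inet" for IPv4 and "inet6" for IPv6 in iproute2's JSON output
            if addr.get("family") == "inet":
                found.append(f"{addr['local']}/{addr['prefixlen']}")
    return found


def tmfifo_addresses() -> list[str]:
    """Query the live interface; run this on the DPU's Arm cores, not on the Windows host."""
    result = subprocess.run(
        ["ip", "-j", "addr", "show", "dev", "tmfifo_net0"],
        capture_output=True, text=True, check=True,
    )
    return ipv4_addresses(result.stdout)
```

On the DPU, `tmfifo_addresses()` should return an entry beginning with 192.168.100.2. Keeping the parsing in `ipv4_addresses` separate from the subprocess call makes it easy to check against saved output.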

Final Words

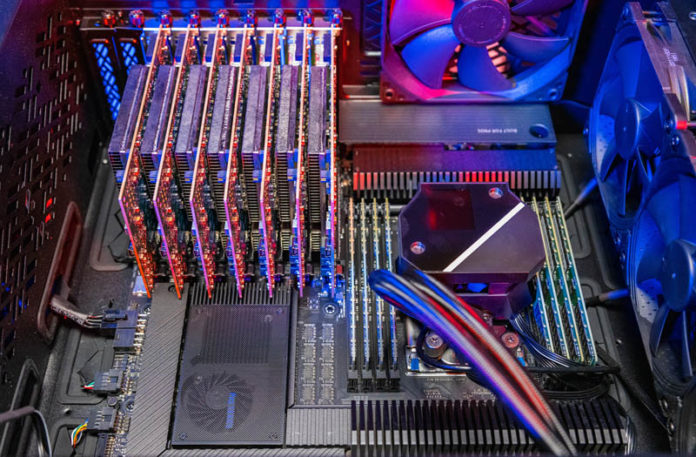

After you do this, you will have a functioning BlueField-2 DPU ready to run SNAP, do RDMA offloads, run OVS, and so forth. What is more, you have created something special. In your Windows x86 server, you not only have a dual 100GbE interface, you also have Ubuntu Linux running bare metal on Arm. This is the same system from our Building the Ultimate x86 and Arm Cluster-in-a-Box piece, just with a single DPU.

If you want to learn more about how this is an independent system, see our ZFS on the NVIDIA BlueField-2 DPU piece.

Not only have you installed a BlueField-2 DPU on Windows at this point, but you have created a “holy grail” configuration mixing Windows, Linux, x86 and Arm, and that does not include the ASPEED BMC in our test system.

As a quick disclaimer: If you want to build something like this, use the lower-power E-series DPUs, and remember to push plenty of airflow over the DPU. Even though people equate Arm with low power, the reality is that the BlueField-2 DPU needs significant airflow and many consumer-level cases cannot provide enough.
