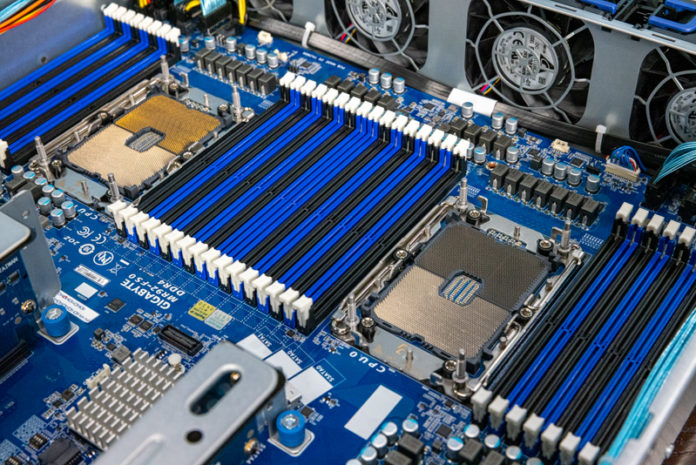

The Gigabyte R282-N80 is a 2U dual-socket Intel Xeon server. It uses 3rd generation Intel Xeon Scalable processors, codenamed “Ice Lake,” to offer a more modern platform. With this generation, Intel increased core counts (and TDP) while also significantly increasing memory and PCIe I/O. All three of these changes have major impacts on server design, so we wanted to explore them in this review.

Gigabyte R282-N80 Hardware Overview

As has become customary, we are splitting our hardware overview into an external and an internal overview. As our reviews have gotten longer, this format helps highlight both what those in the data center will experience when servicing the system from the hot and cold aisles and what is inside powering the server. There are a few “flex” areas that we move between the internal and external overviews based on how long each section is. Let us get to it.

Gigabyte R282-N80 External Overview

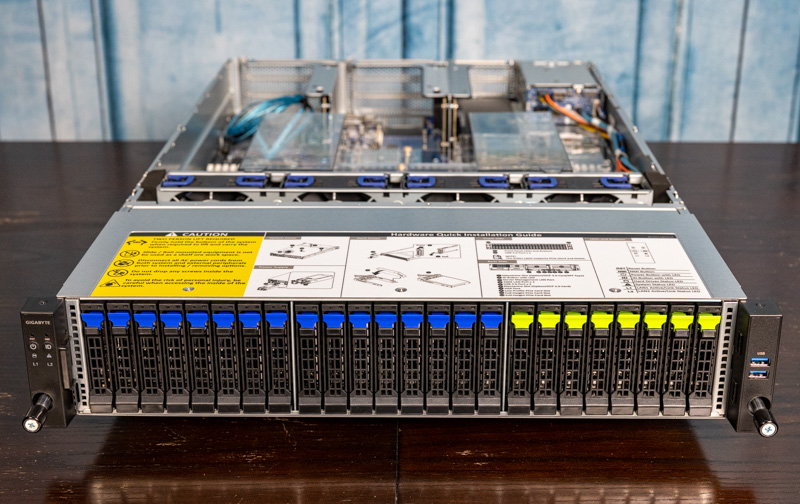

The front of the server is a fairly standard 2U design. Of the 26 2.5″ hot-swap bays, 24 are located on the front of the chassis. More on the other two in a bit. There are 16x 2.5″ SATA/SAS bays as well as 8x SAS/SATA/NVMe drive bays. The NVMe bays are wired for PCIe Gen4 connectivity. Having this many hot-swap NVMe bays alongside the rich PCIe riser I/O is new for this generation. The eight NVMe bays not only get a speed upgrade to PCIe Gen4, but they also take up only 25% of the platform’s PCIe lanes, whereas they would have taken up 33% in the previous generation. That means more PCIe lanes are available for riser I/O.
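The 25% versus 33% figures can be reproduced with a quick lane-budget calculation. This is only a sketch: it assumes each NVMe bay uses a x4 link, and that a dual-socket system exposes 64 PCIe lanes per CPU on Ice Lake versus 48 per CPU on the prior generation. The names below are ours, not Gigabyte's.

```python
# Rough PCIe lane budget for the eight front NVMe bays.
# Assumptions (not stated in the review): x4 per NVMe bay,
# 64 lanes/CPU on Ice Lake, 48 lanes/CPU on the previous generation.
NVME_BAYS = 8
LANES_PER_NVME = 4
SOCKETS = 2
LANES_PER_CPU = {"ice_lake": 64, "previous_gen": 48}


def nvme_lane_share(lanes_per_cpu: int, sockets: int = SOCKETS) -> float:
    """Fraction of the platform's CPU PCIe lanes used by the NVMe bays."""
    used = NVME_BAYS * LANES_PER_NVME
    total = lanes_per_cpu * sockets
    return used / total


for generation, lanes in LANES_PER_CPU.items():
    print(f"{generation}: {nvme_lane_share(lanes):.0%} of lanes used by NVMe bays")
```

Under those assumptions the same 32 lanes of NVMe connectivity drop from a third of the platform's lanes to a quarter.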

We will quickly note that along with the normal power/ID buttons, LED status lights, and the USB 3 Type-A ports on the front of the chassis, there are airflow vents on either side of the drives. These help provide clean, cooler airflow to the rest of the system and are a consequence of designing for higher TDP components inside the server.

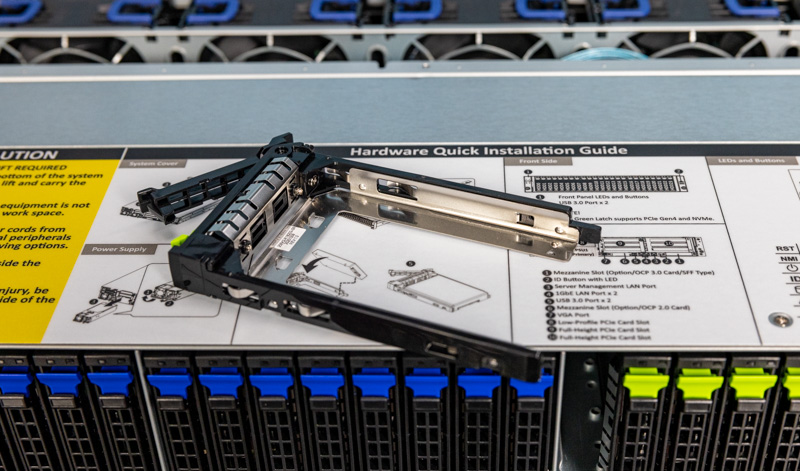

We wanted to quickly show the drive trays as well. These are tool-less drive trays. One simply snaps drives into the trays and they are ready to go.

Something else that is less common on servers of this class is how the SATA/ SAS bays are handled. There is an onboard 12G SAS3 expander that sits between the drive backplane and the fans.

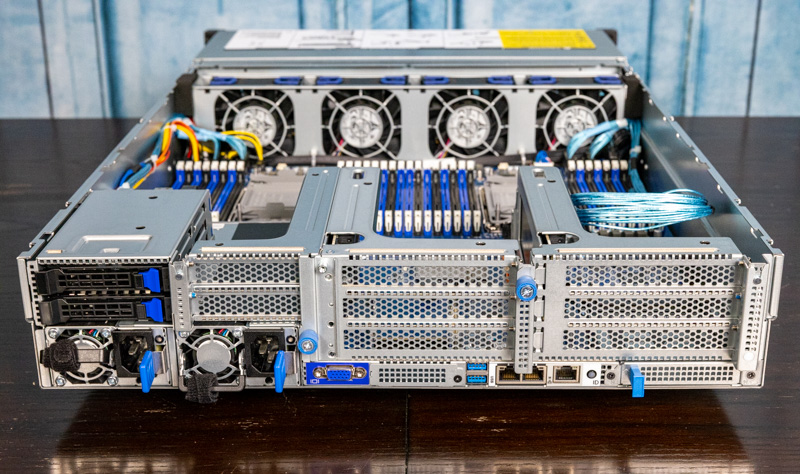

The rear of the unit has the additional two hot-swap bays along with all of the I/O and power supply.

Starting with the left of that photo, we have two 2.5″ drive trays. One can see the connectivity here: these bays are wired for SATA, but the backplane supports NVMe connectivity with the appropriate cabling.

There are two power supplies. These are 1.6kW (at 240V) 80 PLUS Platinum power supplies. On 110-120V the power supplies only operate at 1kW, so there is a big delta in power delivery between the two voltage ranges. That derating is common, so we suggest that our readers use 208V and higher racks in new installations.
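To put a number on that delta, here is a small sketch using only the two ratings quoted above. The variable names are illustrative, and the calculation says nothing about redundancy modes or actual system draw.

```python
# Per-PSU output ratings quoted in the review, in watts.
RATED_WATTS_HIGH_LINE = 1600  # at 240V
RATED_WATTS_LOW_LINE = 1000   # at 110-120V

lost_watts = RATED_WATTS_HIGH_LINE - RATED_WATTS_LOW_LINE
lost_fraction = lost_watts / RATED_WATTS_HIGH_LINE

print(f"Low-line operation gives up {lost_watts}W per PSU "
      f"({lost_fraction:.1%} of the high-line rating)")
```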

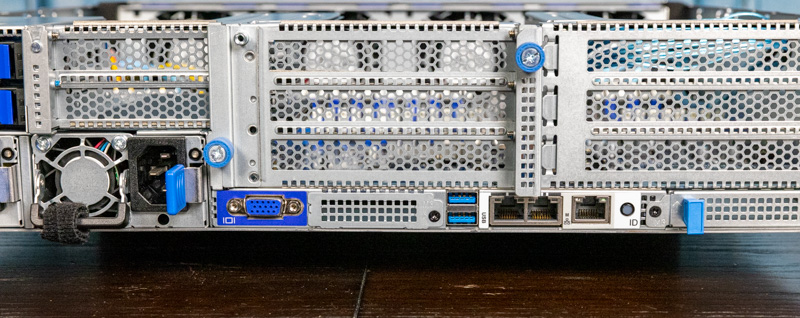

On the bottom center of the rear of the server, we get the I/O. Here we get a VGA port, two USB 3 Type-A ports, two 1GbE ports, and an out-of-band management port. The onboard networking is provided by an Intel i350-AM2 NIC. This is a lower-end solution, but that is because Gigabyte is planning on high-speed networking being added elsewhere, including in the gap between the VGA and USB ports.

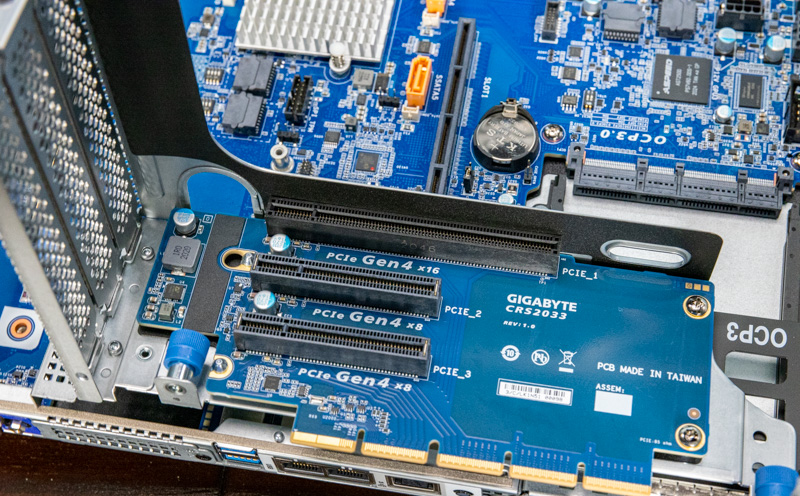

There are a number of PCIe risers at the back of the system. We are not getting to the flexible parts on the bottom plane, but the main set of risers comes in three sections.

The first has a PCIe Gen4 x16 slot and two x8 slots.

The middle section has two Gen4 x16 and one x8 slot. This is a bit different since there are 32x PCIe Gen4 lanes feeding this riser but 40 lanes of slots. Also, eight of those lanes are switched with the OCP NIC 2.0 slot. We are going to have a diagram of how this works in our block diagram section after the internal overview.
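The mismatch on the middle riser is easy to tally. The sketch below uses only the slot widths and the 32-lane feed described above; how the eight shared lanes are actually routed between the riser and the OCP slot is not modeled here, and the names are ours.

```python
# Middle riser: slot widths versus the lanes feeding the riser.
MIDDLE_RISER_SLOT_WIDTHS = [16, 16, 8]  # two x16 slots and one x8 slot
UPSTREAM_LANES = 32                     # PCIe Gen4 lanes feeding the riser

slot_lanes = sum(MIDDLE_RISER_SLOT_WIDTHS)
shortfall = slot_lanes - UPSTREAM_LANES

print(f"{slot_lanes} lanes of slots on {UPSTREAM_LANES} upstream lanes "
      f"({shortfall} lanes short of fully populating every slot)")
```

In other words, not every slot on this riser can run at its full physical width at the same time.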

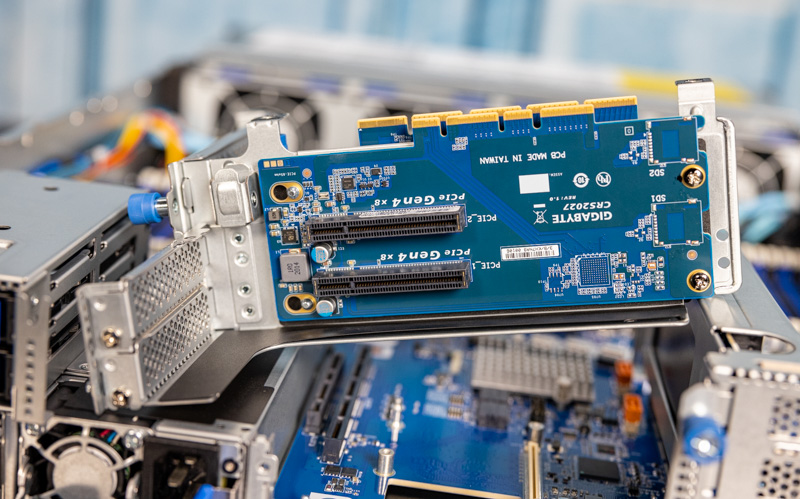

The third has two low-profile x8 slots that sit over the power supply. These are low profile partly to fit the two 2.5″ rear SSD bays.

That was a bit of the internal overview that we moved to the external portion to even the sections out, but overall this is a fairly standard 2U platform.

Next, let us get inside the chassis to see what is powering the server.

It’s interesting to see that passive risers are still possible with Gen 4. Not much of that to see in the consumer space.

I’m guessing they’ve only managed it because they’re using an exotic, low loss, perfect impedance (surface mount by the looks of it?) connector between the riser and the motherboard.