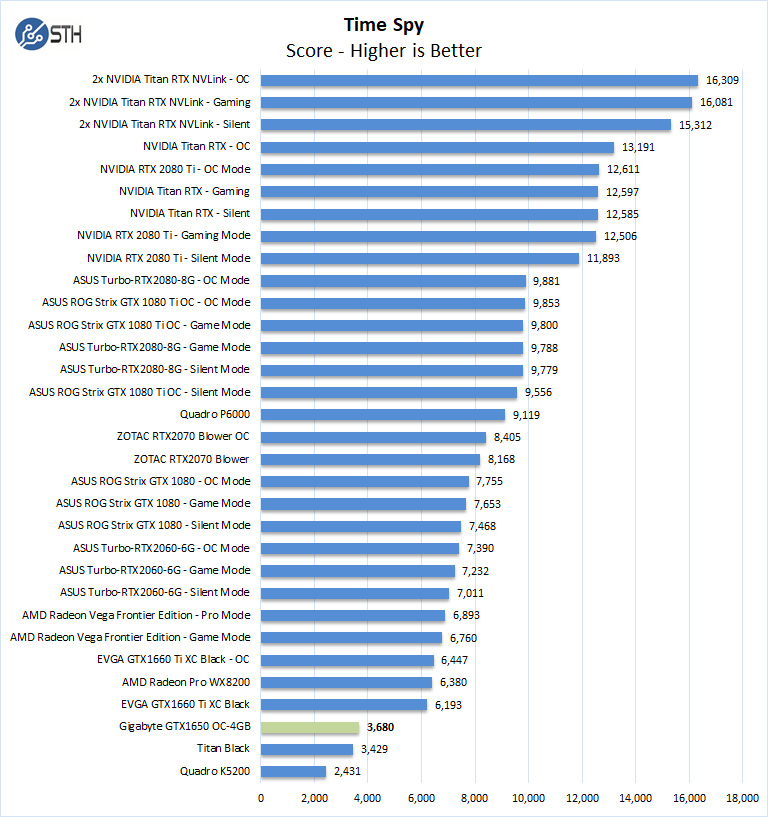

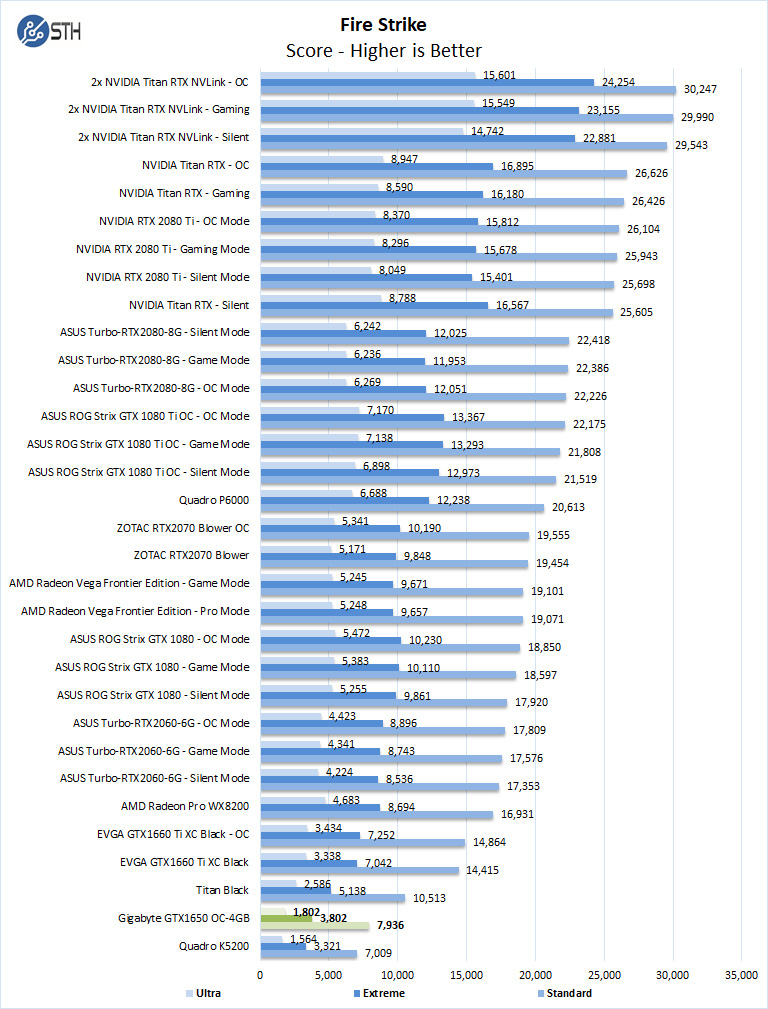

Gigabyte GeForce GTX 1650 OC 3DMark Suite Testing

Here we will run the Gigabyte GeForce GTX 1650 OC through graphics-related benchmarks. At the end of the day, this is still a GPU meant for 3D applications. Those 3D applications have traditionally been games; however, that market is expanding to training tools, demos, and other professional applications.

Here are the 3DMark suite results. We will discuss them after the charts.

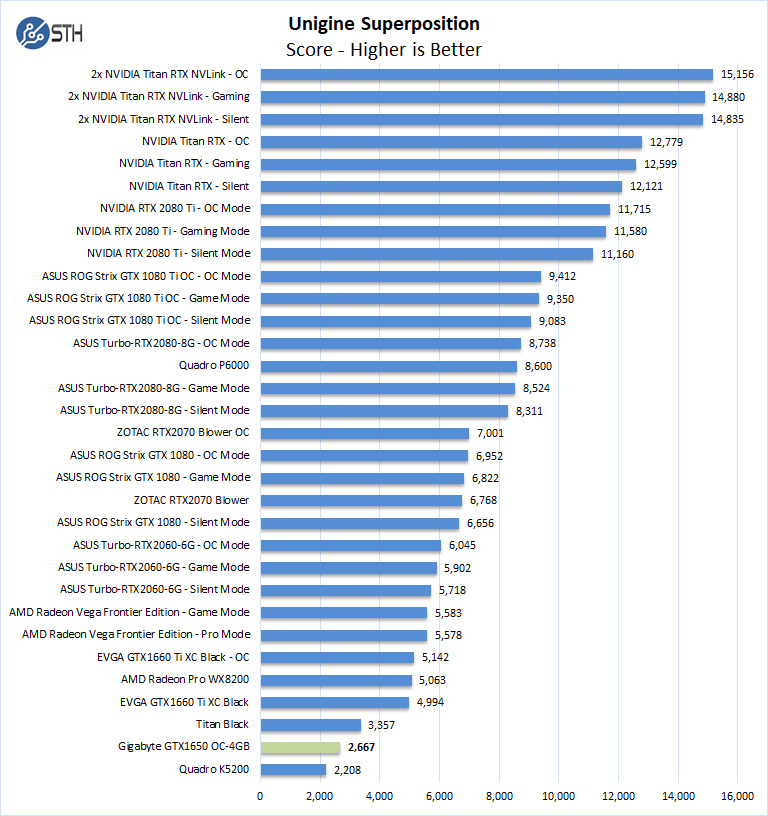

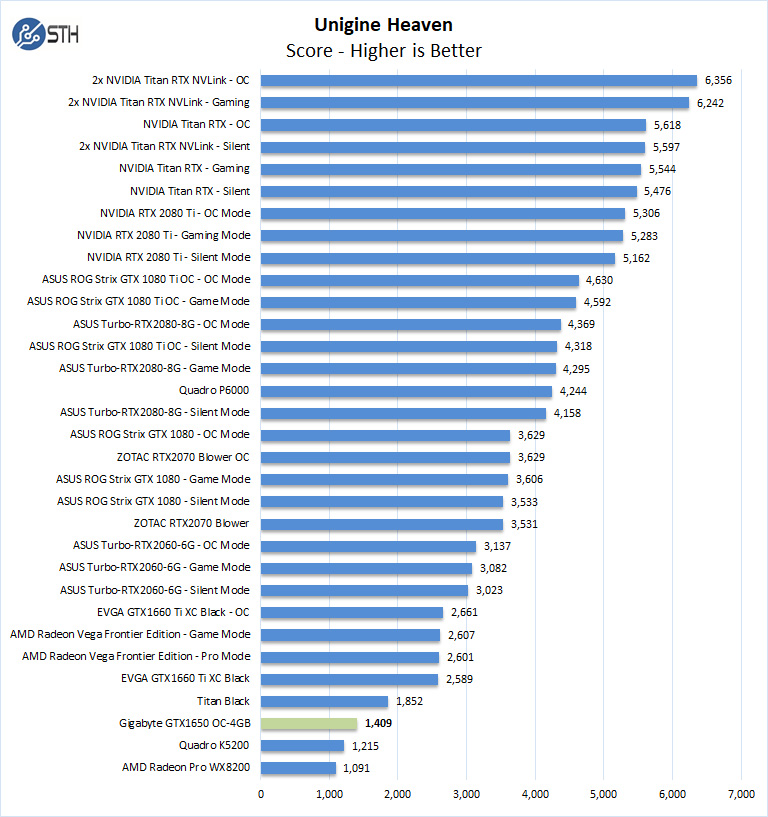

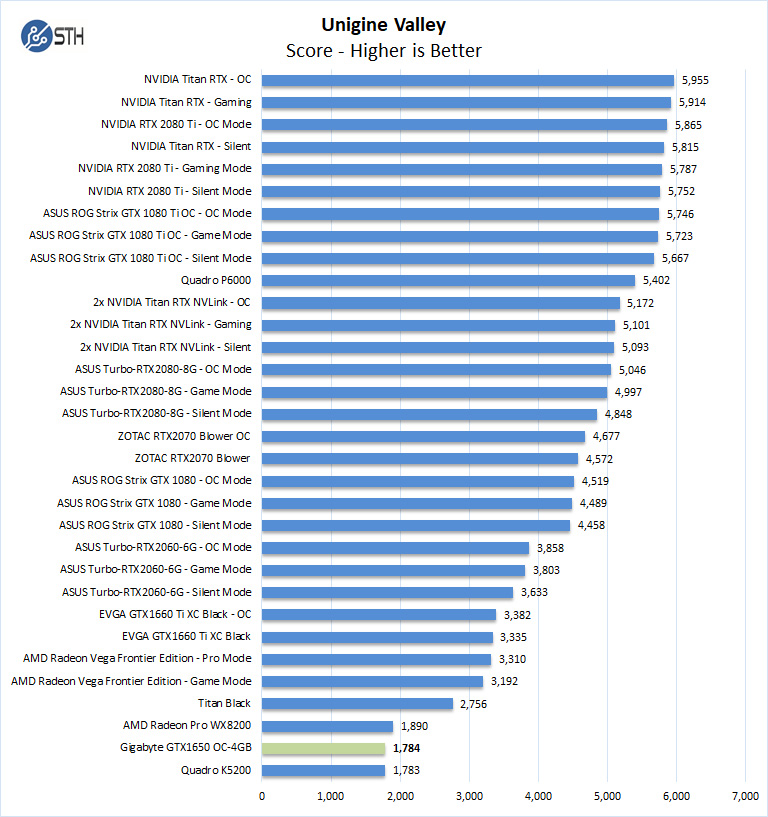

Gigabyte GTX 1650 OC Unigine Testing

Overall, we see entry-level performance from the Gigabyte GeForce GTX 1650 OC. This is to be expected in the $150 price segment.

Next, we are going to look at the Gigabyte GeForce GTX 1650 OC power and temperature tests, and then give our final words.

comptue is used twice on page 6.

Performance might seem on par with other NVIDIA models, but if you compare this by price to, let's say, an RX 570 or RX 580, it's very bad value for money.

You included too much worthless information and not enough practical information. Where are the 1060 and 1070? Who gives a shit about how it stacks up against a Titan RTX x2? Get real.

I think this is one of NVIDIA’s most useless cards ever.

First the memory leaks, now the patent issue with Xperi Corp. These are hard times for mister “the more you buy, the more you save”. Linus Torvalds will laugh his head off.

OVERALL SCORE 9.1?

Value 9.3?

Are you out of your mind?

How about a comparison with the cheaper and more powerful RX 570?

I think people are being a little unfair here – or perhaps unrealistic – bearing in mind the target audience of the site is home servers, which may not have a PSU that supports extra power.

Yes, the price is high for the performance on an absolute scale. But that does not recognize that you simply *cannot get* a 75W PCIe-only powered card that matches it from AMD. No RX 580 or RX 570 here – no, you will be stuck with the RX 560 (or RX 460) and the same 4GB RAM, getting anywhere from two thirds to a half the performance, or maybe even less: https://www.anandtech.com/show/14270/the-nvidia-geforce-gtx-1650-review-feat-zotac

The WX8200, that meets the 1650 in some benchmarks, and does twice as well in others, uses *three* times the power – a TDP of 230W.

Absolutely, wait for Navi to see if it can do better. I hope it does, because I want to use a card with good open-source drivers. But if you can’t – if you need 75W, no more, now – this may be the best there is; and it’s priced accordingly.

This was clearly written by someone who doesn’t know anything about computer hardware or gpus.

We’ve been using these in many of our servers exactly for what you describe. We’re using them with Xeon D Supermicro boards powered off of the motherboard in 1U systems. It looks like someone here has linked and brought the gaming kids here. NVIDIA’s still where it’s at if you want inferencing at the edge. Anyone that thinks AMD’s in the game today against CUDA isn’t in the space. Maybe that’ll change, but NVIDIA’s easy and it works; with AMD there’s always troubleshooting.

@Paul I can tell you it’s useful to us. We buy a spectrum of GPUs for our customers. Having this info top to bottom is handy both for us and for our customers.

Good review. I’d like to see a blower-style 1650 review since that’s the sweet spot in the market.

Most of the comments here seem to have been made by those who own a giant rig and a powerful PSU: gamers. For those of us who prefer a system with a small physical footprint and low power consumption, while still being able to play at 1080p competently, this card is basically it for now until the fabled RX 3060 comes out. The comparison between this and the RX 570 simply isn’t fair due to the huge discrepancy in power consumption. I do love AMD and support them when I can to create competition to Intel and NVIDIA, but it’s been a known fact that they compensate for a lack of finesse with raw, massive heat and power consumption, which could cost end users hundreds if not thousands of dollars per year, and is horrible for the environment.

Hi, Thank you for providing such valuable information. In my opinion, the GeForce GTX 1650 is an excellent Graphics Card. It is in the best interests of everyone….