AMD Instinct MI300X at SC23

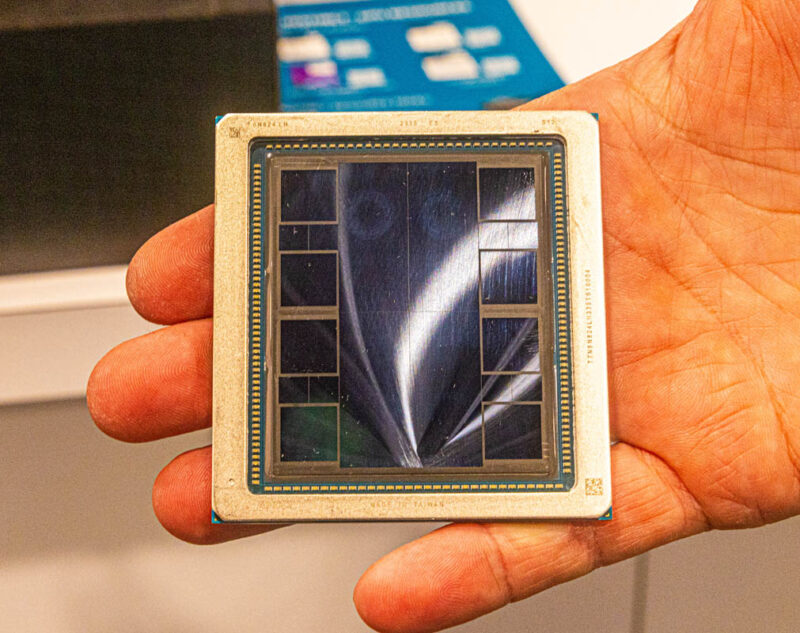

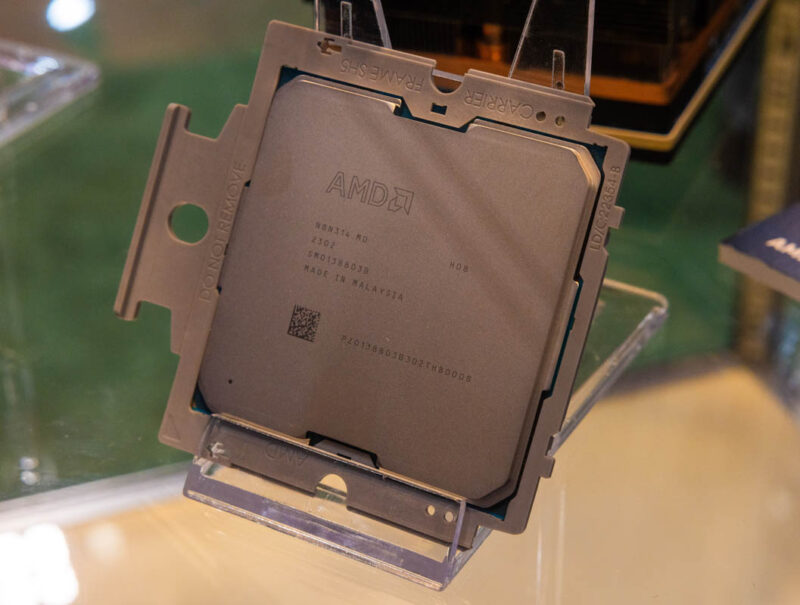

At SC23, we saw the AMD Instinct MI300X. The package uses four main compute tiles, eight HBM stacks, and more. This handheld photo shows some of the outlines between the four middle compute tiles.

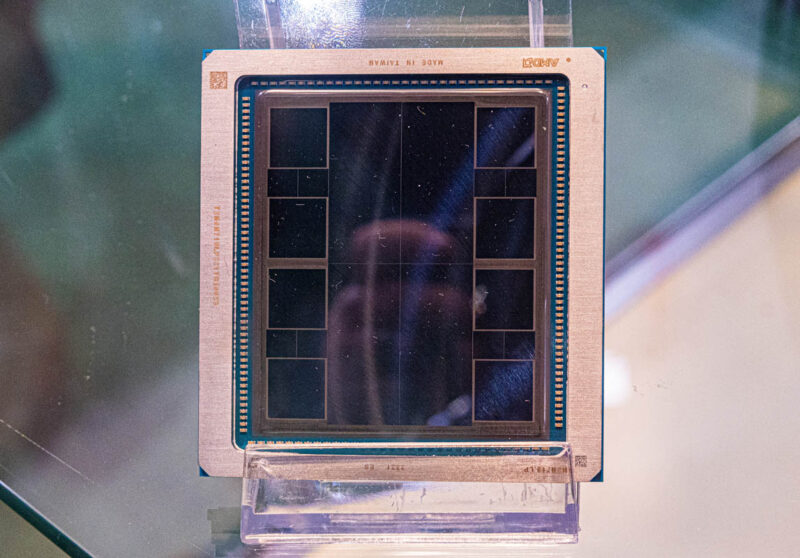

We also took one in a case where the tiles are clearly visible. This is not the same package as the MI300A because it is designed to be packaged into OAM modules for dense GPU compute.

If you prefer, there is also a selfie version of the MI300X photo.

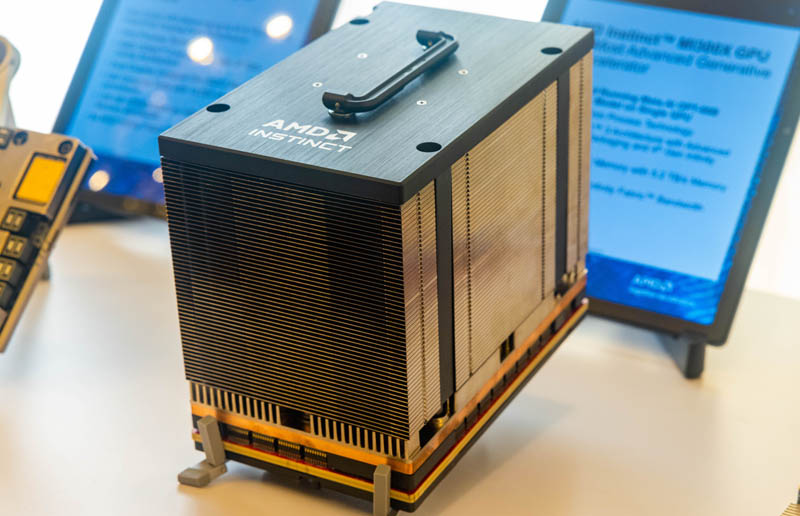

The OAM module is the industry’s answer to NVIDIA’s proprietary SXM5. It is used not just by AMD, but also by Intel, several startups, and some domestic Chinese AI accelerator companies.

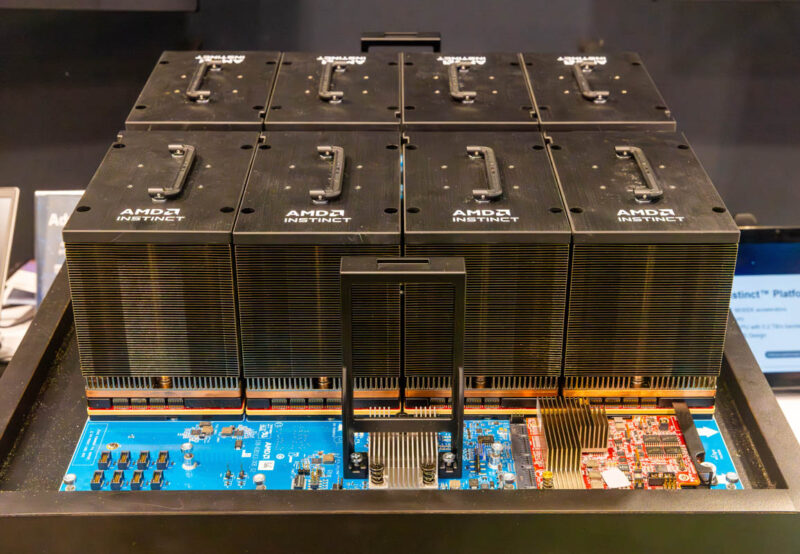

Eight of these modules fit into a standard OAM UBB.

We saw these on the show floor.

From here, these assemblies are attached to servers. These days, high-end accelerator servers are built to handle 700W-1kW accelerators, so many good designs can swap between OAM UBB and NVIDIA Delta-Next boards. One small difference usually comes with accelerators like Intel Gaudi2, which have built-in networking that requires a different faceplate.
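To put those figures together, here is a quick back-of-the-envelope sketch of the accelerator power a single baseboard implies. It assumes only the numbers mentioned above (eight modules per UBB, 700W-1kW per accelerator); the function and constant names are made up for illustration, and the result covers the accelerators alone, not CPUs, memory, NICs, fans, or power conversion losses.

```python
# Rough accelerator power per UBB, using only the figures quoted in the article.
# Assumptions: 8 OAM modules per baseboard, 700 W to 1000 W per accelerator.
MODULES_PER_UBB = 8
ACCEL_POWER_RANGE_W = (700, 1000)


def ubb_accelerator_power_kw(modules=MODULES_PER_UBB, per_accel_w=ACCEL_POWER_RANGE_W):
    """Return the (low, high) accelerator-only power per baseboard in kW."""
    low_w, high_w = per_accel_w
    return modules * low_w / 1000, modules * high_w / 1000


low_kw, high_kw = ubb_accelerator_power_kw()
print(f"Accelerators alone: {low_kw:.1f} kW to {high_kw:.1f} kW per UBB")
```

That range is only for the eight accelerators, which helps explain why chassis for these boards are designed around power and cooling first.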

If you want to see more accelerated systems and some of the designs, we recently looked at a number of them in our "Touring the Intel AI Playground – Inside the Intel Developer Cloud" piece, or you can see AI system reviews on the STH main site going as far back as 2016.

Final Words

Between the Microsoft MI300X AI deal announced at Ignite this week and the MI300A in El Capitan, it seems like AMD is gaining more traction in the high-end accelerator market as folks hunt for NVIDIA alternatives.

It was also fun seeing the MI300A in its carrier and socket at the show. We should have more on the MI300 family at the company’s AI event in early December.

What is that funky-looking power delivery solution? Haven’t seen anything like that before.

Those look for all the world like BMR510 stages. Integrated power stages have become very popular in PoL DC-DC converters over the past several years.
