Perhaps one of the most exciting things at SC22 was a new platform for Dell. The Dell PowerEdge XE9680 finally allows the company to offer a legitimate high-end AI training platform to its customers. In a blue-lit corner of SC22, the huge system was on display for all to see.

Dell PowerEdge XE9680 8x NVIDIA H100 Drops EMC and Finally Covers AI

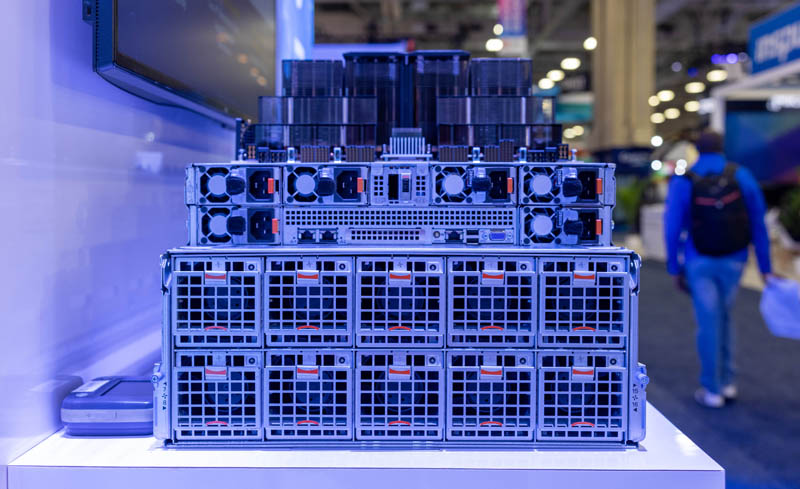

Here is the 6U server from Dell. One can see that we have the main server on the top of the chassis. This system has dual 4th Gen Intel Xeon Scalable processors and 16x DDR5 DIMMs per CPU for 32 total. One can also see the 10x front PCIe Gen5 slots.

This side view is interesting for two reasons. First, we can clearly see Dell continuing its design of offering what is essentially an integrated disaggregated chassis. Most other vendors in the market square off the chassis for this class of device. Dell shows that it has a server portion and a PCIe/accelerator portion by making the chassis depth different depending on which portion you are looking at. Dell has effectively taken a disaggregated server and an acceleration box and integrated the two. Given industry trends and the unusual chassis design, it is fun to see Dell do something different. At the same time, it is similar to the Dell EMC PowerEdge XE8545 we reviewed.

The other great thing that we see is that this is the “Dell PowerEdge XE9680” not the “Dell EMC PowerEdge XE9680.” Dell is finally, after six years, dropping the “EMC” from its naming convention.

The rear of the system is full of fans, from the 10x fan modules on the bottom to the 6x PSUs on the top of the chassis.

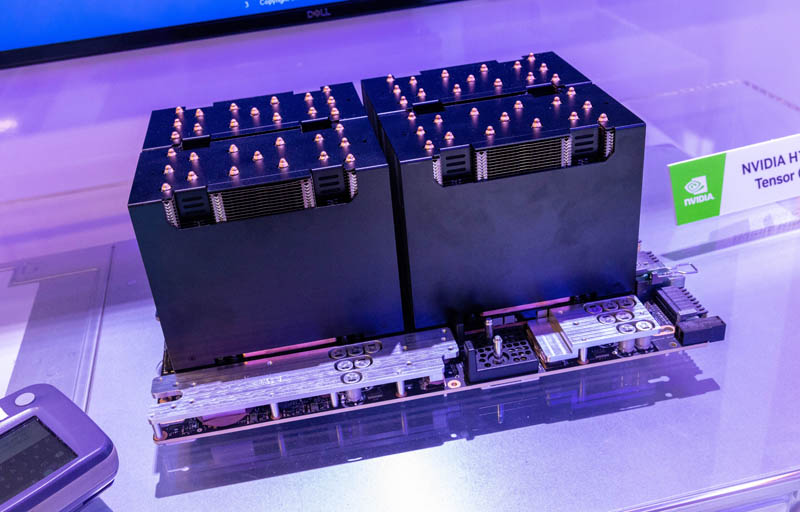

Atop the system is the 8x NVIDIA H100 board. In the previous generation A100 series, also supported by this platform, we called this the “Delta” platform while the 4x A100 assembly was the “Redstone” platform. In the H100 generation, these are now called Delta-Next and Redstone-Next. The XE9680 supports both Delta and Delta-Next using air cooling at up to 500W for the A100 and 700W for the H100.
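To put those air-cooling figures in perspective, here is a quick back-of-the-envelope sketch in Python. It only multiplies the per-GPU limits quoted above by the eight GPUs on the board. The helper name `board_gpu_power_w` is our own for illustration, and the result covers the GPUs alone, not the CPUs, memory, fans, or PSU losses, so it is not a system power specification.

```python
# GPU count and per-GPU power limits as quoted in the article above.
GPUS_PER_BOARD = 8
GPU_POWER_W = {"A100": 500, "H100": 700}


def board_gpu_power_w(gpu: str, count: int = GPUS_PER_BOARD) -> int:
    """Return the combined per-GPU power limit for one board, in watts.

    Hypothetical helper: GPUs only, everything else in the chassis is excluded.
    """
    return GPU_POWER_W[gpu] * count


for gpu in GPU_POWER_W:
    print(f"8x {gpu}: {board_gpu_power_w(gpu) / 1000:.1f} kW")
```

That works out to 4.0 kW for the 8x A100 board and 5.6 kW for the 8x H100 board, all of which the chassis has to move with air.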

Dell is going to continue supporting Redstone-Next, but it was great to see the Delta-Next H100 platform.

Final Words

This server may not seem like a huge deal to many of our readers. After all, we have reviewed several 8x A100 “Delta” platforms over the years and seen many H100 Delta-Next platforms already launched. The reason the PowerEdge XE9680 is such an important moment in the market is that Dell has finally decided it is time to compete in the high-end AI market. Along with NVIDIA’s enterprise software push, Dell can now sell additional software and support along with the AI training hardware to higher-margin customers. Many companies that utilize a lot of AI compute find that it is much less expensive to house clusters in a colocation facility than to use cloud resources. Now Dell’s customers do not need to buy Supermicro, NVIDIA DGX, or another brand of training server for AI workloads. They can instead just buy PowerEdge.

Most server vendors tell us that they are expecting the PCIe to OAM/SXM mix to be around 50-50 soon, so Dell needed this product line. It is great to see Dell finally start offering high-end AI training servers. Now we just want to see the company’s OAM platforms, since that is becoming an industry trend and companies like HPE, Lenovo, Inspur, and Supermicro are already selling OAM-based solutions.

If you want to see more, you can check out the Best of SC22 video here:

The Dell system is at 07:38.

“Most server vendors tell us that they are expecting the PCIe to OAM/SXM mix to be around 50-50 soon”. Curious to know the reason behind this fast ramp-up of OAM/SXM. Is performance the decisive factor? Honestly don’t expect new vendors to be a meaningful competitor for nVidia in this segment.