2nd Gen Intel Xeon Scalable Refresh SKU Analysis

Let us delve into the changes in each market segment. When we looked at what Intel did in each segment, we found slight variations. We are going to start with the Xeon Bronze addition and work our way up the stack.

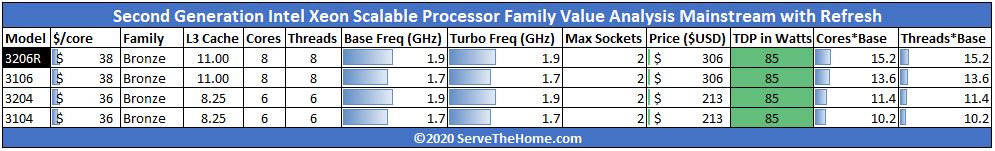

Intel Xeon Bronze 3200R Refresh Part

Intel finally added an 8-core Intel Xeon Bronze part back into the lineup. Here is the Intel Xeon Bronze family from its launch in 2017 to Q1 2020:

While the Intel Xeon Bronze 3206R may seem like something new, it is really bringing the 2nd Generation Intel Xeon Scalable line to parity with the first generation. The Xeon Bronze series is part of what we call the “light the platform” segment, where the goal is to use low-cost, low-power CPUs to make a minimal node for functionality such as lighting up PCIe lanes and memory channels to create a storage server. We discussed this concept in our A Look at 7 Years of Advancement Leading to the Xeon Bronze 3204 piece and our Intel Xeon Bronze 3204 review.

Until now, Intel’s 2nd Gen Xeon Scalable line only had the lowest-cost Bronze 3204, a 6-core part without Hyper-Threading, and omitted a successor to the Intel Xeon Bronze 3106. Now we have the Bronze 3106’s successor, the Bronze 3206R. This is a fairly consistent segment, so we should see about an 11% performance improvement over the previous-generation Bronze 3106 at the same $306 price point. It is also perhaps the most underwhelming addition to the lineup, albeit an important one for the low-end segment. These low-cost processors allow companies like HPE to sell “complete” systems (server, CPU, and RAM) to “Channel Cobblers” who then put their own components in the system. These parts likely have outsized importance in the industry relative to the performance they offer.

Perhaps more interesting is what happens when we move up the stack.

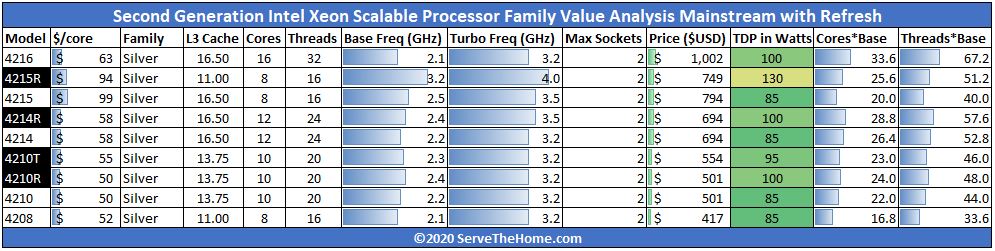

Intel Xeon Silver 4200R Refresh Parts

The Intel Xeon Silver 4200 series saw four new additions with the refresh.

Starting at the bottom, we see the Intel Xeon Silver 4210R, which gets a 200MHz base clock jump over the Intel Xeon Silver 4210. More importantly, it also gets a 15W TDP bump to 100W, so it can hold turbo frequencies longer. The Silver 4210T is an extended temperature part. We previously tested T series Intel Xeon Scalable CPUs to see if there was a performance impact and found none. Here, though, the Silver 4210T has a 95W TDP versus 85W for the Silver 4210, plus a 100MHz base clock jump, so we would expect some performance differences. That marks another change in this refresh.

The Intel Xeon Silver 4214R follows a similar pattern. We get a 200MHz base clock and 300MHz turbo (now up to 3.5GHz) bump along with a 15W TDP increase to 100W at the same price. This is going to yield a nice performance increase, but it is not going to be of the same magnitude as higher in the stack. Frankly, given what we saw in our 12-core AMD EPYC 7272 review, we think this part needs a bit more help, especially since the EPYC platform’s broader capabilities add flexibility for those who need I/O rather than compute.

The Intel Xeon Silver 4215R is a frequency-optimized part, but Intel made additional changes. This 8-core part’s base clock increases to 3.2GHz from 2.5GHz, a 28% increase. Turbo clocks jump 500MHz, now up to 4GHz, and the TDP adds 45W, moving from 85W to 130W. That is a roughly 53% TDP increase, which is a lot for lower-power segments. One other notable item here is that cache was slimmed from 16.5MB to 11MB, one of the only cases where this happens.
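A percentage increase is measured against the old value; dividing the 700MHz gain by the new 3.2GHz clock instead would understate it at about 22%. As a minimal sketch, here is the calculation in Python using only the Silver 4215 and Silver 4215R figures quoted above (the helper name `pct_change` is our own, purely illustrative):

```python
def pct_change(old: float, new: float) -> float:
    """Percentage change measured against the old (baseline) value."""
    return (new - old) / old * 100.0


# Silver 4215 -> Silver 4215R figures quoted in the text
base_clock_gain = pct_change(2.5, 3.2)  # base clock in GHz
tdp_gain = pct_change(85, 130)          # TDP in watts
# Dividing by the new value instead understates the clock gain
understated = (3.2 - 2.5) / 3.2 * 100.0

print(f"Base clock: +{base_clock_gain:.0f}%")  # Base clock: +28%
print(f"TDP: +{tdp_gain:.0f}%")                # TDP: +53%
print(f"Wrong baseline: {understated:.1f}%")   # Wrong baseline: 21.9%
```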

As we move past Xeon Silver, we get to Xeon Gold where the real refresh action happens.

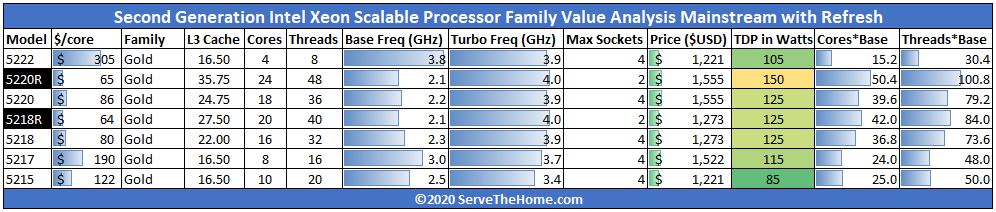

Intel Xeon Gold 5200R Refresh Parts

There are two new Intel Xeon Gold 5200R series SKUs, and they represent massive changes. The Xeon Gold 5200 series processors, with two UPI links, are better as value two-socket solutions than as four-socket solutions. Our discussion of four-socket capabilities largely does not apply to these parts, even though certain segments use the Gold 5200 in four-socket servers. Also, not all two-socket servers support 3x UPI links, so the Gold 5200 series is designed for the lower-cost and lower-power dual-socket server segment.

First, the Intel Xeon Gold 5218R has 20 cores, 25% more than the Intel Xeon Gold 5218. The base clock drops 200MHz, but the maximum Turbo Frequency rises 100MHz to 4.0GHz. TDP and pricing remain the same, while the cache scales with the core count.

The bigger impact is that the Gold 5218R now has the same core count, base clock, cache, and TDP as the Intel Xeon Gold 6230, plus a 100MHz higher turbo clock. Buyers give up four-socket scalability as well as 3x UPI in two-socket configurations, but get a $621 discount. Against the Gold 6230’s $1,894 list price, that effectively means Intel is giving a roughly 33% discount for this level of 20-core low-power performance.
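The discount can be checked from two numbers in this article: the Gold 6230’s $1,894 list price (quoted in the Gold 6230R discussion) and the $621 gap. A minimal Python sketch, assuming list prices only (street pricing will differ); `list_discount` is a hypothetical helper name:

```python
def list_discount(part_price: float, reference_price: float) -> float:
    """Percentage saved by paying part_price instead of reference_price."""
    return (reference_price - part_price) / reference_price * 100.0


gold_6230 = 1894               # Gold 6230 list price, USD
gold_5218r = gold_6230 - 621   # Gold 5218R is $621 cheaper at list

print(gold_5218r)                                       # 1273
print(f"{list_discount(gold_5218r, gold_6230):.1f}%")   # 32.8%
```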

The Intel Xeon Gold 5220R adds 33% more cores (24 v. 18) at the same $1,555 price as the Intel Xeon Gold 5220. Clock speeds are 100MHz lower at base but 100MHz higher on the Turbo side. The new chip also gets a 150W TDP, which is up 25W. Previously, if you wanted 24x Intel cores at 150W, you needed to go up to the Intel Xeon Gold 6252 at $3,665. That is a 57.6% discount for losing a UPI link and 4-socket capability.
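Because the Gold 5220R and Gold 6252 are both 24-core parts, the list-price discount and the price-per-core gap tell the same story. A quick Python sketch using the list prices quoted above (list prices only; actual deal pricing is not modeled):

```python
CORES = 24          # both the Gold 5220R and Gold 6252 have 24 cores
gold_5220r = 1555   # list price, USD
gold_6252 = 3665    # list price, USD

discount = (gold_6252 - gold_5220r) / gold_6252 * 100
print(f"Discount: {discount:.1f}%")  # Discount: 57.6%
print(f"USD per core: {gold_5220r / CORES:.2f} vs {gold_6252 / CORES:.2f}")
# USD per core: 64.79 vs 152.71
```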

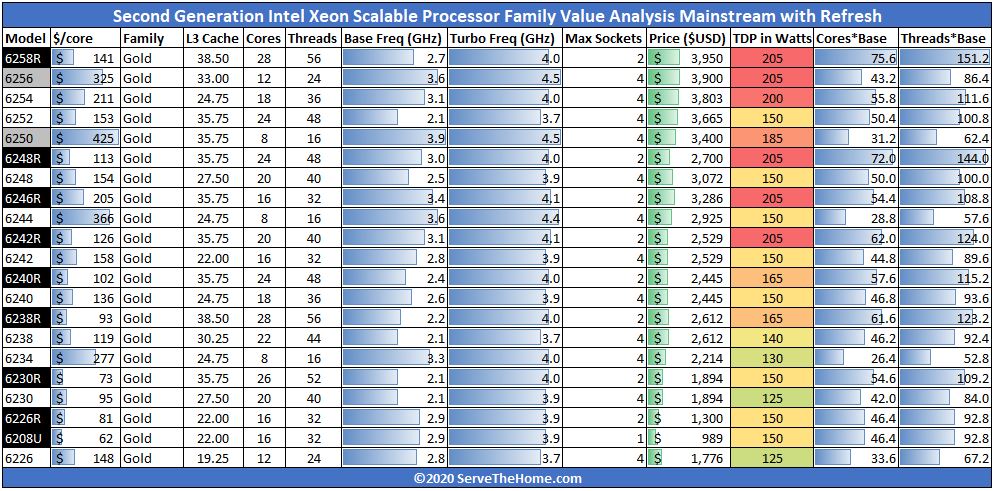

Intel Xeon Gold 6200R Refresh Parts

The Intel Xeon Gold 6200R series saw some enormous changes. We are going to skip the discussion of the Gold 6256 (12-core) and Gold 6250 (8-core) since those are low core count, high-frequency SKUs designed for per-core license segments. Instead, we are going to focus on the eight Xeon Gold 6200R SKUs.

Starting at the bottom (we will discuss the Gold 6208U later), we have the Intel Xeon Gold 6226R. This 16-core part is a 100MHz higher base clock version of the Intel Xeon Gold 6242 launch SKU. Giving up 4-socket capability means this $1,300 part costs around 49% less than the Xeon Gold 6242.

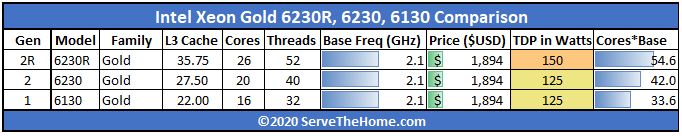

The Intel Xeon Gold 6230R is really interesting as a 26-core SKU, a core count that had previously been uncommon in the line. At the same $1,894 price and with a 25W TDP bump, it adds 6 cores (30% more) and a 100MHz Turbo clock bump at this popular price point versus the Intel Xeon Gold 6230. For some perspective here, this is how the same price point has evolved since 2017:

At 28 cores and 165W, the Intel Xeon Gold 6238R at $2,612 is an enormous upgrade over the previous 22-core Gold 6238. A better comparison is the Intel Xeon Platinum 8276L we reviewed: the Gold 6238R has a 100MHz higher base clock but otherwise similar specs. That means this level of performance for dual-socket servers has moved from $8,719 to $2,612 in less than 11 months. That is a 70% discount for dual-socket servers with a 100MHz base clock jump thrown in. This is a direct response to AMD’s offerings and is in line with what we said at the EPYC 7002 series launch: “AMD at around $7000 is essentially saying Intel needs to start their discounting at 73% to get competitive, and that is not taking into account using fewer servers.” (Source: here) Some commenters said I was crazy, but this is a prime example: at the higher end, we got 70% off plus 100MHz, which is fairly close to the 73% discount we said was needed quarters ago.
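The gap between the 70% the Gold 6238R delivers and the 73% we called for can be quantified directly from the two list prices. A minimal Python sketch, again using list prices only and ignoring the 100MHz clock difference:

```python
platinum_8276l = 8719   # list price, USD
gold_6238r = 2612       # list price, USD
target = 0.73           # discount we said Intel needed at the EPYC 7002 launch

actual = (platinum_8276l - gold_6238r) / platinum_8276l
price_at_target = platinum_8276l * (1 - target)

print(f"Actual discount: {actual:.1%}")                       # Actual discount: 70.0%
print(f"Gap to target: {(target - actual) * 100:.1f} points")  # Gap to target: 3.0 points
print(f"Price at 73% off: ${price_at_target:,.0f}")           # Price at 73% off: $2,354
```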

The Intel Xeon Gold 6240R adds 6 cores for a 24-core total. It sheds 200MHz of base clock from the Xeon Gold 6240 but adds 100MHz more Turbo at the top end while also utilizing 15W more TDP headroom. The Gold 6240R is similar to a Xeon Platinum 8260 with a 100MHz maximum turbo bump. Even with that small clock bump, at $2,445 its list price is $2,257 lower than the Platinum 8260’s, just over a 48% discount.

At 20 cores, the Intel Xeon Gold 6242R has a list of improvements over the Gold 6242: four more cores, 13.75MB more L3 cache, and 300MHz base and 200MHz turbo clock improvements over the launch part, all at the same $2,529 price point. Intel increased the TDP from 150W to 205W, which can affect which machines the Gold 6242R can be used in versus the Gold 6242.

The new super chip for 16-core licensing (e.g. Windows Server 2019) may be the Intel Xeon Gold 6246R. Microsoft shops that have been buying 16-core CPUs such as the Gold 6242 will get 600MHz base and 200MHz turbo clock bumps. But there is more than clock speeds here. Intel has increased the L3 cache to 35.75MB from the Gold 6242’s 22MB, and TDP has increased from 150W to 205W, which means it should spend more time at higher clock speeds. Here the 16-core price increases to $3,286 from $2,529 for the Gold 6242 or $3,115 for the Platinum 8253. This shows the power of per-core licensing on CPU pricing models.
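Since all three 16-core options need the same number of per-core software licenses, only the CPU list price, clocks, and cache differ between them. A Python sketch comparing price per core and the list-price premium over the Gold 6242, using the prices quoted above (`SKUS` and `premium_over` are illustrative names, not an Intel or STH tool):

```python
CORES = 16
# 16-core list prices quoted in the text, USD
SKUS = {"Gold 6242": 2529, "Platinum 8253": 3115, "Gold 6246R": 3286}


def premium_over(base: str, sku: str) -> float:
    """Percentage list-price premium of `sku` over `base`."""
    return (SKUS[sku] - SKUS[base]) / SKUS[base] * 100.0


for name, price in SKUS.items():
    print(f"{name}: ${price / CORES:.2f}/core, "
          f"{premium_over('Gold 6242', name):+.1f}% vs Gold 6242")
```

At list, the Gold 6246R works out to roughly a 30% premium over the Gold 6242 for the same license count.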

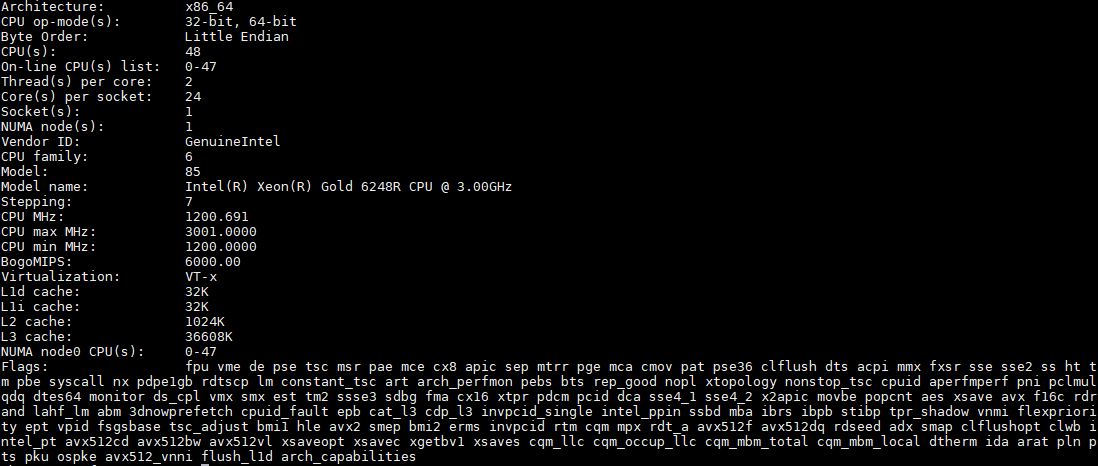

The Intel Xeon Gold 6248R is the part we are going to cover in depth in our benchmarks on the next page. In short, it adds 20% more cores than the Gold 6248. The Gold 6248R is more similar to the Platinum 8268, but with 100MHz higher base and turbo frequencies and a discount of over 57% versus the Platinum part. It is also a lower-cost part at $2,700 versus the Gold 6248 at $3,072. More performance for less is great for the value equation.

Finally, the big one, the Intel Xeon Gold 6258R. Let us call this one what it is: a 2-socket-only Intel Xeon Platinum 8280 at $3,950 versus $10,009. From a competitive standpoint, even if nobody buys this CPU, it effectively changes all industry comparisons henceforth. If you are an Arm CPU vendor or AMD claiming 2x the performance per dollar versus the top-end Platinum 8280 by simply comparing CPU list prices, but your chip is only dual-socket capable, you are now effectively conceding that Intel offers about 27% better performance per dollar than your solution. I have been lobbying for Intel to make a change like this for exactly this reason. The Platinum 8280 was designed as a 4-socket or 8-socket Xeon E7 successor, not as a mainstream part. Other vendors took advantage of this and used the Platinum 8280’s $10,009 price point as a measuring stick even though that list price was designed for heavy discounting in big systems with six- or seven-figure price tags. Now, most of the industry comparisons to the Platinum 8280 are not relevant except for AMD. We are going to go into more detail around this in our market analysis.
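The 27% figure follows from treating the Gold 6258R as delivering the same performance as the Platinum 8280 in a dual-socket server, so their relative performance per dollar is simply the inverse of their price ratio. A minimal Python sketch under that assumption; the 2x competitor claim is the hypothetical one described above:

```python
PLATINUM_8280 = 10009   # list price, USD
GOLD_6258R = 3950       # list price, USD
CLAIMED_VS_8280 = 2.0   # hypothetical competitor claim: 2x perf/$ vs the 8280

# Assumption: equal performance, so relative perf/$ is the inverse price ratio
intel_vs_8280 = PLATINUM_8280 / GOLD_6258R
intel_vs_competitor = intel_vs_8280 / CLAIMED_VS_8280

print(f"Gold 6258R vs Platinum 8280 perf/$: {intel_vs_8280:.2f}x")
# -> 2.53x
print(f"Gold 6258R vs 2x-claim competitor: {intel_vs_competitor:.2f}x")
# -> 1.27x, i.e. about 27% better perf/$ than the competitor
```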

Intel Xeon Gold 6208U Refresh 1P Part

While the U parts have not been the most popular, the Intel Xeon Gold 6208U is intriguing for another reason. It puts pressure on Intel’s other single-socket Xeon lines: the Intel Xeon E-2288G, Xeon W-2200, and Xeon W-3200 series. A 16-core part on the Xeon Scalable platform can be very attractive in its own right for the sub-$1000 space. In fact, if you were looking at the Xeon W lines for a workstation and wishing you could have Optane DCPMM support, then this sub-$1000 CPU makes a lot of sense. Further, for single-socket higher-performance storage platforms, a sub-$1000 DCPMM-capable CPU with solid frequencies can be very attractive. On the other hand, it is only $311 less than the Gold 6226R it is based on, which is not a large discount.
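The Gold 6208U’s list price follows from the Gold 6226R’s $1,300 price and the $311 gap, which puts it just under the $1,000 mark and shows how modest the relative discount is. A short Python sketch using those two figures:

```python
gold_6226r = 1300                # list price, USD
gold_6208u = gold_6226r - 311    # Gold 6208U is $311 cheaper at list

discount = (gold_6226r - gold_6208u) / gold_6226r
print(gold_6208u)          # 989
print(f"{discount:.1%}")   # 23.9%
```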

Next, we are going to take a look at the 2nd Generation Intel Xeon Scalable Refresh performance, specifically focusing on the Xeon Gold 6248R and the Gold 6248. After we finish the performance, we will get to our market analysis before concluding with our final thoughts.

This is the best coverage out there, and I’ve skimmed Anand’s and Tom’s pieces. I wish you had benchmarked more SKUs, but at least you’ve got something.

I’m with Teddy1974 and I read some analyst reports this morning too. I can’t believe this is free analysis.

Very nice article. What is killing me is the “Our review of the Xeon W-2295 is going to be online in the next few weeks” note inside it. I’ve slowly settled on the W-2295 as my workstation update (from an old E5), hence I would like to see your article sooner rather than later.

Also W-22xx versus new “R” line, I think it’s matter of workstation preference. If the target software is more single-threaded oriented, then one may favor W-line for its higher turbo freq, however if the software is more multi-threaded and user is 100% sure about it, then investment into R line may make sense.

To put it bluntly, where is Cooper Lake? Wasn’t that supposed to be the stop-gap between Cascade Lake and Ice Lake? I know it was to be AI focused with BF16 support but is Intel squarely targeting only that segment for it? It’d be a means of getting a new platform out the door as well as negating several security issues that exist in Cascade Lake hardware.

Kevin, Cooper is coming. I think it is slightly different in market positioning at this point. All I can say for now is stay tuned to STH.

Too bad Patrick never mentioned DCPMM capabilities of these new CPUs

/s

Bored SysAdmin – 12 times in the article DCPMMs were mentioned including specific discussions. I am not sure what that comment means?

/s = sarcasm

/s is totally lost on me :-)

Great review. Thanks.

“For AMD, the road just got tougher. Contrary to many beliefs out there, Intel does not have to meet or beat AMD’s performance per dollar in markets primarily focused on compute. Intel just needs to be close.”

Really? With Zen-3 Epyc/Milan sampling, what’s going to happen after that? AMD’s Epyc/Rome pricing can go down even further, what with those Zen-2 CCDs so easy to produce at such high die/wafer yields. AMD’s running a much leaner operation, and TSMC’s process node R&D costs are amortized across an entire industry, not just AMD. AMD can respond readily, and AMD’s economy of scale on those 8-core CCDs is testament to that. Intel’s 60%+ gross margins are a thing of the past, and if AMD’s Opteron competition was any indicator, then that metric is going down further as well, with Epyc at a level of parity in performance and at a price that Intel has now been forced to counter.

Currently as well, some of AMD’s big Epyc wins are going hand in hand with Nvidia’s GPU accelerators or even AMD’s own GPU offerings for GPU compute that’s a bit more than any CPU can provide, and Intel has not yet fielded any competition that has Nvidia shaking in its boots. AMD has really got the low-overhead advantage in any price war, what with Intel still on those monster monolithic designs that make the die/wafer yield calculator produce some sobering results.

The road has always been uphill for AMD, and what’s new is that the hill is larger, but AMD is about to crest for a good while, and the going is not that bad when those CCDs only have 8 cores and mad die/wafer yields and are scalable up to 64 cores on an MCM, and probably higher if need be. Zen-3 is sampling, and Zen-4 and Zen-5 have their own teams leapfrogging forward on a regular cadence. AMD has no large chip fab upkeep expenses to worry about, and that’s good for any extra pricing latitude on AMD’s side. And this obvious move from Intel can be countered, as AMD can afford to go lower, to a point where high-overhead Intel will have to go underwater for a while to match.

It’s maybe a little late for Intel as too much notice of Epyc/Rome is already producing results that Intel will not be able to quickly counter easily as in the past. I’d look to Intel’s large war chest only for the short term as Intel has to use more of that on process node and foundry retooling but price wars in the server market are certainly a rare occurrence that have the bean counters absolutely giddy since Epyc/Rome really turned up the competition after Epyc/Naples got AMD’s foot back in the door.

Really needed to be done on the Intel side; the parts were just not competitive for a large portion of the market. Intel-only shops began to look elsewhere and, in some cases with larger refreshes, went all in on Rome given the increase in performance and very significant cost savings.

Core count wise, they just can’t compete against the 64c Rome monsters. For VMware shops looking forward they also don’t have a 32c sku for optimized license utilization on smaller deployments.

Vendor hardware appliances aren’t as common as they used to be, but almost all are exclusively Intel, with better cost reductions available on the newer models.

@LowOverheadLarry: The only problem with your “theory” is the reality that Intel is making more money EVERY year that they have been producing 14nm chips!! Amd is NOT making anywhere near that kind of money with Epyc, and the market for 64-core chips is ridiculously small, while Intel is still making many BILLIONS every year from 4, 6 & 8 core laptop chips!!!

Epyc has been available for over 3 years while Intel has been producing inferior 14nm chips and they barely have over 5% market share for x86 servers worldwide!!

Amd has to start producing revenue in large numbers if they really want to compete with Intel in the long run.

The day of reckoning is in 2021 when 10nm Ice Lake comes out in huge numbers, then we will truly see how competitive Amd is with Intel in the server space as Intel still dominates with 5 year old 14nm xeon /laptop chips that the market is still buying in massive quantities regardless of the superiority of the Zen 2,3,4 platform!

More competitive pricing. Nice refresh, meaning we don’t have to replace existing motherboards to accommodate these new SKUs for those of us on earlier releases of Scalable. I’m on a gen1 6130 (16 cores, 2.1 base, 3.7 boost), and to me it means an eventual drop in prices on used gen1 6130s for my other open socket. Or I can sell off my existing single gen1 6130 and upgrade to two refreshed 2020 silver 5000 series 20 or 24 core parts for not much more $ and use my existing mobo.

Cooper Lake will mean a newer socket/mobo and new chipsets are required, so again I say it’s a nice refresh for those of us who adopted earlier gen Scalable.

AMD still kills it though with better price performance and features if ordering all new systems.

@lemans24, it’s not about Intel being large and making more money as we all know that Intel has money. But Intel is a high operating cost/high margins necessary business that has to have the 55%-60+% gross margins on higher markups in order to remain revenue positive and not drain its cash reserves.

It’s about AMD’s Epyc/Rome and soon to be Epyc/Milan and that modular CCD/DIE economy of scale and AMD not having any multi-billion dollar Fab up-keep costs. TSMC’s 7nm/smaller node R&D costs are not solely AMD’s burden as TSMC spreads that Fab/R&D upkeep cost across an entire industry whereas Intel has its expensive fabs’ upkeep and equally expensive process node R&D costs to shoulder on its own and that can drain cash reserves like nothing else for Intel.

AMD is fabless, and its Epyc/Rome and Epyc/Milan 8-core CCD die wafer yields are always going to be better than what Intel has with its monster monolithic die production. AMD is such a low operating overhead business with, now, an industry-leading server CPU product line that’s levels above AMD’s past Opteron predecessors on the price/performance metric that got AMD up to around 23% of the server market share in the past. So now with Epyc/Rome and soon to be released Epyc/Milan, Intel will have to contend with that and AMD’s Zen-4 Epyc/Genoa on 5nm by 2021, and Intel has already had/is planning layoffs because Intel’s high margin mark-ups are taking a hit, and so will those revenues needed to run Intel’s high overhead operations.

AMD can turn a profit at 43% gross margins while Intel would lose billions at that gross margin level. So now Intel is dropping its server CPU markups like a brick just to match AMD’s Epyc/Rome price/performance metrics, and AMD has Epyc/Milan ready and sampling to potential customers, so Epyc/Rome can have its price lowered by AMD and AMD will not go revenue negative like Intel will have to in an attempt to stop the bleeding of market share. Each and every week AMD racks up new Epyc/Rome cloud customer orders, and Intel is still not able to ramp up enough production at 10nm and even 14nm to stop AMD’s market share gains.

Once the Wall Street Quants fully suss out Intel’s longer term gross margin basis points decline/decline potential, Intel will really have to rely on its other non-processor business units to maintain revenue, and server CPUs was/is Intel’s high margin holy cow that’s having to be priced significantly lower. So with each percentage of server market share lost to AMD, that’s millions of dollars less revenue for Intel and millions of dollars more for AMD on the quarter to quarter balance sheets. TSMC’s 7nm and AMD’s modular/scalable CCDs are the very definition of economy of scale, and since AMD is fabless and TSMC provides fab services to an entire market of fabless chip makers, they all share in TSMC’s fab upkeep/node R&D cost amortization.

You are not going to be able to go against AMD’s/TSMC’s economy of scale and that’s just economics there once AMD’s Epyc/Rome benchmarks proved that Intel is not able to compete at its traditional price points and the layoffs will continue at Intel as those massive operating overheads have to be reduced or Intel’s cash reserves will evaporate rather quickly. Chip fabs are the most hungry of operations to keep fed and at 7nm and lower that’s billions more compared to 14nm/above just to hit the ground running.