2nd Gen Intel Xeon Scalable Refresh Performance

We wanted to give some sense of the performance one can see with the new SKUs over the older generation parts with similar names. As a result, we have Intel Xeon Gold 6248 processors along with Intel Xeon Gold 6248R CPUs in a testbed, specifically the Supermicro SYS-2029UZ-TN20R25M or “2029UZ-TN20R25M” server.

The Supermicro 2029UZ-TN20R25M is a 2U dual-socket server that is part of the company’s “Ultra” line meant to compete at the higher end of the server market. We requested this server specifically because it has 20x NVMe SSD bays, it supports Intel Optane DCPMM, and it has built-in 25GbE. 25GbE is a major networking trend and we have already started covering 25GbE TOR switches such as the Ubiquiti UniFi USW-Leaf 48x 25GbE and 6x 100GbE switch. We have done adapter reviews such as the Supermicro AOC-S25G-i2S, Dell EMC 4GMN7 Broadcom 57404, and the Mellanox ConnectX-4 Lx. We also have 100GbE switch reviews in the publishing queue, so we wanted to start focusing on the new systems.

This Supermicro 2029UZ-TN20R25M platform is significant for another reason: it supports 205W TDP CPUs. Not all Intel Xeon Scalable platforms can support 205W TDP CPUs, and we needed that for our testing since Intel added a lot more performance but also increased TDP by 55W moving from the Xeon Gold 6248 to the Gold 6248R.

If you want to see an example of how this can impact some systems, we put the Xeon Gold 6248 in a Supermicro X11SPM-TPF platform and it worked without issue. When we tried the Xeon Gold 6248R, that extra 55W caused an error since that platform supports only up to 165W TDP CPUs, as we noted in that review.

For this reason, we wanted to use the Supermicro 2029UZ-TN20R25M which is a higher-end platform capable of handling this type of CPU.

Intel Xeon Gold 6248R Benchmarks

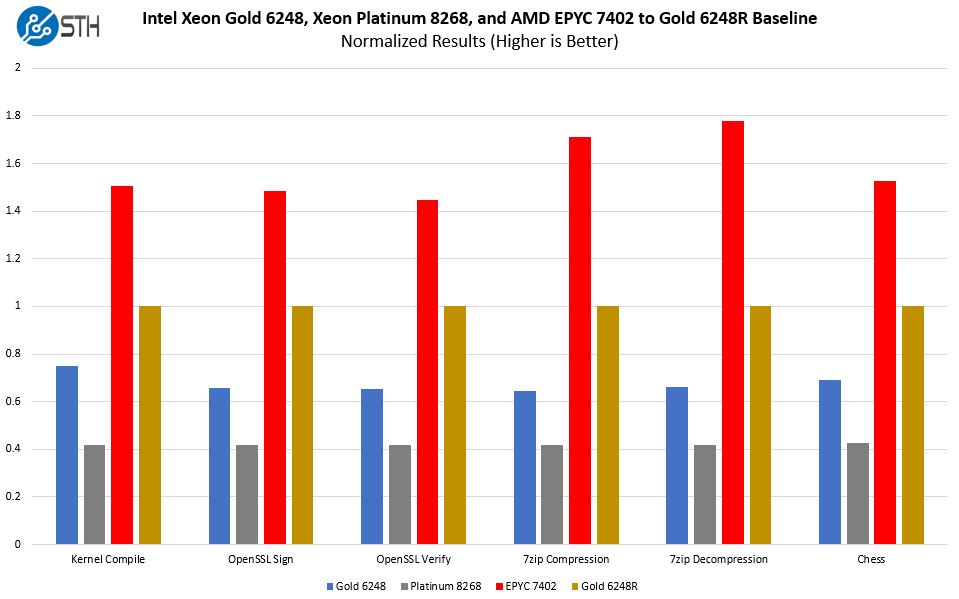

We wanted to give some sense of performance, specifically as it relates to three other SKUs and this Intel Xeon Gold 6248R. Those other three SKUs are the previous generation Intel Xeon Gold 6248, the similar 8-socket capable Intel Xeon Platinum 8268, and the AMD EPYC 7402/7402P from a competitive standpoint.

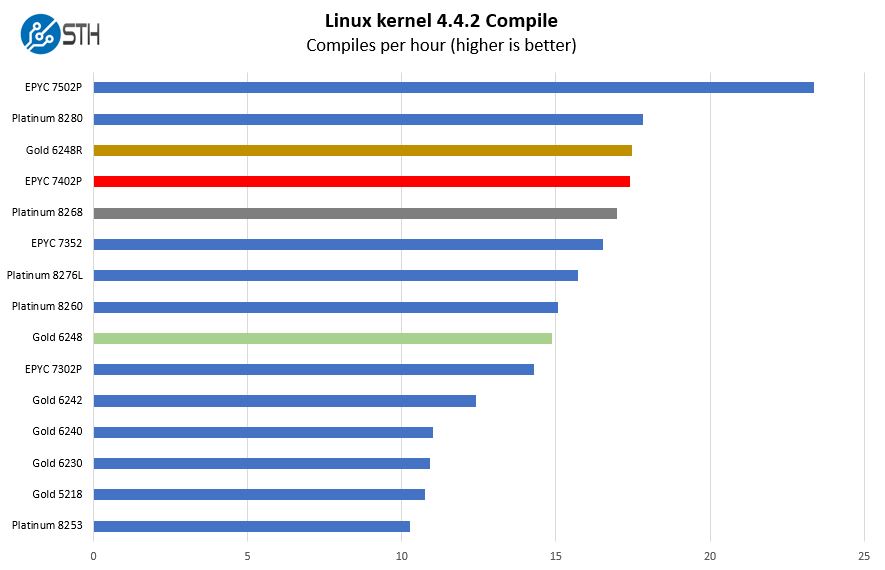

Python Linux 4.4.2 Kernel Compile Benchmark

This is one of the most requested benchmarks for STH over the past few years. The task is simple: we have a standard configuration file and the Linux 4.4.2 kernel from kernel.org, and we make the standard auto-generated configuration utilizing every thread in the system. We are expressing results in terms of compiles per hour to make the results easier to read.
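As a minimal sketch of the unit conversion, assuming each run is timed in wall-clock seconds, compiles per hour is simply 3600 divided by the time of one build. The function name and the timings below are hypothetical placeholders, not STH's harness or measured results.

```python
def compiles_per_hour(seconds_per_compile: float) -> float:
    """Convert the wall-clock time of one kernel build into compiles per hour."""
    if seconds_per_compile <= 0:
        raise ValueError("compile time must be positive")
    return 3600.0 / seconds_per_compile


# Hypothetical timings for illustration only, not results from this article.
for label, seconds in [("system A", 90.0), ("system B", 120.0)]:
    print(f"{label}: {compiles_per_hour(seconds):.1f} compiles per hour")
```

Expressed this way, a higher number is better, which is easier to read on a bar chart than raw build times.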

In this test, the $2,700 list price Xeon Gold 6248R is just about dead-even with the AMD EPYC 7402/7402P at a $1,783/$1,250 price point (2P/1P). We can also see a slight bump above the Intel Xeon Platinum 8268, which was essentially the $6,302 part at this performance level. Way down the list, we see the Intel Xeon Gold 6248. Our Linux kernel compile benchmark tends to be a decent indicator of performance. For STH’s internal workloads, it is a fairly close indicator of what we experience.

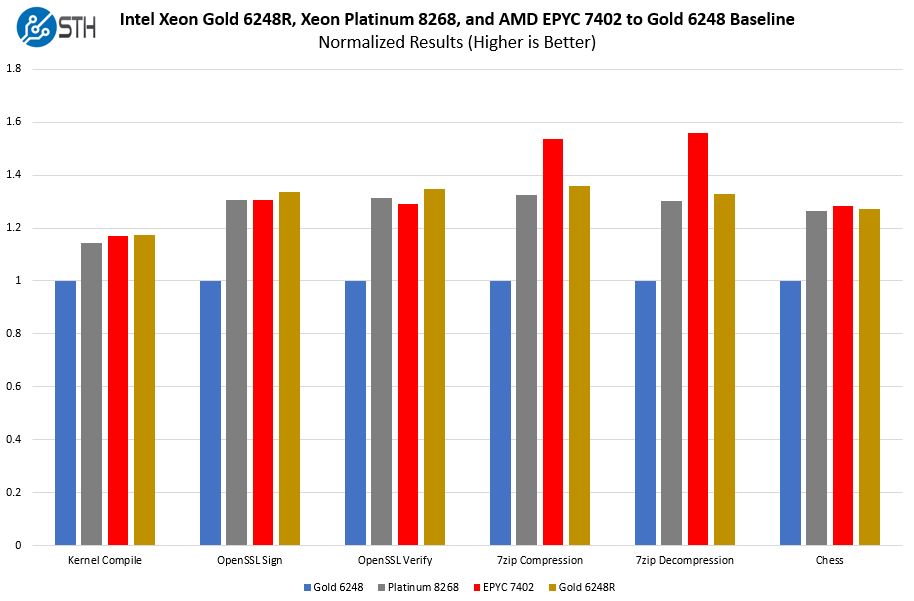

Intel Xeon Gold 6248R, Gold 6248, Platinum 8268, AMD EPYC 7402 Comparison

Since this article is already many charts long and is pushing 4,500 words, we are going to give a small sample of workloads to again exemplify what we know we will see based on specs: equal or better than Xeon Platinum 8268 performance at a lower price. We are going to normalize this chart to the Intel Xeon Gold 6248 numbers as 1.0 to make it easier to read:

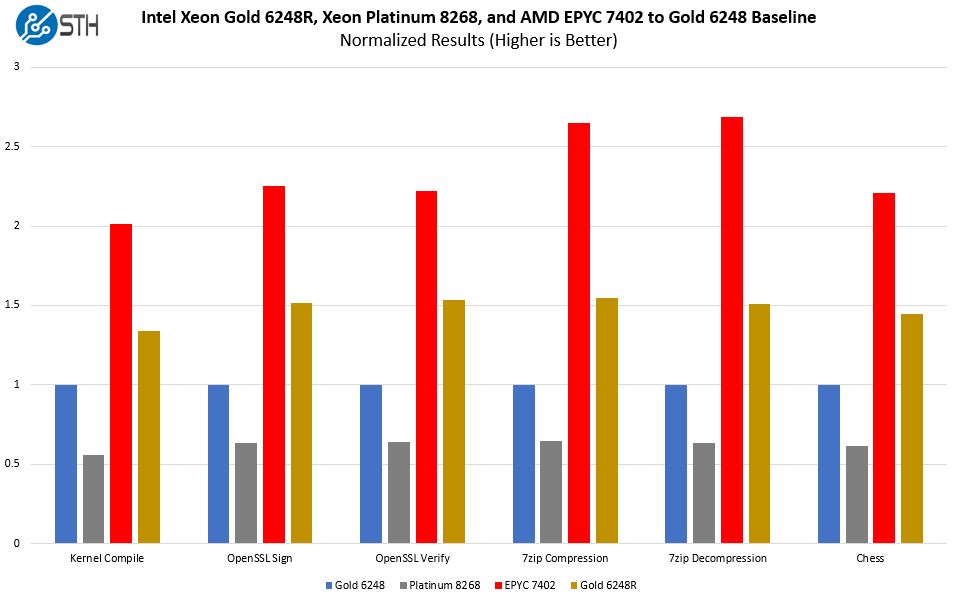

Looking at the competitiveness factor, here is a performance per dollar chart of the above using list prices and normalized to the Gold 6248’s performance as 1.0. We will note that discounting can have a significant impact on these prices for both Intel and AMD.

As you can see here, Intel is offering 35-55% better performance per dollar with the Xeon Gold 6248R over the Gold 6248. That is an enormous delta. We went through a decade where a 2-5% improvement was considered a “refresh” and a 5-20% improvement was considered a new line of processors.
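For readers who want to reproduce this kind of normalization with their own numbers, here is a rough sketch. The function name is hypothetical, the performance values are placeholders rather than our benchmark results, and the Gold 6248 price is an assumed list price that is not stated in this section. Only the $2,700 Gold 6248R price comes from the text above.

```python
def normalized_perf_per_dollar(parts, baseline):
    """Return performance per dollar for each part, scaled so the baseline is 1.0."""
    base = parts[baseline]["perf"] / parts[baseline]["price"]
    return {name: (p["perf"] / p["price"]) / base for name, p in parts.items()}


parts = {
    # perf values are placeholders; the 6248 price is an assumption.
    "Gold 6248": {"perf": 1.00, "price": 3072.0},
    "Gold 6248R": {"perf": 1.25, "price": 2700.0},
}

for name, value in normalized_perf_per_dollar(parts, "Gold 6248").items():
    print(f"{name}: {value:.2f}")
```

Passing a different `baseline`, for example `"Gold 6248R"`, re-expresses the same data relative to that part instead.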

Put in different terms, we normalized this chart to the performance per dollar of the Gold 6248R as 1.0. Here is what that looks like:

While one can claim 35-55% better performance per dollar on the Intel Gold comparison, looking at the Xeon Platinum 8268 is more interesting. Intel is essentially discounting that higher level of performance by about 58%. That is absolutely a huge move just 10-11 months after launch.

On the AMD EPYC side, the tables have also turned. Now that performance is very close, the question comes down to pricing. List prices still give AMD a 40-75% advantage over the Xeon Gold 6248R; however, those are list prices. If customers are willing to pay a 10-20% premium for Intel over AMD simply to avoid moving vendors, it does not take much discounting to close that gap. Also, Intel can sell more silicon such as the PCH, NICs, SSDs, Optane DCPMM, and accelerators to close the gap further through bundle discounts. AMD needs to be ahead on the value side, but the breadth of Intel’s product portfolio can have a huge market impact.
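To put rough numbers on that premium argument, here is a back-of-the-envelope sketch. It assumes equal performance between the two parts and that AMD sells at list price, neither of which holds exactly in practice. The function name is hypothetical. The prices are the $2,700 Gold 6248R and $1,783 EPYC 7402 list prices quoted earlier.

```python
def discount_to_match(intel_list: float, amd_price: float, premium: float) -> float:
    """Fraction off Intel's list price needed so that Intel's price equals
    amd_price * (1 + premium). Returns 0.0 if no discount is needed."""
    target = amd_price * (1.0 + premium)
    return max(0.0, 1.0 - target / intel_list)


# Assumes equal performance and AMD selling at list price (both simplifications).
for premium in (0.10, 0.20):
    needed = discount_to_match(2700.0, 1783.0, premium)
    print(f"premium {premium:.0%}: discount of {needed:.1%} off Intel list")
```

Any discounting on the AMD side, or any bundle pricing on the Intel side, would shift these figures.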

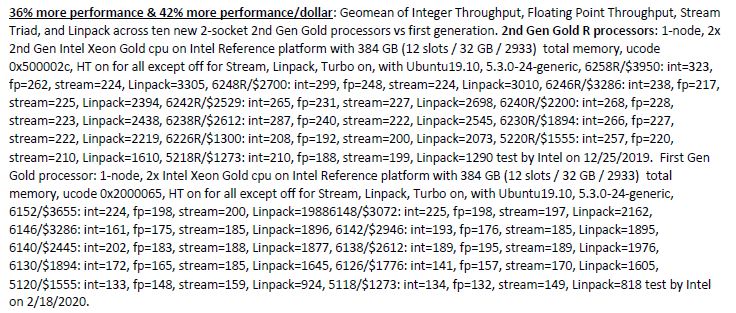

A quick note: Intel is claiming “36% more performance and 42% more performance per dollar” in its NewsByte. When we looked at the footnote, we found Intel is comparing the new refresh 2-socket Xeon Gold “R” SKUs to the 4-socket capable 1st generation Intel Xeon Scalable Gold SKUs. Here is the disclosure:

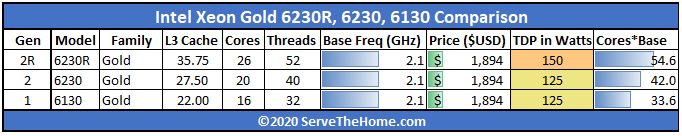

Taking the Intel Xeon Gold 6x30 level as an example since it has stayed at the $1,894 level, we see why comparing the first generation to the “R” SKU makes Intel look great:

Now, Intel both disclosed this and used a geomean of many results to get to 36% and 42%. However, we just want our readers to be aware that Intel is touting the benefits of Gen 1 to Gen 2R, not Gen 2 to Gen 2R. Frankly, Intel has a great story even comparing its 2019 to 2020 generations. Make no mistake, if you are a mainstream dual-socket Xeon buyer, the Gold 5200R and Gold 6200R parts are going to offer huge performance per dollar gains over the 2019 generations as well.
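For context on what a geomean does, here is a small sketch of a geometric mean over per-benchmark speedup ratios. The ratios are made-up placeholders, not Intel’s disclosed results, and the function name is our own.

```python
import math


def geomean(ratios):
    """Geometric mean of positive ratios: the n-th root of their product."""
    if not ratios or any(r <= 0 for r in ratios):
        raise ValueError("ratios must be a non-empty list of positive numbers")
    return math.exp(sum(math.log(r) for r in ratios) / len(ratios))


# Placeholder speedups (new part / old part) across several workloads.
speedups = [1.20, 1.50, 1.35]
print(f"geomean speedup: {geomean(speedups):.3f}")
```

A geomean is the usual choice for averaging ratios because a single outlier workload moves it less than it would move an arithmetic mean.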

Over the next few weeks, you will see reviews of the Intel Xeon Gold 6248R and other SKUs as well as the Supermicro 2029UZ-TN20R25M. We wanted to give some examples of just how much more performance the new SKUs offer before discussing the market impact, which we will continue with next.

This is the best coverage out there and I’ve skimmed Anand’s and Tom’s pieces. I wish you had benchmarked more SKUs for it, but you’ve at least got something.

I’m with Teddy1974 and I read some analyst reports this morning too. I can’t believe this is free analysis.

Very nice article. What is killing me is the “Our review of the Xeon W-2295 is going to be online in the next few weeks” note inside it. I’ve slowly settled on the W-2295 as my workstation upgrade (from an old E5), hence I would like to see your article sooner rather than later.

Also, on W-22xx versus the new “R” line, I think it’s a matter of workstation preference. If the target software is more single-threaded, then one may favor the W line for its higher turbo frequency. However, if the software is more multi-threaded and the user is 100% sure about it, then investing in the R line may make sense.

To put it bluntly, where is Cooper Lake? Wasn’t that supposed to be the stop-gap between Cascade Lake and Ice Lake? I know it was to be AI focused with BF16 support but is Intel squarely targeting only that segment for it? It’d be a means of getting a new platform out the door as well as negating several security issues that exist in Cascade Lake hardware.

Kevin, Cooper is coming. I think it is slightly different in market positioning at this point. All I can say for now is stay tuned to STH.

Too bad Patrick never mentioned DCPMM capabilities of these new CPUs

/s

Bored SysAdmin – 12 times in the article DCPMMs were mentioned including specific discussions. I am not sure what that comment means?

/s = sarcasm

/s is totally lost on me :-)

Great review. Thanks.

“For AMD, the road just got tougher. Contrary to many beliefs out there, Intel does not have to meet or beat AMD’s performance per dollar in markets primarily focused on compute. Intel just needs to be close.”

Really? With Zen-3 Epyc/Milan sampling, what’s going to happen after that? AMD’s Epyc/Rome pricing can go down even further, what with those Zen-2 CCDs so easy to produce at such high Die/Wafer yields. AMD’s running a much leaner operation and TSMC’s process node R&D costs are amortized across an entire industry and not just AMD. AMD’s response can be readily apparent and AMD’s economy of scale on those 8 core CCDs is testament to that. Intel’s 60%+ gross margins are no more, and if AMD’s Opteron competition was any indicator, then that metric is going down further as well with Epyc at a level of parity in performance and at a price that Intel has now been forced to counter.

Currently as well, some of AMD’s big Epyc wins are going hand in hand with Nvidia’s GPU accelerators or even AMD’s own GPU offerings for GPU compute that’s a bit more than any CPU can provide, and Intel has not yet fielded any competition that has Nvidia shaking in its boots. AMD has really got the low overhead advantage in any price war, what with Intel still on those monster monolithic designs that make the Die/Wafer yield calculator produce some sobering results.

The road has always been uphill for AMD and what’s new is that the hill is larger, but AMD is about to crest for a good while and the going is not that bad when those CCDs only have 8 cores and mad Die/Wafer yields and are scalable up to 64 cores on an MCM and probably higher if need be. Zen-3 is sampling and Zen-4 and Zen-5 have their own teams leapfrogging forward on a regular cadence. AMD has no large chip fab upkeep expenses to worry about and that’s good for any extra pricing latitude on AMD’s side. And this obvious move from Intel can be countered as AMD can afford to go lower, to a point that high overhead Intel will have to go underwater for a while to match.

It’s maybe a little late for Intel, as too much notice of Epyc/Rome is already producing results that Intel will not be able to counter as quickly or easily as in the past. I’d look to Intel’s large war chest only for the short term, as Intel has to use more of that on process node and foundry retooling, but price wars in the server market are certainly a rare occurrence that have the bean counters absolutely giddy since Epyc/Rome really turned up the competition after Epyc/Naples got AMD’s foot back in the door.

This really needed to be done on the Intel side; the parts were just not competitive for a large portion of the market. Intel-only shops began to look elsewhere, and in some cases those with larger refreshes went all in on Rome given the increase in performance and very significant cost savings.

Core count wise, they just can’t compete against the 64c Rome monsters. For VMware shops looking forward, they also don’t have a 32c SKU for optimized license utilization on smaller deployments.

Vendor hardware appliances aren’t as common as they used to be, but almost all are exclusively Intel, with the newer models offering better cost reductions.

@LowOverheadLarry: The only problem with your “theory” is the reality that Intel is making more money EVERY year that they have been producing 14nm chips!! AMD is making nowhere near that kind of money with Epyc and the market for 64 core chips is ridiculously small while Intel is still making many BILLIONS every year from 4, 6 & 8 core laptop chips!!!

Epyc has been available for over 3 years while Intel has been producing inferior 14nm chips, and AMD barely has over 5% market share for x86 servers worldwide!!

AMD has to start producing revenue in large numbers if they really want to compete with Intel in the long run.

The day of reckoning is in 2021 when 10nm Ice Lake comes out in huge numbers. Then we will truly see how competitive AMD is with Intel in the server space, as Intel still dominates with 5 year old 14nm Xeon/laptop chips that the market is still buying in massive quantities regardless of the superiority of the Zen 2, 3, 4 platform!

More competitive pricing. Nice refresh, meaning we don’t have to replace existing motherboards to accommodate these new SKUs for those of us on earlier releases of Scalable. I’m on gen1 6130 16core 2.1 base 3.7 boost… and to me it means an eventual drop in prices on used gen1 6130 for my other open socket. Or I can sell off my existing single gen1 6130 and upgrade to two refreshed 2020 silver 5000 series 20 or 24 core for not much more $ and use my existing mobo.

Cooper Lake will mean a newer socket/mobo is required and new chipsets, so again I say it’s a nice refresh for those of us who adopted earlier gen Scalable.

AMD still kills it though with better price performance and features if ordering all new systems.

@lemans24, it’s not about Intel being large and making more money as we all know that Intel has money. But Intel is a high operating cost/high margins necessary business that has to have the 55%-60+% gross margins on higher markups in order to remain revenue positive and not drain its cash reserves.

It’s about AMD’s Epyc/Rome and soon to be Epyc/Milan and that modular CCD/DIE economy of scale and AMD not having any multi-billion dollar Fab up-keep costs. TSMC’s 7nm/smaller node R&D costs are not solely AMD’s burden as TSMC spreads that Fab/R&D upkeep cost across an entire industry whereas Intel has its expensive fabs’ upkeep and equally expensive process node R&D costs to shoulder on its own and that can drain cash reserves like nothing else for Intel.

AMD is fabless and its Epyc/Rome and Epyc/Milan CCD 8 core die wafer yields are always going to be better than what Intel has with its monster monolithic Die production. AMD is such a low operating overhead business with, now, an Industry leading server CPU product line that’s levels above AMD’s past Opteron predecessors on the Price/Performance metric that got AMD up to around 23% of the server market share in the past. So now with Epyc/Rome and soon to be released Epyc/Milan, Intel will have to contend with that and AMD’s Zen-4 Epyc/Genoa on 5nm by 2021, and Intel has already had/is planning layoffs because Intel’s high margin mark-ups are taking a hit and so will those revenues needed to run Intel’s high overhead operations.

AMD can turn a profit at 43% gross margins while Intel would lose billions at that gross margin level. So now Intel is dropping its server CPU markups like a brick just to match AMD’s Epyc/Rome price/performance metrics, and AMD has Epyc/Milan ready and sampling to potential customers, so Epyc/Rome can have its price lowered by AMD and AMD will not go revenue negative like Intel will have to in an attempt to stop the bleeding of market share. Each and every week AMD racks up new Epyc/Rome cloud customer orders and Intel is still not able to ramp up enough production at 10nm and even 14nm to stop AMD’s market share gains.

Once the Wall Street Quants fully suss out Intel’s longer term Gross Margin basis points decline/decline potential, Intel will really have to rely on its other non-processor business units to maintain, and server CPUs were/are Intel’s high margin holy cow that’s having to be priced significantly lower. So with each percentage of Server market share lost to AMD, that’s millions of dollars less revenue for Intel and millions of dollars more for AMD on the quarter to quarter balance sheets. TSMC’s 7nm and AMD’s modular/scalable CCDs are totally the very definition of economy of scale, and since AMD is fabless and TSMC provides fab services to an entire market of fabless chip makers, they all share in TSMC’s fab upkeep/node R&D cost amortization.

You are not going to be able to go against AMD’s/TSMC’s economy of scale and that’s just economics there once AMD’s Epyc/Rome benchmarks proved that Intel is not able to compete at its traditional price points and the layoffs will continue at Intel as those massive operating overheads have to be reduced or Intel’s cash reserves will evaporate rather quickly. Chip fabs are the most hungry of operations to keep fed and at 7nm and lower that’s billions more compared to 14nm/above just to hit the ground running.