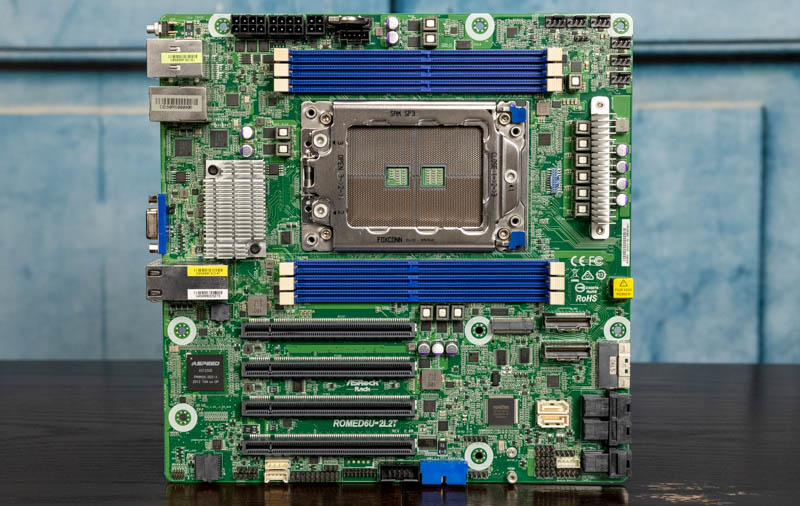

This motherboard certainly falls in the category of unique, if not borderline “wild,” for a server motherboard design. One of the major challenges with current-generation server CPUs is that the socket size, the number of DIMM slots, and the PCIe lane count have all gone up significantly, as has power. As a result, while mATX may have been common in the Xeon E5 generation, it has been less commonplace with the newer generations of CPUs. ASRock Rack, however, has taken on the challenge of fitting an AMD EPYC 7002/7003 system onto a mATX motherboard. That is the story of the ASRock Rack ROMED6U-2L2T, which we are now going to review.

ASRock Rack ROMED6U-2L2T Overview

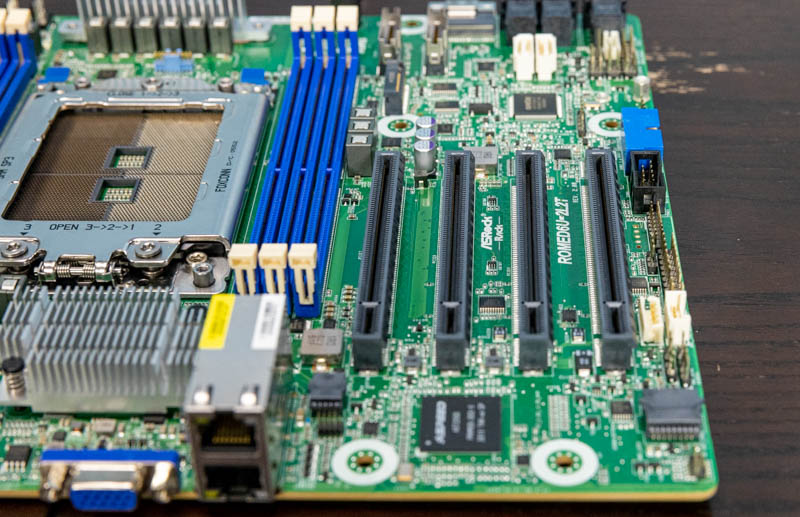

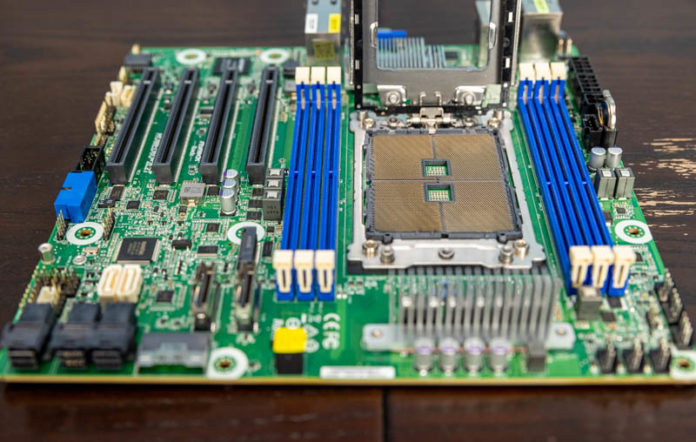

The motherboard itself is a relatively standard mATX size motherboard at 9.6” x 9.6” (244mm x 244mm). This is very interesting since it means the board will fit in a variety of different chassis. It also poses a challenge since it restricts the total surface area ASRock Rack has to place components. At the same time, ASRock Rack almost maximized a single-socket AMD EPYC 7002/7003 platform even in this small form factor.

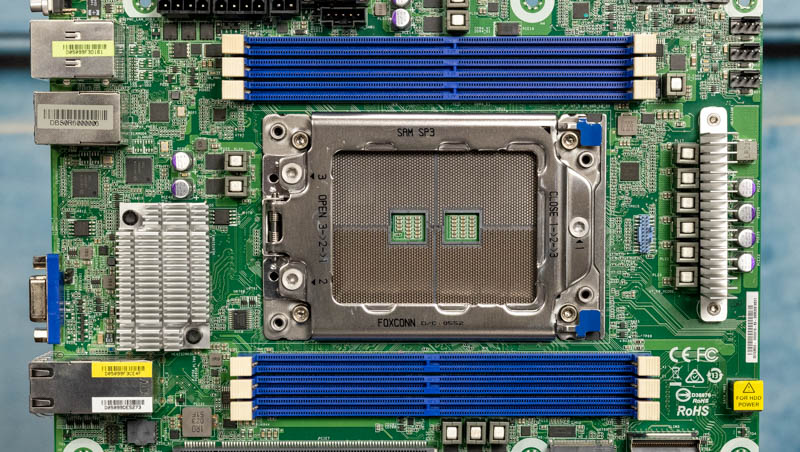

For the CPU support, officially this supports the AMD EPYC 7002 and EPYC 7003 series. We started using the EPYC 7002 series for our review, then used an upgraded firmware package to add EPYC 7003 series processors. At the time we are writing (and we will check this on the day the review goes live) the only available EPYC 7003 firmware is designated as “lab firmware.” We confirmed this firmware worked with the EPYC 7713 but would caution our readers to check what is the most current version supported if they are reading this review in the future to make a purchasing decision.

This also shows us perhaps the biggest trade-off with this motherboard. An AMD EPYC 7002/7003 CPU can support up to 8 channels of memory in two DIMMs per channel (2DPC) mode. Given the space limitations, we get only six DIMM slots for six-channel memory. This means that we are practically limited to 75% of the peak memory bandwidth available on this platform. The other way to view it, however, is that compared to a 2nd Generation Intel Xeon Scalable part we have the same number of channels but with supported speeds up to DDR4-3200, so there is still some benefit to the EPYC platform here. The slots support the standard set of EPYC memory options such as ECC memory, RDIMMs, and LRDIMMs. We see 6x 16GB, 6x 32GB, and 6x 64GB being popular options here, but one can go higher.
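As a rough illustration of that 75% figure, here is a minimal sketch of the theoretical peak bandwidth math. It assumes a 64-bit (8-byte) data bus per DDR4 channel and, for the Intel comparison, DDR4-2933 as the top supported speed on 2nd Generation Xeon Scalable. Neither figure comes from our testing, and the function name is ours. Real-world throughput will land below these theoretical numbers.

```python
def peak_bandwidth_gbs(channels: int, mt_per_s: int, bus_bytes: int = 8) -> float:
    """Theoretical peak memory bandwidth in GB/s.

    channels  : number of populated memory channels
    mt_per_s  : transfer rate in MT/s (e.g. 3200 for DDR4-3200)
    bus_bytes : bytes moved per transfer per channel (assumed 8 for DDR4)
    """
    return channels * mt_per_s * bus_bytes / 1000


# Full 8-channel EPYC platform vs. the six channels wired up on this board.
full_epyc = peak_bandwidth_gbs(8, 3200)
this_board = peak_bandwidth_gbs(6, 3200)

# Assumed comparison point: six channels of DDR4-2933.
xeon_6ch = peak_bandwidth_gbs(6, 2933)

print(f"8ch DDR4-3200: {full_epyc:.1f} GB/s")
print(f"6ch DDR4-3200: {this_board:.1f} GB/s ({this_board / full_epyc:.0%} of 8ch)")
print(f"6ch DDR4-2933: {xeon_6ch:.1f} GB/s")
```

The ratio depends only on the channel count, so the 75% figure holds at any DDR4 speed as long as all six channels are populated.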

The cooling is set up for standard front-to-rear airflow. To power fans, we get a total of six 4-pin PWM fan headers. On many compact motherboards, we will see this number cut to something like four headers to save space. ASRock lists the EPYC 7H12 as compatible (we could not test this) so with 280W TDP CPUs and the potential for many PCIe devices, cooling is going to be important in systems built around this platform.

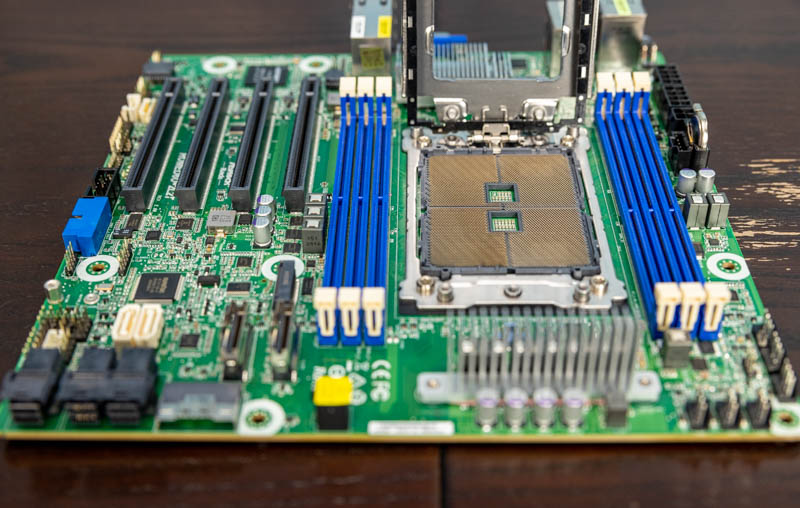

While ASRock certainly had to compromise on the memory slot configuration, it did not have to do so on the rest of the I/O configuration. For PCIe slots we get four slots, which is common on mATX motherboards. The difference is that these are PCIe Gen4 x16 slots and all have full x16 electrical interfaces to the CPU. On more consumer-oriented motherboards, we often see multiple PCIe x16 slots sharing links to the CPU. That is not the case here since the four slots use only 64 of the platform’s 128 PCIe lanes.

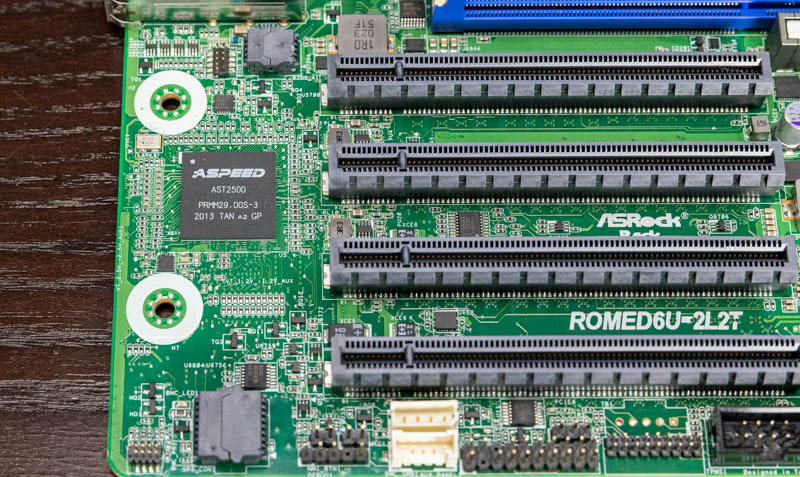

Next to the PCIe slots, we are just going to quickly note the ROMED6U-2L2T uses an ASPEED AST2500 BMC. If you are unfamiliar with the BMC in a server, see our article: Explaining the Baseboard Management Controller or BMC in Servers. This is what provides out-of-band management functionality.

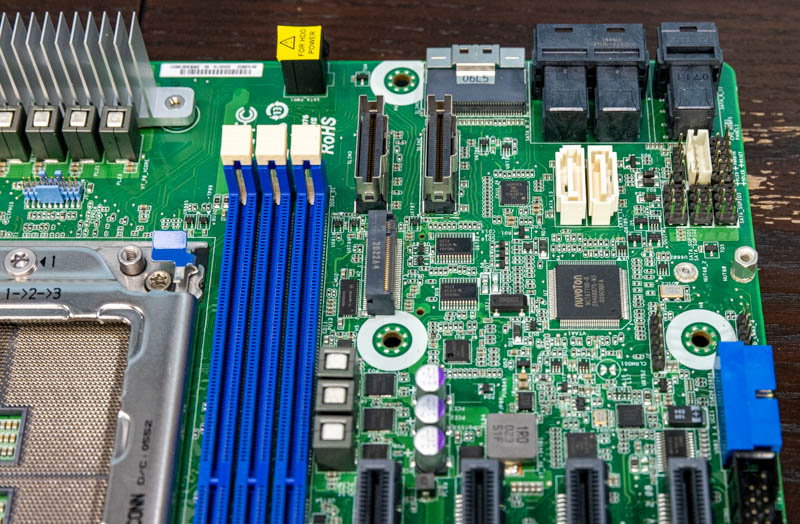

Next, there is an M.2 2280 slot. This goes just behind the four PCIe slots and near the storage I/O interfaces.

There are also two 7-pin SATA ports. If you simply need a SATA port or two for boot, then these are the options.

Then we get to what may be the most special feature of this platform, the rest of the I/O. As a fair warning, on the next page, we are going to have the block diagram for this motherboard. It may be worthwhile opening page two in a side-by-side tab if you want to trace through this.

There are a total of three MiniSAS HD and three Slimline NVMe connectors on the motherboard.

Even beyond the two onboard SATA ports and the M.2 slot, there is a lot of storage connectivity. The three MiniSAS HD connectors each provide four SATA ports for a total of twelve from those.

One can also see three Slimline NVMe connectors. Each of these is PCIe Gen4 x8 capable, which means one can use them to connect to backplanes for NVMe SSDs or to other PCIe devices. One of the Slimline ports can also be used for 8x SATA. We ordered a cable via AliExpress to try the 8x SATA, but five weeks in it has still not arrived, so we are going to have to assume the block diagram is correct on this one.

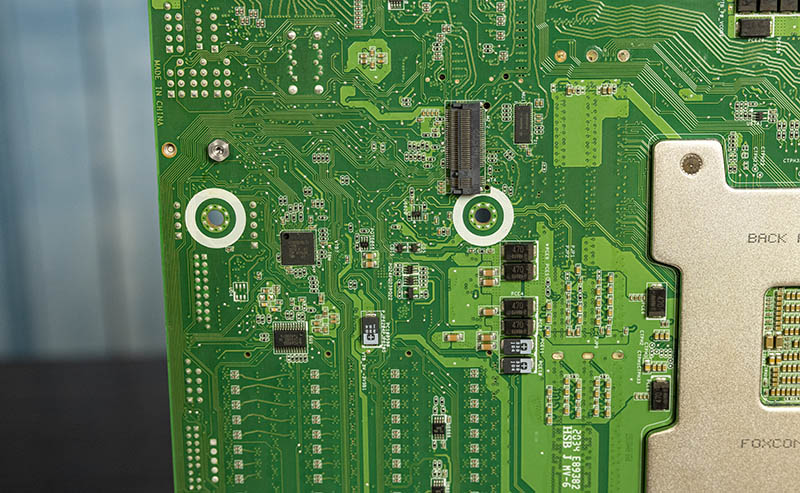

Underneath the board, there is actually a second M.2 slot. However, we did not get to test this one.

Overall, ASRock Rack is exposing almost all of the 128 high-speed I/O lanes as either PCIe or SATA III on this platform. Many larger ATX motherboards do not even do this.
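To put a number on “almost all,” here is a minimal sketch that tallies only the connectors whose widths are described in this review. It assumes each SATA port consumes one high-speed lane. It leaves out the two M.2 slots, the NICs, and the BMC because we have not stated their link widths here. The names in the code are ours and purely illustrative, so the block diagram remains the authority.

```python
PLATFORM_LANES = 128  # high-speed I/O lanes on a single-socket EPYC 7002/7003

# (description, connector count, lanes per connector)
# Only connectors whose widths are described in the review text are listed.
STATED_LINKS = [
    ("PCIe Gen4 x16 slot", 4, 16),
    ("Slimline NVMe x8 connector", 3, 8),
    ("MiniSAS HD (4x SATA each, assumed 1 lane per port)", 3, 4),
    ("Onboard 7-pin SATA port (assumed 1 lane per port)", 2, 1),
]


def lanes_used(links) -> int:
    """Sum the lanes consumed by a list of (name, count, width) entries."""
    return sum(count * width for _, count, width in links)


used = lanes_used(STATED_LINKS)
for name, count, width in STATED_LINKS:
    print(f"{count} x {name}: {count * width} lanes")
print(f"Accounted for: {used} of {PLATFORM_LANES} lanes")
print(f"Left for M.2, NICs, BMC, and other devices: {PLATFORM_LANES - used}")
```

The optional 8x SATA mode on one Slimline connector reuses that connector's lanes, so it is not counted a second time.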

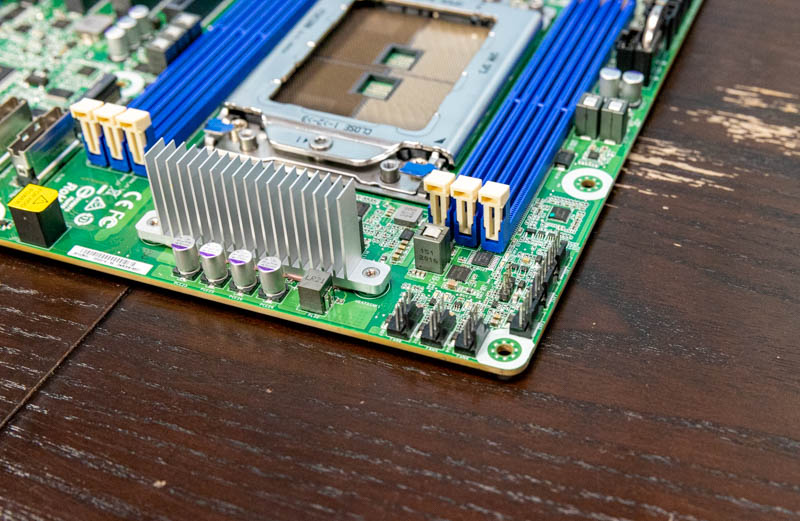

Given the expansive I/O on the rest of the motherboard, one may assume basic rear I/O on this system. We have a standard I/O set with two USB 3 ports and an out-of-band management port for the BMC. There is a legacy VGA port for basic connectivity. 1GbE connectivity is powered by dual Intel i210 controllers. The nice, and perhaps surprising, feature is the dual 10Gbase-T ports powered by an Intel X710-AT2 controller. The heatsink shown below between the VGA port and the SP3 CPU socket cools the X710 NIC. As a result, there is no room for a serial console output port on the rear I/O block.

On the bottom edge of the motherboard, there is a USB 3.0 front panel header oriented parallel to the motherboard PCB plane. We will simply note that you may need to check for clearance on this as well as the MiniSAS HD and Slimline 8i ports that come off of the other edge of the motherboard as it may be a challenge to use the ports in tight cases.

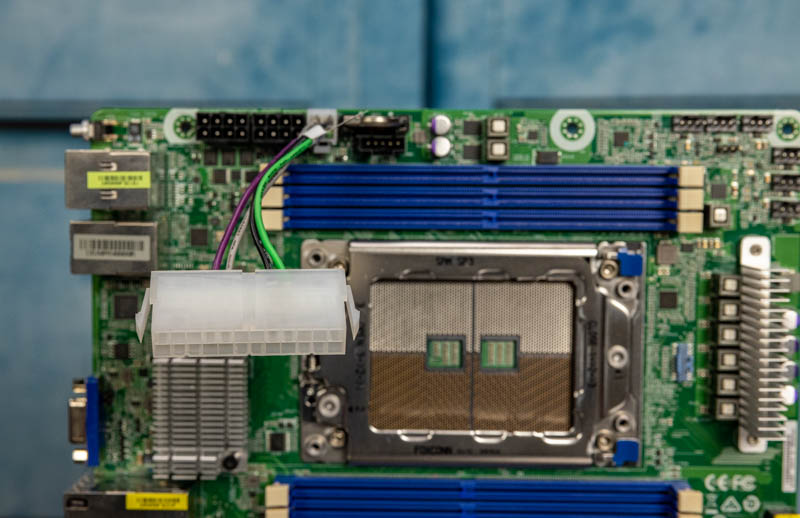

Then things get a bit more special. One will notice that we do not have a standard ATX power supply connector in this system. Instead, this can be DC-powered via the two 8-pin 12V DC IN connectors or powered by a standard ATX power supply using a 24-pin to 4-pin converter. One still needs to connect 8-pin power when using the 4-pin power converter though. The motherboard ASRock Rack sent us had this 24-pin to 4-pin converter in the box. (Apologies for how dark this photo is, the white connector was overexposed otherwise.)

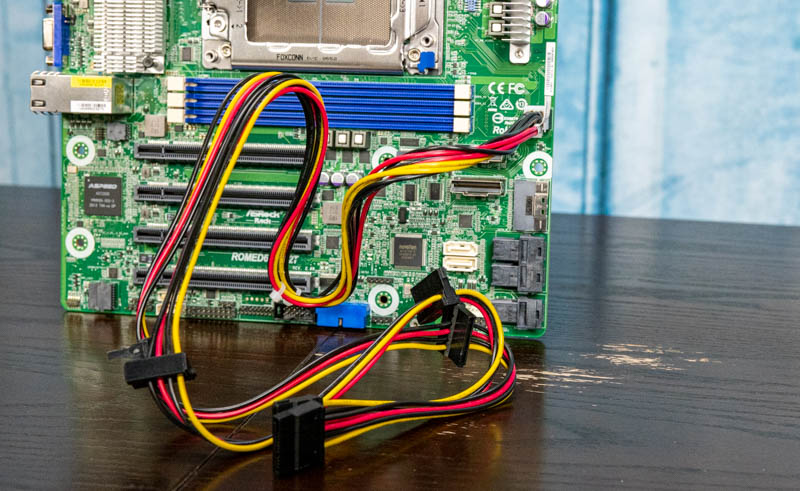

Our box also had a MiniSAS HD to 4x SATA breakout cable, but the more interesting inclusion was this SATA power cable. There is an additional output on the motherboard that can supply power. This is especially useful if using a DC power input where one may not have the SATA power cable assortment found on ATX power supplies. The cable provides two sets of three SATA power connections for a total of six.

A quick word of warning here: one should be careful to use the 24-pin to 4-pin converter and this SATA power cable on the correct 4-pin headers. As one can imagine, using them incorrectly could cause damage to the motherboard. That is why we are showing these installed.

Next, let us get to the block diagram before we get to the management and the remainder of our review.

ASRock Rack should start building boards with onboard sfp connectors for ethernet >= 10G.

That makes the stuff much more useable.

Thanks for this long-awaited review!

I think it’s an overkill for high density NAS.

But why did you put a 200Gb NIC and say nothing about it?

Incredible. Now I need to make an excuse that we need this at work. I’ll think of something

Hello Patrick,

Thanks for the excellent article on these “wild” gadgets! A couple of questions:

1. Did you try to utilize any of the new milan memory optimizations for the 6 channel memory?

2. Did you know this board has a second m.2? Do you know where I can find an m.2 that will fit that second slot and run at pcie 4.0 speeds?

3. I can confirm that this board does support the 7h12. It also is the only board that I could find that supports the “triple crown”! naples, rome and milan (I guess genoa support is pushing it!)

4. cerberus case will fit the noctua u9-tr4 and cools 7452, 7502 properly.

5. Which os/hypervisor did you test with and did you get the connectx-6 card to work at 200Gbps? with vmware, those cards ran at 100Gbps until the very latest release (7.0U2).

6. which bios/fw did you use for milan support? was it capable of “dual booting” like some of the tyan bios?

Thanks again for the interesting article!

Beautiful board

@Sammy

I absolutely agree. Is 10GBase-T really prevalent in the wild? Everywhere I’ve seen that uses >1G connections does it over SFP. My server rack has a number of boards with integrated 10GBaseT that goes unused because I use SFP+ and have to install an add-in card.

Damn. So much power and I/O on mATX form factor. I now have to imagine (wild) reasons to justify buying one for playing with at home. Rhaah.

@Sammy, @Chris S

Yes, but if they had used SFP+ cages, there would be people saying why don’t they use RJ-45 :-) I use a 10GbE SFP+ MikroTik switch and most of the systems connected to it are SFP+ (SolarFlare cards) but I also have one mobo with 10GBase-T connected via an Ipolex transceiver. So, yes if I were to end up using this ASRock mATX board it would also require an Ipolex transceiver :-(

My guess is that ASRock thinks these boards are going to be used by start-ups, small businesses, SOHO and maybe enthusiasts who mostly use from CAT5e to CAT6A, not fiber or DAC.

The one responsible for the Slimline 8654-8i to 8 x SATA cable on Aliexpress is me. Yes. I had them create this cable. I have a whole article write up on my blog and I also posted here on STH forums. I’m in the middle of them creating another cable for me to use with next gen Dell HBA’s as well.

The reason the cable hasn’t arrived yet, is because they have massive amounts of orders, with thousands of cables coming in, and they seemingly don’t have spare time to make a single cable.

I’ve been working with them closely for months now, so I know them closely.

Those PCIe connectors look like surface mounts with no reinforcement. I’d be wary of mounting this in anything other than flat/horizontal orientation for fear of breaking one off the board with a heavy GPU…

@Sammy and @chris s….what is the advantage of sfp 10gb over 10gbase-t ??

I remember PCIe for years not having reinforcement Eric. Most servers are horizontal anyway.

I don’t see these in stock anymore. STH effect in action

@Patrick, wouldn’t 6 channel memory be perfect for 24 core CPUs, such as the 7443P; or would 8 channels actually be faster – isn’t it 6*4 cores?

@erik answering for Sammy

SFP+ would let you choose DAC, MM/SM fiber connectors. Ethernet @ 10gb is distance limited anyway, and electrically can draw more power than SFP+ instances.

Also 10G existed as SFP+ a lot longer than ethernet 10G existed, I’ve got sfp+ connectors everywhere, and SFP+ switches all over the place. I don’t have many 10G ethernet cables (cat 6a or better recommended for any distance over 5ft), and I only have 1 switch around that does 10G ethernet at all.

QSFP28 and QSFP switches can frequently break out to SFP+ connectors as well so newer 100/200/400gb switches can connect to older 10g sfp+ ports. 10GB ethernet conversions from SFP+ exist but are relatively new and power hungry.

TLDR: SFP became a server standard of sorts that 10Gbase-T never did.

One thing I noticed is that even if ASRock wanted SFP+ on this board they probably couldn’t fit it. There isn’t enough distance between the edge of the board and the socket/memory slots to fit the longer SFP+ cages.

Thanks Patrick, that is quite a nice find. Four x16 PCIe slots plus a pair of m.2, a lot of SATA and those 3 slim x8 connectors is IMO nearly a perfect balance of I/O. Too bad about the DIMMs. Maybe Asrock can make an E/ATX board with the same I/O balance and 8 DIMMs. I still think the TR Pro boards with their 7 x16 slots are very unbalanced.

My main issue with the 10GbaseT (copper) ports is the heat. They run much hotter than the sfp+ ports.

I believe there is a way to optimize this board with its 6 channels if using the new milan chips. I was looking for some confirmation.

Patrick, thank you for your in-depth review. It hit every mark on what is important to me. The ASRock ROMED6U-2L2T is a fantastic board for the I/O, and I can’t find another one that works like a data center server in mATX form. Is there a recommended server-style or other case that you or anyone else has tested this board in, with the fans, cooling, and power needed to run it fully populated while maintaining server-level reliability? I love that this has IPMI.

I would like to see a follow on review with 2x100G running full memory channels on this board, and running RoCE2 and RDMA.

Great review, thank you. I am still confused about what, if anything, I should connect to the power socket label 1 and 2 in the Quick Installation Guide. The PSU’s 8-pin 12 V Power connector fits but is it necessary?

@Martin Hayes

The PSU 8-pin/4+4 CPU connectors are what you use to connect. You can leave the second one unpopulated, but with higher TDP CPUs it’s highly recommended to connect both for overall stability.

Thanks Cassandra – my power supply only has one PSU 8-pin/4+4 plug but I guess I can get an extender so that I can supply to both ports

OMG, this is packed with more features than my dual socket xeon. I must try this. Only ASRock can come up with something like this.