AMD EPYC 7F32 Market Positioning

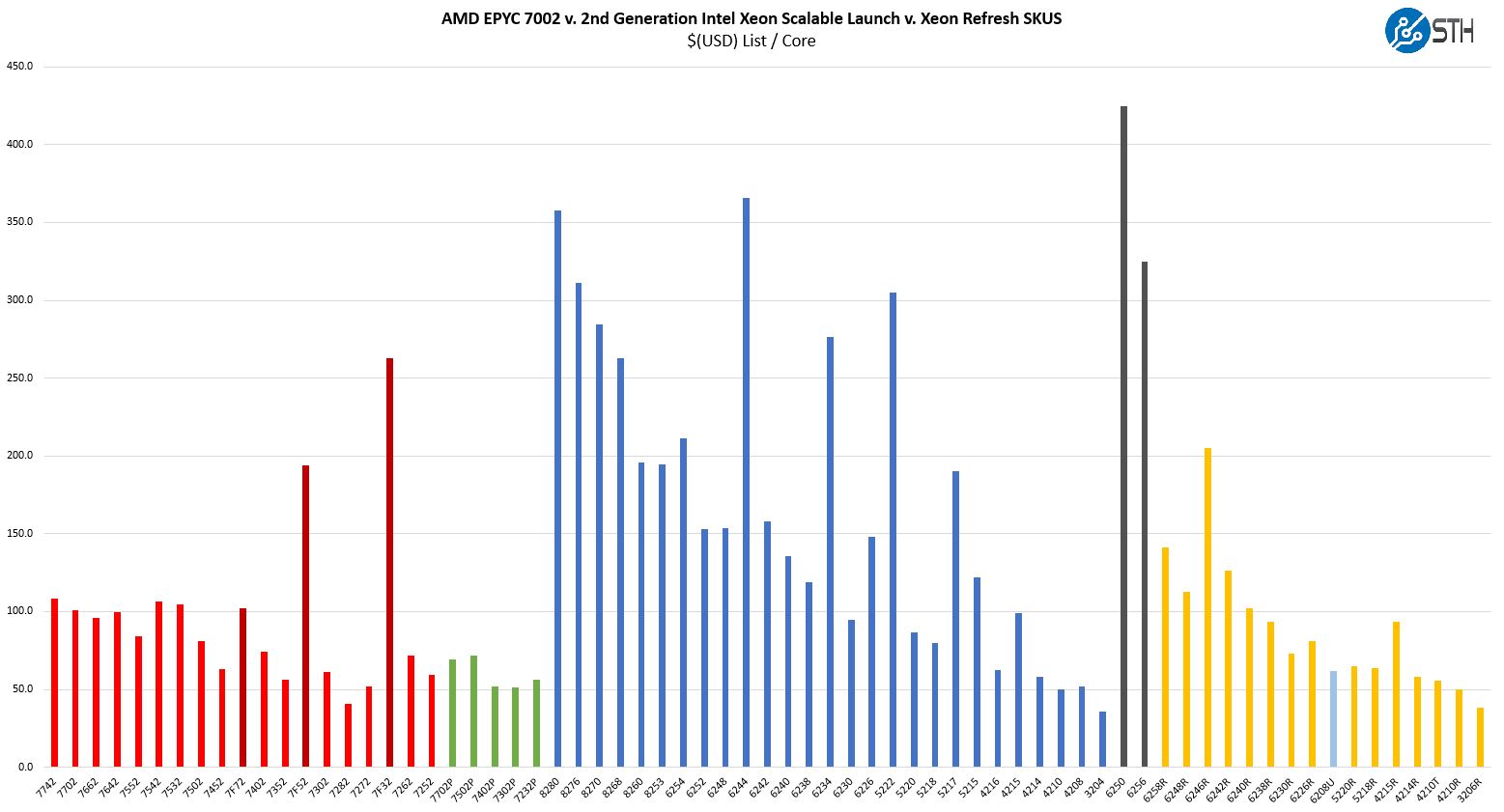

These chips are not released in a vacuum; they have competition on both the Intel and AMD sides. When you purchase a server and select a CPU, it is important to see the value of a platform versus its competitors. Here is a look at the overall competitive landscape in the industry.

We are going to first discuss AMD v. AMD competition, then look to Intel Xeon.

AMD EPYC 7F32 v. AMD EPYC

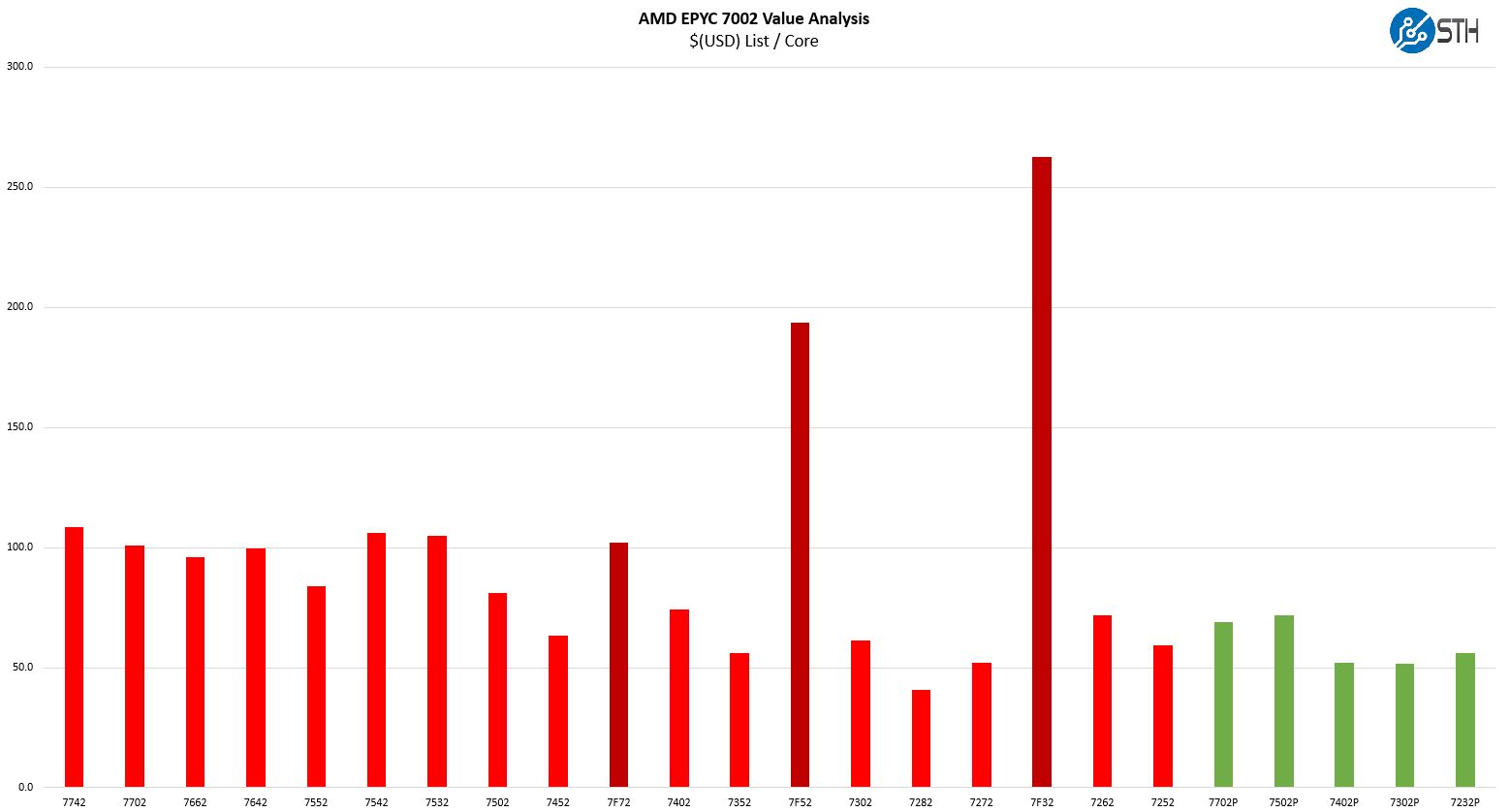

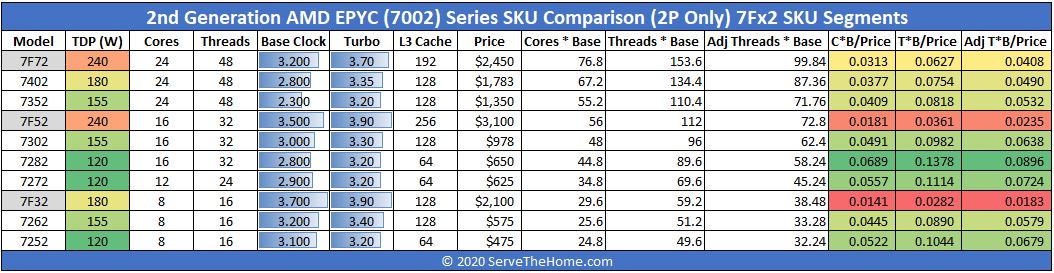

One of the nice things about a frequency-optimized part is that it has only a few effective competitors. The other 8-core SKUs in AMD’s stack are among them.

Here we can see that the AMD EPYC 7F32 is around 262% more expensive per core than the EPYC 7262. That puts the chip in line on a price basis with the bottom end of the 32-core SKU stack, such as the EPYC 7452, but with a quarter of the cores. If you run even moderately priced software that is licensed per core, then this is going to be a small premium to pay. We are talking about software that can cost thousands or tens of thousands of dollars to license per core, so getting maximum 8-core performance is going to be worth a $1500 premium.
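To put that premium in perspective, here is a minimal sketch of the arithmetic. The 8 cores and the $1500 premium come from the paragraph above; the per-core license prices are hypothetical values chosen only to span the "thousands or tens of thousands of dollars" range, not quotes for any real product.

```python
def premium_vs_license(cores: int, license_per_core: float, hardware_premium: float):
    """Return (total license cost, hardware premium as a fraction of that cost)."""
    license_total = cores * license_per_core
    return license_total, hardware_premium / license_total


# 8 cores and the $1500 premium come from the article text;
# the per-core license prices below are hypothetical illustration values.
for license_per_core in (2_000, 10_000, 50_000):
    total, share = premium_vs_license(8, license_per_core, 1_500)
    print(f"${license_per_core:,}/core -> ${total:,} in licenses; premium = {share:.1%}")
```

Under these assumptions, the CPU premium is a single-digit percentage of the license bill in every case, and it shrinks quickly as per-core license prices rise.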

Unlike the other “F” SKUs such as the EPYC 7F52 and EPYC 7F72, this is a 180W TDP part instead of a 240W TDP part. As a result, it is well within the ranges that one can cool in 2U 4-node dense dual-socket servers. While at 240W thermals are a big deal, at 180W it is relatively mundane to handle.

AMD EPYC 7F32 v. Intel

A few months ago, the AMD EPYC 7F32 would have launched against the Intel Xeon Gold 6244, but there was a development around the same time this chip launched: the Big 2nd Gen Intel Xeon Scalable Refresh altered the competitive landscape. Intel effectively dropped prices across most of its mainstream CPUs.

That did not necessarily touch this segment as much. Intel’s primary competitor to the EPYC 7F32 is the Xeon Gold 6250. That is a $3400 8-core part, or $425 per core, with a higher TDP at 185W. Unlike most of the SKUs launched with the “R” suffix, this is considered a standard Xeon Gold, not a true refresh part. That means the Xeon Gold 6250 has 3x UPI links for higher-performance dual-socket applications and can scale to 4 sockets.

AMD offers a larger memory footprint with up to 4TB in 8-channel DDR4-3200 versus 1TB in 6-channel DDR4-2933. AMD has more PCIe I/O with 64, 96, or 128 PCIe Gen4 lanes per socket compared to Intel’s 48x PCIe Gen3 lanes. Intel has features that AMD does not have, such as AVX-512 and the VNNI instructions (DL Boost), the latter being new for 2nd Generation Intel Xeon Scalable. Perhaps the biggest feature Intel has is Optane DCPMM support. We should note here that DCPMM support is limited by memory capacity since the Gold 6250 is not an “L” SKU. Still, if one wants fast storage, DCPMM is a significant option that AMD does not have. If you are not using small DCPMM footprints, nor the new instructions, then AMD is extremely competitive.
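The channel counts and memory speeds quoted above also imply a gap in theoretical peak memory bandwidth per socket. A rough sketch follows, assuming the standard 64-bit (8-byte) DDR4 channel width. These are theoretical ceilings rather than measured results, and the function name is ours.

```python
def peak_bandwidth_gbs(channels: int, mega_transfers_per_s: int, bytes_per_transfer: int = 8) -> float:
    """Theoretical peak bandwidth in GB/s: channels x MT/s x bytes per transfer."""
    return channels * mega_transfers_per_s * bytes_per_transfer / 1000


epyc = peak_bandwidth_gbs(8, 3200)  # 8-channel DDR4-3200
xeon = peak_bandwidth_gbs(6, 2933)  # 6-channel DDR4-2933

print(f"EPYC 7F32:      {epyc:.1f} GB/s per socket")
print(f"Xeon Gold 6250: {xeon:.1f} GB/s per socket")
print(f"Ratio:          {epyc / xeon:.2f}x")
```

Sustained bandwidth in practice depends on DIMM population, memory rank configuration, and the workload, so treat the ratio as an upper-bound comparison only.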

Even though the EPYC 7F32 carries the highest price per core in the EPYC 7002 family by a wide margin, AMD is still offering more performance and a bigger platform at a discount to Intel.
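The size of that discount can be checked with a quick calculation. The Xeon Gold 6250 price of $3400 is stated above. The EPYC 7F32 price of $2100 is an assumption here, taken from the figure a reader quotes in the comments rather than from this section of the review.

```python
def price_per_core(list_price: float, cores: int) -> float:
    """List price divided evenly across cores."""
    return list_price / cores


def discount(ours: float, theirs: float) -> float:
    """Fractional discount of `ours` relative to `theirs`."""
    return 1 - ours / theirs


XEON_GOLD_6250_PRICE = 3400  # from the article text
EPYC_7F32_PRICE = 2100       # assumption: figure quoted in a reader comment
CORES = 8                    # both parts are 8-core SKUs

intel = price_per_core(XEON_GOLD_6250_PRICE, CORES)
amd = price_per_core(EPYC_7F32_PRICE, CORES)

print(f"Xeon Gold 6250: ${intel:.2f} per core")
print(f"EPYC 7F32:      ${amd:.2f} per core")
print(f"AMD discount:   {discount(amd, intel):.1%}")
```

With these inputs the discount works out to a little over 38%, which lines up with the "almost 40%" figure used in the conclusion.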

A note from the Editor:

The elephant in the room is that the per-core license server market is tough. Organizations that use this type of software tend to also be enterprises that have standardized on certain server models, for example the Dell EMC PowerEdge R740(xd). As a result, the same servers organizations have purchased for years can also use the Gold 6250. From an operational standpoint, there is no need to change. This is one of the biggest inhibitors to AMD adoption, but one that is rarely discussed in reviews that focus on the chips or individual servers themselves.

While John has shown that the AMD EPYC 7F32 is a great platform, the organizations purchasing this are not the same ones that are buying the lowest-cost servers that run all open-source software. What this does represent is AMD showing it can offer a more full-stack solution. For those organizations looking at economic constraints for the next cycle or cycle and a half, one can purchase Rome servers today and run the same servers through 2020/2021 with Milan (EPYC 7003), instead of making a jump to shorter-lived Cooper Lake Intel Xeon platforms or Ice Lake “Whitley” platforms later in 2020/2021.

Refresh cycles and planning have a major impact on the market that we see the EPYC 7F32 play in. While the product itself may be great, there are structural inhibitors to EPYC adoption thus far. We also suggest that our readers think about the 2020-2021 planning cycle in the context of companies looking to increase EBITDA by limiting IT spend. Saving thousands on servers and software licenses can help hit numbers and keep the IT team employed during a downturn. /Patrick

Final Words

At first, the EPYC 7F32 pricing may seem jarring. In reality, it is in line with much of Intel’s pricing over the last few years and still represents almost a 40% discount versus Intel’s competitive solution. It is actually healthy for the market that we are seeing AMD expand and compete in these last bastions of Intel product dominance. AMD has largely closed the performance gap even at the high end here. As a result, it also provides a more complete “Rome” solution with different scaling vectors from lower cost, to more cores, to more per-core performance. For AMD and its partners, this is a huge step in moving the needle on that front.

The AMD EPYC 7F32 did well in most tests, but not every test. As a result, AMD has a competitive solution that performs better than the Xeon Gold 6250 in most cases. In the two storylines of this review, AMD is now competing in the 8-core frequency optimized segment and AMD is usually winning.

It seems strange to offer this, but if you are looking to save your organization money in 2020 by further optimizing on per-core performance, then the most expensive $/core AMD EPYC 7002 part may actually be the solution. Hopefully, this review offers some points that can help you make a better-informed decision for your team or at least start the discussion.

‘F’ is for ‘F-ing confusing’ names.

I like including the HPE numbers. You’re using a Gen10+ for AMD but I get why. You’re basically making the point for why you would in the editor’s note.

We are considering the 7F* models despite the price premium. When your license costs (vastly) dominate your hardware costs, peak per-core performance can rule the day. We’re looking at 7F32 for our critical workloads that gate delivery to manufacturing, and 7F72 for volume workloads as a nod to datacenter space constraints.

Your pg.4 analysis is spot on.

I agree with @HansF1973.

The candor of the product/market analysis on page 4 is refreshing to see in a review article.

I’ve read too many review articles elsewhere that are little more than blah cheerleader material.

3.9 GHz on 8 cores for …how much???! $2100? Those will likely gather some dust on assorted shelves…(too pricey, IMO)

Sadly the processor cost is nothing compared to the licensing fees on a couple of dozen programs, see: https://glennsqlperformance.com/2020/05/20/recommended-amd-processors-for-sql-server/ .

If you are entangled it’s probably worth paying to dig your way out and move to PostgreSQL, MySQL or another open source solution: https://db-engines.com/en/system/Microsoft+SQL+Server%3BMySQL%3BOracle%3BPostgreSQL – though in some cases running under a VM with fewer cores is an option the pain comes when the fine print says that the Enterprise edition must be licensed for a minimum of 16 cores, so it’s twice as bad as first thought.

In some cases (Maya, Ansys, others) there is ‘no’ alternative, trying to get something to look like Maya is an Art in itself, and some things are simply the accepted Standard (a selling point, in a service being offered).

In a narrow set of cases it’s not just the higher clocks and larger cache but also the larger memory, even the reliability; leaving dreams of ThreadRipper short lived and the longevity of Intel solutions better understood (look at the difference in price of the whole, not just a single part).

Still, these processors would need to be more than half as expensive to appeal to me; and I can afford to wait to see what next year brings us.