Many of the servers we review at STH are designed for maximum expandability, maximum performance, or optimizing for a specific density metric. While those are all great goals, that extra level of optimization adds costs. The Supermicro SYS-1029P-WTRT is a 1U server designed instead for cost optimization, providing a dual-socket Intel Xeon Scalable compute platform in a small space. In our review, we are going to see how this impacts the server platform.

Supermicro SYS-1029P-WTRT Overview

The front of the SYS-1029P-WTRT is very straightforward. There are ten 2.5″ bays that are designed for SATA/SAS. Supermicro has options for NVMe, but we are not testing those here. We are starting to see twelve-bay 2.5″ 1U server designs. Those designs forgo the clear set of LED indicators, large buttons, and two front-panel USB 3.0 ports that we get here.

As a quick note, our test system arrived without rack ears installed, which we almost never see for this class of server. We left the rack ears and rails in the shipping box, but as one would expect, this server is rack-mountable. This is simply an experiment on our part: rack ears add horizontal distance to our photos, and we reclaim that space by omitting them from photos like the one above.

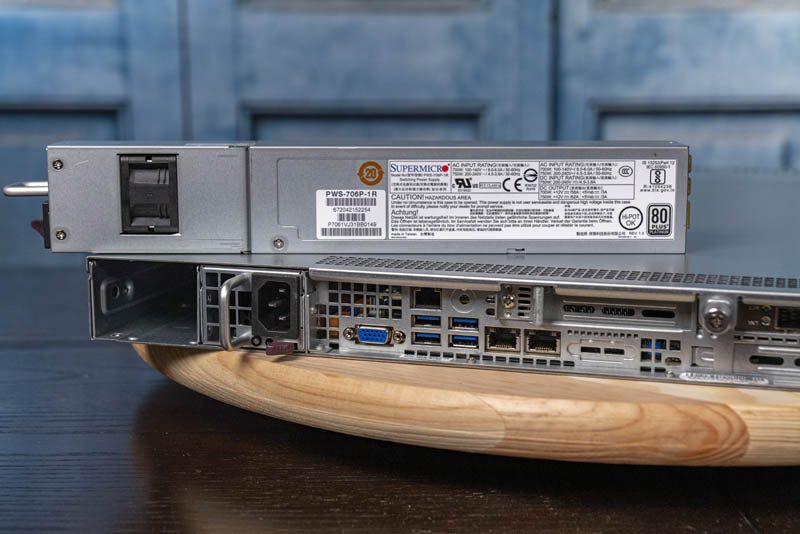

The rear of the chassis has a fairly typical layout for a Supermicro 1U server.

On the left side, we can see redundant hot-swappable 80Plus Platinum power supplies rated at 700W at lower voltages and 750W at higher voltages. We are not going into this here, but this server meets European Union Ecodesign Lot 9 requirements, staying well below the thresholds for power consumption.

The rear I/O of the server has a VGA port as well as four USB 3.0 Type-A ports. There is an out-of-band management port, which we cover in the management section later in this review. There are also two 10Gbase-T network ports in the rear of the chassis. These 10GbE network ports provide basic connectivity and are connected through the Lewisburg PCH (C622). Supermicro has a lower-cost SYS-1029P-WTR model that has eight front-panel 2.5″ bays and uses the C621 chipset, making these ports 1GbE instead.

On the right side of the rear, we have an array of three expansion slots. Two are full-height and one is a low-profile slot. We are going to discuss those more as we move to the inside of the chassis.

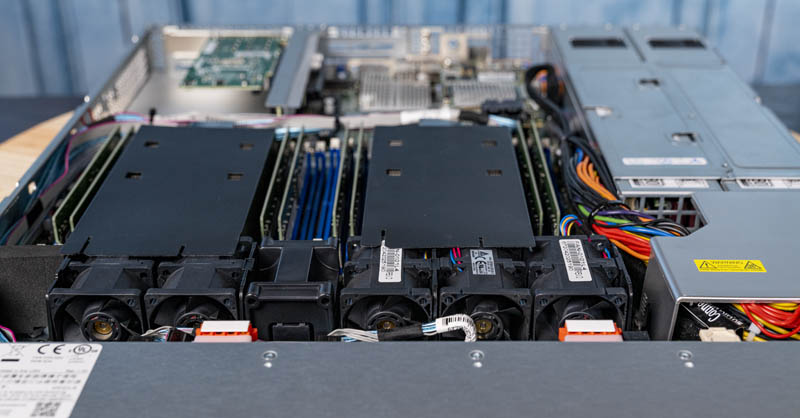

Upon opening the chassis, we find a fairly standard Supermicro 1U server layout. Behind the hot-swap drive bays is the storage backplane, followed by the fans, CPUs, and memory, then the redundant power supplies and motherboard I/O.

The CPUs have air shrouds that hook into the heatsinks. There was also adhesive that secured the shroud to the redundant 1U fans. One item we wanted to note around the fans is that the clearance between the fans and the storage backplane is tight. Extra cable lengths in this chassis need to be bound and neatly organized. Otherwise, they risk migrating into the spinning fans in the middle of the chassis. With a short 23.5″ or 597mm depth, this server is designed to be packed into short racks. The trade-off is that there are portions like the one depicted above and below where one needs to be careful about cable placement whenever the system is serviced.

Our tip here is to double-check the area around the set of five fan modules to ensure that no cables are obstructing a fan and that none are prone to migrating into the fan modules during handling.

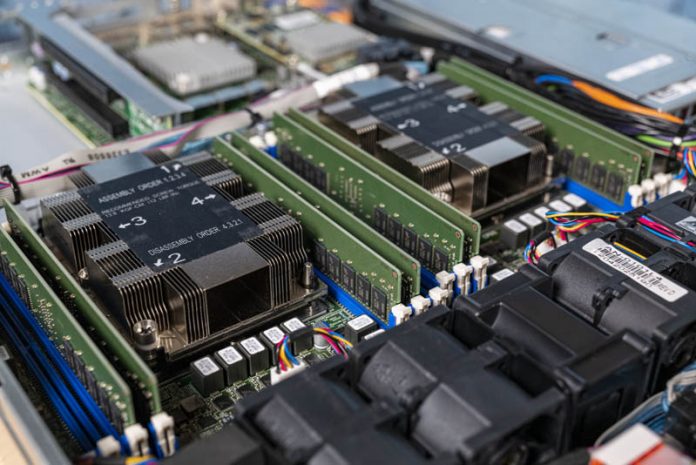

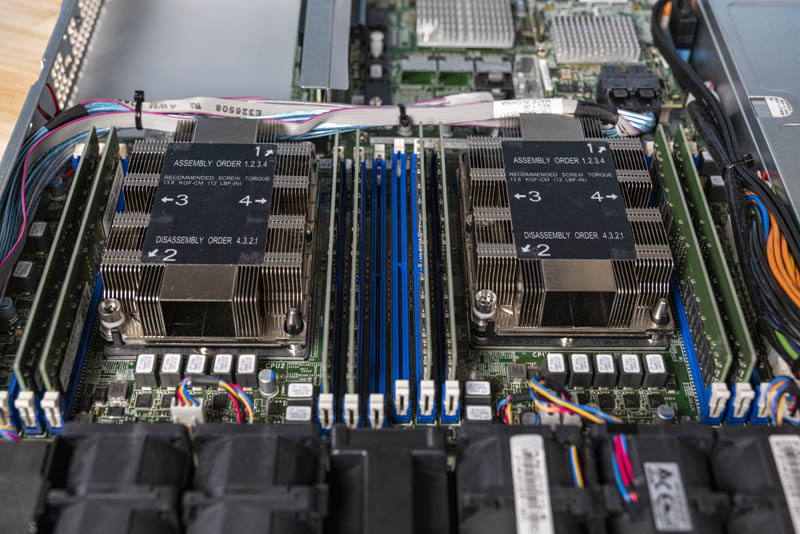

At the heart of the system are two Intel Xeon Scalable processors. Each CPU is flanked by six DDR4 DIMM slots. The system supports Optane DCPMM, which is in the process of being re-branded as Optane PMem 100. Support for PMem 100/DCPMM is up to four modules, or two per socket, in a 1-1-1 configuration. Running in that mode would mean there are two DDR4 modules and one PMem 100/DCPMM on each memory controller. With two memory controllers per CPU, we get four DDR4 DIMMs and two PMem 100/DCPMMs per socket. In theory, one could get up to 2TB of DCPMM (4x 512GB) plus 1TB of DDR4 (using 128GB DDR4 DIMMs) in this system. To hit that level of memory, one will want a Xeon Scalable “L” SKU now that the 2nd Gen Intel Xeon Scalable M SKUs have been discontinued.
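As a quick sanity check on those capacity figures, here is a minimal sketch of the arithmetic. The constant and function names are our own for illustration. The module sizes and the 1-1-1 layout come from the paragraph above, and the 1TB-per-socket ceiling for standard 2nd Gen SKUs is our understanding of Intel's memory tiers rather than something tested in this review.

```python
# Maximum memory sketch for a dual-socket system in 1-1-1 mode.
# Names are hypothetical; capacities are taken from the text above.
SOCKETS = 2
CONTROLLERS_PER_SOCKET = 2
DDR4_PER_CONTROLLER = 2   # two DDR4 DIMMs per memory controller
PMEM_PER_CONTROLLER = 1   # one PMem 100 / DCPMM per memory controller

DDR4_DIMM_GB = 128
PMEM_MODULE_GB = 512

# Assumption: standard (non-M, non-L) 2nd Gen SKUs top out at 1TB per socket.
STANDARD_SKU_LIMIT_GB = 1024


def memory_totals_gb():
    """Return (total DDR4 GB, total PMem GB) for the whole system."""
    controllers = SOCKETS * CONTROLLERS_PER_SOCKET
    ddr4 = controllers * DDR4_PER_CONTROLLER * DDR4_DIMM_GB
    pmem = controllers * PMEM_PER_CONTROLLER * PMEM_MODULE_GB
    return ddr4, pmem


ddr4_gb, pmem_gb = memory_totals_gb()
per_socket_gb = (ddr4_gb + pmem_gb) // SOCKETS

print(f"DDR4: {ddr4_gb} GB, PMem: {pmem_gb} GB")
print(f"Per socket: {per_socket_gb} GB")
print(f"Exceeds standard SKU limit: {per_socket_gb > STANDARD_SKU_LIMIT_GB}")
```

The per-socket total is the number that matters for SKU selection, since the memory capacity tier applies to each CPU rather than to the system as a whole.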

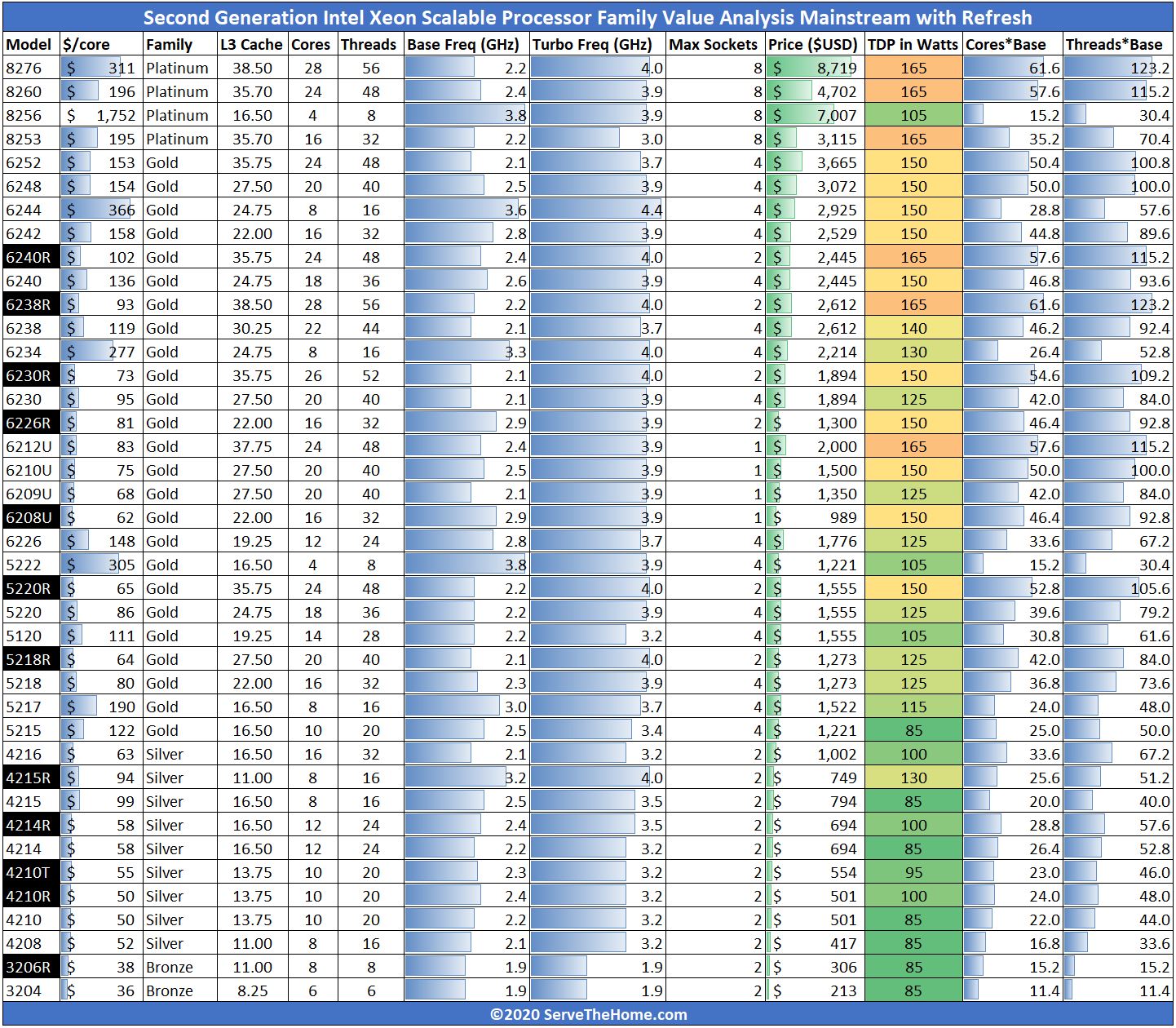

The CPU situation is fascinating. Supermicro makes motherboards that support up to 205W TDP CPUs and that support features including the Intel Skylake Omni-Path Fabric SKUs. Since this is a cost-optimized platform, the SYS-1029P-WTRT is one of Supermicro’s 165W TDP SKU platforms. As a result, it does not support the higher-TDP SKUs in Intel’s stack such as the Intel Xeon Gold 6248R we reviewed and utilized in our Supermicro 2029UZ-TN20R25M. To make this easier for our readers, here are the public 2nd Gen Xeon Scalable SKUs with 165W or lower TDPs:

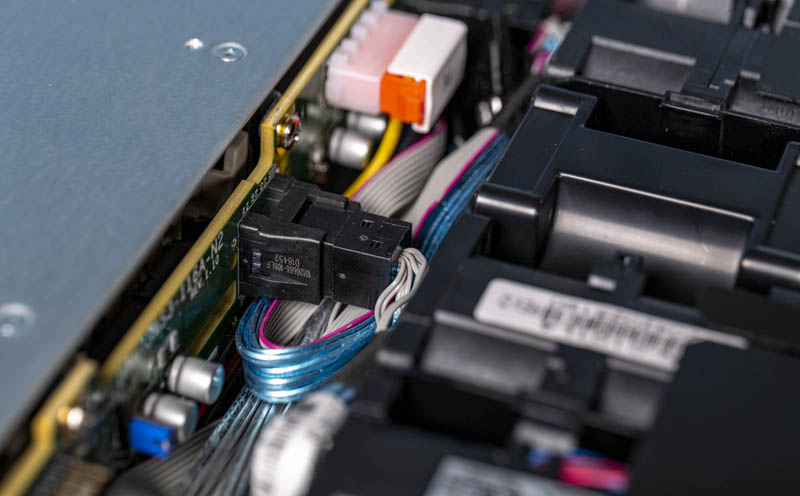

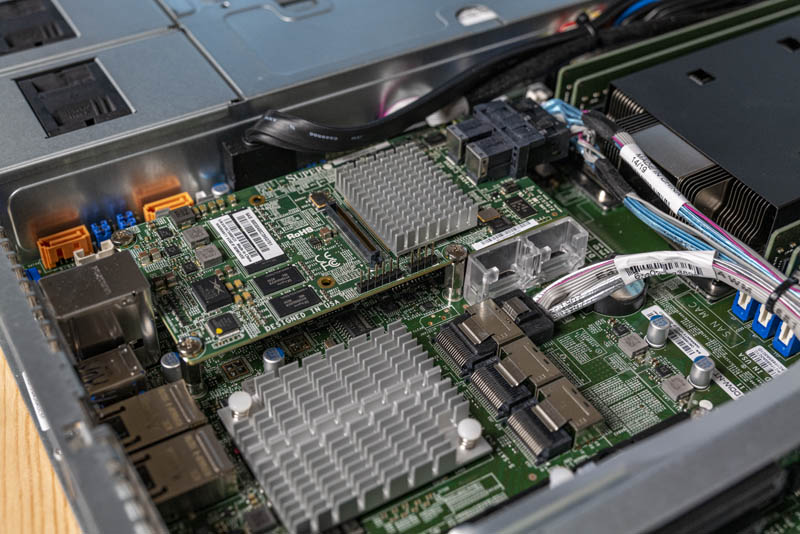

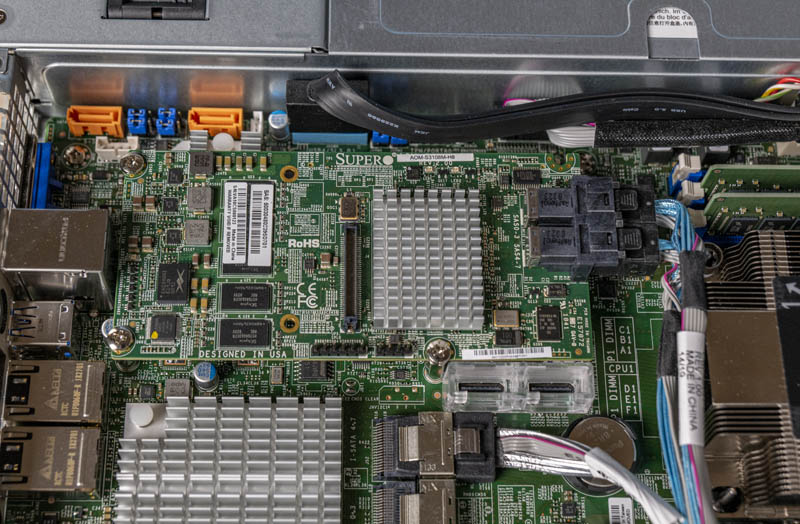
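For those who keep their own SKU lists, the same 165W screen can be sketched in a few lines. The function name is hypothetical, and the short sample below is illustrative only. Its TDP values are from Intel's public specifications as best we recall them, so verify against Intel ARK before relying on them.

```python
# Filter a SKU list against the platform's 165W TDP ceiling.
# The sample is a small illustrative subset, not the full list shown above.
TDP_LIMIT_W = 165

sample_skus = {
    "Xeon Platinum 8280": 205,
    "Xeon Gold 6248R": 205,
    "Xeon Platinum 8260": 165,
    "Xeon Gold 6248": 150,
    "Xeon Gold 6230": 125,
    "Xeon Silver 4210": 85,
}


def supported_skus(skus, limit_w=TDP_LIMIT_W):
    """Return SKU names whose TDP is at or below the limit, sorted by name."""
    return sorted(name for name, tdp in skus.items() if tdp <= limit_w)


for name in supported_skus(sample_skus):
    print(f"{name}: {sample_skus[name]}W")
```

Note that the comparison is inclusive, so a part rated at exactly 165W passes the screen.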

Near the rear of the motherboard, we see the internal I/O and storage section. Here we can see features such as the SFF-8087 ports for front panel SATA connectivity. We can see the Intel C622 PCH as well.

One will also notice the Supermicro AOM-S3108M-H8 mezzanine card. This is a SAS3 12.0Gbps RAID controller built on the LSI/Broadcom SAS3108 controller with eight internal ports.

One can also see the two gold SATA ports that can be used for SATA DOMs and provide power directly to the DOMs without an external cable.

Looking at this area from the opposite angle, we can see Supermicro’s M.2 mounting solution. This solution allows for tool-less installation of M.2 drives. Small M.2 drive screws are prone to getting lost, and simply by being screws, they add a few seconds to every service operation. Supermicro has a retention mechanism that can accommodate different sizes of M.2 drives without needing to change the mounting point from the opposite side of the motherboard.

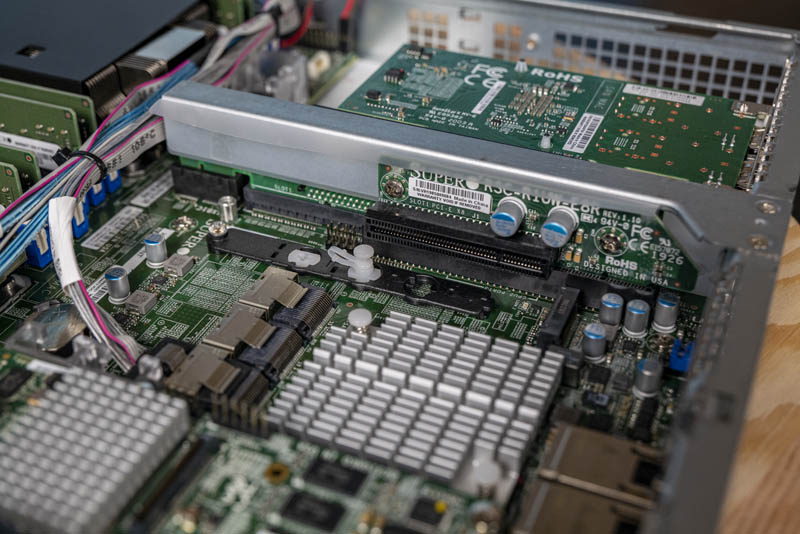

On the PCIe riser in the middle of the chassis, we get a PCIe x8 low-profile slot. This is a common configuration that we see on higher-end Supermicro servers. For example, most of Supermicro’s “Ultra” line of servers have the low-profile PCIe x8 riser in this location.

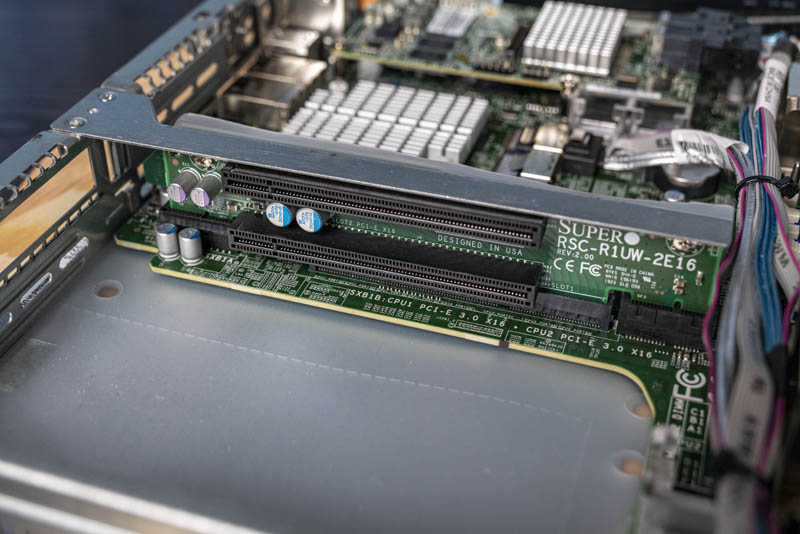

On the other side of the riser are two PCIe x16 slots. Interestingly enough, each slot goes to a different CPU. Supermicro’s WIO design, which is why these servers carry a “W” designation, was an innovation that added more standard PCIe risers to 1U chassis. Instead of a rectangular motherboard where a PCIe card positioned on a riser would interfere with the motherboard PCB, the WIO design means there is enough room for two full-height, half-length PCIe expansion cards in this 1U design.

Here is a view of the riser from a slightly different angle but with a NIC installed.

While there are certainly trade-offs being made for cost optimization, there is a lot of hardware packed into a relatively shallow chassis. We see many 1U servers extend past 30 inches, which is more than 25% deeper than the SYS-1029P-WTRT. A short-depth design helps these servers fit into lower-cost, shorter racks.
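The depth comparison is easy to verify. This is a minimal sketch using the 23.5″ chassis depth quoted earlier and a 30″ comparison point, with variable and function names that are our own.

```python
# Compare chassis depths and convert inches to millimeters.
MM_PER_INCH = 25.4

chassis_depth_in = 23.5     # SYS-1029P-WTRT depth from the text
comparison_depth_in = 30.0  # a typical deeper 1U server


def percent_deeper(other_in, base_in):
    """Return how much deeper `other_in` is than `base_in`, in percent."""
    return (other_in / base_in - 1) * 100


depth_mm = chassis_depth_in * MM_PER_INCH
extra_pct = percent_deeper(comparison_depth_in, chassis_depth_in)

print(f"Chassis depth: {depth_mm:.1f} mm")
print(f"A 30-inch server is {extra_pct:.1f}% deeper")
```

This lines up with both the 597mm figure and the "more than 25% deeper" claim above.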

Next, we are going to take a look at the system topology, management, and our test configuration before moving to benchmarks.