Supermicro BigTwin SYS-2029BZ-HNR Storage Performance

Storage in the Supermicro BigTwin SYS-2029BZ-HNR is differentiated not only by the NVMe bays each node gets in the chassis, but also by support for Intel Optane DC Persistent Memory Modules (DCPMM). We wanted to show what these can do to give a sense of what one can get out of each node.

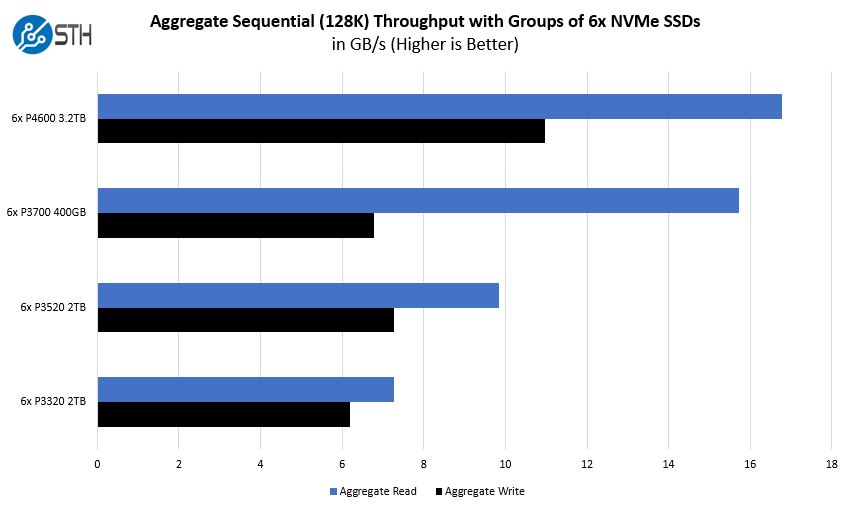

Handling six NVMe SSDs per node means that the system can offer substantial storage performance. For those building hyper-converged clusters, high-capacity NVMe SSDs also give each node significant capacity. For example, one can get 48TB per node using 8TB NVMe SSDs or 96TB per node using 16TB NVMe SSDs. That translates into 192TB and 384TB per four-node chassis, respectively. The Supermicro BigTwin SYS-2029BZ-HNR is designed to cool these SSDs while still allowing for high-power CPUs, Intel Optane DCPMM, and PCIe expansion modules. Some vendors are unable to support 24 NVMe SSDs due to cooling issues, but the BigTwin has no issue with this.
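The capacity figures above are simple multiplication, and a short sketch makes it easy to plug in other drive sizes. The bay and node counts come from the text; the function and constant names are ours, and the numbers are raw capacity before any RAID, replication, or filesystem overhead.

```python
# Raw NVMe capacity for a 2U4N chassis, using the counts quoted in the text.
NODES_PER_CHASSIS = 4    # 2U4N: four nodes per chassis
NVME_BAYS_PER_NODE = 6   # six NVMe bays per node


def raw_capacity_tb(drive_tb, bays=NVME_BAYS_PER_NODE, nodes=NODES_PER_CHASSIS):
    """Return (per-node TB, per-chassis TB) for a given drive size in TB."""
    per_node = drive_tb * bays
    return per_node, per_node * nodes


for drive_tb in (8, 16):
    per_node, per_chassis = raw_capacity_tb(drive_tb)
    print(f"{drive_tb}TB drives: {per_node}TB per node, {per_chassis}TB per chassis")
```

Usable capacity in a hyper-converged cluster will be lower and depends on the replication or erasure coding scheme chosen.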

Supermicro BigTwin SYS-2029BZ-HNR Intel Optane DCPMM Testing

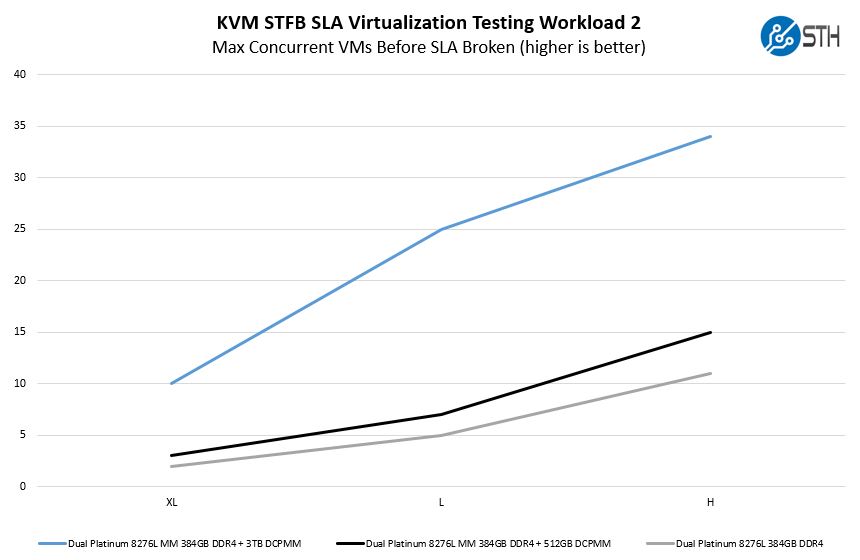

We wanted to test the Supermicro BigTwin SYS-2029BZ-HNR with Intel Optane DCPMM. We are using a workload from one of our DemoEval customers, the same one we used in our Quad Intel Xeon Platinum 8276L Benchmarks and Review. We have permission to publish the results, but the application being tested is closed source. This is a KVM virtualization-based workload where our client is testing how many VMs it can have online at a given time while completing work under the target SLA. Each VM is a self-contained worker.

There is an interesting result here. Even though the workload is mostly CPU bound, we see an uplift because the additional capacity of Intel Optane DCPMM lets each node handle more VMs.

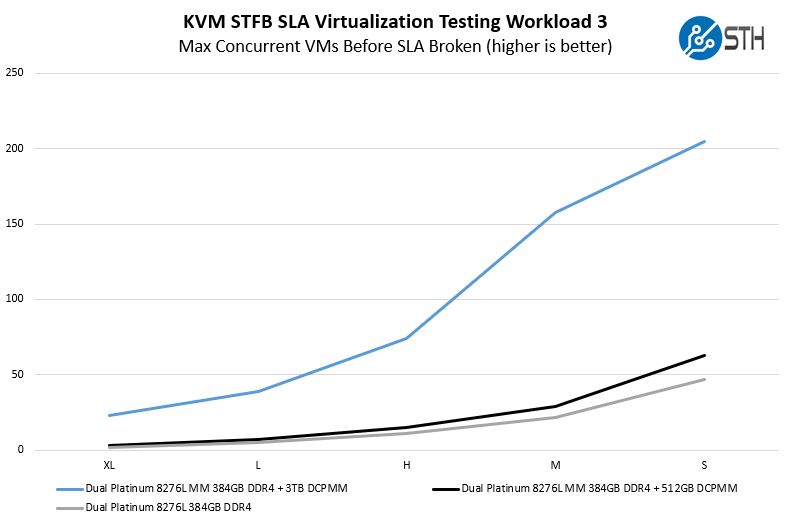

The company also has a CPU-light back-end workload that depends mostly on Redis performance and memory capacity, with much less CPU stress.

This is perhaps a starker example, where Intel Optane DCPMM plus the 28 cores in the Intel Xeon Platinum 8276L are able to handle considerably more VMs than DRAM alone. With the new generation of Intel Xeon Scalable processors and Intel Optane DCPMM, one can get more VM density than with previous generations.

Compute Performance and Power Baselines

One of the biggest areas where manufacturers can differentiate their 2U4N offerings is cooling capacity. As modern processors heat up, they lower clock speeds, which decreases performance. Fans spin faster to cool them, which increases power consumption and affects power supply efficiency.

STH goes to extraordinary lengths to test 2U4N servers in a real-world scenario. You can see our methodology here: How We Test 2U 4-Node System Power Consumption.

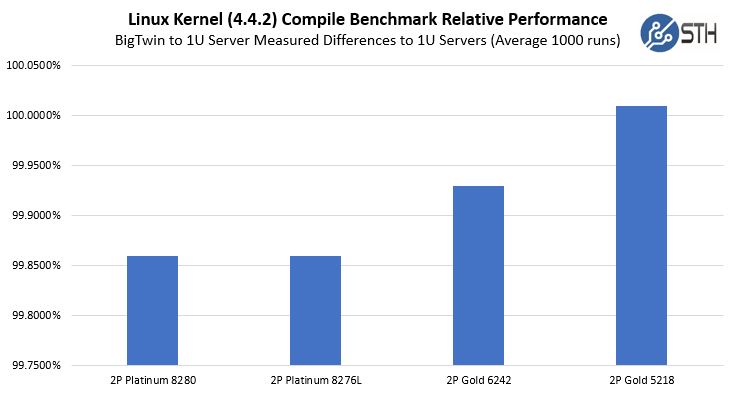

Supermicro BigTwin SYS-2029BZ-HNR Compute Performance to Baseline

We loaded the Supermicro BigTwin SYS-2029BZ-HNR with a few configurations to see how performance compared to our average 1U system performance. We then ran one of our favorite workloads on all four nodes simultaneously for 1400 runs. We threw out the first 100 runs' worth of data and considered the 101st run to be sufficiently heat soaked. The remaining runs keep the machine warm until all systems have completed their runs. We also used the same CPUs in both sets of test systems to remove silicon differences from the comparison.

Please note that the Y-axis on this chart does not start at 0. The differences are below 0.3%, so on a zero-based axis every result would look as though it sits exactly on the 100% marker. The results are close, and the Intel Xeon Gold 5218 configuration actually performed slightly better in the BigTwin than in our 1U servers.
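To make the heat-soak procedure concrete, here is a minimal sketch of how results like these could be reduced to a percent-of-baseline figure. The 100-run warm-up count comes from the text; everything else is an assumption for illustration: the function names, the choice to average all retained runs, the use of run time (lower is better) as the metric, and the sample numbers, which are hypothetical and not STH's measurements.

```python
from statistics import mean

WARMUP_RUNS = 100  # from the text: the first 100 runs are thrown out


def heat_soaked_runs(run_times, warmup=WARMUP_RUNS):
    """Drop the warm-up runs so only heat-soaked results remain."""
    if len(run_times) <= warmup:
        raise ValueError("need more runs than the warm-up count")
    return run_times[warmup:]


def relative_performance(run_times, baseline_time, warmup=WARMUP_RUNS):
    """Percent of baseline performance.

    Assumes the metric is run time in seconds (lower is better), so the
    ratio is baseline / measured. Averaging the retained runs is our
    assumption, not a documented part of the methodology.
    """
    soaked_mean = mean(heat_soaked_runs(run_times, warmup))
    return 100.0 * baseline_time / soaked_mean


# Hypothetical data: 100 fast runs on a cool chassis, then 1300 heat-soaked runs.
node_times = [98.0] * 100 + [100.2] * 1300
baseline_1u = 100.0  # hypothetical average 1U run time, same CPUs

pct = relative_performance(node_times, baseline_1u)
print(f"{pct:.2f}% of 1U baseline")
```

Discarding the warm-up runs matters because a cold chassis lets the CPUs hold higher clocks, which would flatter the dense system relative to its steady-state behavior.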

Next, we will continue with our power consumption testing along with our STH Server Spider and final words.

That is amazing what they can fit into a 2U. Especially considering dual sockets and 24 DIMMS per node. My 4U chassis with SM X11-DPI has two sockets and 16 DIMMS total (and only 12 of those 16 are usable if I want to have 6 channel). Quite amazing. But I’m homelab, so this is way out of my league. Cool though

This is an amazing dual-socket, 4-node server in a 2U rack form factor. The price is very competitive with the giant brands.

My only concern is after-sales support. Once they have it, they will be a leader for this region.