What do you get if you combine a 2U 4-node chassis with NVMe and ample expansion? Supermicro’s incarnation is the BigTwin. Our review of the Supermicro BigTwin has an exciting new twist: we were able to measure the difference in power consumption between 1U and 2U systems and a consolidated 2U chassis. One of the most frequently asked questions we get is whether there is a good 24-bay four node NVMe system on the market. There are a few systems we are bound by NDA not to discuss that will arrive later this year, but for now, the Supermicro BigTwin NVMe may just be the top dog.

Supermicro BigTwin NVMe Hardware Overview

The Supermicro BigTwin NVMe is the company’s next-generation 2U 4-node platform, with a myriad of improvements over its predecessors. We are going to take a quick look at the BigTwin NVMe system hardware, starting with the exterior. The front of the chassis has 24x NVMe SSD slots. These can hold anything from current-generation NAND SSDs to next-generation 3D XPoint / Optane SSDs. You can also see individual node controls and status LEDs on each rack ear.

The extra vents on the front panel were intriguing. Our sense is that they have to do with the fan orientation (more on this soon).

Upon lighting this up in the data center we got a “hey that is cool” from one of our neighbors. The Supermicro BigTwin has some panache! The Supermicro logo lights up. As word spread, we soon had a fairly nerdy crowd gathered to see flair from a usually conservative server manufacturer.

The Supermicro BigTwin does have a feature we are seeing more often on 2U 4-node systems. The chassis top has a middle section so that fans can be serviced without having to pull the chassis entirely out of the rack.

The rear of the chassis features power supplies in the middle with two nodes on either side. These skinny PSUs are capable of 2.2kW of output, making them slender powerhouses. This configuration does have one minor drawback: in some racks the PDU can interfere with access to nodes. This is a simple factor to plan for. One just needs to keep it in mind with any 2U 4-node system, especially those with center PSUs.

Our first node took around 7 minutes to assemble, even with add-on cards. We did a test fitting just to see how adding two low-profile cards would work. Subsequent nodes took us under 4 minutes per node to outfit.

Here is what a minimally configured node looks like. As you can see, the air shroud on this node is relatively small. We have seen some designs where the air shrouds are screwed in, and those take an extra minute or two per node to remove and re-install. This clip-on air shroud was extremely simple.

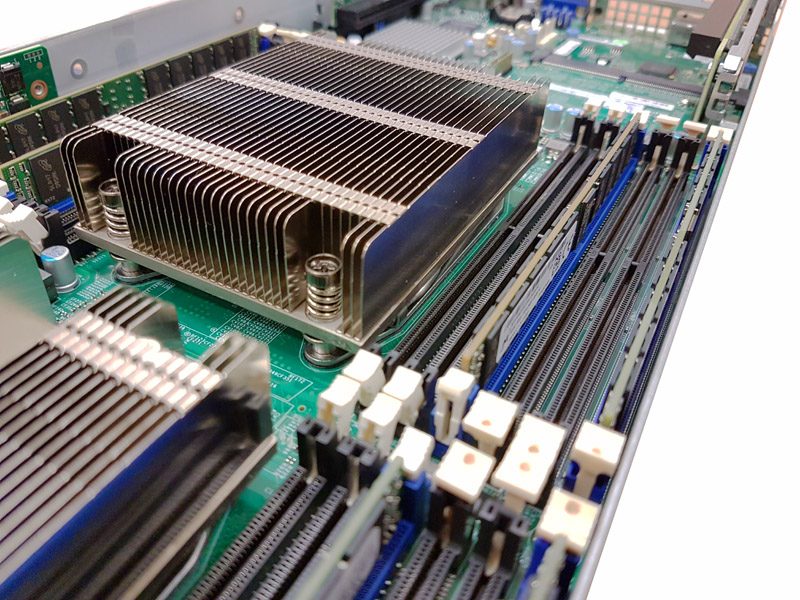

Heatsinks were supplied with the unit as we would expect. One of the other major enhancements here is the 3 DIMMs per channel memory configuration. That allows for up to 12 DDR4 RDIMMs per CPU or 24 per node. Older generations of 2U 4-node systems had either 1 DIMM per channel (8 per node) or 2 DIMMs per channel (16 per node) designs.
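The per-node DIMM counts quoted here follow from simple multiplication. A minimal sketch, assuming four memory channels per CPU (as on the Xeon E5 V3/V4 platform) and two CPUs per node; the function name is purely illustrative:

```python
def dimms_per_node(dimms_per_channel, channels_per_cpu=4, cpus_per_node=2):
    """Return the number of DIMM slots in one dual-socket node.

    channels_per_cpu=4 is an assumption matching Xeon E5 V3/V4 CPUs.
    """
    return dimms_per_channel * channels_per_cpu * cpus_per_node


# Compare 1, 2, and 3 DIMMs per channel (DPC) designs.
for dpc in (1, 2, 3):
    print(f"{dpc} DPC: {dimms_per_node(dpc)} DIMMs per node")
```

Multiplying the 3 DPC result by four nodes gives the DIMM slot count for a fully populated chassis.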

Expansion options are surprisingly plentiful in this chassis. We found a gold SATA DOM slot that allows for SATA DOM installation without a power cable.

There are a total of two PCIe x16 slots. In the NVMe chassis this is important because, with 6x NVMe SSDs per node, there are 24x PCIe lanes dedicated to storage I/O. 2U 4-node designs with only one or two PCIe x8 slots cannot handle enough 100GbE / EDR InfiniBand / Omni-Path fabric I/O to effectively get data onto the network from the six NVMe drives.
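The lane arithmetic behind this point can be sketched roughly as follows. This assumes PCIe 3.0 signaling (8 GT/s per lane with 128b/130b encoding) and four lanes per NVMe drive, which is consistent with 24 lanes for six drives. The figures are theoretical link rates before protocol overhead, not measured throughput, and the names are illustrative:

```python
# Theoretical PCIe 3.0 rate per lane: 8 GT/s with 128b/130b line encoding.
PCIE3_GBPS_PER_LANE = 8 * 128 / 130  # gigabits per second, before protocol overhead


def pcie_gbps(lanes):
    """Return the theoretical PCIe 3.0 link rate in Gbps for a lane count."""
    return lanes * PCIE3_GBPS_PER_LANE


nvme_lanes = 6 * 4  # six drives at x4 each (assumption consistent with 24 lanes)

print(f"Storage side, {nvme_lanes} lanes: {pcie_gbps(nvme_lanes):.0f} Gbps")
print(f"One x8 slot:  {pcie_gbps(8):.0f} Gbps")
print(f"One x16 slot: {pcie_gbps(16):.0f} Gbps")
print(f"Two x16 slots: {pcie_gbps(32):.0f} Gbps")
```

Under these assumptions a single x8 slot falls short of a 100Gbps port, while an x16 slot has headroom for one, which is why the pair of x16 slots matters for draining six NVMe drives.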

Under the second riser is a SIOM slot. That is Supermicro’s proprietary network slot. As of now, the highest speed options are an Omni-Path 100Gbps SIOM or a 2x 25GbE (SFP28) and 2x 10GBASE-T module. Our test server was equipped with a pedestrian dual 1GbE SIOM, so we added our 40GbE adapters via add-on cards.

Finally, we wanted to show off the connectors that are the interface between the chassis and node. Each is labeled with NVMe. Since there can be SAS BigTwin configurations as well, this is an overt and handy reminder of which type of node one is handling.

Supermicro BigTwin Management Software Overview

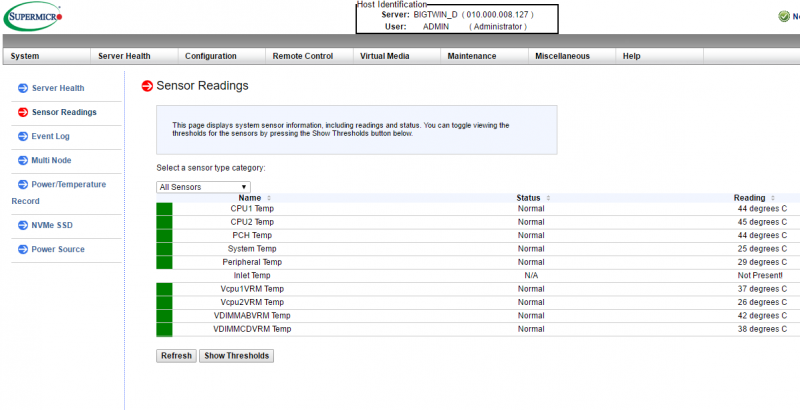

Our Supermicro BigTwin came with something we were not expecting: some higher-level chassis management features via the standard IPMI interface. Most 2U 4-node systems we have tested to date (10 models from 6 vendors) have treated each node separately. They simply shared power and cooling via the chassis. When we logged into the chassis we saw Supermicro’s standard IPMI interface that provides iKVM (HTML5 or Java), sensor readings, authentication settings, event logs, firmware updates, power control, and so on.

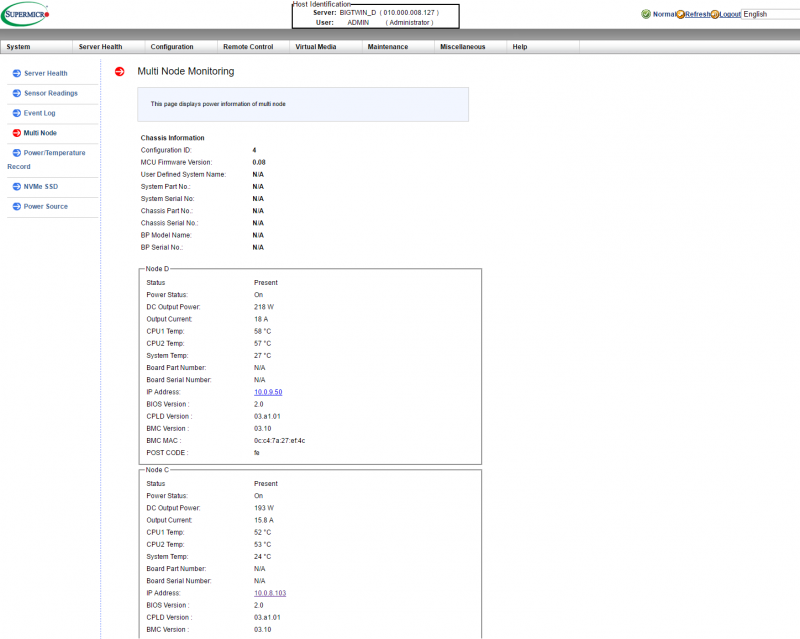

We also noticed a number of new features. One example of this was “Multi Node” which allowed us to see the power draw, post codes, CPU temperatures and several other bits of information.

Perhaps the most useful feature here is that the Multi Node tab showed the IP address and MAC address of each node in the chassis. If you need to quickly jump from one node to another, this is useful. This is also a feature not found in many of our other 2U 4-node systems.

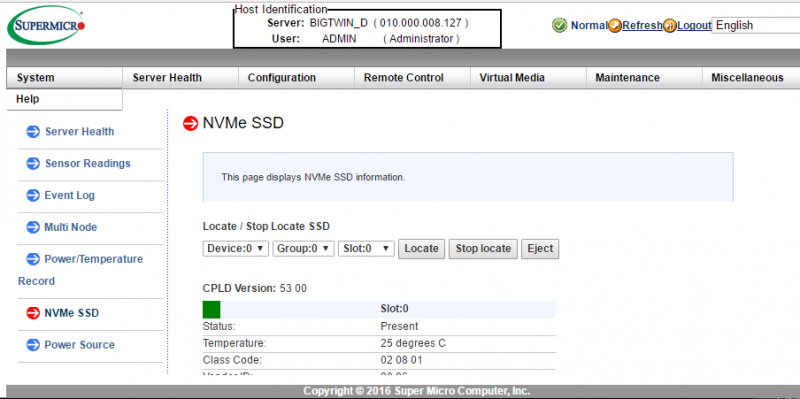

The system also was able to tell us the NVMe SSD information. We could see health status and slots of each NVMe SSD we had in the chassis and even click locate to make the front panel LEDs blink.

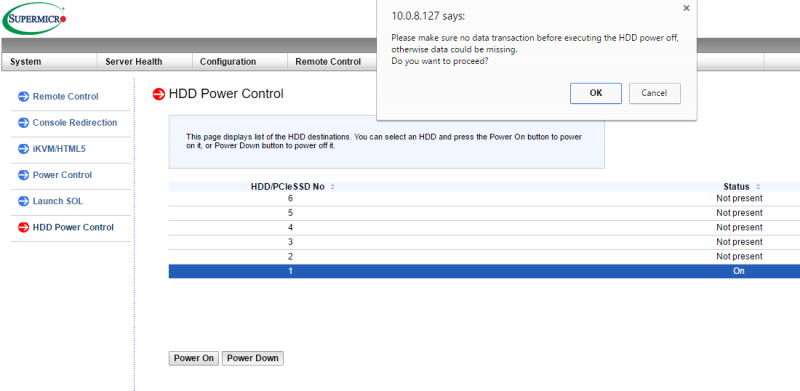

One of the more interesting features is that we were able to power up or power down individual NVMe drives from the web interface. This apparently works with hard drives or SSDs in the non-NVMe version.

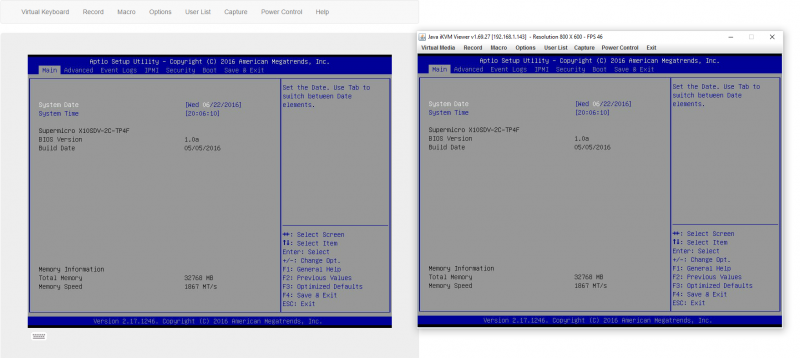

These chassis-level features were available in addition to the standard set of IPMI management features, like the iKVM, that we have come to expect.

Overall, we like the direction this is going and wish some of our lab’s other 2U 4-node systems had this capability.

Power Consumption Comparison

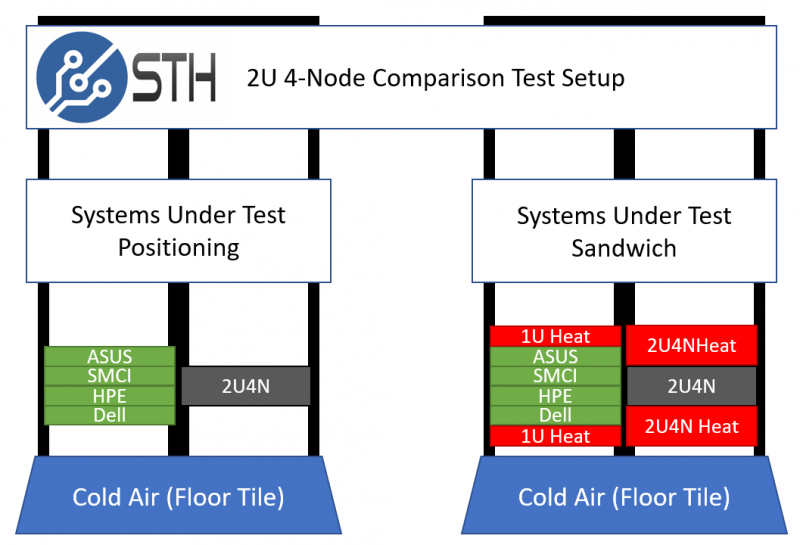

This is going to be one of the first power consumption tests of its kind performed on a 2U 4-node system according to our 2U 4-node power consumption testing methodology. We set up four independent 1U systems in the lab from various vendors. We then take all four sets of CPUs, RAM, Mellanox ConnectX-3 EN Pro dual-port 40GbE adapters, and SATA DOMs and put them into the nodes of the 2U 4-node system we are testing. We then “sandwich” the systems with similar infrastructure to ensure proper heat soak over the 24-hour testing cycle.

We then apply a constant workload, developed in-house, pushing each CPU to around 75%-80% of its maximum power consumption. There are few workloads that hit 100% power consumption (e.g. heavy AVX2 workloads for Haswell-EP and Broadwell-EP), so we believe that this is a more realistic view of what most deployments will see. That gives us a back-to-back comparison of how much more efficient the 2U 4-node layout is. The figures were shocking.

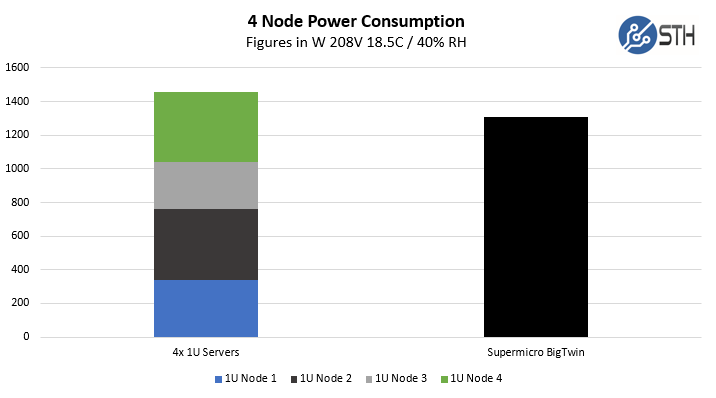

As you can see, we got a power savings of just over 10% by consolidating into a 2U 4-node system. If you are wondering why these platforms are so popular, they cut space by 50% and power consumption by over 10% for the same workloads. In some of our data centers this equates to over $100/month per chassis in operational savings.
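To see how a percentage saving translates into a monthly figure, here is a minimal sketch. All inputs are hypothetical placeholders rather than our measured results: the wattages and the per-kW monthly price are assumptions chosen only to illustrate the arithmetic, and colocation pricing varies widely.

```python
def consolidation_savings(standalone_watts, consolidated_watts, price_per_kw_month):
    """Return (watts saved, percent saved, monthly cost saved).

    price_per_kw_month is a hypothetical colocation-style power price.
    """
    saved_watts = standalone_watts - consolidated_watts
    saved_pct = 100.0 * saved_watts / standalone_watts
    monthly_savings = saved_watts / 1000.0 * price_per_kw_month
    return saved_watts, saved_pct, monthly_savings


def space_savings_pct(units_before, units_after):
    """Return the rack space saved as a percentage."""
    return 100.0 * (units_before - units_after) / units_before


# Hypothetical example: four 1U servers versus one 2U 4-node chassis.
watts, pct, dollars = consolidation_savings(4000.0, 3580.0, 250.0)
print(f"Power saved: {watts:.0f} W ({pct:.1f}%), about ${dollars:.0f}/month")
print(f"Rack space saved: {space_savings_pct(4, 2):.0f}%")
```

Substituting your own measured wattages and facility pricing gives a quick estimate of what the consolidation is worth per chassis.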

Storage Performance: Ceph View

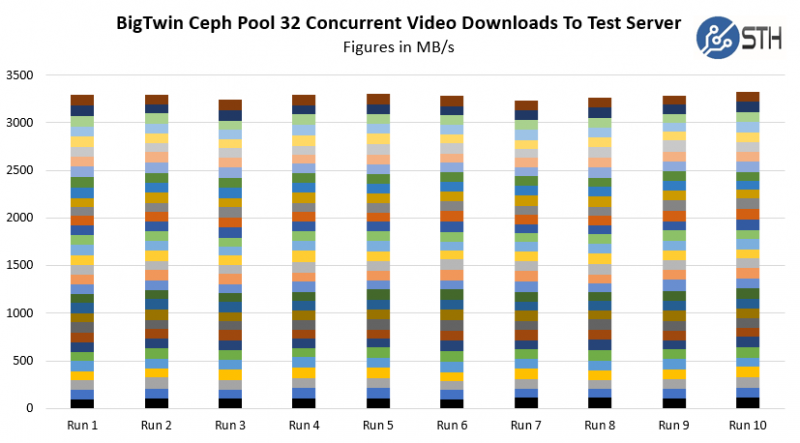

The storage performance testing is something we had to cut short. We got the test set up but were unable to spend time tuning and adding additional load generation nodes. For this test we utilized 24x 800GB Intel DC P3600 SSDs, which are 3 DWPD drives that provide solid read and write performance at a reasonable price. We also swapped the CPUs for Intel Xeon E5-2628L V4 chips, which are a better match for this type of cluster. Our load generation node was a quad Intel Xeon E7-8870 V4 system with a Mellanox ConnectX-3 Pro EN dual-port 40GbE card. All five nodes were wired into our Arista 32-port 40GbE switch using 1m to 3m DACs depending on the distance of the node to the switch.
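For a sense of scale, the raw numbers for this drive set can be worked out as below. This is only a sketch: the replica count of 3 is an assumption (it is Ceph's default pool size, but we are not stating the pool settings used here), and the write figure is the drives' rated endurance, not a measured result.

```python
def cluster_summary(drives, drive_gb, dwpd, replicas):
    """Return (raw TB, usable TB, rated device writes in TB/day).

    replicas is an assumed replication factor, not a setting from the test.
    """
    raw_tb = drives * drive_gb / 1000
    usable_tb = raw_tb / replicas
    rated_writes_tb_per_day = raw_tb * dwpd
    return raw_tb, usable_tb, rated_writes_tb_per_day


raw, usable, writes = cluster_summary(drives=24, drive_gb=800, dwpd=3, replicas=3)
link_gbytes_per_s = 40 / 8  # 40GbE line rate in gigabytes per second

print(f"Raw capacity: {raw:.1f} TB")
print(f"Usable at 3x replication (assumed): {usable:.1f} TB")
print(f"Rated device writes: {writes:.1f} TB/day")
print(f"Single 40GbE link: {link_gbytes_per_s:.1f} GB/s line rate")
```

Note that with replication, each client write lands on several drives, so the client-visible write budget is the rated device figure divided by the replica count.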

Performance-wise, our load generation node had absolutely no issue pulling video files off the BigTwin NVMe Ceph cluster at 40GbE speeds. Due to the short review period and the timing of when we could borrow the Intel DC P3600 SSDs, we were unable to keep running these 24-hour workloads.

CPU Performance Baselines

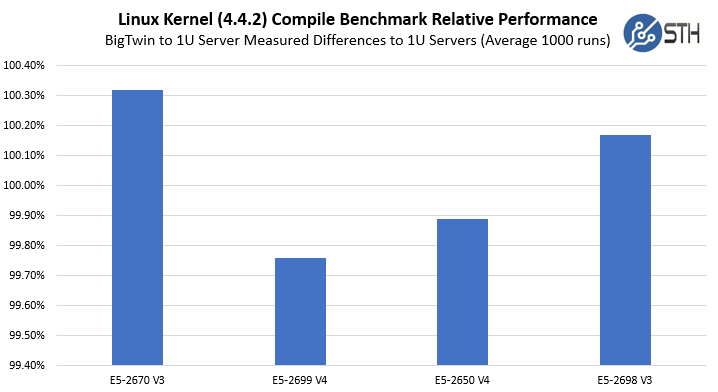

We did run heat-soaked machines through standard benchmarking. One of the more amazing feats of the BigTwin was the ability to handle 24x NVMe drives (up to 600W) while still cooling the CPUs and maintaining performance. We perform these benchmarks in the “sandwich” we use for power consumption tests. Since the dual E5-2699 V4 and E5-2698 V3 systems finish a full day before the E5-2650 V4 system, we immediately spin up our power consumption test load script at the end of the 1000 runs.

This is an example of the four nodes running iterations of our Python-driven Linux Kernel Compile benchmark. As you can see, the four nodes were extremely consistent in terms of performance and consistent with our baseline runs from 1U servers. In early generation 2U 4-node servers we saw CPUs throttle when heat soaked. What we found was that the BigTwin was able to cool the CPUs as effectively as the 1U servers. Running the 4kW+ test load over three days, our test results held within expected benchmark variance, which was the purpose of the test. We did zoom that graph’s Y-axis because seeing sub-0.5% variations on a 0-101% Y-axis was difficult.
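The general shape of this kind of consistency check can be sketched as below. This is not our actual benchmark script: the function names, the placeholder command, and the tolerance are all hypothetical, and a real run would invoke the kernel build in place of the no-op command.

```python
import statistics
import subprocess
import sys
import time


def time_runs(cmd, iterations):
    """Run cmd repeatedly and return the wall-clock seconds of each run."""
    durations = []
    for _ in range(iterations):
        start = time.perf_counter()
        subprocess.run(cmd, check=True,
                       stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL)
        durations.append(time.perf_counter() - start)
    return durations


def spread_pct(values):
    """Return (max - min) as a percentage of the mean."""
    return 100.0 * (max(values) - min(values)) / statistics.mean(values)


def within_variance(node_means, baseline, tolerance_pct):
    """Return True if every node mean is within tolerance_pct of the baseline."""
    return all(abs(m - baseline) / baseline * 100.0 <= tolerance_pct
               for m in node_means)


if __name__ == "__main__":
    # Placeholder command (a no-op Python process) standing in for the build.
    runs = time_runs([sys.executable, "-c", "pass"], iterations=3)
    print(f"Mean: {statistics.mean(runs):.3f} s, spread: {spread_pct(runs):.1f}%")
```

Comparing each node's mean against a 1U baseline with a tight tolerance is one simple way to flag thermal throttling, since a throttled node would drift outside the expected run-to-run variance.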

Final Words

The Supermicro BigTwin is another 2U 4-node play in a market that already has dozens of competitors, each with multiple models just in the Intel Xeon E5 V3/V4 space. With that said, there are some major differentiators. First and foremost, there are 24x NVMe bays with six per node. Some companies (e.g. Intel using a Chenbro-sourced chassis) are using 2U 4-node systems with two NVMe and four SATA/SAS drives per node. Others have stuck with 2.5″ SATA/SAS. Cooling 24x 25W NVMe SSDs (up to 600W total per specs) that heat the air before it is blown over nodes with dual Xeon E5 V3/V4 CPUs, 24 DDR4 RDIMMs, two PCIe x16 low-profile cards and a SIOM networking module is no small feat. At several trade shows, such as Flash Memory Summit last year, we asked why there are so few 2U 2.5″ 4-node NVMe chassis. Many vendors are simply waiting for Skylake-EP to design for 24x NVMe bays. Supermicro is pushing its BigTwin product out for those that need density and performance now. For those companies building hyper-converged solutions, the Supermicro BigTwin is a great solution.

The Linux Kernel Compile Benchmark graph is missing values for the BigTwin system

On Supermicro’s site, the server’s spec lists “2 M.2 Cards support”.

Is there any m.2 slot on the motherboard?

Thanks.

We did not see any on our test system. Supermicro may have a different version of the board or a riser slot. We sent a clarification message on this point and will update with the response.

this is a killer platform, is it shipping yet?

The future of SSD has arrived. The NVMe is the leading non-volatile memory manufacturers focus their shift on creating products based on it and as more people.it’s important to take the time to assess how you will be using your drive in order to avoid major headaches like outages or even data loss.

Did you manage to install FreeBSD or vSphere 6.x on this system? In Linux, were the NVMe drives detected as a JBOD config?

I wonder about the possibilities for this server as a vSphere + VSAN hyperconverged system.

The NVMe in this will all be a JBOD configuration. The drives show up as NVMe devices.

Are the drives able to be shared between the nodes? Something like this, but in a 2-node configuration would be awesome for a self-contained HA ESX setup, or as a 4 node with 3 nodes as ESX hosts and the 4th providing NFS/iscsi storage for the other 3 if they can’t be shared. Out of my price range though…

1) Can you show what is the base(barebone) cost for 1 system with 4 nodes ( no storage, no memory, no cpu)

2) What CPUs does this unit support & what is the cost for each?

3) Storage supported & cost ?

4) the same with memory?

Thanks.

PS: Does any company have these for AMD EPYC CPU?

if they do can you post the above answer for them?

Please & Thanks.

Great questions for a vendor or the STH forums.

Trick question on the EPYC part. As of 2017-07-25 vendors are still not shipping EPYC.