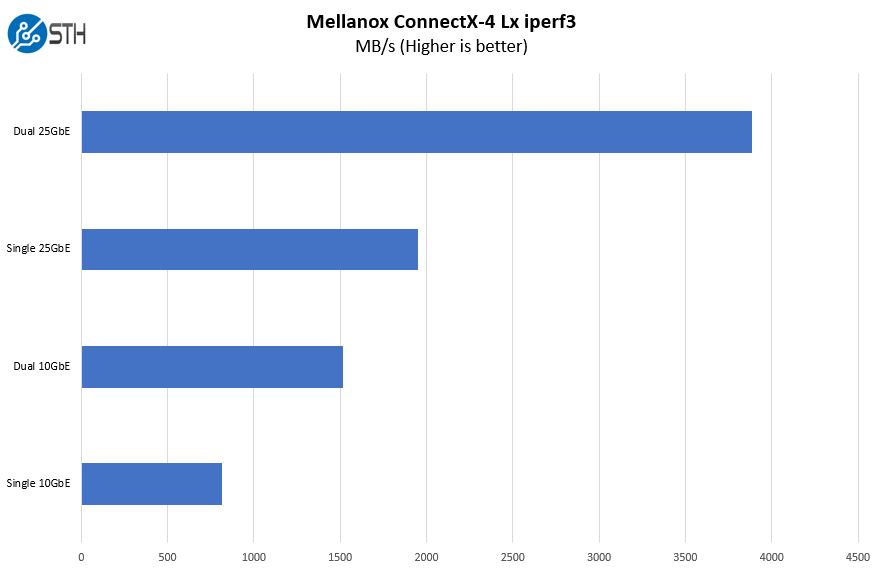

Supermicro 2029UZ-TN20R25M Network Performance

If you want 25GbE, one can see that the throughput advantage is enormous.

To achieve this, 25GbE uses higher signaling rates, which also lowers latency. By utilizing the onboard Mellanox ConnectX-4 Lx, the SYS-2029UZ-TN20R25M delivers performance well beyond previous-generation 10GbE solutions. If you are looking for a generational upgrade, 25GbE is significantly faster while offering backward compatibility with 10GbE networks.
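To put the signaling-rate point in numbers, here is a rough sketch using the IEEE 802.3 line rates (10.3125 GBd for 10GbE and 25.78125 GBd for 25GbE, both with 64b/66b encoding). It computes only serialization delay, the time needed to clock one frame onto the wire. The 1538-byte on-wire frame (a 1500-byte payload plus framing and inter-frame gap) is an assumption chosen for illustration, and serialization is just one component of end-to-end latency alongside NIC, switch, and software overhead.

```python
# IEEE 802.3 line signaling rates in GBd; both use 64b/66b encoding.
SIGNALING_GBD = {"10GbE": 10.3125, "25GbE": 25.78125}

# Assumed frame: 1500-byte payload plus 38 bytes on the wire
# (preamble/SFD 8 + header 14 + FCS 4 + inter-frame gap 12).
FRAME_BYTES = 1500 + 38


def data_rate_gbps(baud_gbd: float) -> float:
    """Usable data rate after 64b/66b encoding overhead."""
    return baud_gbd * 64 / 66


def serialization_delay_ns(frame_bytes: int, rate_gbps: float) -> float:
    """Time to clock one frame onto the wire; 1 Gb/s equals 1 bit per ns."""
    return frame_bytes * 8 / rate_gbps


for name, baud in SIGNALING_GBD.items():
    rate = data_rate_gbps(baud)
    delay = serialization_delay_ns(FRAME_BYTES, rate)
    print(f"{name}: {baud} GBd -> {rate:.1f} Gb/s, {delay:.1f} ns per frame")
```

Each frame spends about 2.5x less time on the wire at 25GbE. How much that matters for application latency depends on how much of the total budget serialization represents.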

Supermicro 2029UZ-TN20R25M Power Consumption

Our Supermicro SYS-2029UZ-TN20R25M has redundant 80Plus Titanium 1.6kW power supplies that provide 96%+ efficiency levels. These are very high-efficiency power supplies meant to deliver power with minimal loss.

With that, and the Intel Xeon Gold 6248R processors, we saw relatively modest power consumption figures for this class of machine:

- Idle: 0.16kW

- STH 70% CPU Load: 0.58kW

- 100% Load: 0.70kW

- Maximum Recorded: 0.74kW
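As a back-of-the-envelope check on the "minimal loss" point, the sketch below converts the measured wall figures into estimated PSU losses and annual energy. It assumes a flat 96% efficiency at every load point, which is a simplification: real 80Plus Titanium efficiency varies with load, and a redundant pair sharing 0.70kW leaves each 1.6kW supply running well below its rating. The "annual" figure also assumes the load is held constant all year.

```python
# Measured wall (input) power from the review, in kW.
measured_kw = {
    "Idle": 0.16,
    "STH 70% CPU Load": 0.58,
    "100% Load": 0.70,
    "Maximum Recorded": 0.74,
}

# Assumption: flat 96% PSU efficiency at every load point (rough estimate only).
EFFICIENCY = 0.96
HOURS_PER_YEAR = 24 * 365


def psu_loss_w(input_kw: float, efficiency: float = EFFICIENCY) -> float:
    """Watts dissipated in the PSUs: input power minus delivered DC power."""
    return input_kw * 1000 * (1 - efficiency)


def annual_kwh(input_kw: float) -> float:
    """Energy drawn over a year at a constant load, in kWh."""
    return input_kw * HOURS_PER_YEAR


for label, kw in measured_kw.items():
    print(f"{label}: ~{psu_loss_w(kw):.0f} W lost in PSUs, "
          f"{annual_kwh(kw):,.0f} kWh/year if sustained")
```

Even at the 0.74kW maximum, the estimated conversion loss stays around 30W under this assumption.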

The Supermicro SYS-2029UZ-TN20R25M runs on the higher-efficiency side compared to many other solutions. This is partly due to the cooling design and partly due to the power supplies used. Again, these features come standard in the server, and Supermicro is not charging a premium of a few percent for them.

Note that these results were measured using a 208V Schneider Electric / APC PDU at 17.5C and 71% RH. During the testing window shown here, the variance was ±0.3C and ±2% RH.

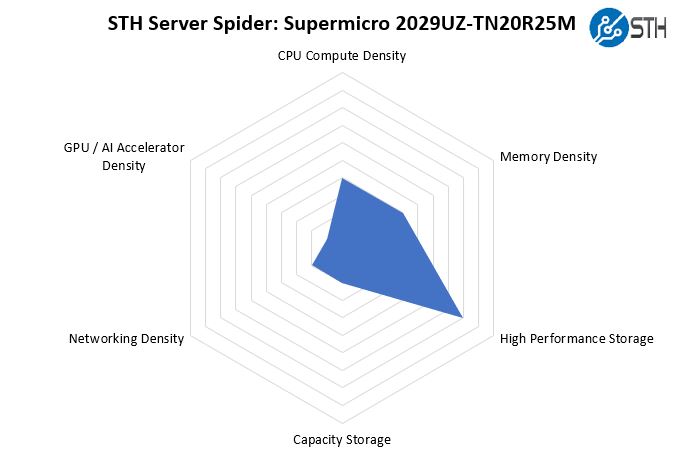

STH Server Spider: Supermicro 2029UZ-TN20R25M

In the second half of 2018, we introduced the STH Server Spider as a quick reference to where a server system's aptitude lies. Our goal is to give a quick visual depiction of the types of parameters a server is targeted at.

This server is primarily focused on delivering high-performance NVMe storage. Only 1U servers using next-generation form factors are going to be denser. Achieving this goal means there are limited other expansion options, but that is exactly the point of this 2U server, so the trade-off makes a lot of sense.

Final Words

The Supermicro 2029UZ-TN20R25M is a server with a purpose. It is designed for those who need fast access to NVMe storage. With a balanced 10x NVMe data SSDs per CPU, for 20 total, there is a lot of potential bandwidth, and the unit excelled in our 5th SSD testing. This topology also clearly defines different PCIe roots for the SSDs on each CPU, which can help push data through the Intel Xeon Scalable on-chip mesh faster. That is exactly the point of this server: providing direct-attach NVMe SSDs.
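The per-CPU lane budget behind the "10x NVMe per CPU" layout can be sketched as follows. This assumes each NVMe SSD uses an x4 link and that each 2nd Gen Xeon Scalable socket exposes 48 PCIe 3.0 lanes. It also ignores any lanes consumed by onboard devices. Bandwidth figures are theoretical per-direction link ceilings (8 GT/s per lane with 128b/130b encoding), not measured drive throughput.

```python
# PCIe 3.0: 8 GT/s per lane with 128b/130b encoding -> GB/s per direction.
GEN3_GBS_PER_LANE = 8 * 128 / 130 / 8   # ~0.985 GB/s
LANES_PER_SSD = 4    # assumption: each NVMe SSD uses an x4 link
SSDS_PER_CPU = 10    # from the review: 10 data SSDs per CPU
CPU_LANES = 48       # PCIe 3.0 lanes per 2nd Gen Xeon Scalable socket


def link_gbs(lanes: int) -> float:
    """Theoretical one-direction bandwidth of a PCIe 3.0 link in GB/s."""
    return lanes * GEN3_GBS_PER_LANE


ssd_lanes = SSDS_PER_CPU * LANES_PER_SSD
print(f"SSD lanes per CPU: {ssd_lanes} of {CPU_LANES} "
      f"({CPU_LANES - ssd_lanes} left for everything else)")
print(f"Raw SSD link bandwidth per CPU: {link_gbs(ssd_lanes):.1f} GB/s")
print(f"An x16 uplink tops out at {link_gbs(16):.2f} GB/s, "
      f"i.e. {16 // LANES_PER_SSD} x4 SSDs' worth")
```

Under these assumptions, four x4 SSDs already fill an x16 link. A fifth drive is therefore the point where an x16-limited design runs out of headroom, while direct attach gives each drive its own lanes.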

Adding 25GbE means that this server is essentially ready to go for most of its deployment scenarios. Instead of customers needing to cobble together a solution, Supermicro offers what is essentially the highest-end 25GbE dual Intel Xeon Scalable NVMe 2U storage server on the market that does not use PCIe switches. This singular design focus also means the system is less complex than other models we have seen. Since it is based on the 2U Supermicro Ultra platform, it will be very familiar to customers who already use Xeon- or EPYC-based Ultra servers elsewhere in their infrastructure.

Overall, this unit performed very well for us. If you have wanted an Intel Xeon platform with the ability to use 205W TDP CPUs and Optane DCPMM along with directly attached NVMe drives, then this is a very strong option.

It seems like an awful lot of storage bandwidth to the processor with very little bandwidth out to the network. Maybe for a database where the compute is local to the storage, but I don't see the balance if you are serving the storage out over the network.
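One rough way to quantify that imbalance is to compare the theoretical PCIe 3.0 link capacity of the drives with the onboard network capacity. This sketch assumes an x4 link per NVMe SSD and two 25GbE ports on the onboard NIC. Both figures are link ceilings, not achievable throughput: real SSDs, protocol overhead, and software will all land lower.

```python
# PCIe 3.0 per-lane bandwidth in GB/s (8 GT/s, 128b/130b encoding).
GEN3_GBS_PER_LANE = 128 / 130
TOTAL_SSDS = 20
LANES_PER_SSD = 4   # assumption: x4 link per NVMe SSD
NIC_PORTS = 2       # assumption: two onboard 25GbE ports
PORT_GBITS = 25

storage_gbs = TOTAL_SSDS * LANES_PER_SSD * GEN3_GBS_PER_LANE
network_gbs = NIC_PORTS * PORT_GBITS / 8   # Gb/s -> GB/s

print(f"Storage link ceiling: {storage_gbs:.1f} GB/s")
print(f"Network ceiling:      {network_gbs:.2f} GB/s")
print(f"Ratio:                {storage_gbs / network_gbs:.1f}x")
```

Under these assumptions the drive links can move roughly an order of magnitude more data than the NIC, which is consistent with the point that the balance favors compute running locally against the storage.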

It is a shame that Supermicro doesn't offer that chassis/backplane in a single AMD EPYC CPU configuration. That combination of 20 NVMe + 4 SATA/SAS drives would be ideal while costing a whole lot less with a single EPYC CPU, and it would leave plenty of available PCIe lanes to support 100+Gbps network adapter(s) without breaking a sweat.
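The lane arithmetic behind that suggestion can be sketched as follows. This assumes a single-socket EPYC exposing 128 PCIe lanes, an x4 link per NVMe SSD, and an x16 slot per 100GbE-class adapter. It ignores the SATA/SAS bays, the BMC, and other onboard devices, all of which would consume part of the remainder on a real board.

```python
EPYC_LANES = 128      # PCIe lanes exposed by a single-socket EPYC
NVME_DRIVES = 20
LANES_PER_NVME = 4    # assumption: x4 link per NVMe SSD
NIC_LANES = 16        # assumption: one x16 slot per 100GbE-class adapter


def lanes_left(total: int, drives: int, per_drive: int) -> int:
    """Lanes remaining after every NVMe drive gets its own link."""
    return total - drives * per_drive


remaining = lanes_left(EPYC_LANES, NVME_DRIVES, LANES_PER_NVME)
print(f"Lanes left after NVMe: {remaining}")
print(f"x16 NIC slots that would fit: {remaining // NIC_LANES}")
```

On paper that leaves room for several x16 adapters. Actual availability depends on how a given motherboard allocates those lanes.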

Suggestion for the workload graph, please: dots and dashes.

It’s tough to see what colour belongs with which processor graph line.

Thanks.

So just to confirm: this is one of the few servers that can directly support 20 PCIe drives, and then you installed 20 NVMe drives and didn't show their bandwidth or IOPS capabilities?

I apologize for dragging in Linus Sebastian of Linus Tech Tips fame because he is a bit of a buffoon, but he might have hit a genuine issue here:

https://www.youtube.com/watch?v=xWjOh0Ph8uM

Essentially, when all 24 NVMe drives are under heavy load, IO problems seem to crop up. Apparently, even 24-core EPYC Rome chips cannot cope, and he claims the problem is widespread, showing Google search results as proof.

I would like to hear from the more knowledgeable people that frequent STH. Any comments?

Good article, Patrick. However, with regard to the IPMI password, wouldn't it be possible to change it back to the old ADMIN/ADMIN username/password using ipmitool from the OS running on the server?

Philip – what about a bar chart?

Henry Hill – 100% true. With the whole lockdown, we had to use the Intel DC P3320 SSDs. Look for reviews of retail versions like the ones we have. We have some of the only P3320s in existence. They would not do a great job of showing off the capabilities of this machine. Instead, we focused on a simpler-to-illustrate use case: crossing the PCIe Gen3 x16 backhaul limit.

Nickolay – check out the AMD/Microsoft/Quanta/Broadcom/Kioxia PCIe Gen4 SSD discussion. They were getting over 25GB/s using just 4 drives per Rome system. At these speeds, software and architecture become very important.

Oliver – there are new password requirements as well. More on this over the weekend.

Patrick,

Catching up late: can you post a link to that AMD/… / Kioxia PCIe Gen4 SSD discussion? Thanks.

Hi BinkyTo, here it is: https://www.servethehome.com/kioxia-cd6-and-cm6-pcie-gen4-ssds-hit-6-7gbps-read-speeds-each/

What about storage class memory?

Hi,

May I ask how it is possible to create a RAID5/ array with ESXi or Windows on bare metal?