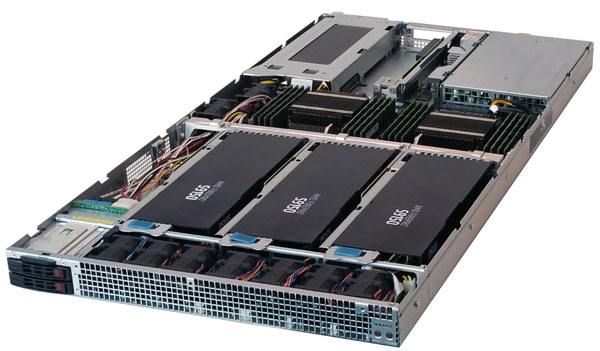

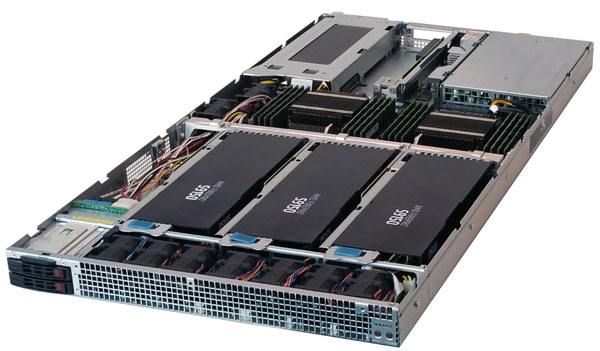

At the beginning of August 2015 we posted a news story about Supermicro releasing its new line of GPU/Xeon Phi SuperServers, the 1028GQ-TRT and 4028GQ-TRT to be more precise (Supermicro X10 GPU/Xeon Phi SuperServer Solutions Overview). Today in the lab we will be taking a look at the 1U 4x GPU/Xeon Phi 1028GQ-TRT. This 1U system packs four GPUs and two Xeon E5 V3 processors, making it a higher-density compute option.

Supermicro 1028GQ-TRT Key Features

- Dual socket R3 (LGA 2011) supports Intel Xeon processor E5-2600 v3 family; QPI up to 9.6GT/s

- Up to 1TB ECC, up to DDR4 2133MHz; 16x DIMM slots

- 4x PCIe 3.0 x16 slots (supports 4x GPU/Xeon Phi cards), 2x PCIe 3.0 x8 (in x16) LP slots

- Dual port 10GbE LAN

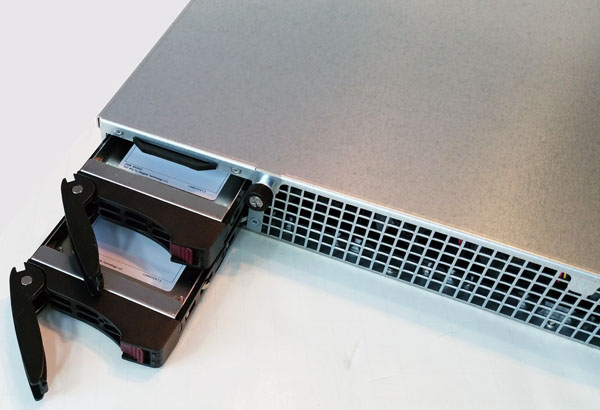

- 2x 2.5″ hot-swap drive bays, 2x 2.5″ internal drive bays

- 9x 4cm heavy duty counter-rotating fans with air shroud & optimal fan speed control

- 2000W redundant power supplies (Platinum Level 94%+)

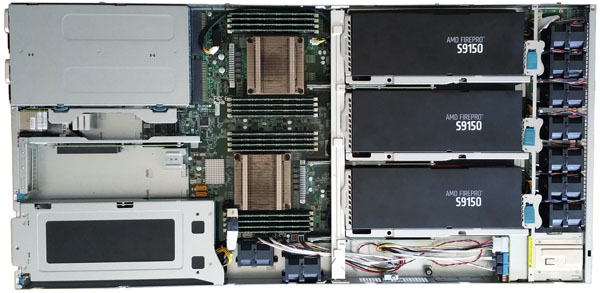

The 1028GQ-TRT connects the GPUs directly to the CPUs through 4x PCIe 3.0 x16 slots to achieve low latency and reduce power consumption. This system supports up to four Nvidia Tesla cards, including K80 dual-GPU accelerators up to 300 watts, AMD FirePro server GPUs, or Intel Xeon Phi cards. Installing GPUs is simple, and no complex cabling or repeaters are required.
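The claim that the GPUs connect directly to the CPUs, with no PCIe switches in between, can be sanity-checked with lane arithmetic. The sketch below is a back-of-the-envelope check, assuming 40 PCIe 3.0 lanes per Xeon E5-2600 v3 socket and counting only the six slots from the spec list; how onboard devices such as the 10GbE controller are wired is not modeled.

```python
# Back-of-the-envelope PCIe lane budget for the 1028GQ-TRT slot layout.
# Assumption: 40 PCIe 3.0 lanes per Xeon E5-2600 v3 socket.
# Onboard devices (10GbE controller, BMC) are deliberately left out.
LANES_PER_SOCKET = 40
SOCKETS = 2

# Electrical width of each slot; the two LP slots are x8 in an x16 connector.
slots = {
    "GPU1": 16, "GPU2": 16, "GPU3": 16, "GPU4": 16,
    "LP1": 8, "LP2": 8,
}


def lane_budget(slots, lanes_per_socket=LANES_PER_SOCKET, sockets=SOCKETS):
    """Return (lanes used by the slots, lanes provided by the CPUs)."""
    used = sum(slots.values())
    available = lanes_per_socket * sockets
    return used, available


used, available = lane_budget(slots)
print(f"slot lanes: {used}, CPU lanes: {available}")
```

Under these assumptions the slots consume exactly the 80 lanes two sockets provide, which is consistent with a switchless, CPU-attached layout.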

Cooling fans at the front of the server pull air directly from outside the chassis to keep the GPUs cool and running smoothly. Storage provisions include two front 2.5” hot-swap bays and two 2.5” internal bays. Networking is handled by 2x 10GbE LAN ports and a remote management port, and two PCIe 3.0 x8 (in x16) low-profile slots at the back of the server are available for expansion cards.

Supermicro 1028GQ-TRT hardware

Here we are looking at the front of the 1028GQ-TRT, which is dominated by airflow openings that provide cooling for the GPUs and CPUs. On the left side are the 2x 2.5” hot-swap storage bays, and on the far right are the power and reset buttons.

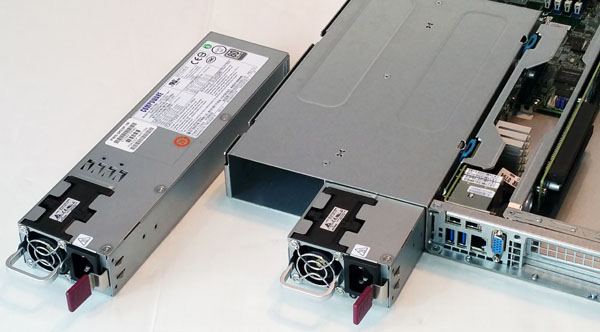

At the back of the server we find the 2x 2000 watt redundant power supplies, the 2x 10GbE (10GBase-T) ports and remote management port, and 2x USB 3.0 ports right next to those. A VGA output sits next to these ports as well. 10GBase-T allows for flexibility when integrating into existing Cat6/Cat6a infrastructure at either 1GbE or 10GbE speeds.

Next are 2x PCIe 3.0 x8 (in x16) low-profile slots and the first GPU cooling vent.

At the front left of the server we find the 2x 2.5” hot-swap drive bays, directly behind these are the 2x 2.5” internal drive bays.

The redundant 2000 watt (1+1) platinum level high-efficiency power supplies are simple to remove and install.

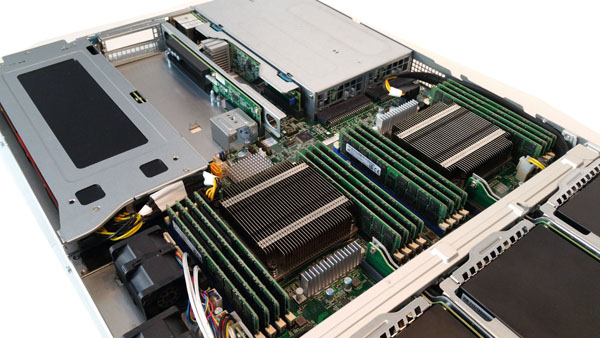

Taking the top lid off the server gives us our first look inside. The front has 14x 4cm heavy duty counter-rotating fans that provide the bulk of the server's cooling, and they feed air directly into the front GPUs. Right behind the fans we find three of the four GPUs housed in this server. On the left side we see the two internal 2.5” drive bays located right behind the two front bays.

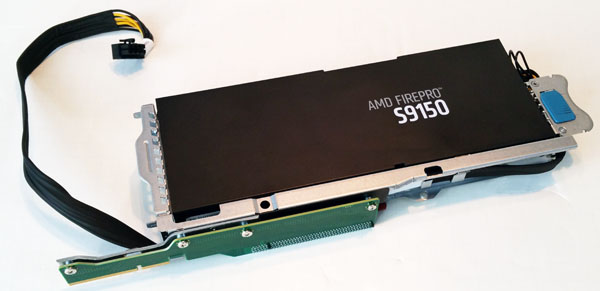

Each GPU is secured by a locking frame: push the blue slider to unlock it and simply pull the GPU out. A locking bar that fits just behind the GPUs also secures them in place. At the rear of the frame is a 90 degree PCIe adapter that connects directly to the motherboard. Power is provided by a short cable that connects to the motherboard right behind the GPU, so no cable extensions are needed to reach the PSUs at the back of the server. This helps airflow and keeps everything nice and tidy inside.

Here we get a closer look at the GPU module and hold-down frame. The one shown is for GPU #4 on the far right of the server. It has the longest power cable, which is still plugged into the motherboard. The power cables for the other three GPUs are about one third the length of this one.

Directly behind the front GPUs we find the CPU/RAM area, with the first GPU on the left. In the middle we can see the additional low-profile PCIe expansion slots.

Cooling for the first GPU is provided by an additional 4x 4cm heavy duty counter-rotating fans. The airflow path runs through the drive bay area, so the air is not preheated to any great extent.

A top-down view shows the complete system layout, making it clear how air flows through the server and how the power connections are laid out. Cabling is very clean, with nothing obstructing airflow.

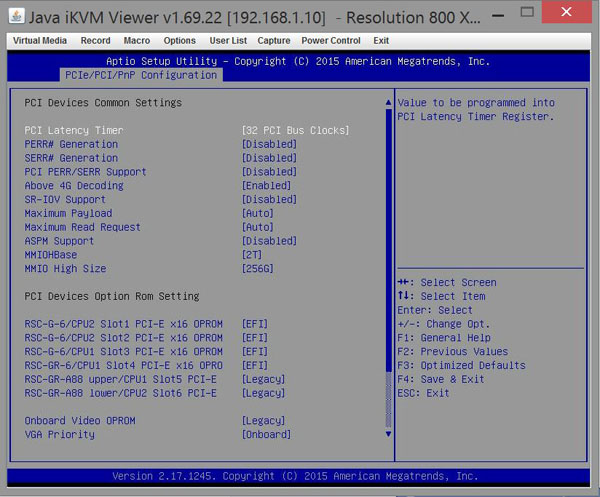

Supermicro BIOS

The Supermicro BIOS on this machine is based on the American Megatrends, Inc (AMI) BIOS.

Looking at the BIOS, we can see how it reports the PCIe slots. Each of the four slots used for the GPUs is PCIe 3.0 x16, and the two expansion slots are PCIe 3.0 x8 (in x16).

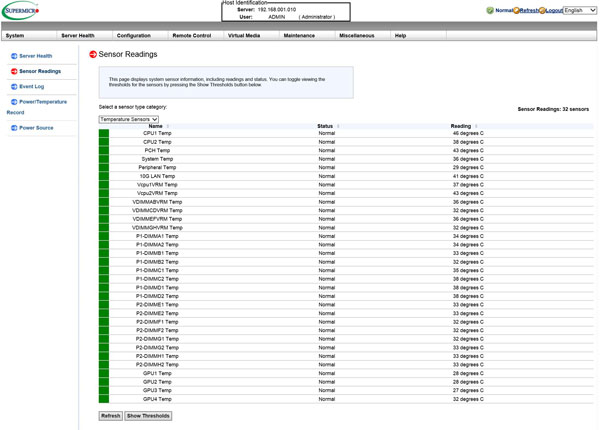

Supermicro Remote Management

For remote management, simply enter the server's IP address into your browser and log in, or use a tool such as IPMIView or ipmitool.

The default login information for the Supermicro 1028GQ-TRT is:

- Username: ADMIN

- Password: ADMIN

As a best practice, administrators should change the factory default username and password before connecting any new server to their network.
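Changing the factory login can also be scripted. The Python sketch below only builds an ipmitool command line; it assumes IPMI-over-LAN access through the `lanplus` interface and that the factory ADMIN account is user ID 2, which is common on Supermicro BMCs but should be confirmed with `ipmitool user list` first. The host address and new password are placeholders.

```python
import shlex


def change_password_cmd(host, new_password, user_id=2,
                        login_user="ADMIN", login_password="ADMIN"):
    """Build an ipmitool argv that sets a new password for a BMC user.

    Assumptions: IPMI-over-LAN (lanplus) is reachable, and the factory
    ADMIN account is user ID 2 (verify with `ipmitool user list`).
    """
    return [
        "ipmitool", "-I", "lanplus", "-H", host,
        "-U", login_user, "-P", login_password,
        "user", "set", "password", str(user_id), new_password,
    ]


# Placeholder host (documentation address range) and placeholder password.
cmd = change_password_cmd("192.0.2.10", "NewStrongPass")
print(shlex.join(cmd))
```

The list form can be passed straight to `subprocess.run(cmd, check=True)`, which avoids shell quoting problems with special characters in the new password.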

We were able to run our tests entirely through the onboard iKVM. Remote management reports the temperature of each CPU and GPU, along with all the other aspects that are typical of Supermicro remote management solutions. One can monitor various sensors, including CPU, DIMM, and GPU temperatures, through the web interface, monitoring scripts, or even the IPMIView Android/iOS app.
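For the monitoring-script route, a minimal sketch that parses the output of `ipmitool sdr type Temperature` is shown below. It assumes the usual five pipe-separated columns (name, sensor ID, status, entity, reading); the sample text and sensor names are made up for illustration and will differ from what a real 1028GQ-TRT BMC reports.

```python
import re

# Made-up sample in the five-column layout ipmitool typically prints.
SAMPLE = """\
CPU1 Temp        | 01h | ok  |  3.1 | 45 degrees C
CPU2 Temp        | 02h | ok  |  3.2 | 47 degrees C
GPU1 Temp        | 0Ch | ok  |  7.1 | 61 degrees C
DIMMA1 Temp      | B0h | ns  | 32.64 | No Reading
"""

READING = re.compile(r"(-?\d+(?:\.\d+)?)\s+degrees C")


def parse_temps(text):
    """Map sensor name -> temperature in C (None when the BMC has no reading)."""
    temps = {}
    for line in text.splitlines():
        cols = [c.strip() for c in line.split("|")]
        if len(cols) != 5:
            continue  # skip blank or unexpected lines
        match = READING.search(cols[4])
        temps[cols[0]] = float(match.group(1)) if match else None
    return temps


def over_limit(temps, limit_c):
    """Names of sensors at or above limit_c, sorted for stable output."""
    return sorted(n for n, t in temps.items() if t is not None and t >= limit_c)


temps = parse_temps(SAMPLE)
print(over_limit(temps, 50))
```

Against a live BMC, the text would come from something like `subprocess.run(["ipmitool", "-I", "lanplus", "-H", host, "-U", user, "-E", "sdr", "type", "Temperature"], capture_output=True, text=True).stdout`, with `-E` reading the password from the `IPMI_PASSWORD` environment variable.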

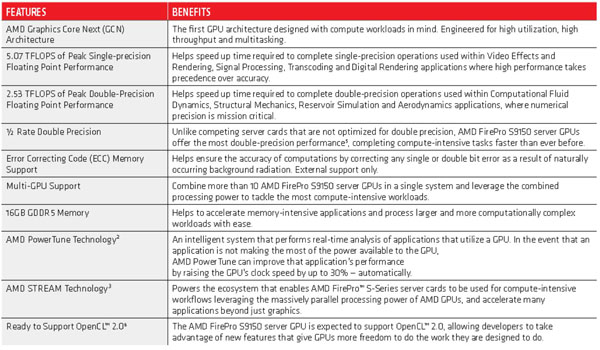

AMD FirePro S9150 Server GPUs

Our test server came equipped with 4x AMD FirePro S9150 server GPUs. Note that these are passive designs, so they rely on the chassis fans to move air through their heatsinks. This is unlike most consumer and workstation GPUs but standard for server units.

Here are AMD’s marketing specifications for these cards:

Key AMD FirePro S9150 Cooling/Power/Form Factor Specs:

- Max Power: 235W

- Bus Interface: PCIe 3.0 x16

- Slots: Two

- Form Factor: Full height/ Full length

- Cooling: Passive heat sink

The AMD FirePro S9150 is an interesting GPU. It offers excellent performance per watt at 235 watts of power consumption and was the first GPU to break the 2.0 TFLOPS double-precision barrier. Through AMD’s software ecosystem, the S9150 also supports OpenGL 4.4, OpenCL 2.0, OpenACC 2.0, OpenMP 4.0, and DirectX 12.
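The S9150's rated throughput follows from the usual peak formula: stream processors × clock × 2 FLOPs per fused multiply-add. The sketch assumes AMD's published S9150 configuration of 2816 stream processors at 900 MHz, with double precision running at half the single-precision rate; these inputs come from the vendor spec, not from our testing.

```python
def peak_gflops(stream_processors, clock_mhz, flops_per_clock=2, rate=1.0):
    """Theoretical peak GFLOPS.

    flops_per_clock=2 counts one fused multiply-add per processor per cycle;
    rate scales for reduced-rate precisions (0.5 = half-rate double precision).
    """
    return stream_processors * clock_mhz * flops_per_clock * rate / 1000.0


# Assumed S9150 configuration: 2816 stream processors at 900 MHz.
sp = peak_gflops(2816, 900)
dp = peak_gflops(2816, 900, rate=0.5)
print(f"SP: {sp:.1f} GFLOPS, DP: {dp:.1f} GFLOPS")
```

This reproduces the 5.07 TFLOPS single-precision rating and puts double precision at roughly 2.53 TFLOPS, above the 2.0 TFLOPS mark mentioned above.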

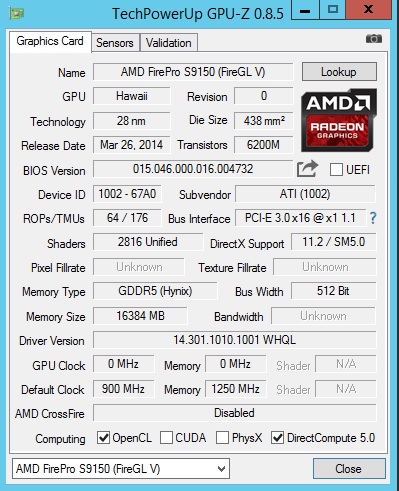

This is how GPU-Z reports these cards.

Test Configuration

The Supermicro 1028GQ-TRT does not include a DVD drive for installing software, so we used a USB DVD drive to install Windows Server 2012 R2 and to run our Ubuntu disc directly from the USB drive. We had no issues getting an OS installed on the system, and we used drivers directly from Supermicro’s website.

- Processors: 2x Intel Xeon E5-2697 V3 (14 cores each)

- Memory: 16x 16GB Crucial DDR4 (256GB Total)

- Storage: 1x SanDisk X210 512GB SSD

- Operating Systems: Ubuntu 14.04 LTS and Windows Server 2012 R2

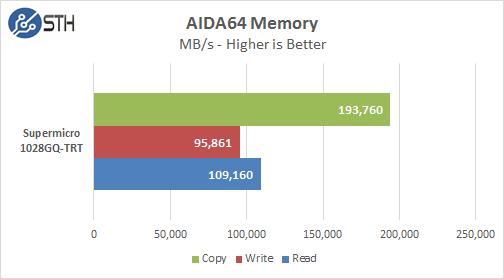

AIDA64 Memory Test

Our AIDA64 memory test is run to test performance of memory subsystems.

Memory latency measured ~86.7ns, while our average for systems using 16x 16GB DIMMs is about 78ns.

With 16x 16GB DDR4 memory sticks installed, the memory ran at 1866MHz with performance that matched many Supermicro systems we have tested. There was nothing unexpected here, and performance was very good.
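For context on the 1866MHz result, theoretical peak memory bandwidth is transfers per second × 8 bytes per 64-bit channel × number of channels. This sketch assumes four DDR4 channels per E5-2600 v3 socket and ignores real-world efficiency, so it gives an upper bound rather than a prediction of AIDA64 results.

```python
def peak_bw_gbs(mt_per_s, channels_per_socket=4, sockets=2, bytes_per_transfer=8):
    """Theoretical peak DRAM bandwidth in GB/s (1 GB = 1e9 bytes).

    Assumes four DDR4 channels per socket and a 64-bit (8-byte) channel.
    """
    return mt_per_s * channels_per_socket * sockets * bytes_per_transfer / 1000.0


for speed in (1866, 2133):
    print(f"DDR4-{speed}: {peak_bw_gbs(speed):.1f} GB/s across both sockets")
```

Running at 1866 rather than 2133 costs about 12.5% of theoretical peak bandwidth in this model.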

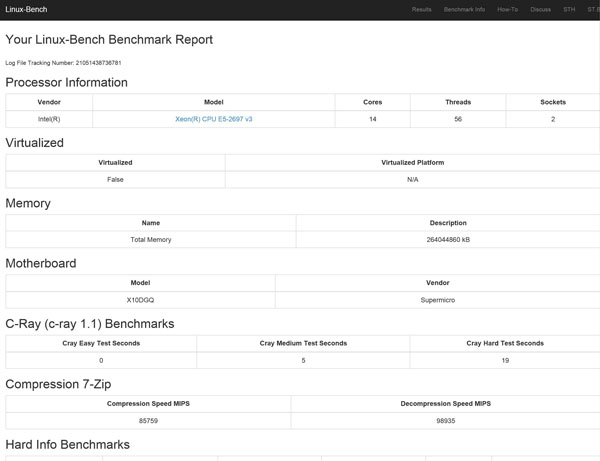

Linux-Bench Test

Our Linux-Bench is a script that runs common Linux benchmarks and provides a consistent, easy-to-run methodology. One can compare these results to hundreds of other configurations in the Linux-Bench database, as well as with sites such as Tom’s Hardware and AnandTech that use the script.

The full test results for our Linux-Bench run can be found here: Supermicro 1028GQ-TRT Linux-Bench Test Results

The Intel Xeon E5-2697 V3 processors are rated at 145 watts TDP and have 14 cores running at 2.6GHz base and 3.6GHz Turbo. For higher memory requirements, the system supports up to 1TB of ECC LRDIMM or 512GB of ECC RDIMM memory.

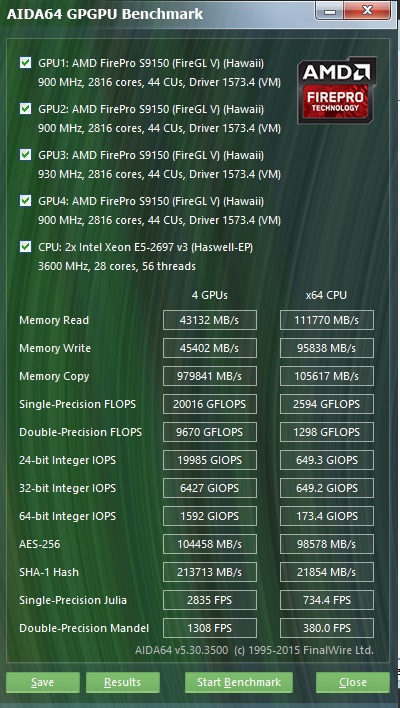

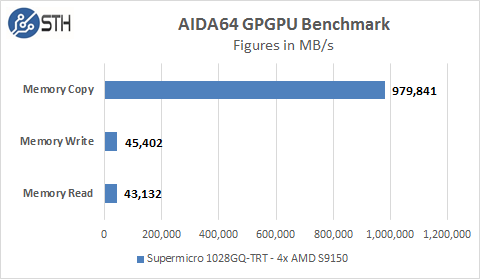

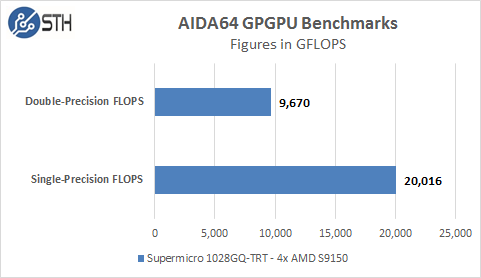

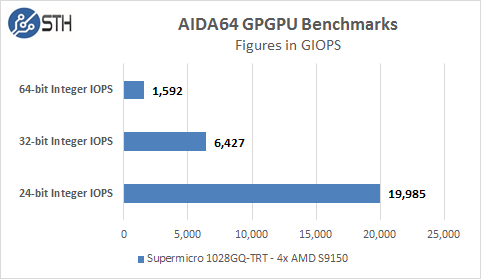

AIDA64 GPGPU Test

The first test we ran was the AIDA64 GPGPU benchmark. This test sees all GPUs and CPUs present in the system and runs through a lengthy benchmark.

Each S9150 is rated for 5.07 TFLOPS of single-precision performance, and our cards performed right at this specification. Combined single-precision performance came in at 20,016 GFLOPS, or about 20 TFLOPS.
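The aggregate figure can be checked against the per-card rating. This short sketch compares the measured 20,016 GFLOPS with four times the 5.07 TFLOPS spec; the efficiency value is simple arithmetic on numbers from this review, not a separate measurement.

```python
def efficiency(measured_gflops, n_cards, rated_gflops_per_card):
    """Measured aggregate throughput as a fraction of the summed card ratings."""
    return measured_gflops / (n_cards * rated_gflops_per_card)


# Figures from this review: 20,016 GFLOPS measured, 4x S9150 rated at 5,070 GFLOPS.
eff = efficiency(20016, 4, 5070)
print(f"{eff:.1%} of the 20,280 GFLOPS combined rating")
```

At roughly 98.7% of the combined rating, the four cards scale almost perfectly in this single-precision test.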

We included these graphs for comparison with additional systems we will be reviewing later, as we have a large backlog of GPU compute servers to test.

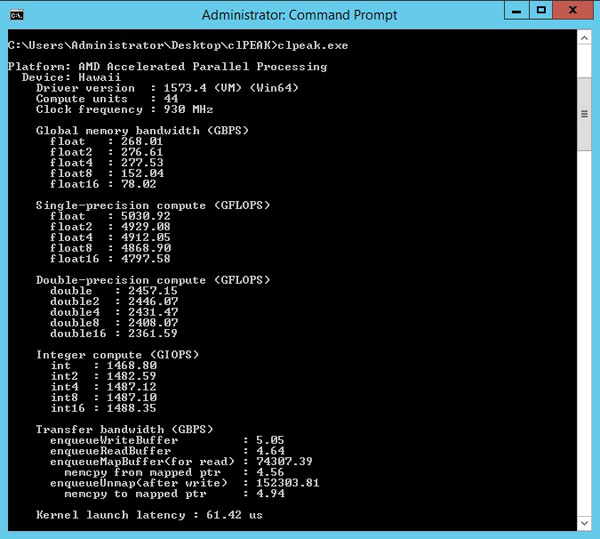

clPEAK Benchmark

We also ran the clPEAK benchmark on the system and saw numbers right where we expected them to be. This test runs on only one GPU at a time, so the run shown was repeated four times. We did notice improved kernel launch latency on the 1028GQ-TRT: 61us versus 80us in our baseline tests.

clPEAK measures peak global memory bandwidth for all float vector widths, single- and double-precision compute capacity for all vector widths, host-to-device transfer bandwidth, and kernel launch latency.
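For the host-to-device transfer test, the ceiling is set by the PCIe link. A minimal sketch, assuming PCIe 3.0's 8 GT/s per lane and 128b/130b encoding, computes raw per-direction link bandwidth; packet and protocol overhead mean measured clPEAK transfer numbers will land below it. The same snippet expresses the kernel launch latency improvement as a percentage.

```python
def pcie3_gbs(lanes, gt_per_s=8.0, encoding=128 / 130):
    """Raw per-direction PCIe 3.0 bandwidth in GB/s.

    GT/s per lane x encoding efficiency = usable Gbit/s per lane; /8 -> GB/s.
    Packet/protocol overhead is not modeled, so real transfers are lower.
    """
    return gt_per_s * encoding * lanes / 8


def latency_reduction(baseline_us, measured_us):
    """Fractional reduction relative to the baseline latency."""
    return (baseline_us - measured_us) / baseline_us


print(f"x16: {pcie3_gbs(16):.2f} GB/s, x8: {pcie3_gbs(8):.2f} GB/s")
print(f"kernel launch latency: {latency_reduction(80, 61):.1%} lower")
```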

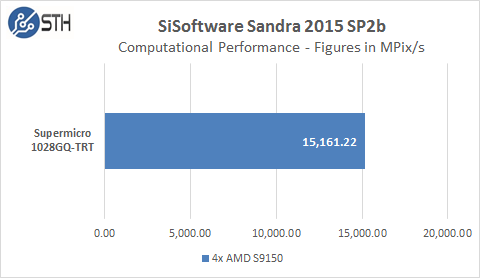

SiSoftware Sandra 2015 GPU Benchmark

We ran several of the SiSoftware Sandra 2015 benchmarks, but some of the GPU tests would not see all four GPUs or would hang while running.

The first GPU test, Computational Performance, ran without issues, so we include it here for reference only. We believe the issues we experienced are related to the benchmark itself and not the system.

Power Tests

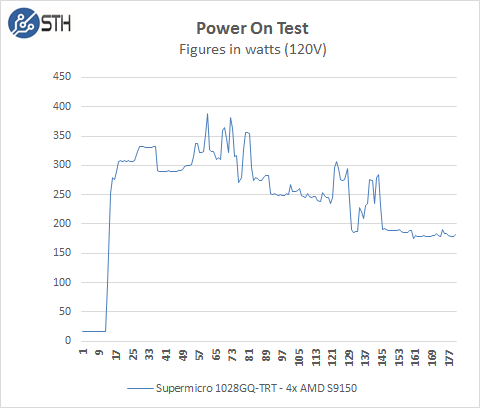

An important consideration when deciding which servers to run is how much power they require to boot up. Systems like these in high-density environments can pull a great deal more power during boot than at idle. We start with the power needs of the system when it is turned off: the 1028GQ-TRT pulls ~16 watts to keep remote management on, which is about standard for an ASPEED AST2400-based BMC server. When the power button is pressed, the system goes through the boot process, initializing various components before finally starting the OS.

Our test system jumps to ~300 watts very quickly, peaks at ~380 watts, and finally settles at ~195 watts at idle. Idle draw can rise depending on what the system is doing and on BIOS settings; for our tests we use default BIOS settings and make no changes to them. Overall this result compares well with other systems we have tested, some of which do not even have this number of GPUs installed.
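The standby, peak, and settled idle figures come from reading a logged power trace. The sketch below assumes the meter log has already been reduced to a list of watt readings in time order, starting with the system powered off (the WT310's own export format is not modeled). The sample values are made up to mimic the shape of the boot curve described above and are not our recorded data.

```python
def summarize(samples, settle_window=3):
    """Summarize a time-ordered list of watt readings from a boot run.

    Assumes the log starts with the system powered off (standby draw)
    and ends after the system has settled at idle.
    """
    if len(samples) < settle_window:
        raise ValueError("need at least settle_window samples")
    tail = samples[-settle_window:]
    return {
        "standby": samples[0],            # BMC-only draw before power-on
        "peak": max(samples),             # highest draw during boot
        "idle": sum(tail) / len(tail),    # mean of the final settled readings
    }


# Made-up readings (watts) shaped like the boot curve described above.
samples = [16, 16, 300, 350, 380, 310, 240, 200, 195, 195, 195]
print(summarize(samples))
```

Averaging the last few readings, rather than taking the final sample alone, smooths out small fluctuations once the OS is up.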

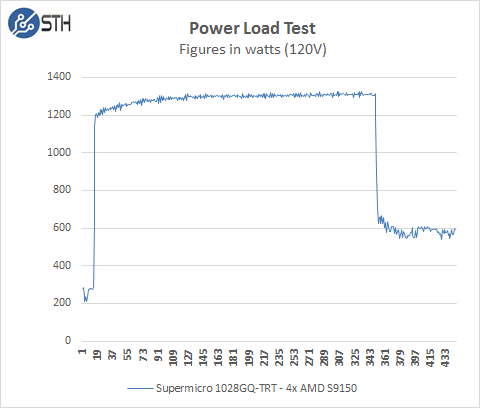

We then load the system to see what our maximum power use will be. For our tests we use the AIDA64 stress test, which allows us to stress all aspects of the system. For our power meter we use a Yokogawa WT310, which feeds its data through a USB cable to another machine where we capture the test results. We start with the system at idle, pulling ~200 watts, and press the stress test start button. Power use quickly jumps to over 1200 watts and continues to climb until it caps out at ~1340 watts.

Adding up power use, the 4x S9150s can pull 235 watts each, or 940 watts for the four cards. The dual Intel Xeon E5-2697 V3s, 256GB of RAM, and other components normally pull around 400 watts. Together that equals roughly 1340 watts for the entire system, so our figures seem reasonable for this configuration. We found the AMD S9150s to be very efficient in power use and were pleased to see these results.

We have previously run setups that included 4x Intel Xeon Phi 3120As, which peaked at a total power use of almost 1600 watts, so there are hotter GPU/PCIe MIC cards out there.
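The same back-of-the-envelope budget can be written down as code. It assumes each accelerator draws its full rated board power under load and lumps the CPUs, memory, fans, and drives into the ~400 watt figure above; the Xeon Phi line assumes the 3120A's 300 watt rated TDP.

```python
def budget(n_cards, card_watts, rest_of_system_watts):
    """Estimated peak system draw: accelerators at rated power plus everything else."""
    return n_cards * card_watts + rest_of_system_watts


REST_W = 400  # CPUs, 256GB RAM, fans, drives (estimate from the text)

s9150 = budget(4, 235, REST_W)  # 4x FirePro S9150 at 235 W each
phi = budget(4, 300, REST_W)    # assumed 4x Xeon Phi 3120A at 300 W TDP
print(f"S9150 config: ~{s9150} W, Xeon Phi config: ~{phi} W")
```

Both estimates land on the measured figures (~1340 watts and just under 1600 watts), which suggests the accelerators run close to their rated power under the AIDA64 stress test.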

Thermal Testing

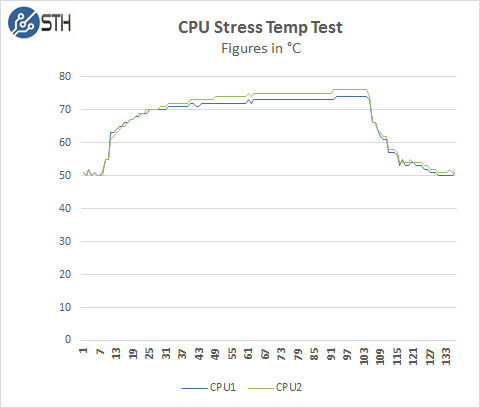

Now let us take a look at how the cooling system in the 1028GQ-TRT handled the heat from the stress test loads.

Our test lab is set to 78F/25.5C. To record component temperatures, we used HWiNFO’s sensor monitoring functions and saved that data to a file while we ran our stress tests. The processors in this system sit behind the front GPUs, so they do not get direct air from outside the server.

The AIDA64 stress test puts a heavy load on the system, but other programs such as Linpack may drive temperatures higher. Overall, the cooling system handles the heat output of the CPUs very well.

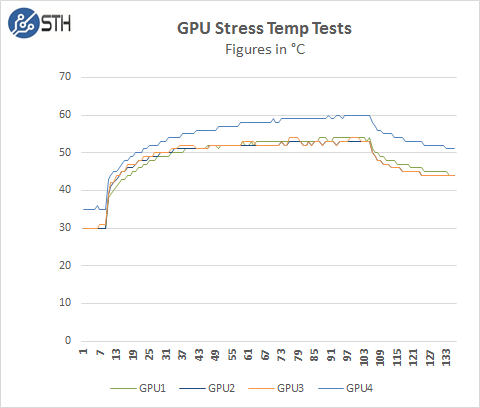

Here we see the temperatures of the GPUs while the stress test is running. GPU #4 (really GPU #1 in the Supermicro charts, but HWiNFO sees it as #4) sits at the back of the server, so it runs a little warmer than the front three, which get direct airflow from outside. The increase in operating temperature for this GPU is slight, and it remains within the normal operating range.

Conclusion

We have to say we enjoyed our time testing the Supermicro 1028GQ-TRT; the sheer amount of computational power packed into this system is amazing. Using just the AMD S9150 server GPUs, this system can push 20,000 GFLOPS of single-precision computation. This 1U system provides the supercomputer-level performance of only 10 years ago, yet it is compact and can run from a 12A @ 120V circuit.
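Whether a given circuit can host the system is simple P = V × I arithmetic. This sketch assumes a power factor close to 1 (typical for modern server PSUs) and the common practice of loading a circuit to no more than 80% of its breaker rating, which is one reading of the 12A figure above (80% of a 15A circuit).

```python
def amps(watts, volts=120.0):
    """Current draw in amps, assuming a power factor of ~1."""
    return watts / volts


def fits_circuit(watts, breaker_amps=15.0, volts=120.0, continuous_factor=0.8):
    """True if the load stays within the continuous-load share of the breaker."""
    return amps(watts, volts) <= breaker_amps * continuous_factor


for load in (1340, 1600):  # measured S9150 peak, and the Xeon Phi setup
    print(f"{load} W -> {amps(load):.2f} A, fits 12A budget: {fits_circuit(load)}")
```

At ~1340 watts the system draws about 11.2A at 120V, inside a 12A budget, while the ~1600 watt Xeon Phi configuration would not fit.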

The 1028GQ-TRT is rated for operating temperatures up to 95F/35C, which is fairly hot for a server room. Our tests were run at 78F/25.5C ambient room temperature; running at 35C could push our temperatures up another 9.5C, which could put our CPU temperatures into the high 80s (Celsius) with the E5-2697 V3 processors. At that level we would still be within operating temperatures that would not cause CPU throttling, but it is getting close. Depending on workloads, the best solution for these warmer server rooms would be to select processors such as the E5-2650 V3, which run cooler; we find that to be an excellent choice. The AMD S9150s would not suffer from running at 35C, and we do not see an issue there.

We measured 114F/45.5C for the exhaust air out the back of the server while our stress tests ran. This is not too extreme for a server like this, and we have seen other servers in datacenters that output much warmer exhaust air.
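The 35C projection assumes component temperatures rise roughly one-for-one with inlet air temperature. A naive sketch of that reasoning, plus the Fahrenheit/Celsius conversion used throughout, is below; the 78C CPU reading passed in is a hypothetical example, not a value from our HWiNFO log.

```python
def f_to_c(f):
    """Convert Fahrenheit to Celsius."""
    return (f - 32) * 5 / 9


def project_temp(measured_c, ambient_now_c, ambient_target_c):
    """Naive projection: component temperature shifts one-for-one with ambient.

    Ignores fan-speed response and any non-linear thermal behavior.
    """
    return measured_c + (ambient_target_c - ambient_now_c)


print(f"78F = {f_to_c(78):.2f}C, 95F = {f_to_c(95):.1f}C, 114F = {f_to_c(114):.2f}C")
# Hypothetical 78C CPU reading at a 25.5C lab ambient, projected to 35C ambient.
print(f"projected CPU temp: {project_temp(78.0, 25.5, 35.0):.1f}C")
```

Note that 78F converts to about 25.6C; the review rounds it to 25.5C. Because fans ramp up as inlet temperature rises, the real increase may be smaller than this linear estimate.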

Installing GPU #1 at the far back of the server leaves more space between the GPUs at the front. This allows for better airflow through the case and provides room for front storage without giving up space at the back for expansion cards or redundant PSUs.

Easy access to all components makes maintenance of the Supermicro 1028GQ-TRT simple. The GPUs simply unlock and lift out, and the CPU and RAM area is clean, with no cables getting in the way.

In a rack environment, the front GPUs can be reached by pulling the server out about halfway and removing the top lid. Access to the GPU at the back is a bit more difficult and requires pulling the server all the way out. This should not be a problem, since it sits in the same location as the expansion cards, so no extra effort is needed.

Overall, for those looking to cram four GPUs into a small 1U form factor for dense compute or even VDI applications, the Supermicro 1028GQ-TRT is an excellent solution. With 10GBase-T networking, the server is easy to integrate into existing datacenter infrastructure, so long as the rack can handle higher-power gear.