NVIDIA RTX 6000 Ada Specifications

Here are the key specs for the card:

- GPU: AD102

- Shader Units: 18176

- Video Memory: 48GB GDDR6

- Memory Bus: 384-bit

- Engine Clock: 915 MHz (Boost: 2505 MHz)

- Memory Clock: 2500 MHz (20 Gbps effective)

- PCI Express: 4.0

- Display Outputs: 4x DisplayPort 1.4a

- TDP: 300W

- Recommended Power Supply: 700W

- Cooling: Single Blower Type Cooler

- Slot Size: 2

- Power Connector: 1x 16-Pin

- Card Dimensions: 267 mm x 112 mm (10.5” x 4.4”)
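As a quick sanity check on the list above, here is a minimal sketch that derives peak memory bandwidth and theoretical FP32 throughput from the listed bus width, effective memory data rate, shader count, and boost clock. The function names are ours, and the FP32 figure assumes each shader unit retires one fused multiply-add (two floating-point operations) per clock at the boost frequency, so treat it as a theoretical ceiling and not a measured result.

```python
def memory_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak memory bandwidth in GB/s: bus width (bits) x per-pin rate (Gbps) / 8 bits per byte."""
    return bus_width_bits * data_rate_gbps / 8


def peak_fp32_tflops(shader_units: int, boost_clock_mhz: float) -> float:
    """Theoretical FP32 TFLOPS, assuming 2 FLOPs (one FMA) per shader unit per clock."""
    flops_per_second = shader_units * 2 * boost_clock_mhz * 1e6
    return flops_per_second / 1e12


# Values taken from the spec list above.
bandwidth = memory_bandwidth_gbs(bus_width_bits=384, data_rate_gbps=20.0)
tflops = peak_fp32_tflops(shader_units=18176, boost_clock_mhz=2505)

print(f"Peak memory bandwidth: {bandwidth:.0f} GB/s")
print(f"Theoretical FP32 throughput: {tflops:.1f} TFLOPS")
```

With the listed numbers this works out to 960 GB/s of memory bandwidth and roughly 91 TFLOPS of theoretical FP32 compute. Sustained clocks under a 300W power limit will usually sit below the boost figure, so real workloads should be expected to land lower.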

Test Setup

Our workstation has been used for many of our reviews. To get data we can compare against those earlier reviews, PNY and BOXX helped us with a workstation that closely matches its specs and can also handle the RTX 6000 Ada. If you want to learn more, check out our BOXX APEXX S3 Overview: An Intel Core i9 and NVIDIA RTX 6000 Ada Workstation.

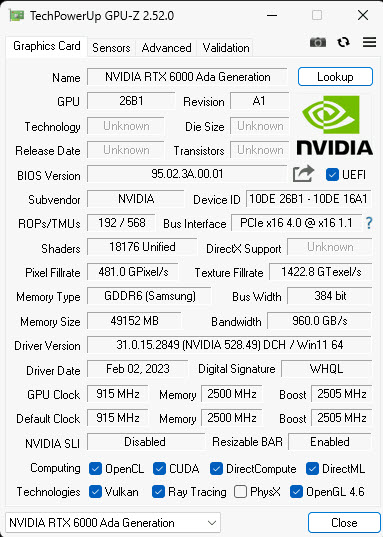

Here is the obligatory GPU-Z shot of the NVIDIA RTX 6000 Ada:

GPU-Z shows the main stats of our NVIDIA RTX 6000 Ada.

Let us move on and start our testing with compute-related benchmarks.

Nice review. I was wondering why the RTX A6000 wasn’t included in the comparisons. The “original” RTX A6000 is a generation before that; the A6000 is one generation old.

Edit: I meant the “original” RTX 6000 (minus the A), which is two generations old.

Sorry for the typo.

Did you use NVIDIA’s “Studio Driver” for the 4090 tests? That driver has optimizations for some professional applications. I’m particularly interested to see if the 4090 gets a performance increase in the SPECviewperf tests.

Junk in price/performance terms. But we have no other choice if we want more than the 24GB of memory on the 3090/4090 (at 3x the price for an extra 24GB of GDDR6, wow, so expensive). The premium price is clearly NVIDIA exploiting its monopoly position.