The Lenovo ThinkSystem SR650 is the company’s mainstay 2U general-purpose compute server. As such, it is designed to fill many different roles. In our ThinkSystem SR650 review, we are going to show why the solution is flexible and how Lenovo’s engineers have taken this Intel Xeon Scalable platform to the next level. This is our signature thorough STH review, where you will see more details on the Lenovo SR650 platform than anywhere else online. Settle in for an in-depth look at the server.

Lenovo ThinkSystem SR650 Hardware Overview

As a quick foreword, the configuration we had in the lab is a single-socket solution. Lenovo has specific configurations that it uses to cost-optimize deployments where the SR650 utilizes only a single socket.

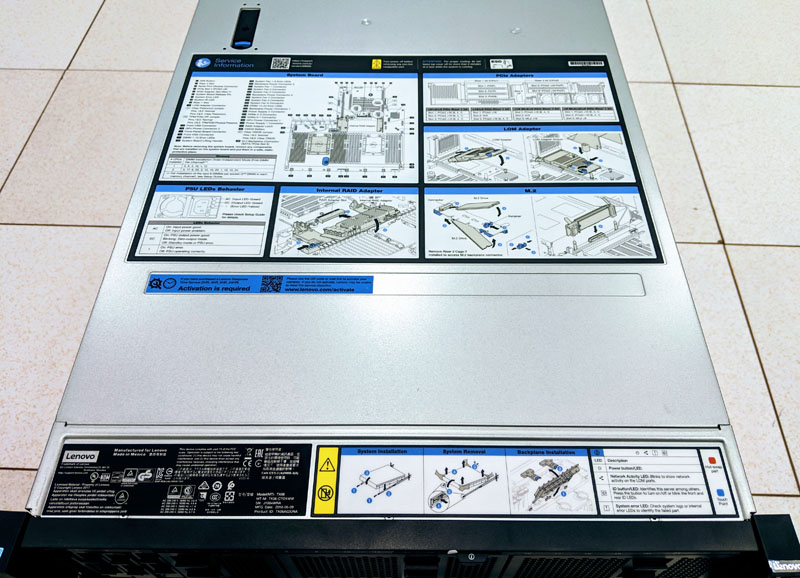

We wanted to start this review with a simple but important feature. When one first unboxes the server, there are labels printed with all major features and some of the most common service items. They even include instructions for installing the server into the rack. Other top-tier competitors also provide this, but Lenovo does a great job with its labels. Commodity white-box servers typically do not have these types of features.

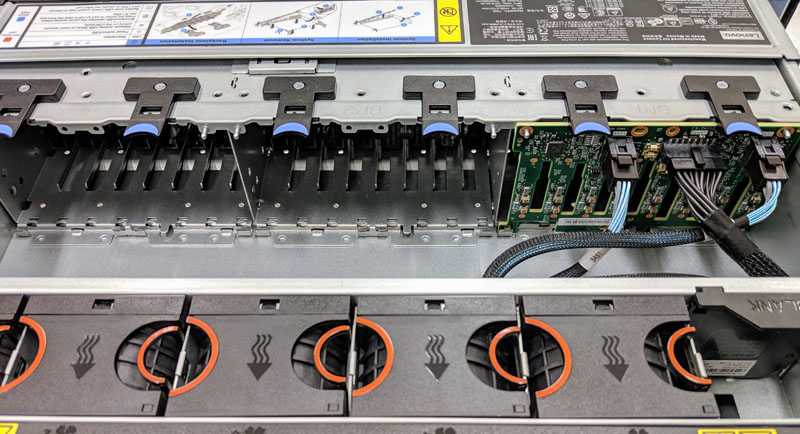

The front of the server has three sets of configurable expansion bays, allowing for up to 24x 2.5″ front panel drives. We wanted to show off the front I/O and LEDs before moving to the rest of the server. On one side, there are USB 3.0 and USB 2.0 service ports, status and ID LEDs, and a power button with protection against an accidental push. The other side has only a VGA port which, together with the USB ports, provides cold-aisle KVM cart access.

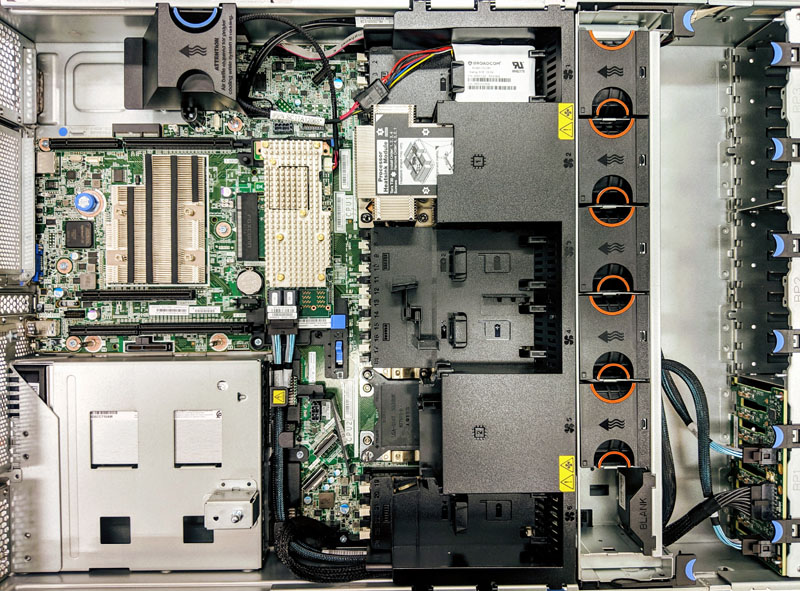

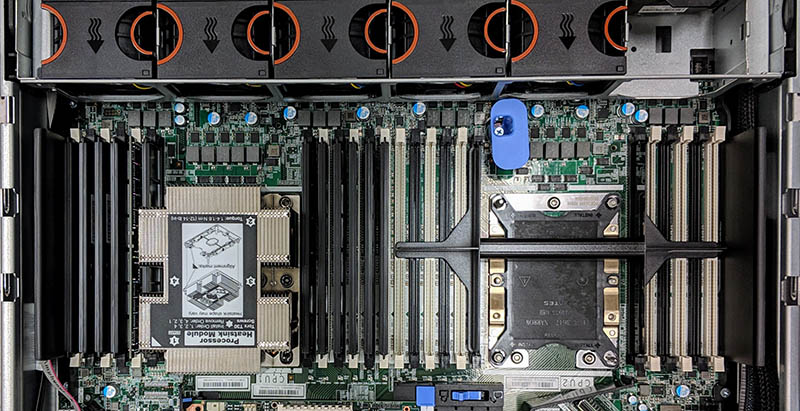

Inside the server, we see a fairly standard design. Airflow is from drives to a fan partition, then the CPUs and memory, followed by the SAS controller, expansion slots and power supplies. Lenovo uses a hard shroud that both directs airflow and adds extra functionality that we are going to cover shortly.

One example of how this shroud is used is to hold the battery units for the storage HBAs. The Lenovo mechanical engineering team did a great job here using this space for tool-less servicing. They also have airflow directly over the batteries to increase their longevity.

The front of the unit uses a modular approach to drives. As you can see, one can easily clip in a backplane, attach cables, and add more drive capacity. We pulled our 8x 2.5″ SAS/SATA backplane PCB out for service, and it was one of the best designs we have seen in the space.

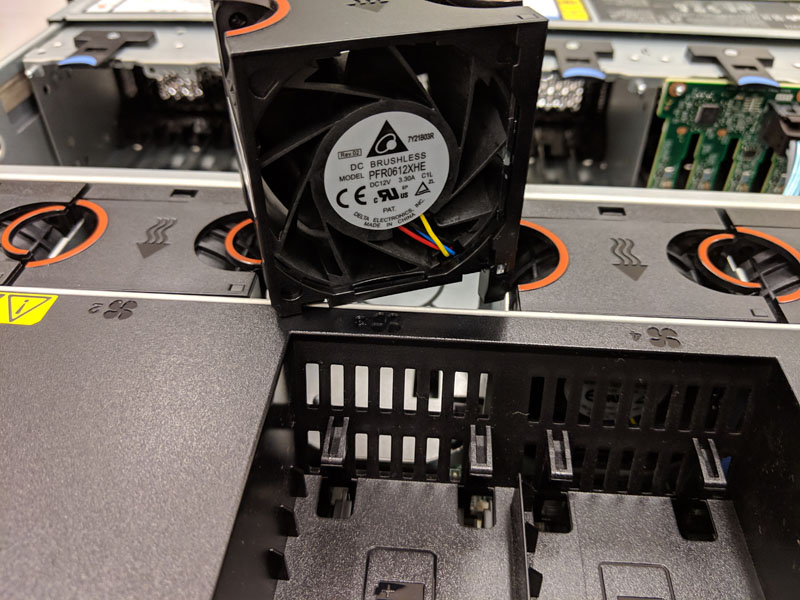

Our test system had five fans since it was a single-CPU configuration. The fans are high-quality Delta units. Lenovo does a great job with its hot-swap fan mechanism, which makes locating and replacing a failed fan extremely easy.

Removing the shroud, we can see the single-CPU configuration of our review unit. CPU1 is labeled and populated, while CPU2 is blanked out, ready for an upgrade later. We did not have the sixth fan, and given what we saw from thermals on our test system (see the later Test Configuration section), we did not attempt dual-socket testing without it.

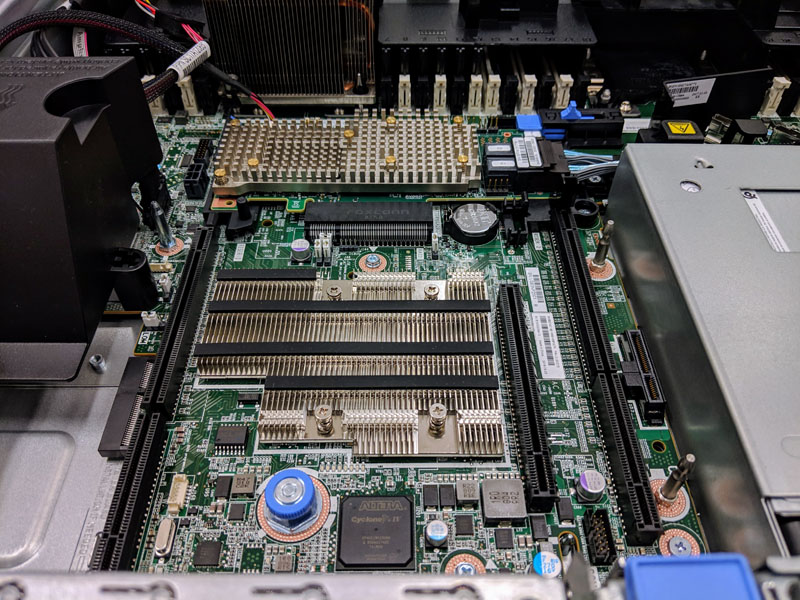

The rear expansion area has set places for the RAID controller and network LOM. Beyond these critical components are several PCIe slots primarily intended for risers. We did not have these risers in our test system, so this area is relatively clear. One can see the Intel Lewisburg PCH heatsink clearly in the middle of the expansion slot area.

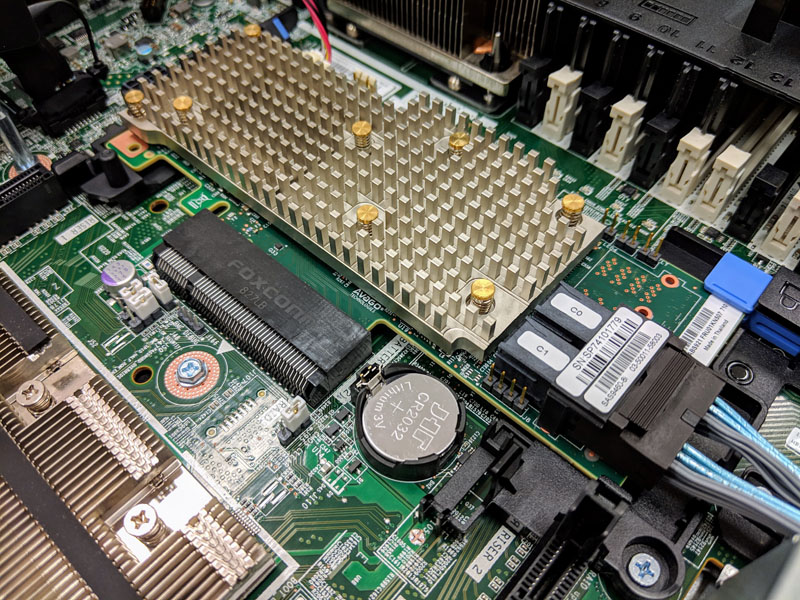

The SAS controller is the Lenovo 930-8i, a unit with 2GB of cache. One can see from the photos that it is still labeled as a Broadcom SAS9460-8i, a product line that came to the company through its LSI acquisition. Lenovo has a fantastic tool-less retention and service mechanism in the server to make upgrades easy.

In Ubuntu/Debian, the SAS controller showed up as:

```
af:00.0 RAID bus controller: LSI Logic / Symbios Logic MegaRAID Tri-Mode SAS3508 (rev 01)
```

That is an excellent choice, although given its Tri-Mode support, we would have loved to use it with NVMe drives. LSI controllers have broad OS support and have become the de facto industry standard outside of the HPE realm. We fully expect that these controllers will be supported in every major OS for years to come and that there will be plenty of upgrade parts on the market for as long as you have one of these servers.
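For readers who want to check for the same controller on their own Ubuntu/Debian systems, here is a minimal Python sketch that splits one line of `lspci` output into slot, device class, description, and revision. It assumes the default format without the PCI domain prefix (that is, output printed without `-D`); `parse_lspci_line`, `PciDevice`, and `is_megaraid` are hypothetical names for illustration, not part of any Lenovo or Broadcom tool.

```python
import re
from typing import NamedTuple, Optional

# Hypothetical pattern for one line of default lspci output (no PCI domain):
# "<bus>:<device>.<function> <class>: <description> (rev NN)"
LSPCI_LINE = re.compile(
    r"^(?P<slot>[0-9a-f]{2}:[0-9a-f]{2}\.[0-7])\s+"  # bus:device.function
    r"(?P<cls>[^:]+):\s+"                             # device class
    r"(?P<desc>.*?)"                                  # vendor and device name
    r"(?:\s+\(rev (?P<rev>[0-9a-f]+)\))?$"            # optional revision
)


class PciDevice(NamedTuple):
    slot: str
    device_class: str
    description: str
    revision: Optional[str]


def parse_lspci_line(line: str) -> Optional[PciDevice]:
    """Return the parsed fields, or None if the line does not look like lspci output."""
    m = LSPCI_LINE.match(line.strip())
    if m is None:
        return None
    return PciDevice(m["slot"], m["cls"].strip(), m["desc"], m["rev"])


def is_megaraid(dev: PciDevice) -> bool:
    """Heuristic check for a MegaRAID-family RAID controller such as the SAS3508."""
    return dev.device_class == "RAID bus controller" and "MegaRAID" in dev.description
```

Feeding it the line above yields slot `af:00.0`, class `RAID bus controller`, and revision `01`. A regular expression keeps the sketch dependency-free; for anything beyond a quick check, the machine-readable `lspci -mm` output is a sturdier input.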

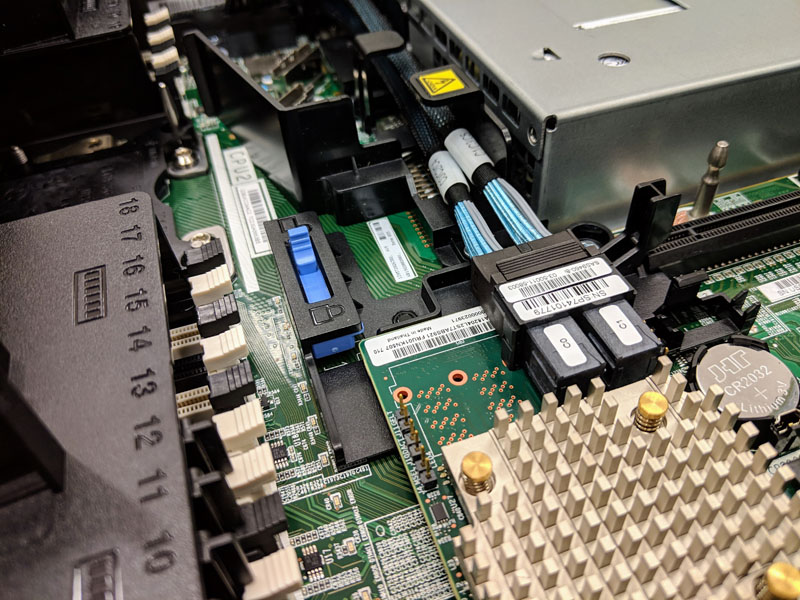

We wanted to take the opportunity to highlight something that is often overlooked: cabling. Lenovo has a great set of cable management features in the system that allow cables to be neatly tucked away while remaining extremely easy to service. Some lower-end servers rely heavily on plastic zip ties, which are a pain to clip and replace. You can see the SAS RAID controller cables here being routed from the center of the motherboard to the chassis edge en route to the front panel via cable hooks. This is a great design that comes from decades of top-tier server design experience.

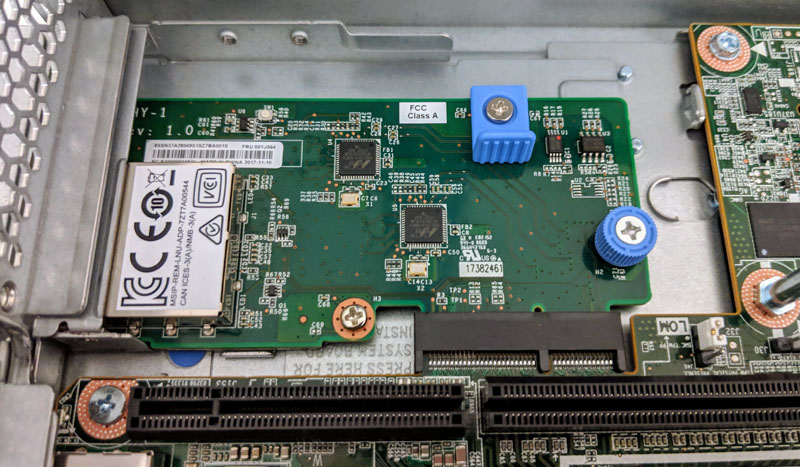

Networking is provided via a LOM. We purchased a basic 1GbE LOM, and what we found surprised us. The LOM uses the Intel X722 NIC for 1GbE. For those who need some background, this is the NIC found on the Intel Xeon SP Lewisburg PCH. On the 1GbE LOM itself, we found low-cost Marvell chips being used as PHYs. Frankly, this is a very low-cost LOM solution that should be included with servers by default if another LOM is not ordered. Drivers are handled through standard Intel offerings, which makes this an extremely inexpensive device to build and maintain, in line with low-cost dual-port NICs that retail in the $25 range.

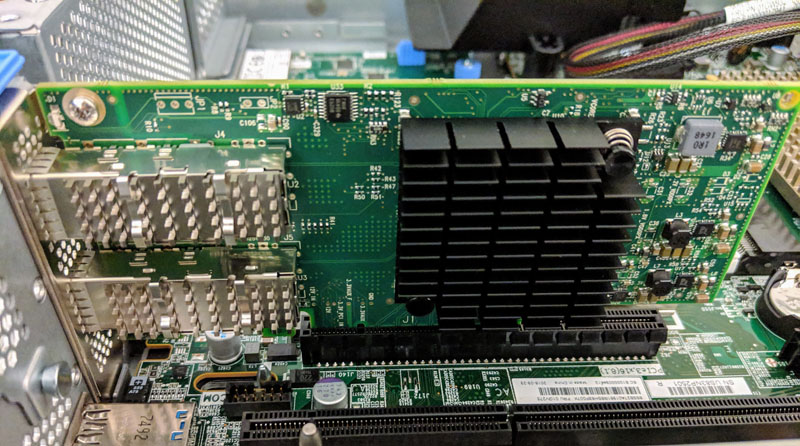

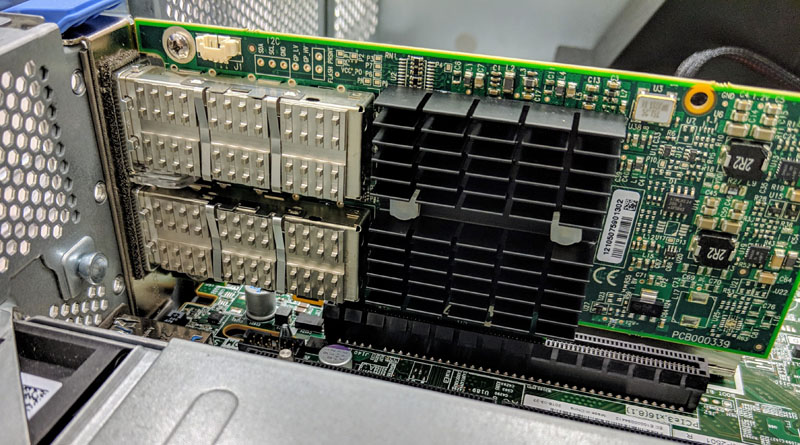

Since our test unit did not have risers, we had a single external PCIe slot, which we used to try common networking options. Since 25GbE is going to be the dominant networking interface for this class of servers over the next few years, we installed a Mellanox ConnectX-4 Lx dual-port SFP28 adapter here.

We also used a legacy Mellanox 40GbE adapter for those who are installing these into existing networks.

One issue we ran into is that we needed Mellanox drivers for the Ubuntu installation. That was a pain: with no iKVM into the OS installation and no 1GbE (prior to installing the LOM), we had no way to do this remotely or to get the server properly configured with our provisioning environment. Our advice to buyers is to always get a LOM. Our message to Lenovo is that an onboard NIC is important. Mainstream HPE ProLiant Gen10 servers, including two in our lab, now have quad onboard 1GbE NICs. Dell EMC’s R740 does not come with onboard networking, but its configurator requires that at least a dual-port 1GbE LOM be ordered. Lenovo should either stop shipping servers without LOMs to avoid this frustration, or simply spend $2 to put dual 1GbE NICs onboard for provisioning and out-of-band management of the OS/hypervisor.

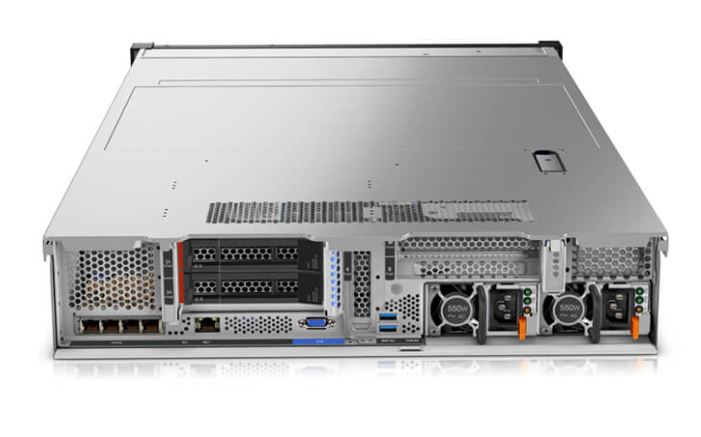

The Lenovo ThinkSystem SR650 has multiple options depending on configuration. For example, although ours did not have them, there are options for multiple I/O card risers as well as rear hot-swap bays. Here is a look at the rear of a more fully configured system. One can see redundant PSUs and a quad-port LOM on opposite sides. The Lenovo ThinkSystem SR650 has a management NIC, a VGA connector, and two USB 3.0 ports as standard. Everything else is configurable, making the platform very flexible.

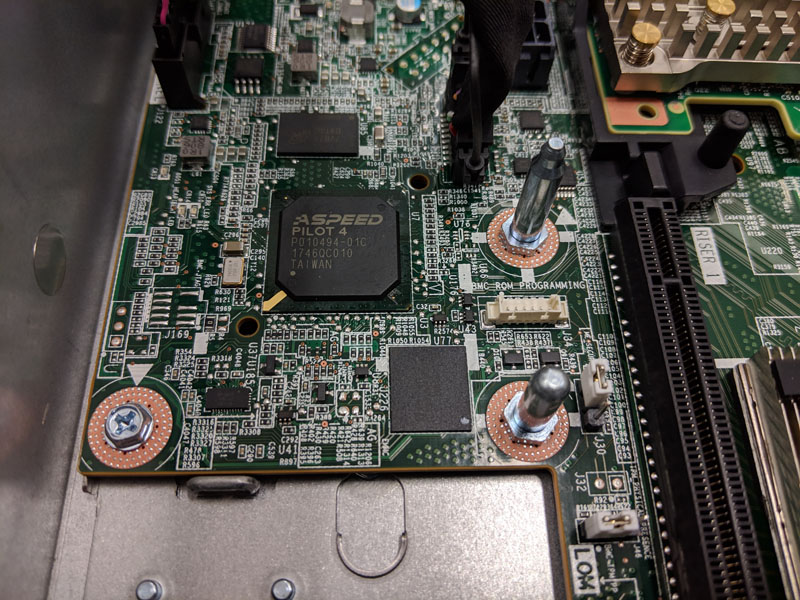

One other area we will point out, given our recent explosive coverage in Investigating Implausible Bloomberg Supermicro Stories, is the BMC, which is an ASPEED Pilot 4. You can read more about BMCs in our piece Explaining the Baseboard Management Controller or BMC in Servers. Essentially, this is what powers the Lenovo XClarity management and monitoring functions. This is a new controller for Lenovo. One item we were not overly fond of is the BMC ROM programming header. We understand why it exists, but it is something our readers may find interesting.

Lenovo has a ton of great features, such as a multitude of riser options, multiple front hot-swap bay configurations, and dual processor support. Given our test configuration, we did not have the ability to test these as we do with other servers.

Next, we are going to look at the system specs as tested, along with an interesting finding around the CPU. We are then going to look at the system topology, followed by management, and then performance.
