Inspur Systems NF5180M5 Management

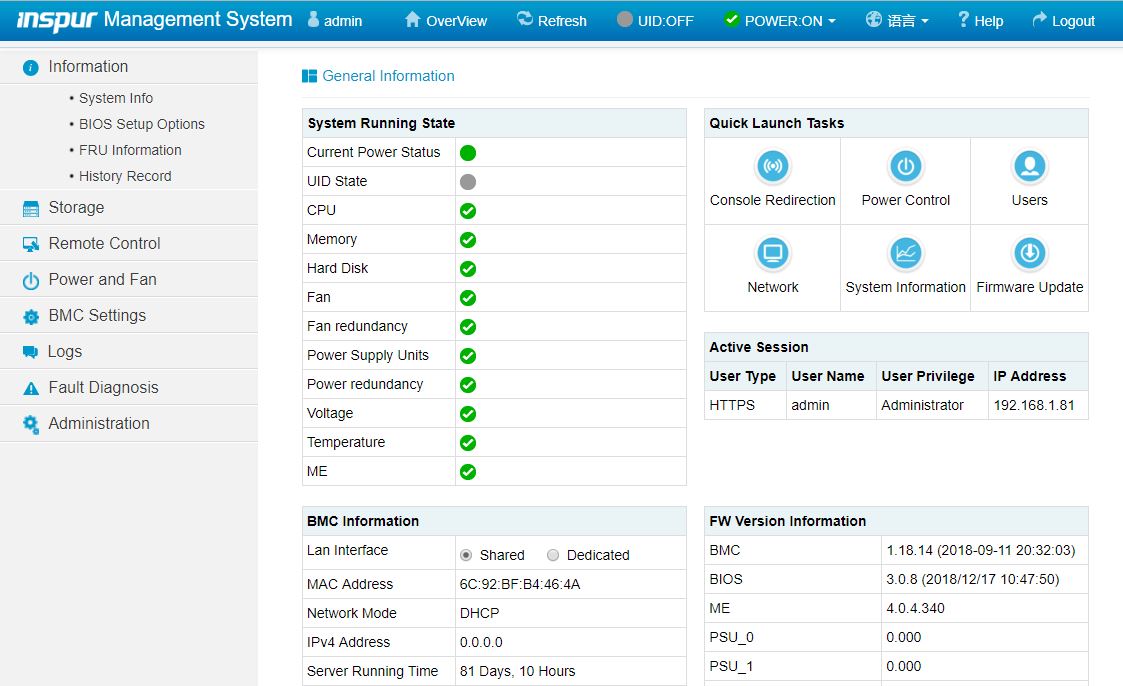

Inspur’s primary management interfaces are the IPMI and Redfish APIs, which is what most hyperscale and CSP customers will use to manage their systems. Inspur also includes a robust, customized web management platform with its management solution.

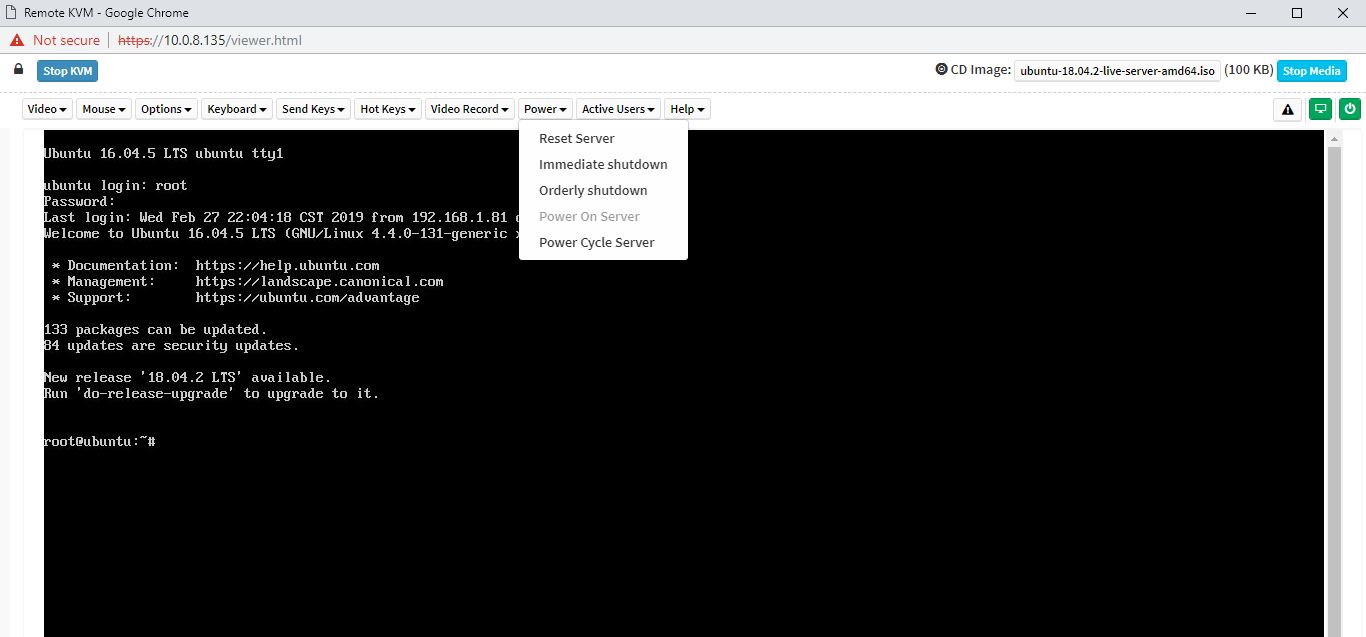

There are key features we would expect from any modern server. These include the ability to power cycle a system and remotely mount virtual media. Inspur also has an HTML5 iKVM solution that includes these features. As of this review being published, some other server vendors do not have a fully-featured HTML5 iKVM with virtual media support.
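For readers who script against the Redfish API rather than the web interface, here is a minimal sketch of what a remote power cycle request looks like. It uses the standard Redfish `ComputerSystem.Reset` action. The BMC hostname and the system ID `1` are placeholders we made up for illustration, not values taken from this server, and authentication is left out. The request is only built and printed, not sent.

```python
import json
import urllib.request

# A subset of the reset types defined by the Redfish ComputerSystem schema.
# A given BMC may support only some of them.
RESET_TYPES = {"On", "ForceOff", "GracefulShutdown", "GracefulRestart",
               "ForceRestart", "PowerCycle"}


def build_reset_request(bmc_host, system_id, reset_type="ForceRestart"):
    """Build (but do not send) a Redfish ComputerSystem.Reset request."""
    if reset_type not in RESET_TYPES:
        raise ValueError(f"unsupported reset type: {reset_type}")
    url = (f"https://{bmc_host}/redfish/v1/Systems/{system_id}"
           "/Actions/ComputerSystem.Reset")
    body = json.dumps({"ResetType": reset_type}).encode("utf-8")
    return urllib.request.Request(
        url, data=body, method="POST",
        headers={"Content-Type": "application/json"})


# Placeholder host and system ID (assumptions, not from the review system).
req = build_reset_request("bmc.example.com", "1", "PowerCycle")
print(req.get_method(), req.full_url)
print(req.data.decode())
```

In practice, the system ID should be discovered by reading the `/redfish/v1/Systems` collection on the BMC, and the request would be sent with credentials or a session token.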

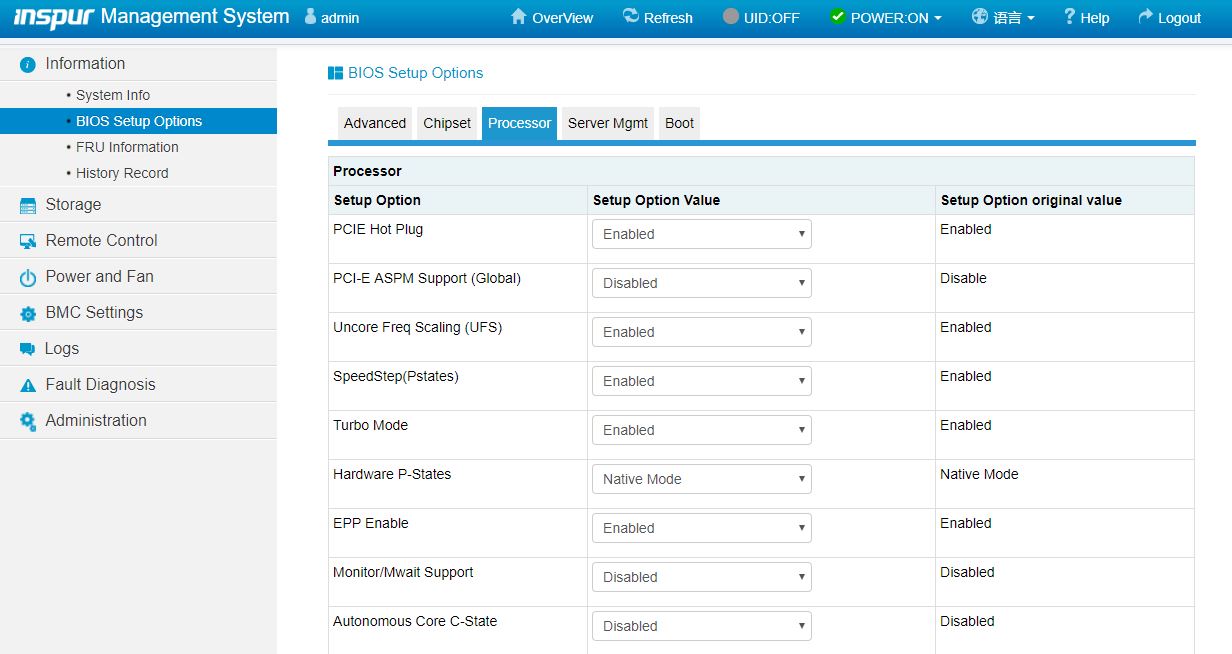

Another feature worth noting is the ability to change BIOS settings via the web interface. That is a feature we see in solutions from top-tier vendors like Dell EMC, HPE, and Lenovo, but one that many other vendors in the market do not offer.
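On BMCs that also expose BIOS settings through Redfish, the equivalent of the web form is a `PATCH` of pending attributes to the settings object referenced by the `Bios` resource. The sketch below assumes a BMC that follows the standard `@Redfish.Settings` convention. We did not verify this against the Inspur BMC, and the hostname, resource contents, and attribute name are all hypothetical. The request is built but not sent.

```python
import json
import urllib.request


def build_bios_patch(bmc_host, bios_resource, attributes):
    """Build (but do not send) a PATCH of pending BIOS attributes.

    bios_resource is the decoded JSON of the system's Bios resource.
    """
    # The Bios resource points at the writable settings object.
    settings_uri = (bios_resource["@Redfish.Settings"]
                    ["SettingsObject"]["@odata.id"])
    body = json.dumps({"Attributes": attributes}).encode("utf-8")
    return urllib.request.Request(
        f"https://{bmc_host}{settings_uri}", data=body, method="PATCH",
        headers={"Content-Type": "application/json"})


# Hypothetical Bios resource and attribute name, for illustration only.
sample_bios = {
    "@Redfish.Settings": {
        "SettingsObject": {"@odata.id": "/redfish/v1/Systems/1/Bios/Settings"}
    },
    "Attributes": {"ExampleAttribute": "Disabled"},
}
patch = build_bios_patch("bmc.example.com", sample_bios,
                         {"ExampleAttribute": "Enabled"})
print(patch.get_method(), patch.full_url)
```

Real attribute names are vendor-specific, and changes made this way typically apply on the next reboot.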

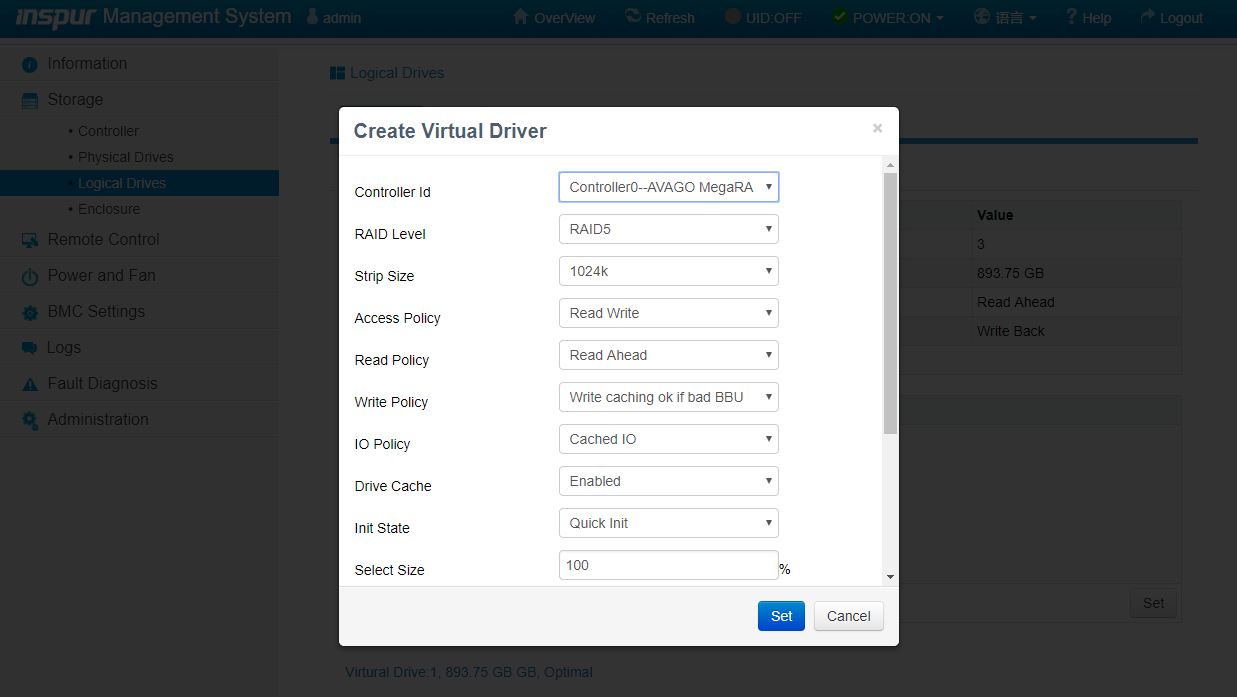

Another web management feature that differentiates Inspur from lower-tier OEMs is the ability to create virtual disks and manage storage directly from the web management interface. Some solutions allow administrators to do this via Redfish APIs, but not web management. This is another great inclusion here.

Based on comments in our previous articles, many of our readers have not used an Inspur Systems server and therefore have not seen the management interface. We have an 8-minute video clicking through the interface and doing a quick tour of the Inspur Systems management interface:

It is certainly not the most entertaining subject. However, if you are considering these systems, you may want to know what the web management interface looks like on each machine, and that tour can be helpful.

Inspur Systems NF5180M5 Test Configuration

For our review, we are using the same configuration we have been using for our 2nd Generation Intel Xeon Scalable CPU dual-socket reviews:

- System: Inspur Systems NF5180M5

- CPUs: Intel Xeon Gold 5115

- RAM: 12x 32GB DDR4-2933 ECC RDIMMs

- Storage: 2x Intel DC S3520 480GB OS, 1x Samsung PM883 960GB SSD

- Networking: Mellanox ConnectX-4 Lx 25GbE OCP, Intel X520-DA2 OCP

- PCIe Accelerator: NVIDIA Tesla T4

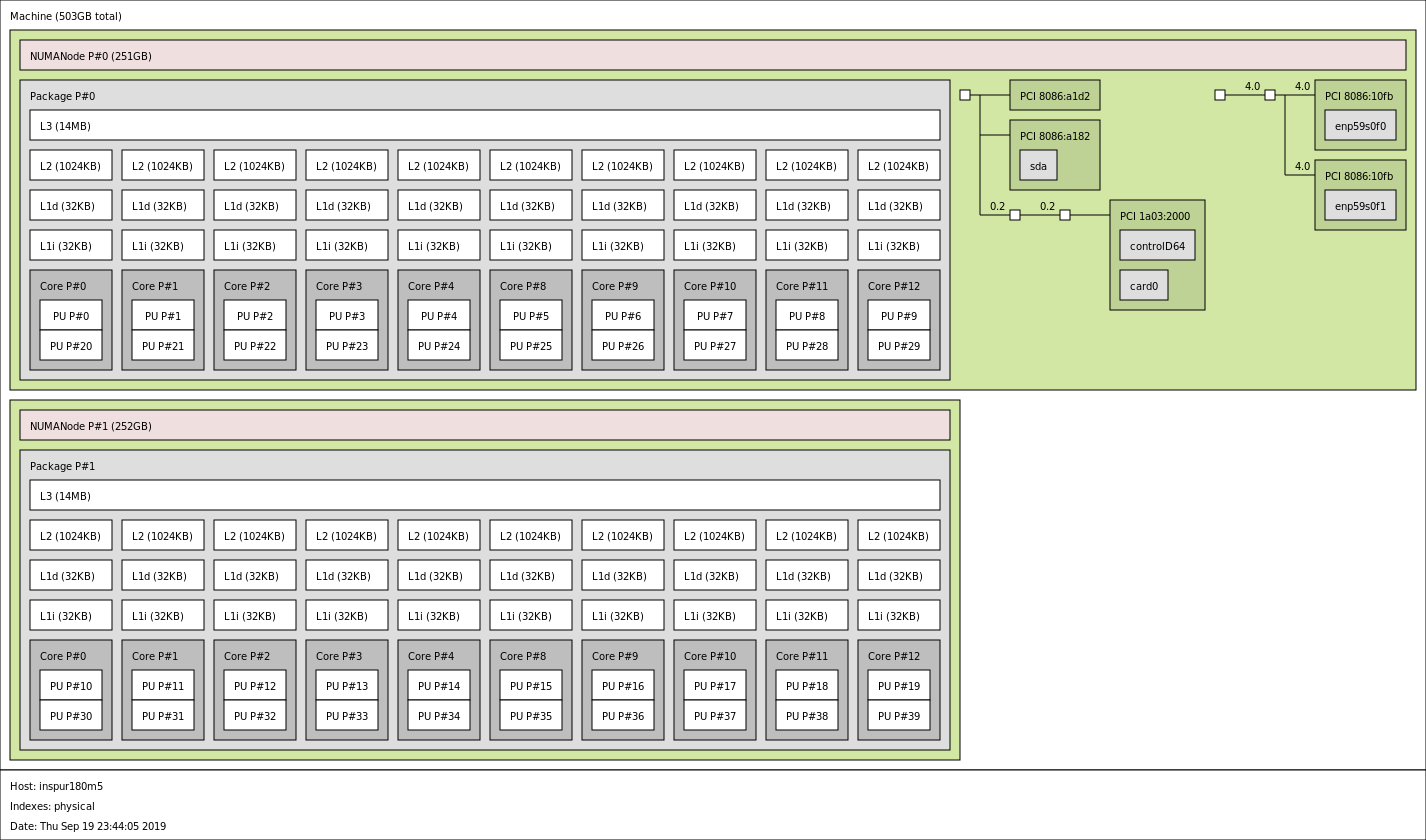

A quick note here: we did not utilize Intel Optane DCPMM because we had standard chips. Even with two 128GB modules per CPU, which stays well below the 1TB per CPU memory limit, using Intel Optane DCPMM would have meant our memory would run at only DDR4-2666 speeds. Here is what the topology looks like with the dual Intel Xeon Gold 5115 CPUs installed:

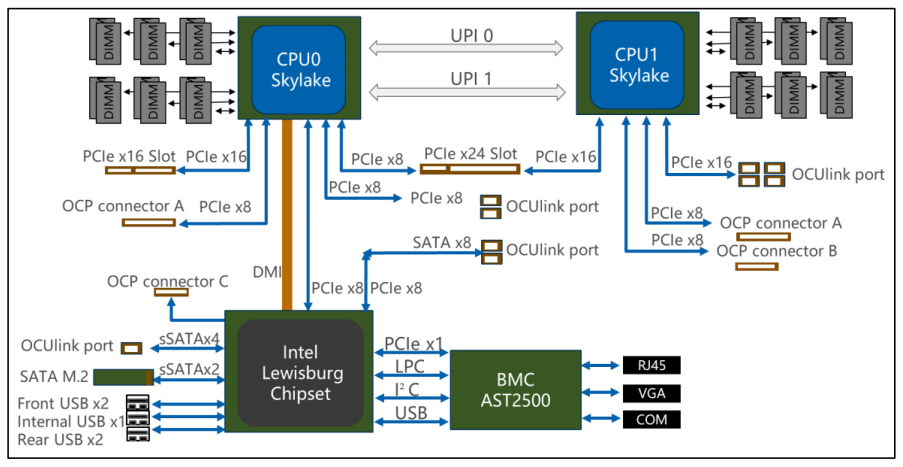
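To make the capacity side of the Optane DCPMM note concrete, here is a quick back-of-the-envelope check. It assumes the 12x 32GB RDIMMs are split evenly across the two CPUs, that all of them stay installed alongside the DCPMM modules, and that the 1TB per CPU limit counts DRAM and DCPMM together. Those are our assumptions for the sketch, not measured values.

```python
GB_PER_TB = 1024


def per_cpu_memory_gb(dimms_per_cpu, dimm_gb, dcpmm_per_cpu, dcpmm_gb):
    """Total DRAM plus DCPMM capacity attached to one CPU, in GB."""
    return dimms_per_cpu * dimm_gb + dcpmm_per_cpu * dcpmm_gb


# 6x 32GB RDIMMs per CPU (assumed even split) plus 2x 128GB DCPMM per CPU.
total_gb = per_cpu_memory_gb(6, 32, 2, 128)
headroom_gb = 1 * GB_PER_TB - total_gb
print(f"{total_gb} GB per CPU, {headroom_gb} GB under the 1TB limit")
```

Under those assumptions, capacity is not the constraint. The memory speed reduction is what kept DCPMM out of this test configuration.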

You can see the system’s block diagram here:

Overall, we like the electrical layout of the system, which utilizes all 48 PCIe lanes per CPU. One of the more distinctive features is that Inspur adds an additional PCIe x8 link to the Lewisburg PCH.

Next, we are going to take a look at the Inspur Systems NF5180M5 CPU performance.

They are not going to get a lot of sales with their current “purchase/contact us” page. The server details page is also not elaborate. The TS860G3 info page has all product images broken.

If they showed at least a ballpark price, lead times, and available components (which ‘Intel Xeon Scalable’ CPUs are supported?) and paid at least some attention to the form, it might be better.

“Maximum Observed: 0.6W” that’s a very power-efficient server ;)

@altmind

Yea, it’s interesting to read about these challenger brands, but frustrating that they don’t offer any outlet besides wholesale bulk purchases and then make it difficult to find out any real information on their products.

The big brands need challenging, it’s what keeps the market vibrant, but when I read a glowing review for a product you cannot practically buy, it’s somewhat disappointing.

This server is available in the market. Inspur is in over 110 countries and regions across the globe. You should check on their website.