Facebook buys a lot of hardware and founded the Open Compute Project, and it is using that purchasing power to push vendors towards open standards. Both Facebook and Microsoft have been driving OCP to meet their operational needs and to avoid vendor lock-in. As a result, the companies are promoting standards that extend beyond server building blocks and into how multiple vendors deliver technology for OCP servers. The new OCP Open Accelerator Module, or OAM, is Facebook’s form factor for the accelerators it wants to deploy.

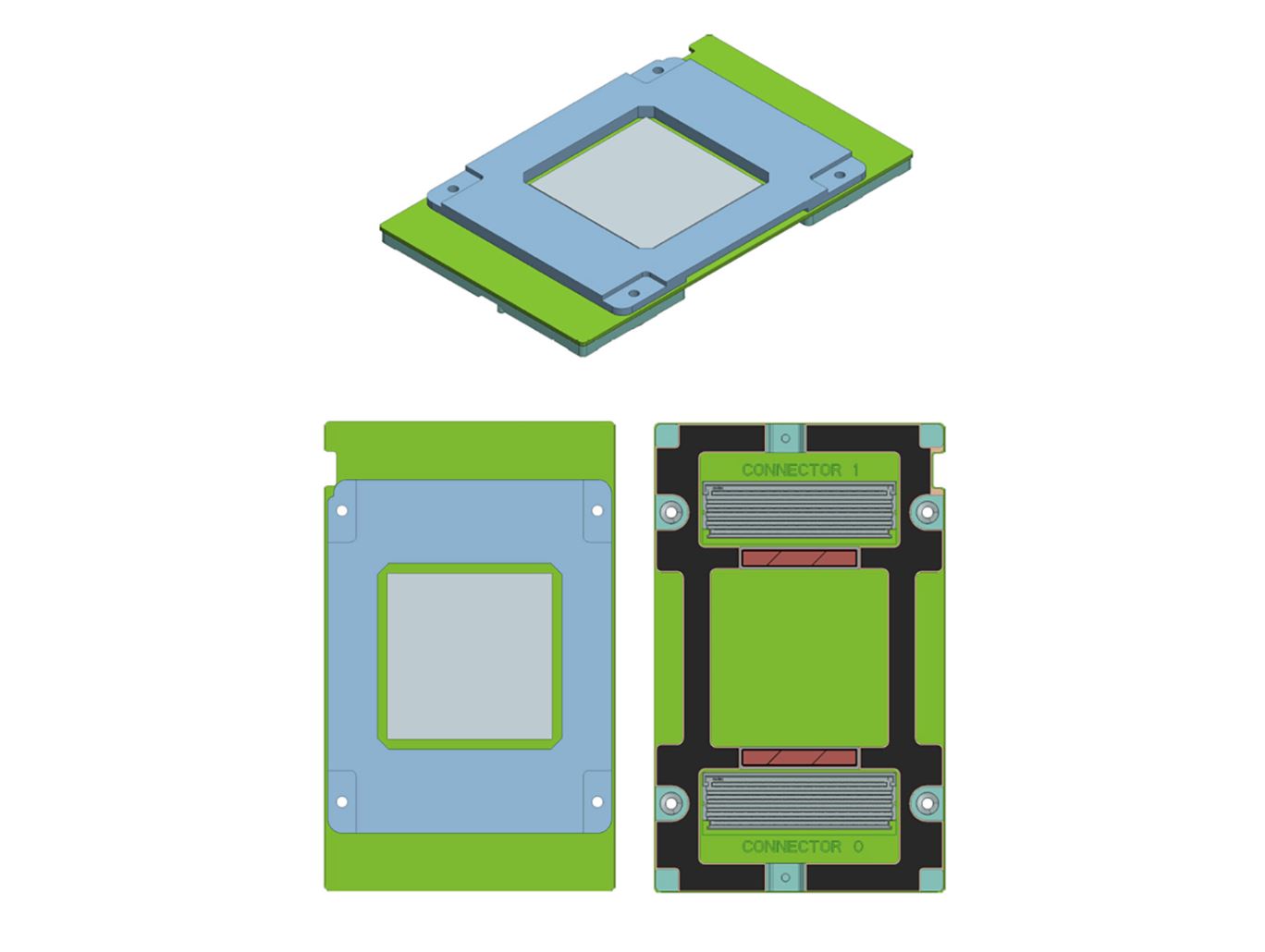

Facebook OCP Open Accelerator Module OAM

The Emerald Pools system supports up to eight OCP Open Accelerator Modules. Here are the basic form factor drawings for OAM.

This is an OCP OAM with its associated heatsink.

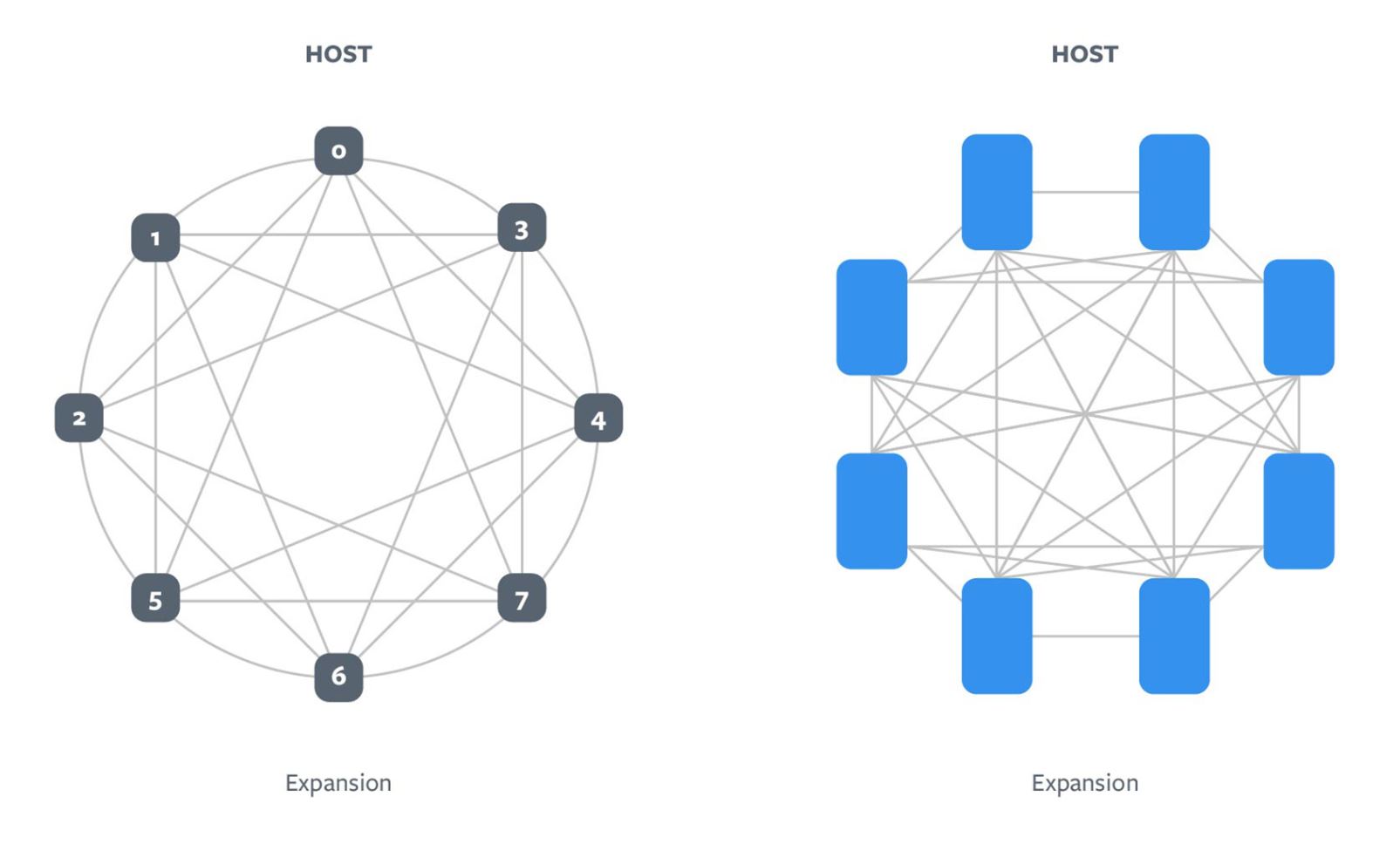

With the OCP Accelerator Module, Facebook is enabling several topologies for accelerator-to-accelerator communication.
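To give a feel for what the link budget allows, here is a minimal Python sketch of one possible topology. It assumes the figures from the key specs in this article (up to eight modules per system, up to seven x16 interconnect links per module) and one link per pair of modules. The fully connected layout and the function names are our illustration, not something taken from the OAM specification.

```python
from itertools import combinations

# Figures from the key specs in this article.
MAX_MODULES = 8
MAX_INTERCONNECT_LINKS = 7


def fully_connected_links(num_modules):
    """Return every module pair, assuming one link per pair (illustrative)."""
    return list(combinations(range(num_modules), 2))


def links_per_module(num_modules, links):
    """Count how many links terminate at each module."""
    counts = [0] * num_modules
    for a, b in links:
        counts[a] += 1
        counts[b] += 1
    return counts


links = fully_connected_links(MAX_MODULES)
per_module = links_per_module(MAX_MODULES, links)

print(f"Total module-to-module links: {len(links)}")
print(f"Links used per module: {max(per_module)} of {MAX_INTERCONNECT_LINKS}")
```

Under these assumptions, connecting every module directly to every other one uses exactly the seven interconnect links each module can offer. Sparser layouts would leave links free for extra bandwidth between selected pairs.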

We have already seen Intel show off a Spring Crest OAM module in our piece: Intel OCP Summit 2019 Keynote CLX Cooper Lake and Spring Crest.

We will have pieces later during OCP on Zion and the OAM infrastructure when we can get some more hands-on time with the modules.

Key OCP Accelerator Module Specs

Here are the key specs for the OCP Accelerator Module:

- Support for both 12V and 48V as input

- Up to 350W (12V) and up to 700W (48V) TDP

- Dimensions of 102mm x 165mm

- Support for single or multiple ASICs per module

- Up to eight x16 links (host + inter-module links)

- Support for one or two x16 high-speed links to host

- Up to seven x16 high-speed interconnect links

- Expected to support up to 450W (air-cooled) and 700W (liquid-cooled)

- System management and debug interfaces

- Up to eight accelerator modules per system
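A quick back-of-the-envelope calculation helps show why the higher TDP ceiling is paired with the 48V input. This is a minimal sketch using only the TDP and voltage figures from the list above and the basic relation I = P / V. It ignores conversion losses and any per-pin connector limits, which this article does not cover.

```python
# Input voltage (V) -> maximum TDP (W), taken from the spec list above.
OAM_POWER_LIMITS = {12: 350, 48: 700}


def input_current_amps(tdp_watts, volts):
    """Ideal input current for a given power draw, ignoring losses."""
    return tdp_watts / volts


for volts, tdp in OAM_POWER_LIMITS.items():
    amps = input_current_amps(tdp, volts)
    print(f"{volts}V input at {tdp}W: about {amps:.1f} A")
```

Even at twice the power, the 48V case draws roughly half the current of the 12V case (about 14.6 A versus 29.2 A).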

These are also designed to fit into standard 19″ racks, which means we could see future mainstream server offerings utilize OAM designs.

Support for the OAM

The OCP Accelerator Module project has a strong set of companies signed up. Some of these are:

- Intel

- AMD

- NVIDIA

- Graphcore

- Xilinx

- Baidu

- Alibaba

- Microsoft

- Tencent

- Huawei

- Molex

- Wiwynn

- Lenovo

- Bittware

- Qualcomm

- Habana

- Quanta

- Penguin Computing

Many of these members appear to be standardizing on the new OAM form factor. In person, the modules look like larger versions of the NVIDIA SXM2 modules. We covered installing SXM2 in How to Install NVIDIA Tesla SXM2 GPUs in DeepLearning12, which you can see in action here: