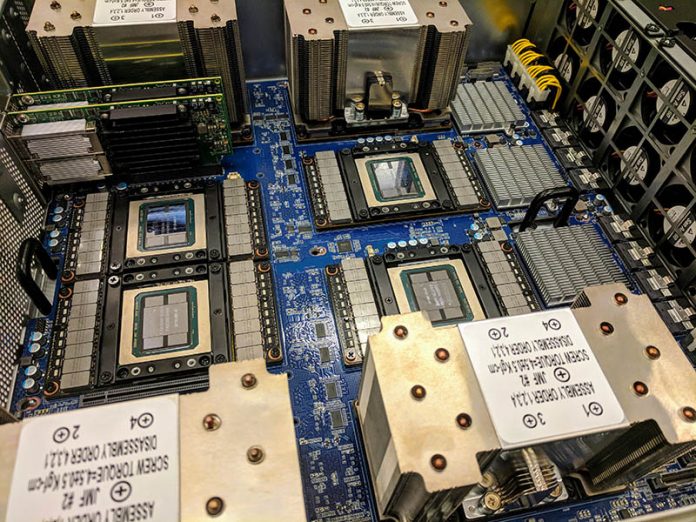

As part of our DeepLearning12 build, we had to install NVIDIA Tesla P100 GPUs. These NVIDIA Tesla GPUs are SXM2 based. SXM2 is NVIDIA’s form factor for GPUs that allows for NVLink GPU-to-GPU communication, which is significantly faster than communication over the PCIe bus. The installation of SXM2 GPUs is significantly more difficult than that of PCIe GPUs, so we made a quick video of the process.

DeepLearning12 the DGX-1.5 Build

We are going to have more on the actual build later, but we are calling DeepLearning12 the DGX-1.5. That is because the system has a number of updates to the original DGX-1 including using a newer architecture.

- Barebones: Gigabyte G481-S80

- CPUs: Intel Xeon Gold 6136

- RAM: 12x 32GB Micron DDR4-2666

- Networking: 4x Mellanox ConnectX-4 EDR/100GbE adapters, 1x Broadcom 25GbE OCP, 1x Mellanox ConnectX-4 40GbE

- SSDs: 4x 960GB SATA SSDs

- NVMe SSDs: 4x 2TB NVMe SSDs

We are calling this a DGX-1.5 because DeepLearning12 is based on the Gigabyte G481-S80, an Intel Skylake-SP platform. Although Skylake was a step backward for low-cost single root PCIe servers, Skylake-SP has a number of benefits for the larger NVLink systems. These benefits include an increase in memory bandwidth of over 50%, a better IIO structure for PCIe access, and more PCIe lanes for additional NVMe and networking capabilities.
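As a rough sanity check on the memory bandwidth figure, here is a minimal sketch of the theoretical peak per socket. It assumes six DDR4-2666 channels per Skylake-SP CPU (consistent with the 12 DIMMs across two CPUs listed above) and, as an assumption not stated in this article, four DDR4-2400 channels for the prior-generation Xeon E5 v4 platform. Real-world bandwidth will be lower than these theoretical peaks.

```python
# Theoretical peak DRAM bandwidth per socket:
#   channels * mega-transfers per second * 8 bytes per transfer (64-bit channel)
BYTES_PER_TRANSFER = 8


def peak_bandwidth_gbs(channels: int, mega_transfers: int) -> float:
    """Return the theoretical peak bandwidth in GB/s for one CPU socket."""
    return channels * mega_transfers * BYTES_PER_TRANSFER / 1000


# Skylake-SP: 6 channels of DDR4-2666 (matches 12 DIMMs over 2 sockets).
skylake_sp = peak_bandwidth_gbs(channels=6, mega_transfers=2666)

# Assumed baseline: prior-generation platform, 4 channels of DDR4-2400.
previous_gen = peak_bandwidth_gbs(channels=4, mega_transfers=2400)

increase_pct = (skylake_sp / previous_gen - 1) * 100

print(f"Skylake-SP:   {skylake_sp:.1f} GB/s per socket")
print(f"Previous gen: {previous_gen:.1f} GB/s per socket")
print(f"Increase:     {increase_pct:.1f}%")
```

Under those assumptions, the theoretical increase works out to roughly two thirds, which is in line with the "over 50%" figure.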

We started DeepLearning12 from a barebones box. Here is the (heavy) Gigabyte G481-S80 as it arrived in a small pallet box in the data center.

From here, we had to build the system ourselves.

As you can see, we had eight SXM2 NVIDIA Tesla P100s ready for installation along with a lot of extra gear. This is ~$65,000 worth of gear sitting on the table at Element Critical in Sunnyvale, California waiting for installation.

Just as a note here, while this was successful, I spoke to Rob Ober, Tesla Chief Platform Architect at NVIDIA, at Hot Chips 30. He was surprised that we successfully installed SXM2 GPUs saying that he had only heard of 1-2 other people who have done it themselves. Apparently big OEMs have jigs that they use to ensure that they do not break pins. We did not have a jig.

NVIDIA Tesla SXM2 GPU Installation in DeepLearning12

We made a video of the GPU installation process. Everything else was easy to install. The GPU installation took several days.

Since Element Critical is home to a number of deep learning/AI clusters in Silicon Valley, we had an interesting experience. Someone who works on the large clusters walked by and told us “you aren’t using that screwdriver are you?” The ensuing discussion was immensely valuable. Over-torquing the screws, especially the heatsink screws, can damage or crack the GPU. We have heard stories even from major OEMs that the over-torque threshold is somewhere around 15%.

As part of the process, we bought an expensive screwdriver off of Amazon, the CheckLine TSD-50, which had the right tolerances, calibration, and accuracy. This digital driver allowed us to successfully install all eight GPUs, which worked the first time.

Final Words

We are going to have more on DeepLearning12 and the Gigabyte G481-S80 in the coming weeks. Thus far the system has been performing flawlessly. We are still working on what our exact CPU recommendation will be. Stay tuned for more.

Blue PCB? Is that an EVT?

Hugh, Gigabyte uses blue PCB on their server motherboards. They also use blue in some of their ODM/OEM designs. There are some HPE CloudLine servers with blue PCB because they are Gigabyte designs.

Nice system, I guess that in fp32 it will be slower than Deeplearning 11 and in all other apps faster.

Misha – beyond just raw numbers, at the application level it is a different ballgame due to the memory bandwidth and GPU to GPU bandwidth. Also, having one 100gbps adapter per two GPUs means that feeding via GPUdirect RDMA is much different than a 1080 Ti system.

Patrick – Can you let both systems run Gromacs (STH’s favorite AVX-512 app)? Sure, it’s different, but so is the price. Looking forward to the differences between one 100 Gbps adapter per 2 GPUs and 16 PCIe-3 lanes per GPU. The difference in memory bandwidth makes a lot of difference, as does the NVLink. Can’t wait for AMD jumping on the bandwagon with the upcoming VEGA 20 with XGMI and 1.2 TB/s of memory bandwidth. Too bad Intel had to go back to the drawing board with Xeon Phi being EoL.

$65k worth of gear, the special $350 screwdriver must have been the cherry on top toward the end. Well worth the investment!

Patrick – Can you explain the architecture with GPUdirect RDMA and measure the performance benefits? I think it’s highly beneficial in Skylake’s NUMA architecture?

Hi Martin, we will have a series. This is something I have already brought Mellanox over to the lab to discuss doing.

Great writeup and video. I didn’t even know Gigabyte made servers like this

What has the stability of this system been like?

k8s, so far great. We have been rebooting it once a quarter for patches but that is it.

Thanks for the reply!

Any plans to build a deeplearning13? I hope to see AMD make some effort to get more into the AI market.

We are going to have something really interesting in the next 2 weeks. We are not saying this is “DeepLearning13” but it is an awesome server.