EDSFF E3

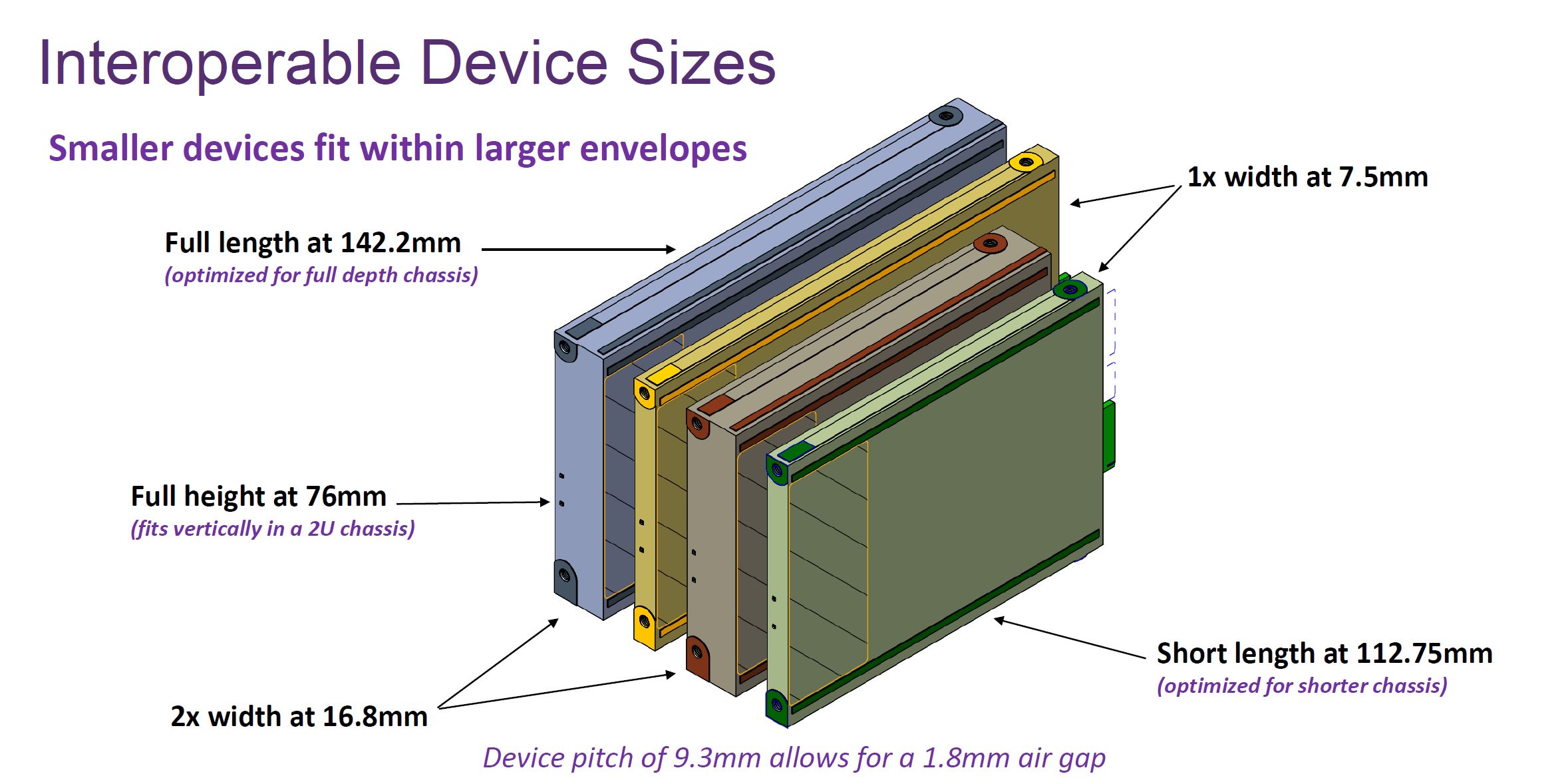

The EDSFF E3 specification has four primary form factors: E3.L and E3.S, each in either a single or double width. The “double” width is 16.8mm, while the single width is 7.5mm. For those wondering why, since 7.5mm x 2 = 15mm: the double width is two single widths plus the 1.8mm air gap that would otherwise sit between two 7.5mm devices, for a total of 16.8mm. Some vendors call single-width “1T” and double-width “2T” (“T” is for “thick”), and for some reason, the way I remember this is double-width as in a double-wide trailer.
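To make the width arithmetic concrete, here is a minimal Python sketch. It assumes, as described above, a uniform 1.8mm air gap between adjacent devices; the constant and helper names are hypothetical and not taken from the EDSFF specification. Besides the 16.8mm figure, it checks that one 2T device consumes the same slot pitch as two 1T devices.

```python
import math

# Hypothetical constants taken from the text above, not from the spec itself.
SINGLE_WIDTH_MM = 7.5  # E3 "1T" device width
AIR_GAP_MM = 1.8       # assumed uniform air gap between adjacent devices


def double_width_mm(single: float = SINGLE_WIDTH_MM, gap: float = AIR_GAP_MM) -> float:
    """A 2T device spans two 1T widths plus the gap that would sit between them."""
    return 2 * single + gap


def slot_pitch_mm(width: float, gap: float = AIR_GAP_MM) -> float:
    """Space a device consumes in a row: its own width plus one air gap."""
    return width + gap


def two_t_fits_two_1t_slots() -> bool:
    """True if one 2T device uses exactly the pitch of two 1T devices."""
    return math.isclose(slot_pitch_mm(double_width_mm()), 2 * slot_pitch_mm(SINGLE_WIDTH_MM))


# E3.S and E3.L each come in both widths, giving the four primary form factors.
WIDTHS_MM = {"1T": SINGLE_WIDTH_MM, "2T": double_width_mm()}
E3_FORM_FACTORS = [f"{size} {w}" for size in ("E3.S", "E3.L") for w in WIDTHS_MM]

if __name__ == "__main__":
    print(E3_FORM_FACTORS)              # ['E3.S 1T', 'E3.S 2T', 'E3.L 1T', 'E3.L 2T']
    print(f"{WIDTHS_MM['2T']:.1f} mm")  # 16.8 mm
    print(two_t_fits_two_1t_slots())    # True
```

The pitch check is why mixing widths works in a single chassis row: under this assumption, two 1T slots and one 2T slot each take 18.6mm.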

The E3.S 1T device is roughly similar in size to a U.2/U.3 2.5″ drive. It takes up a bit of extra room, which is possible because these drives do not use drive trays.

Key here is that this is really the first re-imagining of a data center-focused form factor since perhaps the 1988 release of a 2.5″ hard drive. Previous generations of SATA, SAS, and NVMe SSDs used the 2.5″ form factor to retain compatibility with hard drives, but that need is effectively going away, as 2.5″ hard drives are no longer a reasonable performance option in the data center and have not been for years. This transition reinforces storage tiering, with hard drives pushed into 3.5″ capacity storage chassis.

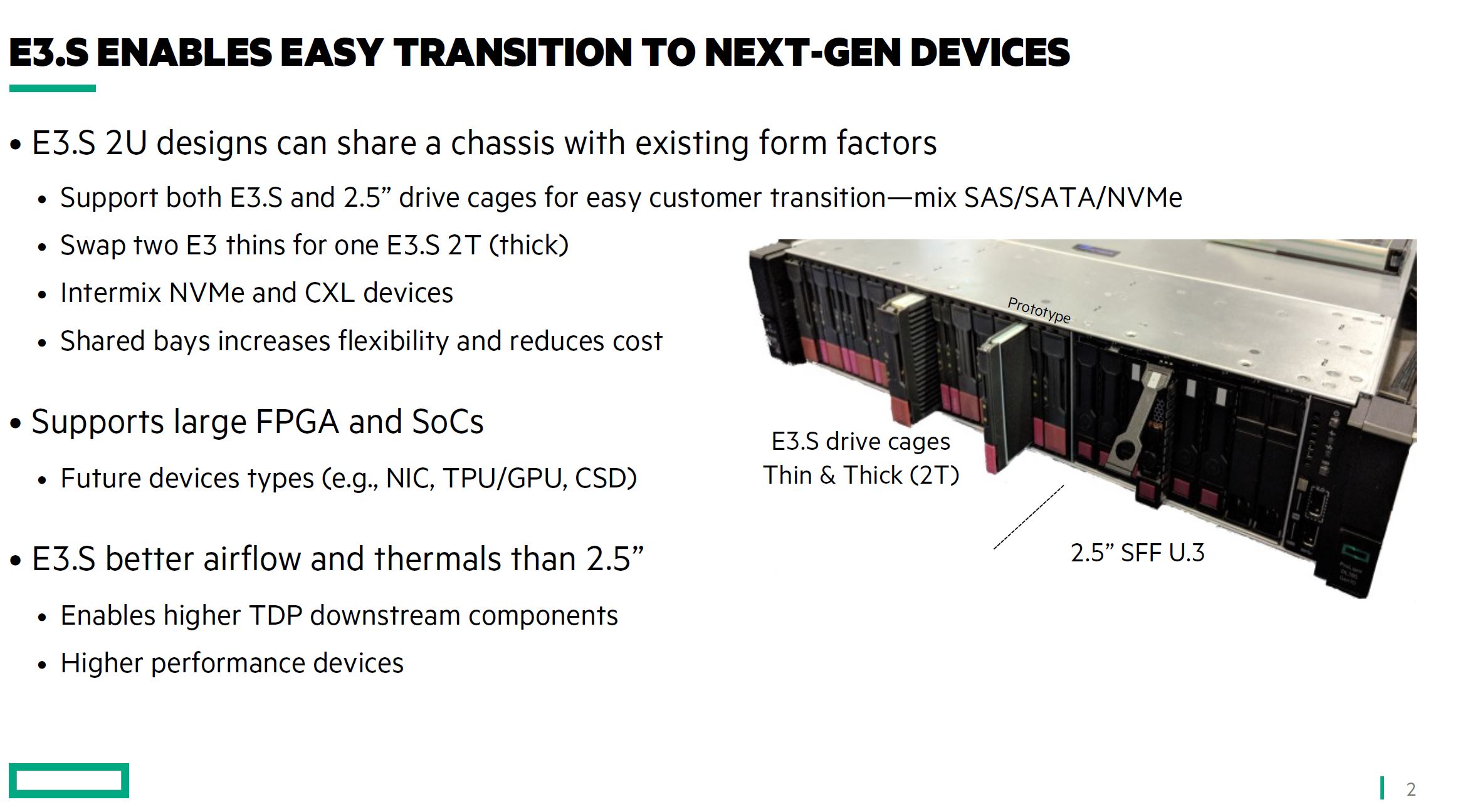

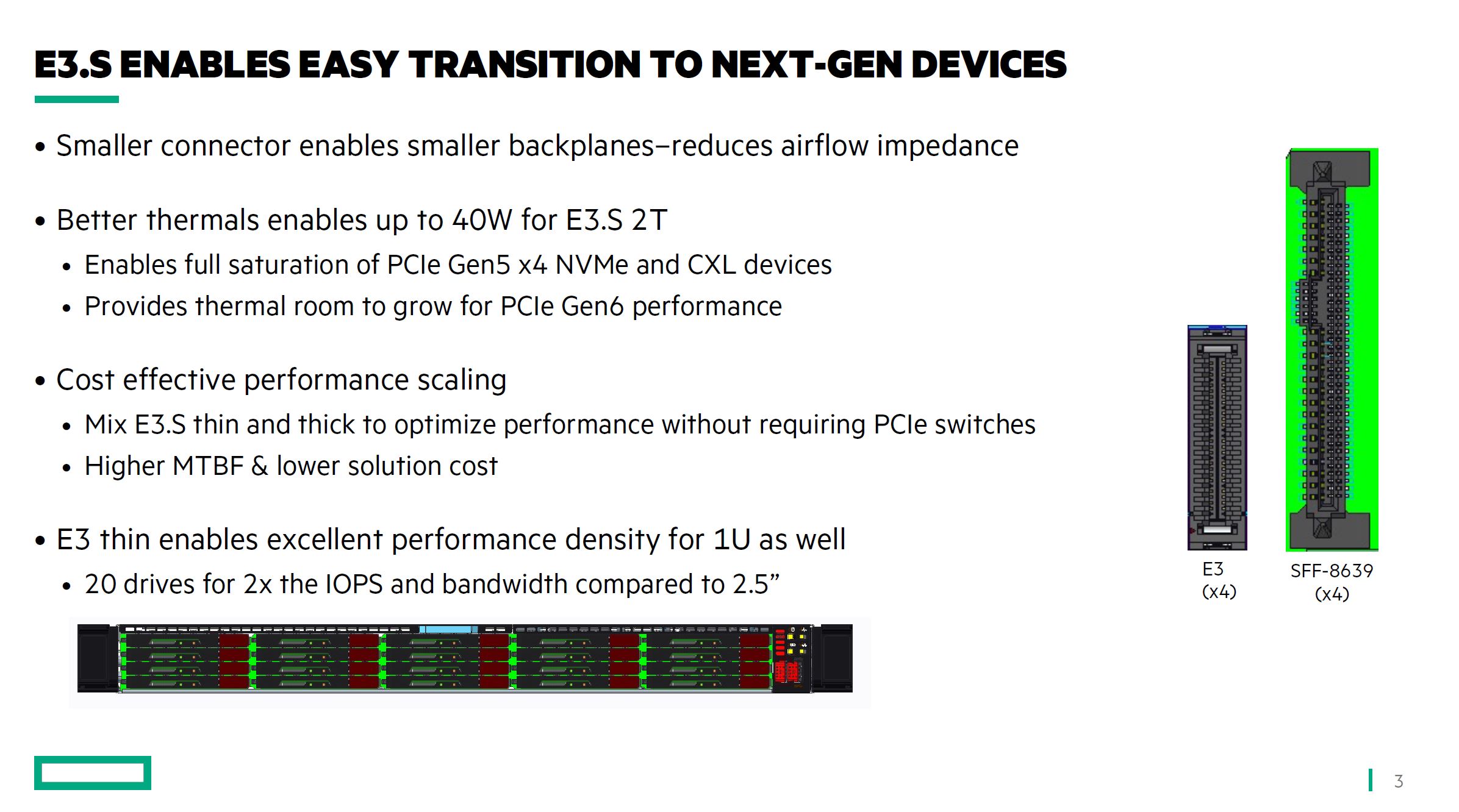

Traditional server vendors are using E3.S 1T and 2T devices as an option to help traditional server buyers transition to the next generation of devices. Here HPE is showing its prototype with swappable drive cages to enable 2.5″ SFF U.3 or E3.S 1T/2T devices in a ProLiant server.

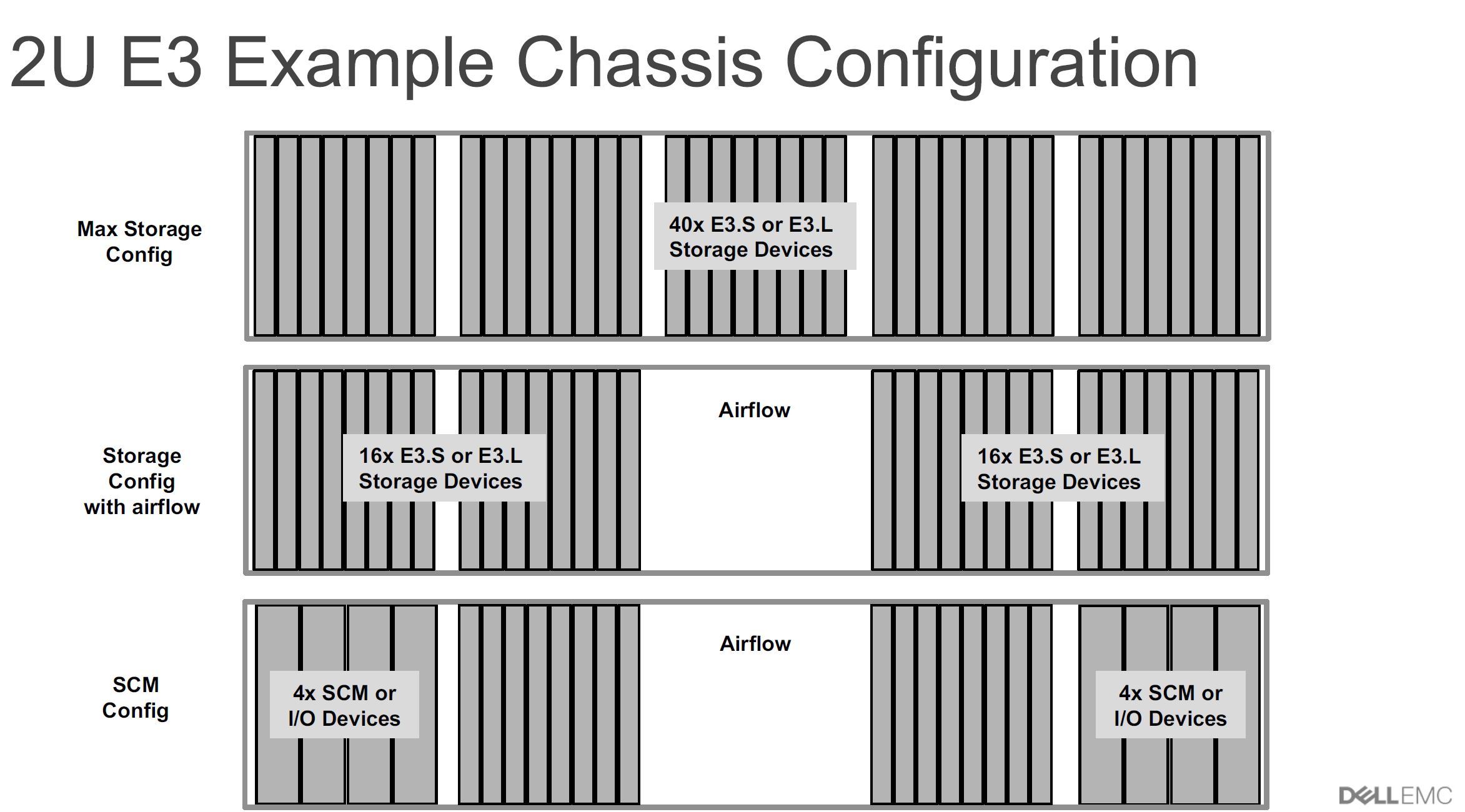

Dell EMC has also shown off E3 drive configurations with up to 40 drives across. Both HPE and Dell EMC are discussing the use of E3 not just for SSDs, but also for storage-class memory, GPUs, FPGAs, NICs, and other accelerators. With power and cooling targets ranging from 25W to 70W, there is enough cooling for devices up to DPUs and even NVIDIA T4-type AI accelerators.

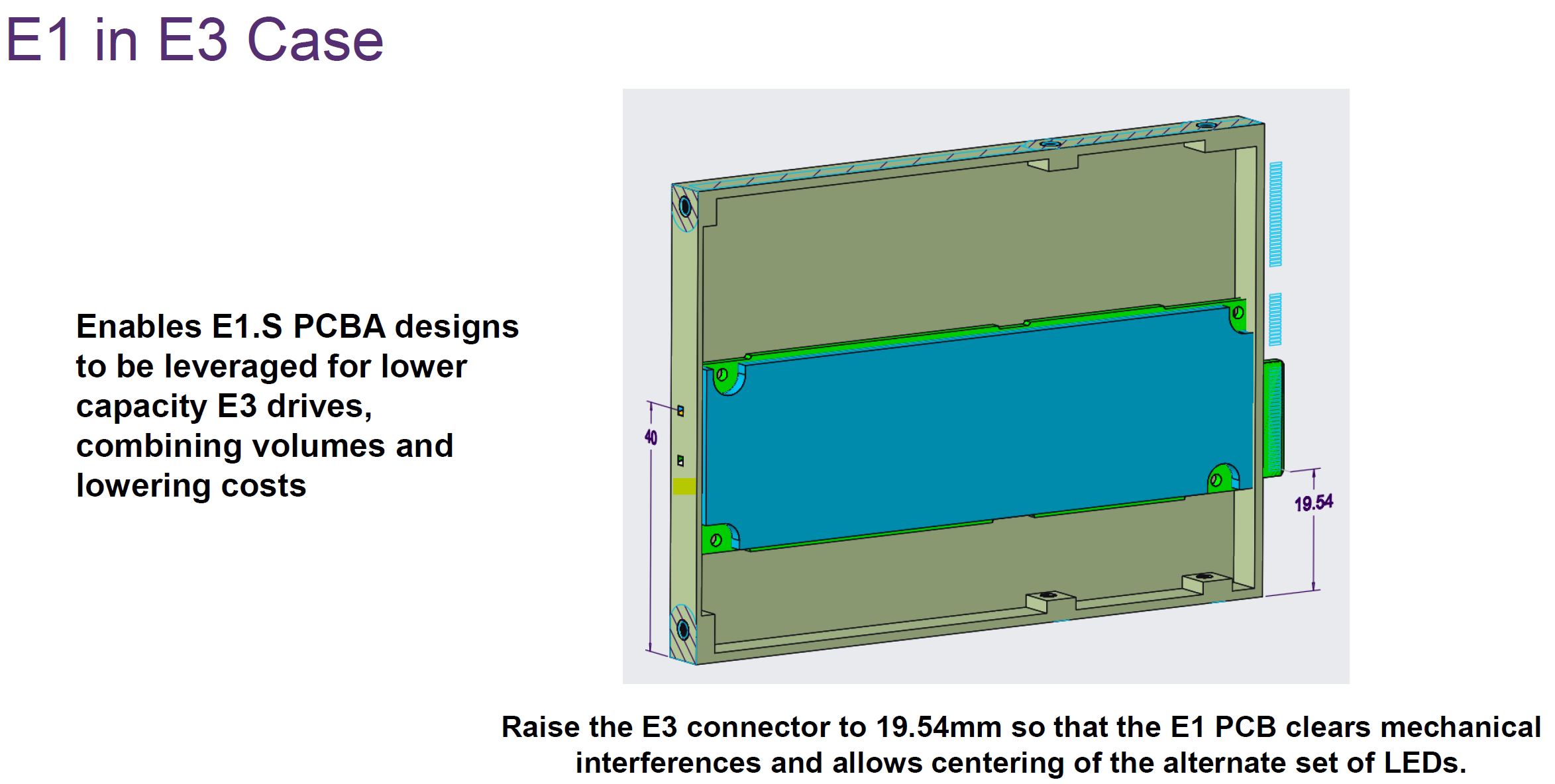

For those wondering about the relationship between E3 and the E1 variants we have discussed previously, the basic x4 connector is shared between the two.

There are also concepts for housing an E1 drive in an E3 case.

The additional height of E3 also allows for an x8 connector, which may be important where more lanes are needed. For context, the E3 form factor is being positioned as a replacement for dual-port NVMe and SAS SSDs. Higher-power devices, such as 70W accelerators, may also want more lanes.
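For a rough sense of what the extra lanes buy, the Python sketch below estimates one-direction link bandwidth for x4 and x8 connectors. It assumes the per-lane rates of PCIe Gen3 through Gen5 (8, 16, and 32 GT/s) with 128b/130b encoding, ignores protocol overhead, and omits Gen6, whose PAM4/FLIT signaling would need a different model. The `dual_port_split` helper is hypothetical, illustrating how a dual-port device might divide its lanes between two hosts.

```python
from __future__ import annotations

# Approximate per-direction PCIe link bandwidth for an x4 vs x8 EDSFF connector.
# Assumes 128b/130b line encoding (PCIe Gen3-Gen5) and ignores packet/protocol
# overhead, so real-world throughput will be lower. Gen6 is intentionally omitted.

TRANSFER_RATE_GT_S = {3: 8.0, 4: 16.0, 5: 32.0}  # raw per-lane rate by generation
ENCODING_EFFICIENCY = 128 / 130


def link_bandwidth_gb_s(gen: int, lanes: int) -> float:
    """Approximate one-direction bandwidth in GB/s for a PCIe link."""
    if gen not in TRANSFER_RATE_GT_S:
        raise ValueError(f"unsupported PCIe generation: {gen}")
    if lanes <= 0:
        raise ValueError("lanes must be positive")
    bits_per_s = TRANSFER_RATE_GT_S[gen] * 1e9 * lanes * ENCODING_EFFICIENCY
    return bits_per_s / 8 / 1e9  # bits -> bytes -> GB


def dual_port_split(lanes: int) -> tuple[int, int]:
    """Hypothetical helper: split a connector's lanes evenly across two host ports."""
    if lanes <= 0 or lanes % 2:
        raise ValueError("dual-port split needs a positive, even lane count")
    return lanes // 2, lanes // 2


if __name__ == "__main__":
    for lanes in (4, 8):
        print(f"Gen5 x{lanes}: ~{link_bandwidth_gb_s(5, lanes):.1f} GB/s per direction")
    print("x4 dual-port lanes per port:", dual_port_split(4))
    print("x8 dual-port lanes per port:", dual_port_split(8))
```

Under these assumptions, a Gen5 x4 link works out to roughly 15.8 GB/s per direction and x8 to roughly 31.5 GB/s. A dual-port x8 device could give each port x4, rather than the x2 per port available on an x4 connector.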

If we recall the market share numbers from earlier in this article, E3 is not expected to drive the same volume as E1.S, but there seems to be a lot of industry effort behind it.

Latches and Other Form Factor Considerations

There are a few other fun parts of EDSFF that we wanted to cover quickly, and one is perhaps the most fun. We mentioned that E1 and E3 drives are designed to align to the chassis for insertion without drive trays/caddies. For 2.5″ drives, those trays hold the latching mechanisms that secure drives in place. Yet the EDSFF drive spec does not currently encompass a common latch.

There are a few reasons given for this, but let us “get real” for a moment. The EDSFF E1 and E3 drive specs do not include this latch because server OEMs do not want it. If there were a common latching mechanism, a drive manufacturer could make a drive that could be used in any EDSFF server. Companies like Dell, HPE, and Lenovo charge premiums for drives that come in their respective trays, so the simple mechanical latch has some profitability metric attached. Further, a standard latch might not match the colors of a server that spends most of its time locked in a data center, except when it is being featured in an STH server review. For perspective, in a data center we just toured, one could not even see the drive trays.

Hopefully, the ecosystem can figure this out, since a common latch would encourage re-use and a circular economy.

Aside from this, the new connector is specifically designed for the next-generation PCIe Gen5 and Gen6 era, where we will also have CXL-connected devices. The new electrical specification provides better signal integrity without needing PCIe Gen5 and CXL 2.0 retimers, which helps control server costs.

Final Words

Hopefully, this was a helpful look at the next generation of SSD, or more broadly lower-power PCIe/CXL device, form factors. EDSFF is a major force in the industry that is being deployed today, and we will see it rapidly take over from the current-generation M.2 and U.2/U.3 2.5″ form factors in the data center.

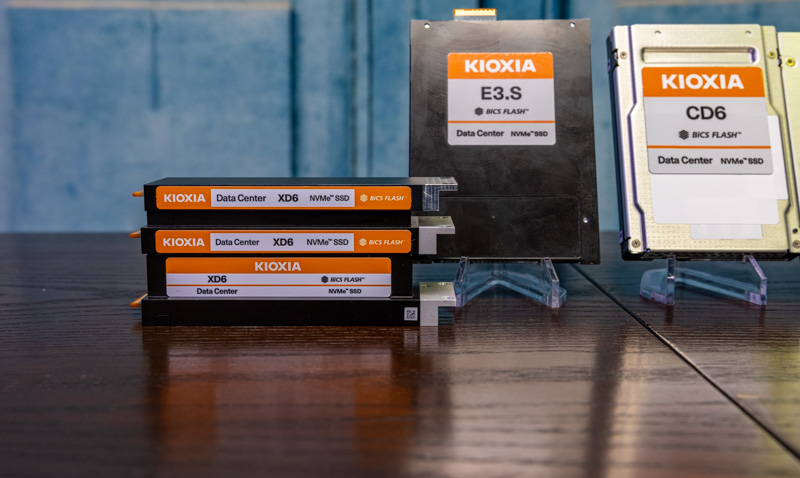

We just wanted to say thank you to the folks at Kioxia for getting us the drives with different EDSFF form factors that we could show. For many of our readers, these types of comparative pictures help to make the new form factors more tangible.

This is otherwise known as “ruler” storage due to its dimensions. I have spoken to several OEMs about ruler storage and its viability in the future. The problem is standards. When ruler storage was proposed, everyone was excited about it, but no one wanted to agree on a format and connectors, even though Intel had one in place. HPE, Dell, Supermicro, and Lenovo all had their own ideas on how ruler storage should operate. Most datacenter folks saw this as no different from the disk caddy differences. No OEM wanted to lose margin and certifications by having ruler storage be 100% interoperable between server brands. As long as the OEMs maintain the same standards and don’t stick on some proprietary front-end snap-in that only works with their equipment, it should take off pretty fast. If they wander off, then look for ridiculous unit pricing to maintain their margins.

I was excited about EDSFF when I found out about it a year or so ago, with its claims of being “optimized” for NVMe and enabling denser configs with 30TB drives. Unfortunately, that never happened, and the OEMs and ODMs stuck to U.2/U.3 outside of niche chassis SKUs. Now we have 30TB U.3 NVMe drives coming out, and EDSFF never took off.

I hope the adoption curve changes and EDSFF differentiates itself, but we will see if this ever makes it out of niche use cases.

U.3 is dead tech funded by Broadcom to keep its SAS controllers relevant. It makes me sick to see all the tech sites repping U.3.

Very informative, thank you. FYI, there’s an unfinished sentence: “We have been discussing next-gen server power consumption as part of this series, but this is a clear example of where that will…”

Is there any mention of whether a server's bay can support a SAS connection and E3 at the same time, so OEMs can use both SATA/SAS and E3 drives interchangeably in the same bays?

So what is the plan for storage in embedded network appliances? There are a lot of requirements for boot drives and non-user-accessible storage applications that use the M.2 NGFF form factor. From what I am hearing, Micron and Intel are planning on abandoning M.2 NGFF form factors starting with PCIe Gen5. Are there plans for an EDSFF embedded form factor?

3 years later and I still see a lack of adapters/cables for plugging EDSFF drives into servers with no pre-installed EDSFF interfaces. One option would be a PCIe card that cables to EDSFF drives and/or EDSFF backplanes could connect to, perhaps with an on-card PCIe bridge chip to decouple the PCIe slots from ESD and signal strength issues at the drive connector. Such a card could also let a single x16 slot handle more than 4 EDSFF drives without custom drivers, using just the generic PCIe bridge support in the server OS.

Without such adapter products, EDSFF use will be limited to server motherboards designed from the ground up to only work with those drives.

JD – Just as a heads-up – In the PCIe Gen6 generation, the U.2 connector is going away for storage. So adoption of EDSFF will no longer be an issue.