Dell EMC PowerEdge R7415 CPU Performance

At STH, we have benchmarked every single and dual socket AMD EPYC 7001 combination available, and we have at least a pair of each EPYC SKU in the lab. Normally with this type of review, we would focus solely on the nine current AMD EPYC single socket options that you can configure in the PowerEdge R7415. Since a big question for buyers is going to be AMD EPYC vs. Intel Xeon, we are going to add in a few single and dual Intel Xeon configurations to show the differences.

Running through our standard test suite generated over 1000 data points for each set of CPUs. We are cherry picking a few to give some sense of CPU scaling.

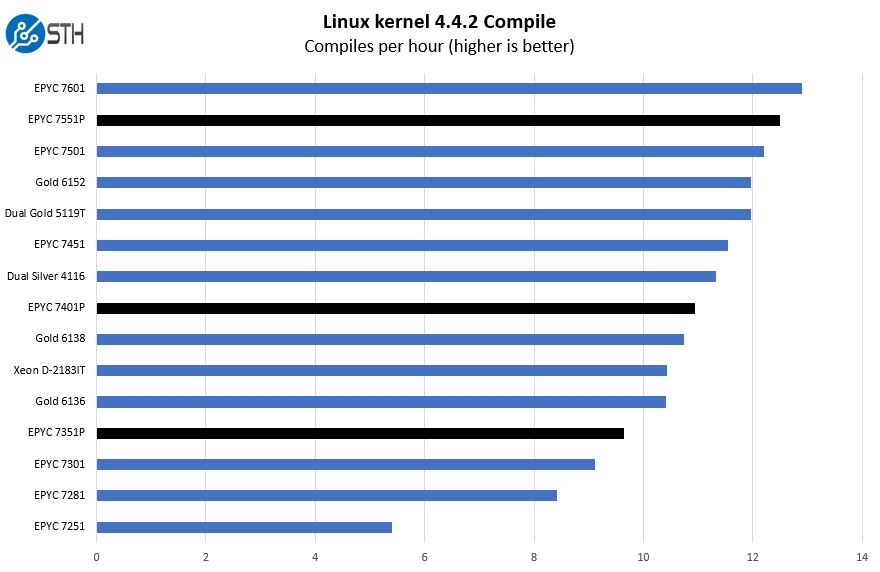

Python Linux 4.4.2 Kernel Compile Benchmark

This is one of the most requested benchmarks for STH over the past few years. The task is simple: we have a standard configuration file and the Linux 4.4.2 kernel from kernel.org, and we make the standard auto-generated configuration utilizing every thread in the system. We are expressing results in terms of compiles per hour to make the results easier to read.
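For readers who want to put their own timings on the same scale, the conversion to compiles per hour is simple arithmetic. The following is a minimal sketch, assuming you have the wall-clock time of one complete compile in seconds; the function name and the sample timings are hypothetical placeholders, not STH results.

```python
def compiles_per_hour(wall_seconds: float) -> float:
    """Convert the wall-clock time of one kernel compile into compiles per hour."""
    if wall_seconds <= 0:
        raise ValueError("wall_seconds must be positive")
    return 3600.0 / wall_seconds


# Hypothetical timings for illustration only, not measured results.
for label, seconds in [("system A", 90.0), ("system B", 120.0)]:
    print(f"{label}: {compiles_per_hour(seconds):.1f} compiles/hour")
```

Expressed this way, a higher number is better, which is easier to read on a chart than raw compile times where lower is better.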

Here is our configured AMD EPYC 7551P that Dell EMC sent and a few other configuration options compared to Intel Xeon solutions and even a few dual AMD EPYC solutions that can be used in servers like the PowerEdge R7425.

When Dell EMC and AMD say that a single socket AMD EPYC solution can compete with dual Intel Xeon Scalable solutions in the price range and even single Intel Xeon Gold SKUs that cost much more, that is the picture you should keep in mind.

We are going to highlight the P series CPUs in this section so you can look at the Dell EMC configurator and see why the P series CPUs are such a strong value in the market. The other key trend is that the AMD EPYC 7251 is the least expensive part here. With eight cores on four NUMA nodes, the performance bump between the AMD EPYC 7251 and EPYC 7351P is so large that we suggest making the AMD EPYC 7351P the minimum SKU to order given the relatively low price impact.

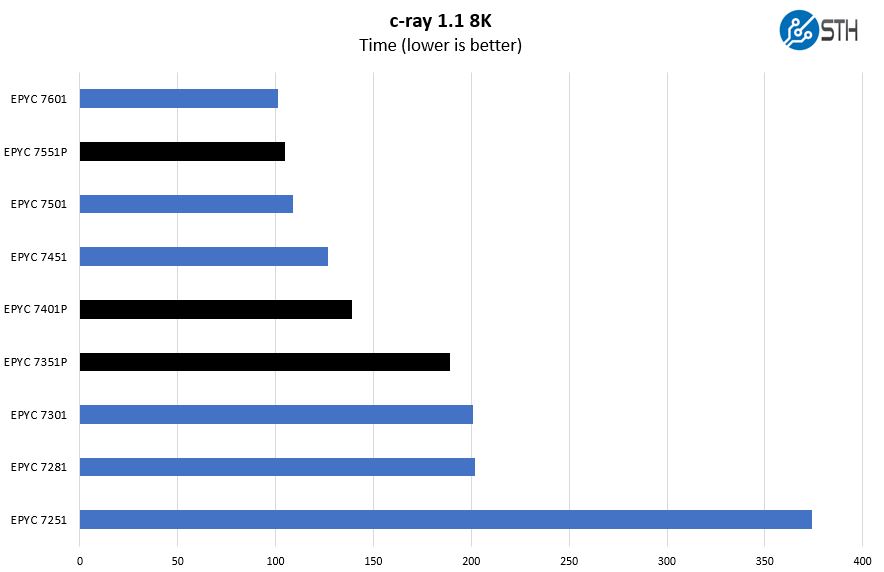

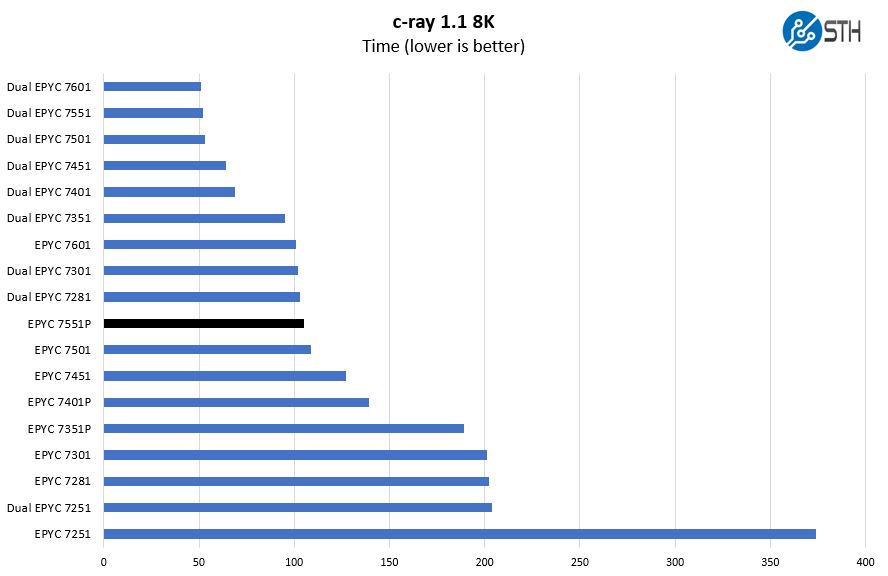

c-ray 1.1 Performance

We have been using c-ray for our performance testing for years now. It is a ray tracing benchmark that is extremely popular to show differences in processors under multi-threaded workloads. We are going to use our new Linux-Bench2 8K render to show differences.

First, we wanted to highlight every single AMD EPYC single socket solution for the PowerEdge R7415. We highlighted the P-series EPYC SKUs. These SKUs have great pricing in the PowerEdge configurator, so we see them as being the most popular options.

This is the SKU stack compared to every AMD EPYC 7001 series configuration. If you are looking to get a sense of Dell EMC PowerEdge R7415 to PowerEdge R7425 performance, this gives you an idea of where the single vs. dual AMD EPYC solutions stack rank. We highlighted the AMD EPYC 7551P here, which is the top-bin P series value part and the one Dell EMC selected for our PowerEdge R7415 configuration.

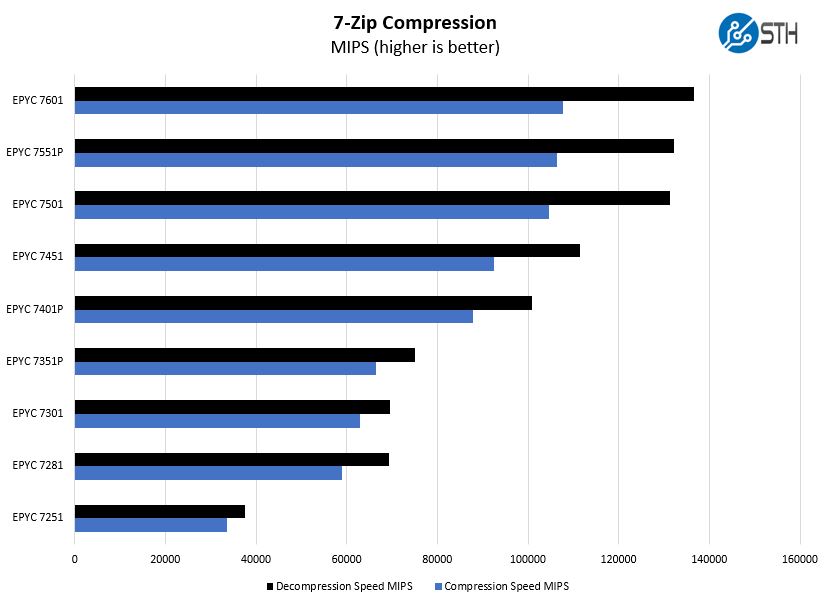

7-zip Compression Performance

7-zip is a widely used compression/decompression program that works cross-platform. We started using the program during our early days with Windows testing. It is now part of Linux-Bench.

If you are starting to notice a pattern here, it should be clear that the AMD EPYC 7551P is a better single-socket value than the AMD EPYC 7501 for the Dell EMC PowerEdge R7415. The EPYC 7601 also offers a minor performance upgrade, but the EPYC 7551P is a better value.

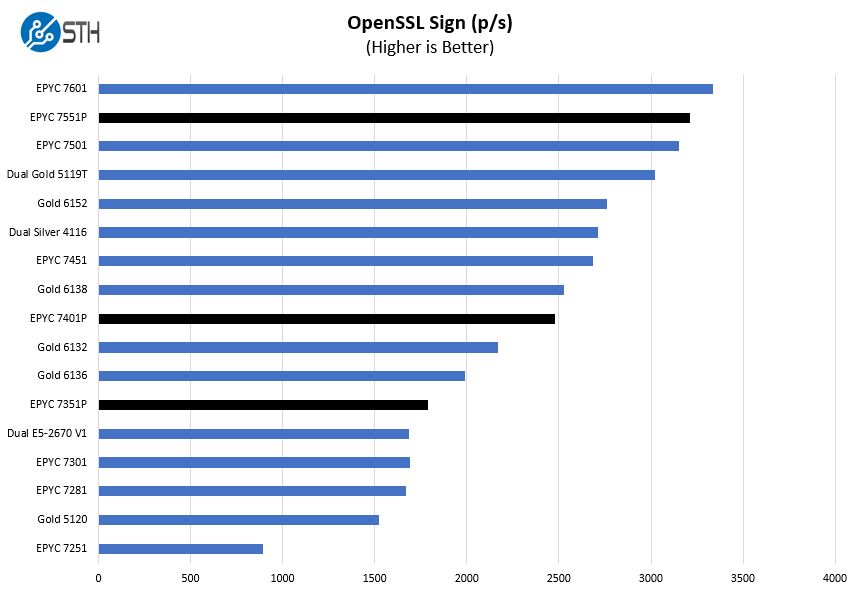

OpenSSL Performance

OpenSSL is widely used to secure communications between servers. This is an important protocol in many server stacks. We first look at our sign tests:

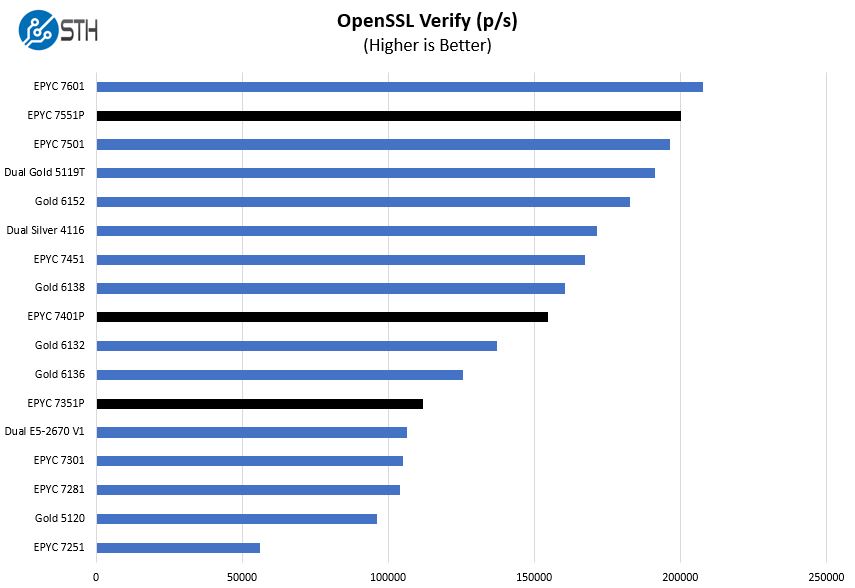

Here are the verify results:

We highlighted the AMD EPYC “P” series parts again. If you are looking toward Intel Xeon comparisons, you can see that the less expensive AMD EPYC 7351P out-performs the Intel Xeon Gold 5120 by a wide margin here. Likewise, the AMD EPYC 7401P is a step up from almost every Intel Xeon Gold 613x series SKU. At the top end, you can see that the AMD EPYC 7551P is faster than a dual Intel Xeon Gold 5119T or a single Xeon Gold 6152 solution.

For those looking at upgrading from legacy platforms, the single socket AMD EPYC 7351P bests dual Intel Xeon E5-2670 CPUs, meaning one can trade legacy dual socket servers for a new single socket option.

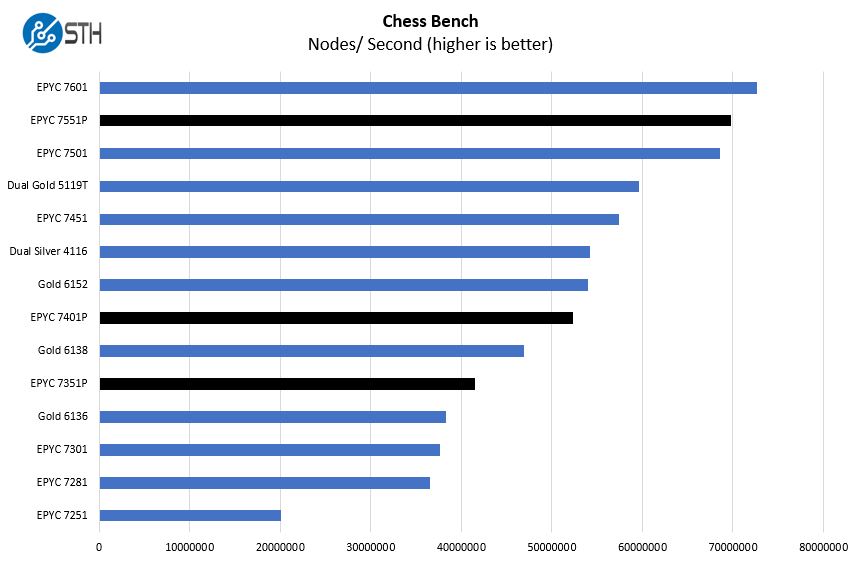

Chess Benchmarking

Chess is an interesting use case since it has almost unlimited complexity. Over the years, we have received a number of requests to bring back chess benchmarking. We have been profiling systems and are ready to start sharing results:

Again, using the P series parts will significantly lower your CPU costs over Intel Xeon Scalable single and dual socket solutions. On a per-core basis, Intel Xeon Scalable is leading here. For applications that utilize per-core licensing, Intel Xeon is still the better option due to higher frequency parts.
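To make the per-core comparison concrete, one can divide a total benchmark score by the core count. This is a minimal sketch of that normalization; the function name, chip labels, scores, and core counts are hypothetical placeholders chosen for round numbers, not results from our charts.

```python
def per_core_score(total_score: float, cores: int) -> float:
    """Normalize a multi-threaded benchmark score by the number of cores."""
    if cores <= 0:
        raise ValueError("cores must be positive")
    return total_score / cores


# Hypothetical scores and core counts for illustration only.
chips = {
    "chip A (32 cores)": (64000.0, 32),
    "chip B (22 cores)": (55000.0, 22),
}
for name, (score, cores) in chips.items():
    print(f"{name}: total {score:.0f}, per core {per_core_score(score, cores):.0f}")
```

In this made-up case, chip A wins on total throughput while chip B wins per core, which is the shape of trade-off that matters when software is licensed per core.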

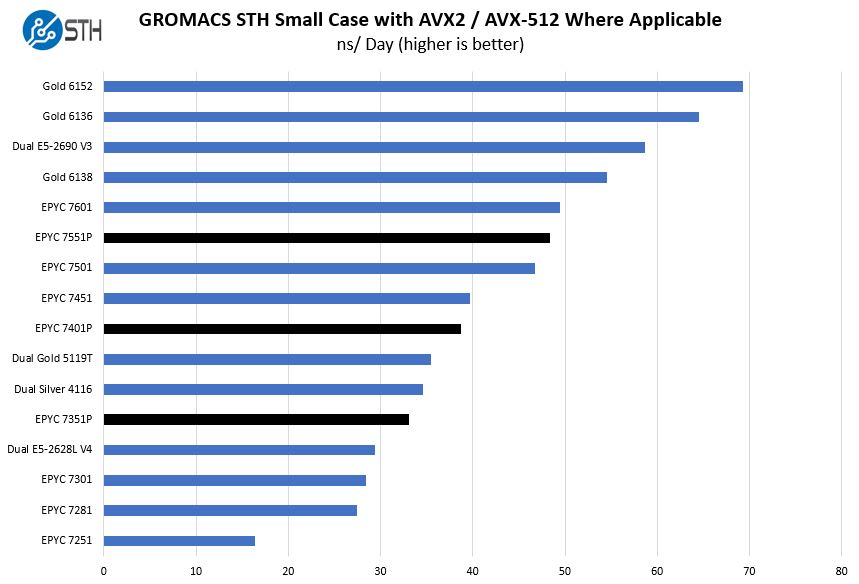

GROMACS STH Small AVX2/ AVX-512 Enabled

We have a small GROMACS molecule simulation we previewed in the first AMD EPYC 7601 Linux benchmarks piece. In Linux-Bench2 we are using a “small” test for single and dual socket capable machines. Our medium test is more appropriate for higher-end dual and quad socket machines. Our GROMACS test will use the AVX-512 and AVX2 extensions if available.

The AMD EPYC 7001 series CPUs support AVX2, but not the newest AVX-512 instruction set of Intel Xeon Scalable. In some workloads that have been optimized for AVX-512, that is a significant disadvantage. Intel does not give all of the Xeon parts dual port FMA AVX-512 so AMD’s AVX2 implementation effectively trounces the Intel Xeon Gold 5100, Silver, and Bronze CPUs in this test. Still, the Intel Xeon Gold 6100 or Xeon Platinum range is superior when AVX-512 can be used.
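If you want to check which of these instruction sets a given Linux host advertises, the `flags` line in `/proc/cpuinfo` lists `avx2` and `avx512f` when they are present. The sketch below parses that text; the function name and the sample strings are our own illustrations, and this is not how GROMACS itself selects its SIMD kernels.

```python
def simd_level(cpuinfo_text: str) -> str:
    """Report the widest of AVX-512 / AVX2 found in /proc/cpuinfo-style text."""
    flags = set()
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            flags.update(value.split())
    if "avx512f" in flags:  # AVX-512 Foundation
        return "AVX-512"
    if "avx2" in flags:
        return "AVX2"
    return "neither"


# Abbreviated, hypothetical flags lines for illustration only.
sample_avx2_only = "model name\t: example CPU\nflags\t\t: fpu sse2 avx avx2\n"
sample_avx512 = "flags\t\t: fpu sse2 avx avx2 avx512f avx512dq\n"
print(simd_level(sample_avx2_only))
print(simd_level(sample_avx512))
```

On a real system, pass in the contents of `/proc/cpuinfo` instead of the sample strings. Note that the flag only shows whether AVX-512 is supported, not whether the part has one or two FMA ports.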

Next, we are going to look at the Dell EMC PowerEdge R7415 storage, networking, and power consumption before giving our final thoughts on the server.

Patrick,

Does the unit support SAS3/NVMe in any drive slot/bay, or is the chassis preconfigured?

You can configure for all SAS/ SATA, all NVMe, or a mix. Check the configurator for the up to date options.

@BinkyTO

It depends on how you have the chassis configured. It sounds like they have the 24 drive with max 8 NVMe disk configuration. With that the NVMe drives need to be in the last 8 slots.

How come nobody else mentioned that hot swap feature in their reviews? Great review as always STH. This will help convince others at work that we can start trying EPYC.

The system tested has a 12 + 12 backplane. 12 drives are SAS/SATA and 12 are universal supporting either direct connect NVMe or SAS/SATA. Thanks Patrick and Serve the Home for this very thorough review… Dell EMC PowerEdge product management team

These reviews are gems on the Internet. They’re so comprehensive. Better than many of the paid reports we buy.

I take it the single 8GB DIMM and epyc 7251 config that’s on sale for $2.1k right now isn’t what you’d get.

You’ve raved about the 7551p but I’d say the 7401p is the best perf/$

Great review! Any chance of a cooling analysis (with thermal imaging)?

Impressive review

Koen – we do thermal imaging on some motherboard reviews. On the systems side, we have largely stopped thermal imaging since getting inside the system while it is racked in a data center is difficult. Also, removing the top to do imaging impacts airflow through the chassis which changes cooling.

The PowerEdge team generally has a good handle on how to cool servers and we did not see anything in our testing, including 100GbE NIC cooling, to suggest thermals were anything other than acceptable.

Great review.

Thanks for the reply Patrick. Understand the practical difficulties and appreciate you sharing your thoughts on the cooling. Fill it up with hot swap nvme 2.5″ + add-in cards and you got a serious amount of heat being generated!

Great Review! Many thanks, Patrick!