The Dell EMC PowerEdge C6525 is a “kilo-thread” class server. This 2U 4-node (2U4N) design uses AMD EPYC 7002 “Rome” (and likely future EPYC 7003 “Milan”) processors to pack up to 512 cores and up to 1024 threads into a single chassis. Even moving to a midrange AMD EPYC 7002 processor such as the EPYC 7452, one can still get 256 cores and 512 threads in a single chassis which is more than Intel Xeon currently offers. In our review, we are going to take a look at the C6525 and show what it has to offer.
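The headline figures follow from simple multiplication. The short Python sketch below reproduces them, assuming two sockets per node and two threads per core (SMT enabled), with 64 cores for a top-bin EPYC 7002 part and 32 cores for the EPYC 7452. The function name is ours, purely for illustration.

```python
def chassis_totals(cores_per_cpu, nodes=4, sockets_per_node=2, threads_per_core=2):
    """Return (cores, threads) for a fully populated 2U4N chassis.

    Defaults are assumptions: 4 nodes, 2 sockets per node, SMT enabled.
    """
    cores = nodes * sockets_per_node * cores_per_cpu
    return cores, cores * threads_per_core


print(chassis_totals(64))  # 64-core top-bin EPYC 7002 part
print(chassis_totals(32))  # 32-core EPYC 7452
```

The first call gives the 512 core / 1024 thread figure, and the second gives the 256 core / 512 thread midrange configuration.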

Dell EMC PowerEdge C6525 2U4N Server Overview

Since this is a more complex system, we are first going to look at the system chassis. We are then going to focus our discussion on the design of the four nodes. We also have a video version of this review for those who prefer to listen along. Our advice is to open it in another YouTube tab and listen along while you go through the review.

Our full review text is getting close to 5000 words as we add this video, so the written review has more detail. Still, we know some prefer to consume content in different ways, so we are adding that option.

Dell EMC PowerEdge C6525 Chassis Overview

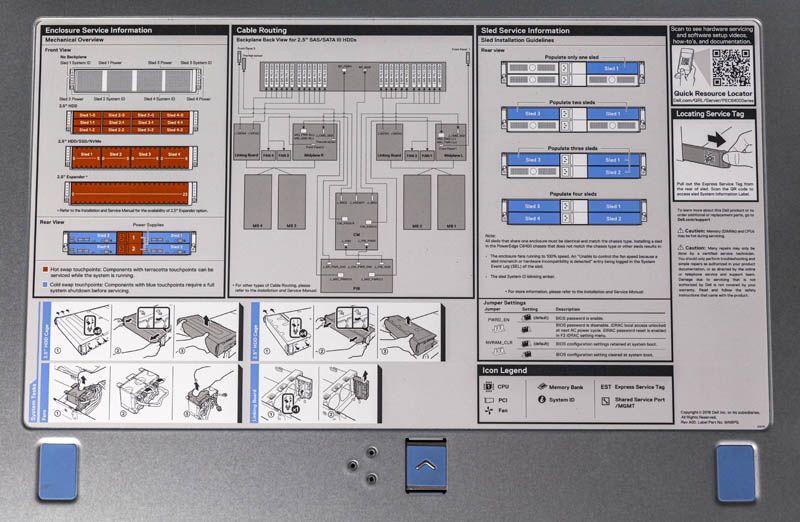

The Dell EMC PowerEdge C6525 is a 2U 4-node system. Fitting four nodes in a single 2U enclosure effectively doubles rack compute density over four 1U servers. On the front of the chassis, one gets one of three options: 12x 3.5″ drives, 24x 2.5″ drives, or a diskless configuration. Since 2.5″ storage is popular in these platforms, that is the configuration we are testing. The 2.5″ version is offered with either 24x SATA/SAS bays or 16x SATA/SAS plus 8x NVMe bays.

There are two other features we wanted to point out on the front of the chassis. First, each node gets power and reset buttons along with LED indicators, all mounted on the rack ears. This is fairly common on modern 2U4N servers to increase density. Second, while many vendors use uneven drive spacing in the middle of their chassis, the PowerEdge C6525 has extra vents located outside the drive array. In dense systems like this, extra airflow is needed and that is often provided by these vents.

Moving to the rear of the chassis, we see the four nodes. Dell denotes the model number on a blue handle. If you had both C6420 and C6525 models in your data center, this would give an easy indicator of what you are looking at. Both systems share the Dell C6400 chassis, and that is why we see “C6400” on the right ear of the C6525. Even though it is the same chassis, we asked, and Dell told us you cannot just mix and match. Instead, the chassis needs a firmware swap to have the right firmware for Intel or AMD. Thinking further into system lifecycles, it would have been great if this was not the case, since operating racks full of C6400 chassis would then be as simple as plugging nodes in wherever there is an available slot. It would also allow for greener operations since the C6400 would be agnostic to Intel or AMD. Some other vendors have this capability for 2U4N chassis.

In the rear of the chassis, we have power supplies in the middle with two nodes on either side. These are redundant hot-swap 80Plus Platinum power supplies. Our test unit uses 2400W power supplies, but 2000W and 1600W options are also available. With AMD EPYC, 1600W is going to push it for usefulness in a full 8x CPU configuration since AMD CPUs tend to run at higher TDPs. We also wish that Dell had started using 80Plus Titanium units in these designs. Other vendors have those options, and adding a small amount of power efficiency would help lower operating costs and be a bit more environmentally friendly.
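To see why 1600W gets tight, a rough CPU-only budget helps. The sketch below is a back-of-the-envelope estimate, not a Dell sizing tool. It uses AMD's published TDPs (225W for the 64-core EPYC 7742, 155W for the EPYC 7452) and assumes that, with redundant supplies, a single PSU must be able to carry the whole chassis. Memory, drives, NICs, and fans are not counted, and the function name is hypothetical.

```python
def cpu_power_budget(tdp_watts, psu_watts, cpus=8):
    """Return (total CPU TDP, watts left on one PSU for everything else).

    Assumption: one PSU must carry the full chassis load when redundant.
    """
    total = tdp_watts * cpus
    return total, psu_watts - total


for name, tdp in [("EPYC 7742", 225), ("EPYC 7452", 155)]:
    for psu in (1600, 2000, 2400):
        total, headroom = cpu_power_budget(tdp, psu)
        print(f"{name}: {total}W of CPU TDP on a {psu}W PSU leaves {headroom}W")
```

Under these assumptions, eight 225W parts add up to more CPU TDP than a single 1600W supply can deliver, before anything else in the chassis is counted.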

Dell has a service cover that runs atop the chassis. This provides useful information for a field service tech regarding chassis-level integration.

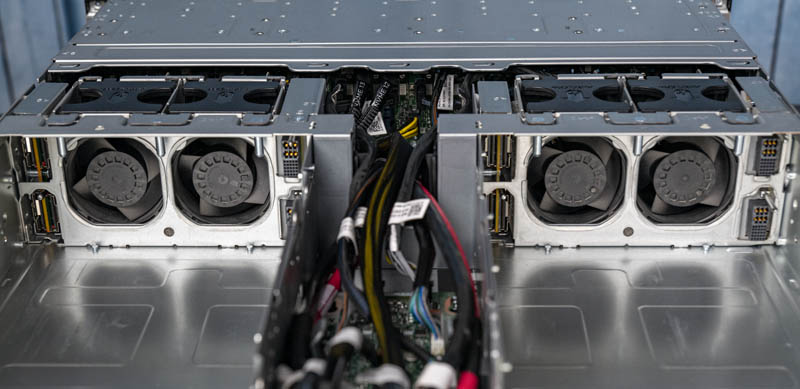

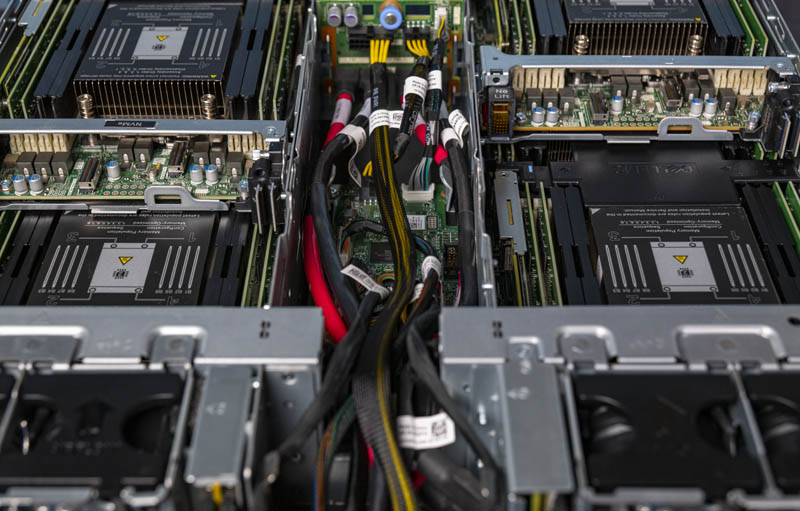

Inside, one can see four pairs of 80mm fans. We will see how these perform in our efficiency testing. There are effectively two ways that 2U4N systems are designed these days. One option is an array of 80mm fans, such as what the C6525 is using. The other common design for 2U4N systems is to put smaller 40mm fans on each node. Typically, arrays of larger fans like the C6525 uses translate to lower power consumption. Some organizations prefer to have the entire node assembly, including fans, be removable for easier service. Given the reliability of fans these days, for STH’s own use we prefer the midplane 80mm fan design like the C6525 has, and we will show why it helps lower overall system power consumption later in this review.

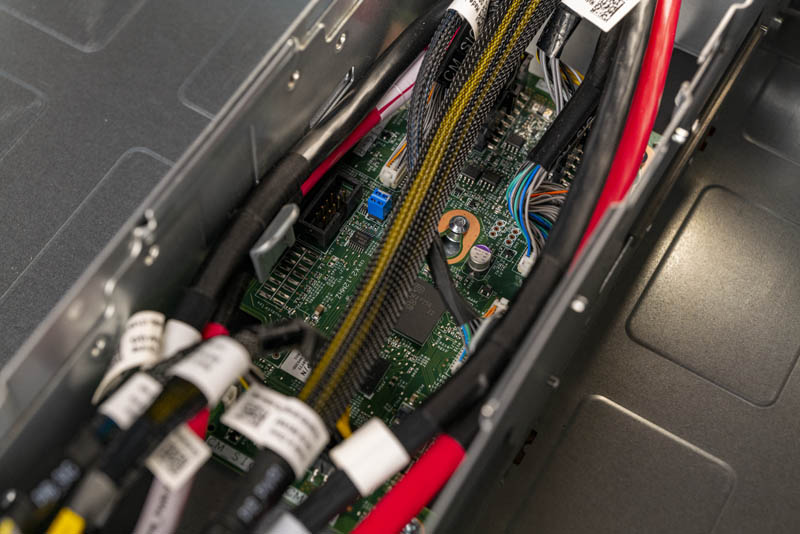

Inside the chassis, there is a center channel with power cables and other chassis-level features. One item we will note here is that the PowerEdge C6525 does not have an iDRAC BMC in the chassis (iDRAC is found on the nodes.) We have commonly seen this design over the past few years, dating back to the Dell PowerEdge C6100 XS23-TY3 Cloud Server. Many 2U4N systems that are more influenced by hyper-scaler requirements and that use industry-standard BMCs (e.g. the AST2500 series these days) tend to add a BMC here. We have even seen examples where there is a dedicated management NIC and port for the chassis.

The channel design keeps all cabling out of nodes. Dell has large walls on either side of this channel which ensures these cables are not inadvertently snagged by nodes. The entire section is designed to be cooled via power supplies leaving the 80mm fan complex to cool the nodes.

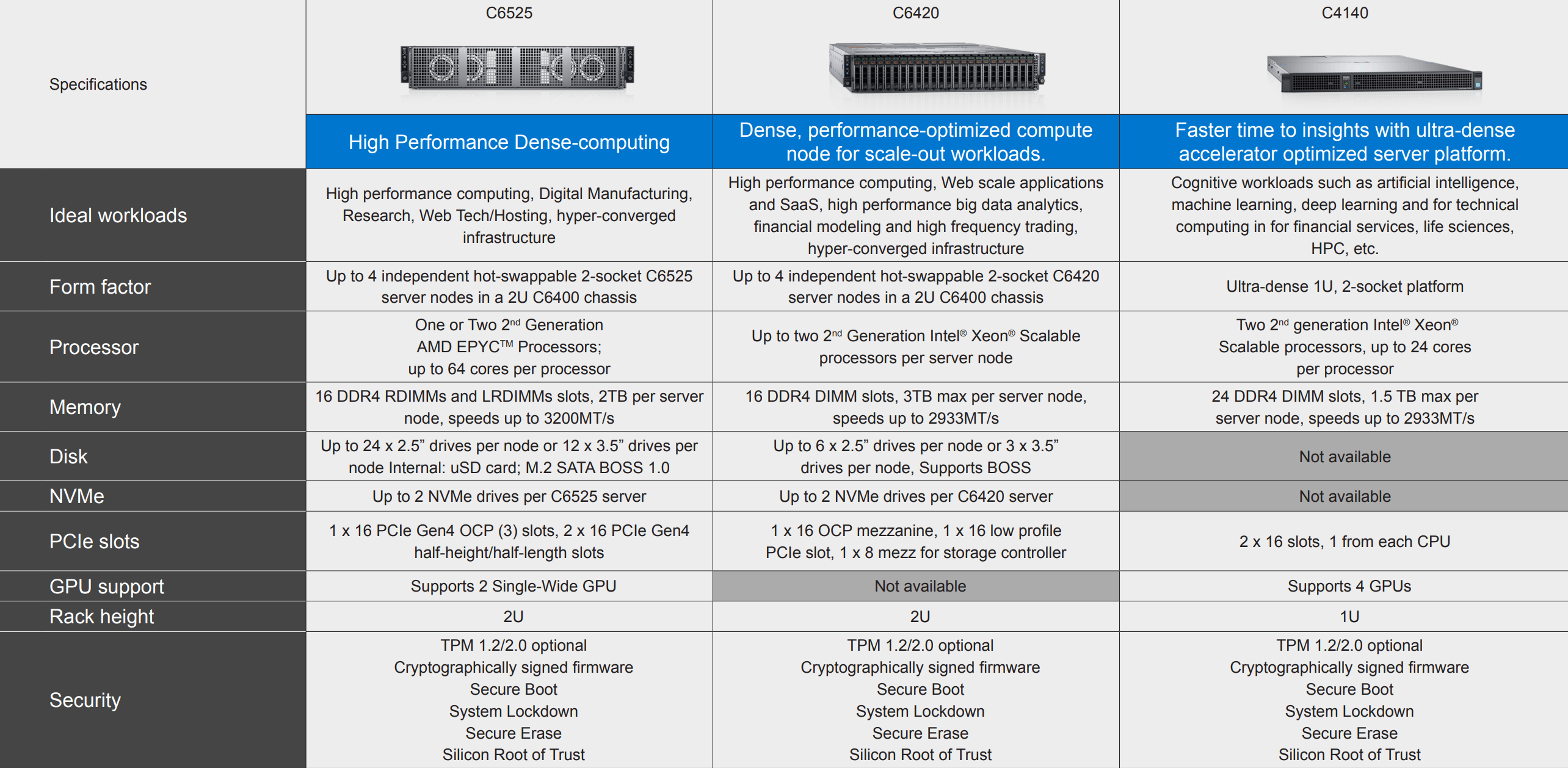

Before we move on to the nodes, we just wanted to point to the differences in the systems you can put in this chassis. Here are the C6525 and C6420 side-by-side, with the C4140 there as well as a different class of system.

Now that we have looked at the exterior, it is time to look at what each node in the C6525 offers.

“We did not get to test higher-end CPUs since Dell’s iDRAC can pop field-programmable fuses in AMD EPYC CPUs that locks them to Dell systems. Normally we would test with different CPUs, but we cannot do that with our lab CPUs given Dell’s firmware behavior.”

I am astonished by just how much of a gargantuan dick move this is from Dell.

Could you elaborate here or in a future article how blowing some OTP fuses in the EPYC CPU so it will only work on Dell motherboards improves security. As far as I can tell, anyone stealing the CPUs out of a server simply has to steal some Dell motherboards to plug them into as well. Maybe there will also be third party motherboards not for sale in the US that take these CPUs.

I’m just curious to know how this improves security.

This is an UNREAL review. Compared to the principle tech junk Dell pushes all over. I’m loving the amount of depth on competitive and even just the use. That’s insane.

Cool system too!

“Dell’s iDRAC can pop field-programmable fuses in AMD EPYC CPUs that locks them to Dell systems”?? i’m not quickly finding any information on that? please do point to that or even better do an article on that, sounds horrible.

Ya’ll are crazy on the depth of these reviews.

Patrick,

It won’t be long before the lab could be augmented with those Dell bound AMD EPYC Rome CPUs that come at a bargain eh? ;)

I’m digging this system. I’ll also agree with the earlier commenters that STH is on another level of depth and insights. Praise Jesus that Dell still does this kind of marketing. Every time my Dell rep sends me a principled tech paper I delete and look if STH has done a system yet. It’s good you guys are great at this because you’re the only ones doing this.

You’ve got great insights in this review.

To whom it may concern, Dell’s explanation:

Layer 1: AMD EPYC-based System Security for Processor, Memory and VMs on PowerEdge

The first generation of the AMD EPYC processors have the AMD Secure Processor – an independent processor core integrated in the CPU package alongside the main CPU cores. On system power-on or reset, the AMD Secure Processor executes its firmware while the main CPU cores are held in reset. One of the AMD Secure Processor’s tasks is to provide a secure hardware root-of-trust by authenticating the initial PowerEdge BIOS firmware. If the initial PowerEdge BIOS is corrupted or compromised, the AMD Secure Processor will halt the system and prevent OS boot. If no corruption, the AMD Secure Processor starts the main CPU cores, and initial BIOS execution begins.

The very first time a CPU is powered on (typically in the Dell EMC factory) the AMD Secure Processor permanently stores a unique Dell EMC ID inside the CPU. This is also the case when a new off-the-shelf CPU is installed in a Dell EMC server. The unique Dell EMC ID inside the CPU binds the CPU to the Dell EMC server. Consequently, the AMD Secure Processor may not allow a PowerEdge server to boot if a CPU is transferred from a non-Dell EMC server (and a CPU transferred from a Dell EMC server to a non-Dell EMC server may not boot).

Source: “Defense in-depth: Comprehensive Security on PowerEdge AMD EPYC Generation 2 (Rome) Servers” – security_poweredge_amd_epyc_gen2.pdf

PS: I don’t work for Dell, and also don’t purchase their hardware – they have some great features, and some unwanted gotchas from time to time.

What would be nice is some pricing on this

Wish the 1gb Management nic would just go away. There is no need to have this per blade. It would be simple for dell to route the connections to a dumb unmanaged switch chip on that center compartment and then run a single port for the chassis. Wiring up lots of cables to each blade is messy. Better yet, place 2 ports allowing daisy chaining every chassis in a rack and eliminate the management switch entirely.

Holy mother of deer… Dell has once again pushed vendor lock and DRM way too far! Unbelievable!

It’s part of AMD’s Secure Processor, and it allows the CPU to verify that the BIOS isn’t modified or corrupted, and if it is, it refuses to POST. It’s not exactly an efuse and more of a cryptographic signing thing going on where the Secure Processor validates that the computer is running a trusted BIOS image. The iDRAC 9 can even validate the BIOS image while the system is running. The iDRAC can also reflash the BIOS back to a non-corrupt, trusted image. On the first boot of an Epyc processor in a Dell EMC system, it gets a key to verify with; this is what can stop the processor from working in other systems as well.

Honestly, there is no reason Dell can’t have it both ways with iDrac. iDrac is mostly software, and could be virtualized with each VM having access to one set of the hardware interfaces. This would cut their costs by three, roughly, while giving them their current management solution. After all, how often do you access all four at once?

What is the price for this PowerEdge C6525 server?