At GTC 2022, NVIDIA had some nice renderings of the NVIDIA H100. A few weeks ago I was able to hold one, and I just got the call that I can now share the photos. For those who prefer live shots to renderings, here is our first look at the NVIDIA H100 Hopper GPU that will be a big deal in the market starting later this year.

Checking out the NVIDIA H100 at NVIDIA HQ

Contrary to some rumors, I was not in Santa Clara, California in early April checking out NVIDIA’s newest BBQ gear.

Saw this smokin' hot @NVIDIA rig yesterday.

Between NVIDIA Voyager and Endeavor there were two Santa Maria style pits. They looked and smelled great! Check the branding! pic.twitter.com/eqJxzhNw1E

— Patrick J Kennedy (@Patrick1Kennedy) April 8, 2022

Instead, as you may have seen in our NVIDIA Cedar Fever 1.6Tbps Modules Used in the DGX H100 piece, I was checking out some new hardware. One of those items was this, covered by the Random Floating Black Box (RFBB) at NVIDIA:

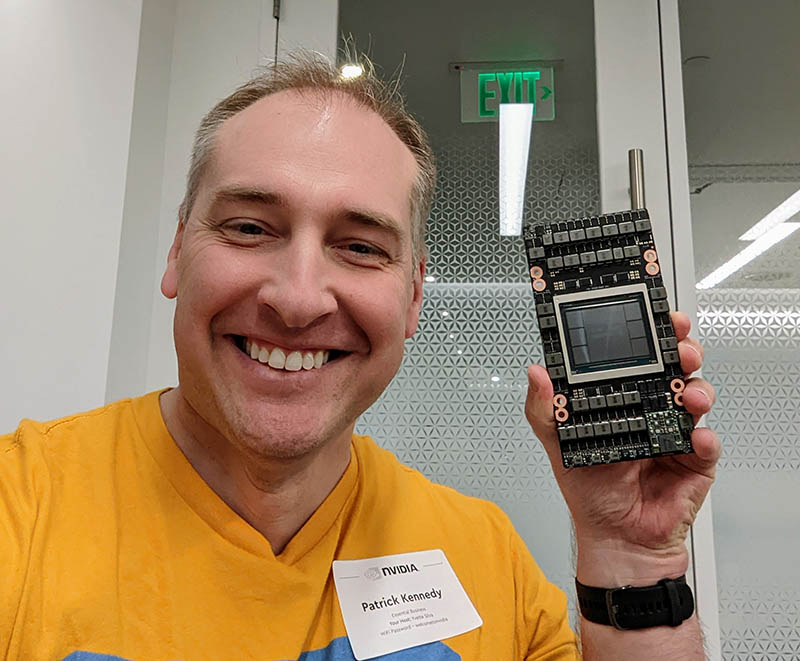

As of today, the RFBB can be lifted, and this was actually me posing with an NVIDIA H100.

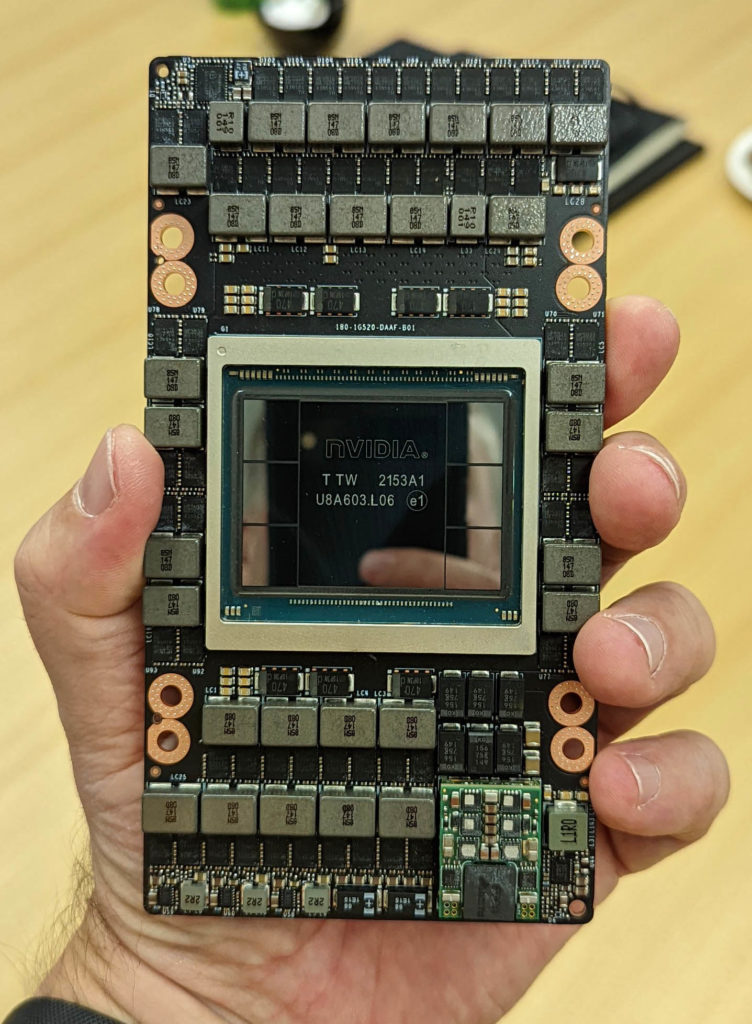

Here is the front side of the NVIDIA H100. You can see the SXM packaging is getting fairly packed at this point. There is a lot more here than we saw on the V100 generation.

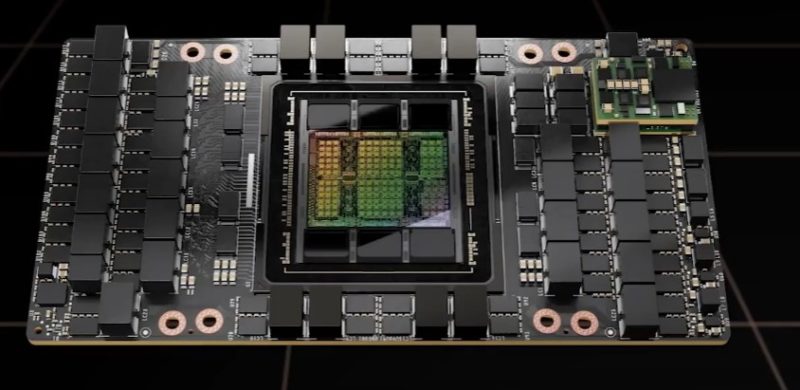

The GPU itself is the center die with a CoWoS design and six packages around it. We were told during GTC 2022 that five of those packages are active as HBM and one is there for yield/structural support. Just for some comparison, here is a V100 from the SC show floor:

While I was there, I was allowed to hold the second H100 package, one that had failed and was not mounted on the SXM PCB. I was just not allowed to take photos of that, even with an RFBB. It was marked as #2. The module itself looks fairly close to the rendering we saw at GTC 2022.

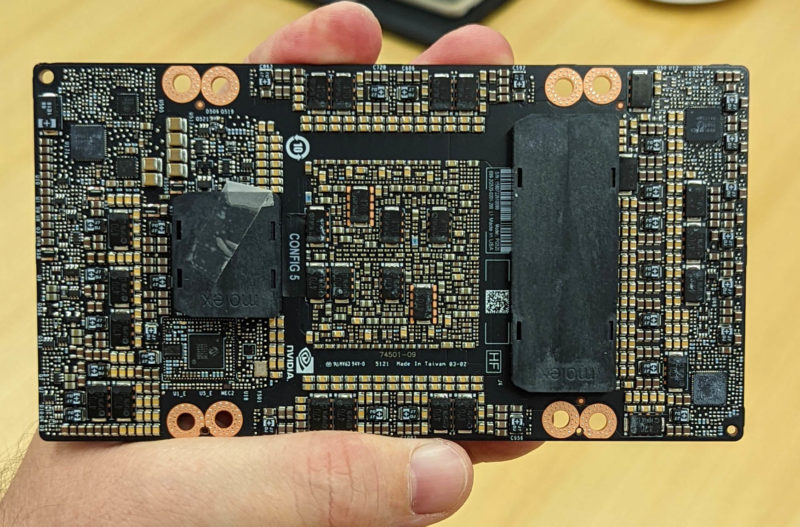

Here is the back of the module:

Something that is very different in this generation is the connectors. Since I was not really supposed to take pictures of these (I used my phone, not the Canon R5/R5 C I had with me), I also did not get to take the Molex covers off. In previous generations, like the V100, we would have two long connectors on each side of the GPU. So this is certainly a new connector layout.

Final Words

Since I just got the call that we could publish the photos that I was probably not supposed to take in the first place, I just wanted to get these out there. The H100 is real. What NVIDIA needs is for the next generation of AMD EPYC and Intel Xeon CPUs to come out with PCIe Gen5 support so that it has a platform for its next-gen PCIe Gen5 GPUs, BlueField-3 DPUs, ConnectX-7, and more. We confirmed in the Cedar Fever article that NVIDIA has motherboard designs for the DGX H100 for both Sapphire Rapids and Genoa, but one will be picked closer to launch (if it has not been in the past month).

These photos actually illustrate one of the big drivers behind NVIDIA Grace. NVIDIA has the H100 today, but it does not have x86 PCIe Gen5 platforms to sell to customers, and it will not be using Ampere or another Arm solution in this generation. NVIDIA having its own CPU will allow the company to put out new accelerator generations without having to wait for delayed x86 CPUs in the future.

“We’re gonna’ need a bigger power cord” (the data center version of Jaws).

You weren’t there a full month ago. Your grill tweet was 8 April. It’s only now 5 May. That’s only 27 days, not a month.

You look so happy holding that card! Great photo!

The problem with SXM is that the baseboard is not sold separately starting with the A100 generation, along with the 54V input issue for >350W. Gigabyte G262-ZR0, Supermicro 2024GQ-NART, and Dell XE8545 all price their barebones at $60K or so (that’s because Nvidia charged 40K for the GPU bundled with the redstone board). So literally you are paying a 20K tax on top of the 40K forced bundle by Nvidia. Even SXM2 servers right now are priced at $1.5K (4GPU 2nd hand Dell C4140) and $3-5K for 2nd hand Gigabyte 8GPU barebones, which are deprecated by 4x PCIe ampere cards.

Noticed that Nvidia separated the power plane and NVLINK plane with the SXM5. How about Nvidia starts selling the 4x SXM4/SXM5 redstone board, 8x Delta board, or even better 1/2 SXM4/5 GPU boards separately without GPUs so that server vendors can start selling the barebones below 10K?

Thanks for sharing Patrick!

Interesting piece of silicon – all covered in caps and VRMs to satisfy that 700W appetite. Looks like it’s either those damn 25K RPM Delta fans or leaky water cooling for DCs + the same challenges for a desktop Hopper.