For those that do not know, I was at NVIDIA HQ last week, checking out NVIDIA-themed grills, the new Voyager building, and more. That visit is all going into a video/article that Alex is currently putting together. One insight that I thought needed its own piece was around NVIDIA Cedar. NVIDIA did not use the name during the GTC 2022 keynote, but this is a product OEMs can purchase from NVIDIA, and it is very interesting.

NVIDIA Cedar Fever 1.6Tbps Modules Used in the DGX H100

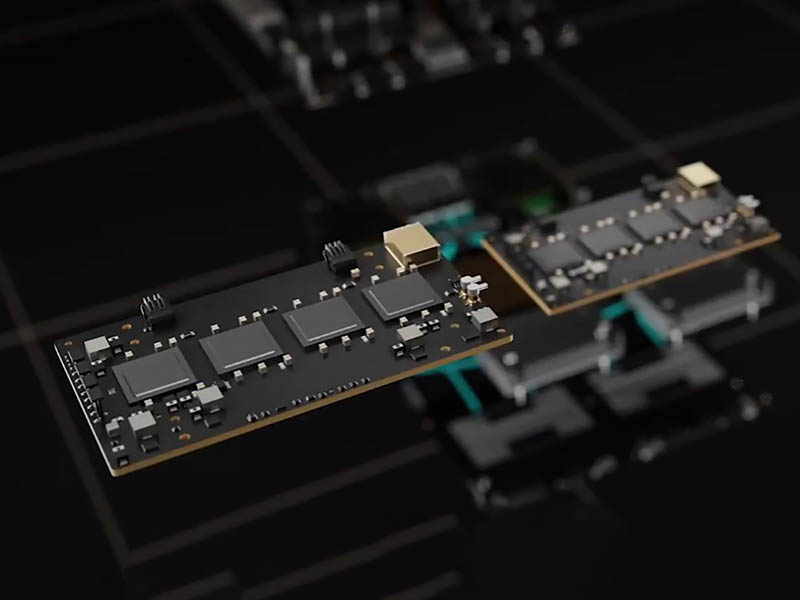

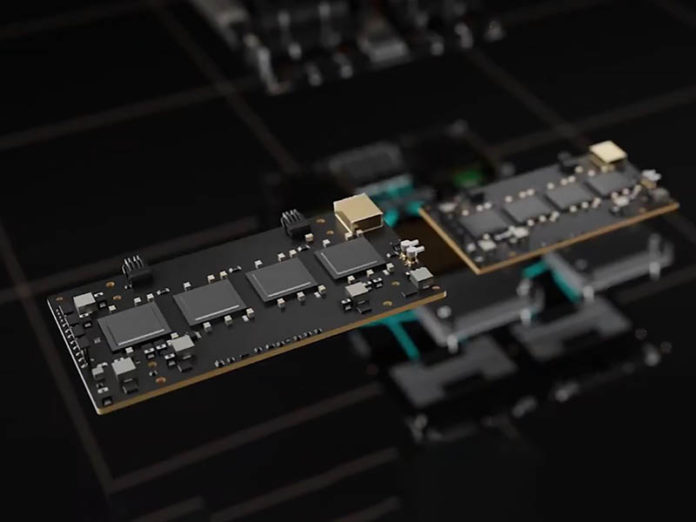

One of the big changes in the A100 to H100 series is the switch to PCIe Gen5. With PCIe Gen5, there is enough bandwidth to transition from 200Gbps networking to 400Gbps networking. With the NVIDIA DGX H100, the company is taking a different approach with its networking. Specifically, it is ditching its traditional PCIe cards in favor of modules called “Cedar”.
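To put rough numbers on the PCIe Gen5 point, here is a minimal sketch comparing link bandwidth to NIC speed. It assumes an x16 link per controller, which the article does not state, and it only accounts for 128b/130b line encoding, not packet-level protocol overhead. The helper name `pcie_gbps` is made up for this illustration.

```python
# Sketch: approximate one-direction PCIe link bandwidth.
# Assumption (not from the article): each NIC sits on an x16 link.
# Only 128b/130b line encoding is modeled; real throughput is a bit lower.

GT_PER_LANE = {"gen4": 16.0, "gen5": 32.0}  # giga-transfers per second, per lane
ENCODING = 128 / 130  # 128b/130b line encoding used by PCIe Gen3 through Gen5


def pcie_gbps(gen: str, lanes: int = 16) -> float:
    """Return approximate one-direction link bandwidth in Gbps."""
    return GT_PER_LANE[gen] * lanes * ENCODING


for gen, nic_gbps in (("gen4", 200), ("gen5", 400)):
    link = pcie_gbps(gen)
    print(f"{gen} x16: ~{link:.0f} Gbps link vs {nic_gbps} Gbps NIC, fits: {link >= nic_gbps}")

# A 400Gbps NIC would not fit on a Gen4 x16 link under the same assumptions.
print("400 Gbps on gen4 x16 fits:", pcie_gbps("gen4") >= 400)
```

Under these assumptions a Gen4 x16 link lands around 252Gbps and a Gen5 x16 link around 504Gbps, which is why 400Gbps networking lines up with the move to Gen5.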

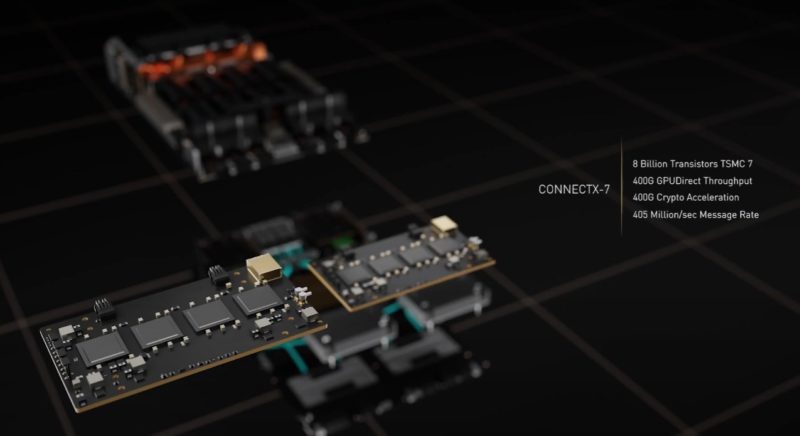

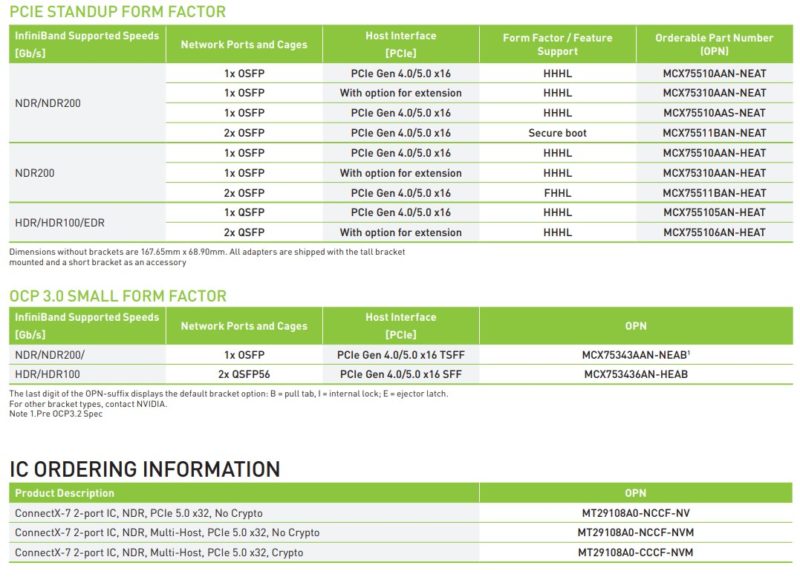

Each Cedar module has four ConnectX-7 controllers onboard, each providing 400Gbps of network bandwidth, or 1.6Tbps per module. There are two modules in a DGX H100, so 2x Cedar modules x 4x ConnectX-7 controllers per module x 400Gbps each = 3.2Tbps of fabric bandwidth. We did not find them on the ordering sheet, but the SKU sheet gives some sense of just how much bandwidth is required to run these controllers.
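The fabric bandwidth arithmetic can be checked with a quick sketch. The counts and speeds come from the figures in this article. The constant names are just labels chosen for this example.

```python
# Sketch: Cedar fabric bandwidth in a DGX H100, using the article's figures.
MODULES_PER_SYSTEM = 2        # Cedar modules in a DGX H100
CONTROLLERS_PER_MODULE = 4    # ConnectX-7 controllers on each Cedar module
GBPS_PER_CONTROLLER = 400     # network bandwidth per ConnectX-7

per_module_gbps = CONTROLLERS_PER_MODULE * GBPS_PER_CONTROLLER
system_gbps = MODULES_PER_SYSTEM * per_module_gbps

print(f"Per Cedar module: {per_module_gbps / 1000:.1f} Tbps")  # 1.6 Tbps
print(f"Per DGX H100:     {system_gbps / 1000:.1f} Tbps")      # 3.2 Tbps
```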

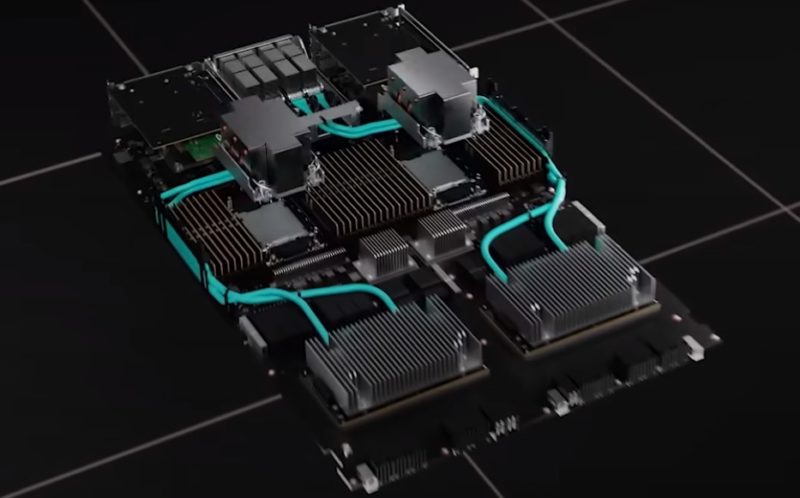

In the DGX H100, these Cedar modules have heatsinks designed specifically to cool the components while allowing airflow to the rest of the CPU and memory tray. The modules are then linked to the rear cages via flyover cabling that wraps around the CPUs and memory. In the rear, the DGX H100 can use direct attach copper cables (DACs), active optical cables, or standard optical modules.

A quick note about the rendering. While this may look like Sapphire Rapids to many, I was told that NVIDIA has different motherboard designs for the DGX H100, and that the CPU has not been decided. I was also told that the CPU would be x86, so either Intel Sapphire Rapids or AMD Genoa, not Arm via Ampere’s next-generation parts or NVIDIA Grace. Grace will be too late for this platform.

If you are wondering why not use BlueField-3 for the DGX H100, NVIDIA has that covered as well. In addition to the two Cedar modules with a combined 8x ConnectX-7 400Gbps controllers, there are two PCIe BlueField-3 controllers. Those two BlueField-3 controllers are used for tasks such as getting to storage and the user plane, leaving the Cedar modules focused on the compute plane.

Final Words

I asked NVIDIA why it did not just use standard PCIe or OCP form factor modules for the DGX H100. The choice of Cedar modules is largely due to space efficiency, since they are much more compact than adding 8x PCIe ConnectX-7 cards to the system. It also helps airflow in the DGX H100.

While the Cedar module may sound completely exotic, NVIDIA told me that it is “broadly available” from the company’s networking team for any vendor to use in systems. Newer and next-generation AI models require much larger scale, so having large amounts of bandwidth to the compute plane of AI systems is more important. That is why there are two Cedar modules in the system, together providing 3.2Tbps.

For those wondering, I did not get to hold a Cedar module, but I may or may not have held two H100s, including one that may or may not have been labeled the second to fail. There also may or may not be an H100 + Patrick selfie on my phone that I would be forbidden to post if it did exist. Instead, I can confirm that NVIDIA’s newest building has two slick NVIDIA-branded grills:

Saw this smokin' hot @NVIDIA rig yesterday.

Between NVIDIA Voyager and Endeavor there were two Santa Maria style pits. They looked and smelled great! Check the branding! pic.twitter.com/eqJxzhNw1E

— Patrick J Kennedy (@Patrick1Kennedy) April 8, 2022

The DGX H100 is real, but it seems like NVIDIA needs the rest of the ecosystem to catch up a bit before we see systems shipping. We expect a lot of really cool tech inside.

That’s good info right there. It’s also telling me it’ll be several months until we’ll see a DGX H100