Re-Think Your Node Strategy: 1P Versus 2P

Now let us address one of the most persistent myths in server architecture: the idea that dual-socket servers are primarily about redundancy. This misunderstanding leads many organizations to over-provision hardware they do not actually need. We have gone over this many times, yet it is a myth we still hear.

Let me be clear: Two processors in a server are not for redundancy. They are there to scale up the node to provide more cores, more memory bandwidth, and in some cases, more memory capacity than a single processor can support. Understanding this distinction is the key to making smarter purchasing decisions in 2026.

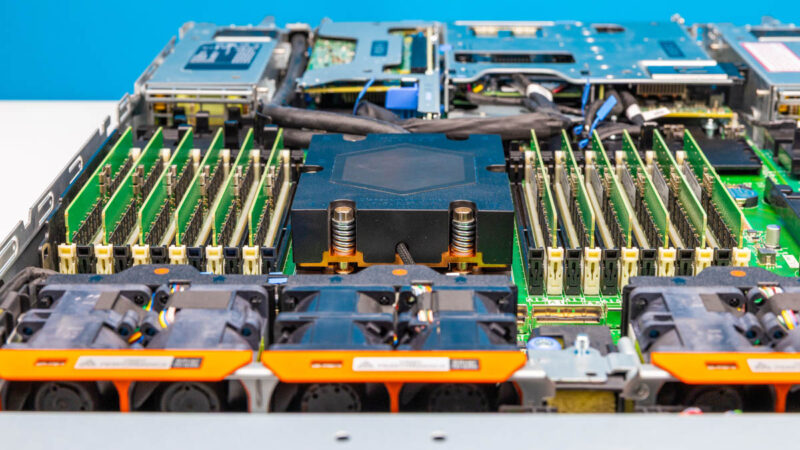

The benefits of a dual-socket (2P) system are real but specific. You get access to more cores and significantly more memory bandwidth because you are running multiple memory controllers in parallel. Some systems allow you to add more total memory than a single-socket configuration can support physically. On the cost side, you benefit from shared network interfaces, shared power supplies, a shared motherboard, and shared chassis components. If you are operating at scale in a data center, you can achieve additional savings by needing fewer nodes, which translates into fewer PDU ports, fewer management ports, and simplified cabling.

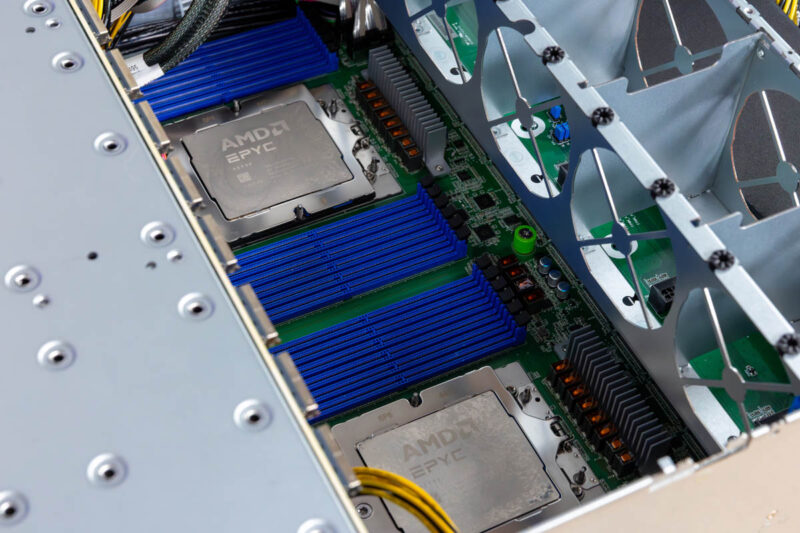

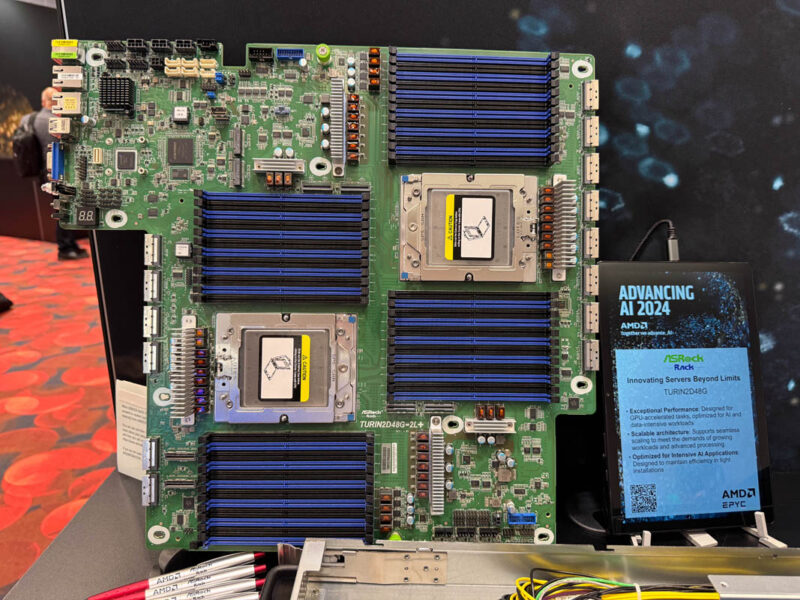

However, the benefits of a single-socket (1P) system are often overlooked and increasingly compelling in today’s market. If your workload does not require the absolute maximum core count, you can now get substantial computing power in a single socket. AMD’s Turin Dense EPYC 9005 processors, for example, offer up to 192 cores and 384 threads in a single-socket configuration. That is an enormous amount of compute.

Here is what surprises many people: you can frequently get the same amount of memory in a single-socket server as you would in a dual-socket configuration. With a 12-channel CPU, populating two DIMMs per channel means 24 DIMMs per CPU and 48 in a dual-socket server, and it is not physically possible to fit that many slots without doing some unusual things to the motherboard. For mainstream servers, the practical limit tends to be around 24 DIMMs, regardless of whether you have one or two CPUs. Many organizations also do not need the theoretical maximum memory capacity that dual-socket configurations provide.
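The slot arithmetic behind this can be sketched in a few lines of Python. The channel counts and the 24-slot limit come from the paragraph above; the 64 GB DIMM size, the function names, and the specific one-versus-two DIMMs-per-channel layouts are assumptions made for illustration.

```python
def dimm_slots(sockets: int, channels_per_cpu: int, dimms_per_channel: int) -> int:
    """Total DIMM slots on a board with the given layout."""
    return sockets * channels_per_cpu * dimms_per_channel


def capacity_gb(slots: int, dimm_size_gb: int) -> int:
    """Total memory if every slot holds a DIMM of the same size."""
    return slots * dimm_size_gb


DIMM_SIZE_GB = 64  # hypothetical DIMM size, for illustration only

# Assumed 1P layout: one 12-channel CPU with two DIMMs per channel.
one_socket = dimm_slots(sockets=1, channels_per_cpu=12, dimms_per_channel=2)
# Assumed mainstream 2P layout: two 12-channel CPUs with one DIMM per channel.
two_socket = dimm_slots(sockets=2, channels_per_cpu=12, dimms_per_channel=1)
# Fully populated 2P layout: the 48-slot case that rarely fits on a board.
two_socket_full = dimm_slots(sockets=2, channels_per_cpu=12, dimms_per_channel=2)

print(one_socket, two_socket, two_socket_full)  # 24 24 48
print(capacity_gb(one_socket, DIMM_SIZE_GB), capacity_gb(two_socket, DIMM_SIZE_GB))  # 1536 1536
```

Under these assumptions both 24-slot layouts hold the same capacity. The 2P layout spreads that capacity across twice as many memory channels, which is the bandwidth advantage of dual-socket systems described earlier.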

Single-socket servers also offer reduced complexity, resulting in fewer failure points and simpler maintenance. While this benefit exists, there is a more practical consideration in modern environments: today’s networking is so fast that driving traffic over CPU-to-CPU links can introduce congestion. Modern AI servers, for example, often use one NIC per CPU specifically to avoid this bottleneck.

At lower core counts, it is relatively easy to get the equivalent number of cores in a single-socket server simply by choosing a higher-core-count CPU. Instead of two 32-core processors, you can deploy a single 64-core CPU and achieve similar performance. Since you are running just one processor, you also benefit from lower power consumption, and typically, this approach costs less than adding a second CPU. Motherboards are less expensive, and you actually get more PCIe lanes per CPU in modern single-socket configurations compared to their dual-socket counterparts.

If you are migrating from older systems, it is worth noting that virtualization has never been easier. Open-source solutions like KVM, Ubuntu, and Proxmox VE make it straightforward to consolidate workloads. KVM underpins much of today’s cloud infrastructure, so using it does not mean settling for second-rate virtualization. It is actually what major public clouds use internally.

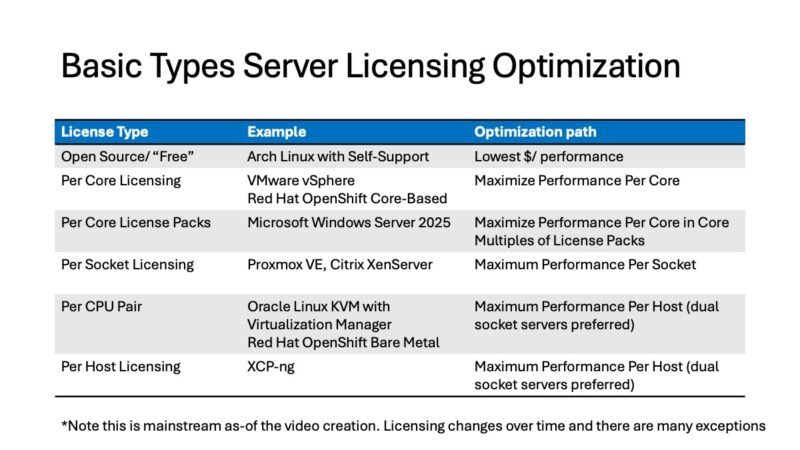

Do the Math on Licensing

This is another area where the conventional wisdom can cost you significant money, and it is worth revisiting even if we discussed it last year. The key insight is that newer CPUs deliver so much more performance per core that the economics of software licensing can completely change your purchasing calculus.

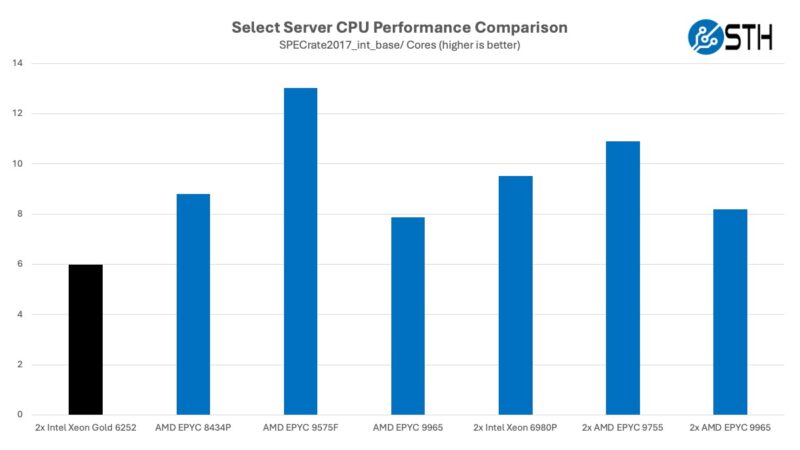

Consider a concrete example: the AMD EPYC 9575F, a 64-core processor. It is more than twice as fast as the most common Supermicro server SKU from 2020-2021, the Intel Xeon Gold 6252. That means a single 64-core socket today delivers more performance than approximately 136 cores across three dual-socket servers that would be due for replacement.
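One way to sanity-check a figure like this is to convert new cores into old-core equivalents. The sketch below assumes the "more than twice as fast" comparison is a per-core ratio, which the text does not state explicitly. The 2.125 value is not a benchmark result; it is simply the ratio at which 64 new cores map to the 136 cores quoted above.

```python
def equivalent_old_cores(new_cores: int, per_core_speedup: float) -> float:
    """Old-generation cores needed to match new_cores at a given per-core ratio."""
    return new_cores * per_core_speedup


# Assumption: back-solved from the article's figures (136 / 64), not measured.
ASSUMED_PER_CORE_SPEEDUP = 2.125

print(equivalent_old_cores(64, ASSUMED_PER_CORE_SPEEDUP))  # 136.0
```

Swapping in a measured ratio for your own workload is the more useful exercise, since per-core gains vary widely between applications.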

If your organization pays per-core licensing for virtualization or other software, this is transformative. Instead of needing licenses for multiple servers with lower core counts, you can consolidate to a single server with high-core-count processors and dramatically reduce your licensing costs. These savings often pay for the hardware itself very quickly.
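A rough way to put numbers on this is to count licensed cores before and after consolidation. Everything specific in the sketch below is hypothetical: the `Fleet` class, the price per core, and the fleet sizes are illustrative only, and the comparison assumes the new server can actually carry the old workload.

```python
from dataclasses import dataclass


@dataclass
class Fleet:
    servers: int
    sockets_per_server: int
    cores_per_socket: int

    @property
    def licensed_cores(self) -> int:
        """Cores that need a license under a simple per-core model."""
        return self.servers * self.sockets_per_server * self.cores_per_socket


def annual_license_cost(fleet: Fleet, price_per_core: float) -> float:
    """Yearly cost when every physical core needs one license."""
    return fleet.licensed_cores * price_per_core


PRICE_PER_CORE = 100.0  # hypothetical price per core per year

# Hypothetical old fleet: three dual-socket servers with 16-core CPUs.
old = Fleet(servers=3, sockets_per_server=2, cores_per_socket=16)
# Hypothetical replacement: one single-socket server with a 64-core CPU.
new = Fleet(servers=1, sockets_per_server=1, cores_per_socket=64)

savings = annual_license_cost(old, PRICE_PER_CORE) - annual_license_cost(new, PRICE_PER_CORE)
print(old.licensed_cores, new.licensed_cores)  # 96 64
print(savings)  # 3200.0
```

Real license agreements often add terms this simple model ignores, so treat the output as a starting point for a conversation with your vendor rather than a quote.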

For software licensed on a per-socket or per-node basis, the math is even more compelling. You can go relatively wild with CPU core counts. A single 192-core, 384-thread server represents an enormous amount of compute. The same principle applies whether you are moving from multiple 16-core sockets to a modern 64-core processor or jumping even higher.

It is worth noting that certain core counts have become particularly popular due to licensing considerations. Parts with 32 and 64 cores tend to see high demand, as do 64-, 128-, and 192-core parts, especially for AI server deployments. There is also an interesting supply chain angle: if you transition from a dual 24-core server to a single 48-core or 96-core configuration, you may have better luck actually getting your server delivered on time. Supply constraints on lower-core-count parts are creating allocation challenges, while high-core-count SKUs often receive priority.

Another important consideration is Intel’s server processor roadmap. Through our coverage at STH, we have reported that Intel’s Diamond Rapids-SP, the intended mainstream 8-channel memory part, is not coming to servers. Instead, Intel will only offer 16-channel CPUs (AMD will also have 16-channel options with Venice). More significantly, Intel Diamond Rapids does not include Hyper-Threading. If you need each physical core to equal two virtual CPUs, you are stuck with Granite Rapids or waiting until Hyper-Threading returns to Intel’s roadmap. We have heard from partners that Diamond Rapids licensing concerns were significant, which is why we were not overly surprised when Intel decided late in 2025 not to release the 8-channel parts. Getting two threads per physical core is one of the reasons we are deploying EPYC ourselves.
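The effect of losing two threads per core on vCPU counts is simple to sketch. The example assumes one vCPU per hardware thread, as the paragraph above describes; the function names and the 64-core and 128-vCPU figures are illustrative, not tied to any specific SKU.

```python
import math


def vcpus(cores: int, threads_per_core: int) -> int:
    """vCPUs exposed when each hardware thread counts as one vCPU."""
    return cores * threads_per_core


def cores_needed(target_vcpus: int, threads_per_core: int) -> int:
    """Physical cores required to expose at least target_vcpus."""
    return math.ceil(target_vcpus / threads_per_core)


print(vcpus(64, 2))          # 128, with two threads per core
print(vcpus(64, 1))          # 64, with one thread per core
print(cores_needed(128, 2))  # 64
print(cores_needed(128, 1))  # 128
```

Under per-core licensing, the last two lines are where the cost shows up: reaching the same vCPU count without a second thread per core takes twice as many licensed cores.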

This hits hard. We’ve been buying from a reseller for almost 10 years, and they’ve changed quote validity to 14 days. It used to be 90 days.