For a long time, the idea of having a home storage server meant compromising on price, performance, or form factor. The Silverstone CS01-HS enables something that a few years ago was improbable: a high-capacity, low-power mITX NAS in an attractive case. Although this case was released a few years ago, we saw it in a new light at Computex 2018, and Silverstone sent us one for review. When this case was released, the embedded motherboards for the Atom C2000 and Intel Xeon D-1500 lines all had up to six SATA connections. Now, the successor Intel Atom C3000 “Denverton” and Intel Xeon D-2100 chips are out with more SATA I/O and 10GbE, making the CS01-HS with 8x storage bays a more useful platform.

Silverstone CS01-HS Overview

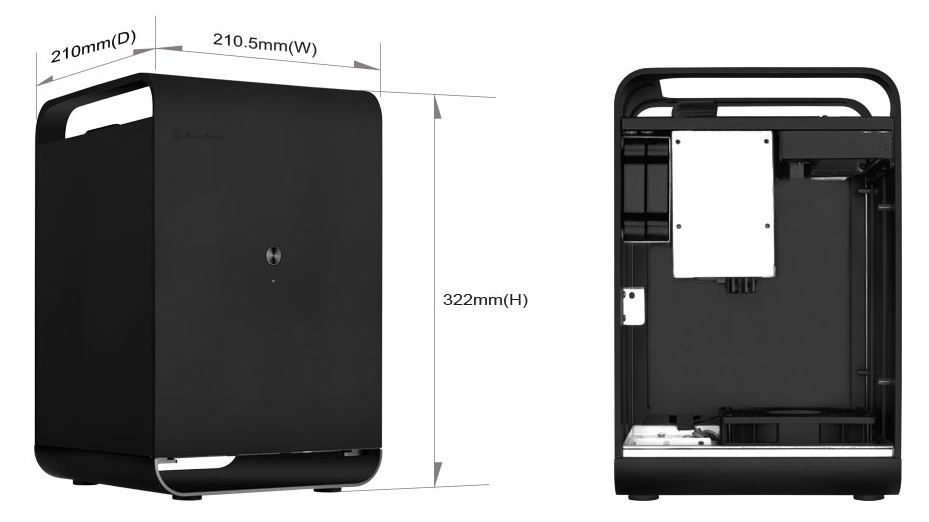

The Silverstone CS01-HS series has two models: the SST-CS01B-HS and the SST-CS01S-HS. Our review unit is the SST-CS01B-HS, with the “B” denoting the black finish. From a size perspective, this is an mITX-only chassis. Key dimensions for reference are 8.29″ x 12.68″ x 8.27″ (WxHxD). That yields a relatively petite form factor.
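For a rough sense of scale, the quoted dimensions can be turned into an approximate external volume. This is only a sketch: it assumes the chassis is a plain box and ignores the handles and feet, and the function name is ours, not Silverstone's.

```python
# Rough external volume from the quoted dimensions (inches), treating the
# chassis as a plain box. Handles and feet are ignored, so this is an estimate.
CUBIC_INCH_TO_LITERS = 0.016387064  # follows from 1 inch = 2.54 cm


def box_volume_liters(width_in: float, height_in: float, depth_in: float) -> float:
    """Return the volume of a box in liters, given its sides in inches."""
    return width_in * height_in * depth_in * CUBIC_INCH_TO_LITERS


# 8.29" x 12.68" x 8.27" (WxHxD) as quoted above
cs01_volume = box_volume_liters(8.29, 12.68, 8.27)
print(f"Approximate external volume: {cs01_volume:.1f} L")
```

Under those assumptions the case works out to roughly 14 liters.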

The pictures of the Silverstone when the unit is unpopulated look great. Here is the top view. If you are so inclined, you could stick aftermarket rubber feet on the side of the chassis and also make this the front/rear of the chassis.

This is the view that shows off almost every feature, save the power button and LEDs. The rear I/O panel has a standard space for a motherboard I/O panel. There is an external power supply connector that bridges to the internal PSU. It has a USB 3.0 “front panel” dual port setup. In the background of this shot, one can see the 120mm cooling fan.

The big feature, though, is the 6-bay 2.5″ hot-swap cage. These six bays pop out and provide externally hot-swappable SATA or SAS connectivity via a 7-pin connector. Our review unit was able to handle SAS3 12Gbps speeds using a short 0.3m SFF-8643 to 7-pin breakout cable. We did not get to test SAS3 with a longer cable, but that is impressive for a several-year-old backplane design.

The top of the case has what we will call “handles” which are metal pieces that arch over the case. There is another metal bar which allows cables terminating at the rear I/O panel to be hidden in the rear of the chassis.

Inside there are two more 2.5″ trays. These are not connected via backplanes so they need to be wired manually. The PSU mounts in the bottom corner of the chassis.

The bottom of the chassis has a massive 120mm fan. Our review unit had a 3-pin 1200rpm fan with a sub-20dBA noise rating. This is great, as noise can be an issue: our hyper-converged NAS is meant to be accessed remotely while sitting in a home or office environment. We would have preferred a PWM-controlled 4-pin fan. Still, for the vast majority of use cases in such a small chassis, this provides plenty of airflow. The fan has a nice filter mechanism which keeps the setup relatively clean.

This orientation has an interesting impact. The airflow of the case for the CPU and motherboard is much better than in many competitive offerings, even those by companies like Supermicro when using server-style mITX embedded motherboards.

The metal case was nice. We would have liked metal drive trays or screw-less drive trays, but the overall case feels like a winner. We wanted to take this piece a step further and actually see what the Silverstone CS01-HS can do. While mITX form factors are compact, they often sacrifice power and capabilities. We had a feeling the CS01-HS could support a surprisingly big platform and so we decided to try it. For this, we are introducing the Improbable Hyper-Converged NAS. Check out more about that build on the next page.

Will you be using this for something or was it just an over the top example of what could be built?

This platform is being used with a few minor modifications (swapping to larger 1.92TB SATA SSDs for example) for some of the embedded/ edge testing infrastructure we have. I do want to upgrade it to 5TB hard drives at some point. We just had the 4TB drives on hand.

$3200? Did we miss a zero somewhere?

Are you thinking of making a follow-up on the software setup/config side?

No way this will run quietly when it gets warm, and it would probably start throttling soon when you really push it. Hope that 120 has a high top-end rpm!

Jon – we can. Right now we are using the setup we detailed here: https://www.youtube.com/watch?v=_xnKMFuv9kc and Ultimate Virtualization and Container Setup

Navi – this has done a number of benchmarking sessions. 46-47C is the hottest it has gotten thus far. Numbers are comparable to the active cooling version we tested: Intel Atom C3958 benchmarks

Hard Drives: 6x 5TB

SATA SSDs: 2x 2TB

Write Cache: 1x Intel Optane 64GB

Read Cache: 1x Intel 400GB NVMe

Can you give a quick overview of what each type of storage will be used for? What will you be doing that you need 3 levels of speed?

Are you going to leave it in service without an I/O shield? I can imagine not having one helps from a cooling perspective, but leaving it out means lots of the PCB is exposed. Presumably there is a reason they supply one.

Andrew – the hard drive with caches are for larger capacity items. For example, we generate about 1GB of log data per configuration we run through the test suite. Compressed, we generally serve 300GB of data or so to a host being tested during a run. Those then have to get analyzed. Usually, the SATA SSDs with low speed (10GbE) networking work decently well for VMs.

Goose – great question. These tend to work okay without I/O shields, but there is a lot exposed. We took that front-on photo also without the sides to let a bit more light in for the shot. In a horizontal orientation, it is a bit less of a concern. In a vertical orientation, the I/O shield is a good idea. We tried with the I/O shield and it was about 1C higher CPU temps under load but the ambient changed 0.2C so net 0.8C movement. Your observations are on point.
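The ambient-adjusted figure in the reply above is just the difference between the two temperature changes. A minimal sketch of that arithmetic follows, assuming the ambient temperature rose by 0.2C between the two runs; the function name is illustrative.

```python
def ambient_adjusted_delta(cpu_delta_c: float, ambient_delta_c: float) -> float:
    """Change in CPU temperature after subtracting the change in ambient."""
    return cpu_delta_c - ambient_delta_c


# Figures quoted above: about 1C higher CPU temperature with the I/O shield
# fitted, while ambient moved by 0.2C (assumed here to be an increase).
net = ambient_adjusted_delta(cpu_delta_c=1.0, ambient_delta_c=0.2)
print(f"Net CPU temperature change: {net:.1f} C")
```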

Thanks for the rapid response Patrick. It’s a very interesting idea and one that a friend of mine has explored. He used a U-NAS NSC-800 but you would buy the U-NAS NSC-810 now: http://www.u-nas.com/xcart/product.php?productid=17639&cat=249&page=1

Has all the features of the one you used but has 8 3.5″ instead of 6 2.5″

The issues he faced were that he used a consumer board and the fan on the heatsink failed, which, because the case is so compact, is a pain in the arse to change.

We have the U-NAS 8-bay in the lab; the Silverstone is better quality and has a better airflow design. It is a 2.5″ (8x including the internal bays) design as well.

Oh my bad it’s the 800 that has the 3.5″ disks. http://www.u-nas.com/xcart/product.php?productid=17617

Airflow for the motherboard isn’t the best but the disks are fine as they have 2x 120mm.

I can understand what you mean about quality though, as Silverstone and others such as Lian Li make really high quality gear.

Great article, can you guys follow up with your recommended hyper-converged software and other fun stuff to add.

Thanks!

I am interested to see how those 2.5″ 4TB Seagates work for you. I know the article said they were SSDs but I cannot find a 4TB Seagate SSD anywhere on their site with that profile. Based on the one pic it looks like they have a hard drive controller board and room on the bottom for a spindle, so I am guessing they have to be these: https://www.seagate.com/www-content/product-content/barracuda-fam/barracuda-new/files/barracuda-2-5-ds1907-1-1609us.pdf

I built a 24-drive ZFS (Solaris 11.3) array with the 5TB version on a Supermicro platform with a direct-attach backplane and 3 SAS controllers and had nothing but issues with those drives. Under heavy load (I was using it as a Veeam backup target) the disks would time out and then drop out of the array. All I could figure out was that the drives, being SMR, had issues with a CoW file system like ZFS.

Yes, a follow-up on how you configured the storage and a bit of the reasoning behind it.

The article referenced by Patrick is a good start to install the software side but stopped at thing mostly all on the is drive still.

Was wondering also if the 3200$ stated price includes all the drives and RAM? Is this from a regular web store like Newegg, etc.? Or was there a wholesale discount of some sort?

*mostly on the os drive still

Cool NAS build but how does this qualify as Hyper-Converged?

Hyper-Converged architecture is a cluster architecture where compute, memory, and storage are spread between multiple nodes ensuring there is no single point of failure. This is a single board, single node NAS chassis so unless I missed something, this is anything but Hyper-Converged.

This case has several issues you guys should know.

1) It has very bad capacitors on the backplane. After a year or two they could short-circuit and prevent the system from powering up. It is easy to remove the backplane or replace the capacitors.

2) The HDD cage lacks ventilation. Any type of HDD will warm up. The only way to prevent burning the data is removing the backplane.

3) The bottom dust shield is not centered on the fan’s axis.

4) The SSD cage has all the same problems as the HDD cage.

I believe since this is one compute node, this is technically a Converged Infrastructure (CI), save the single points of failure (power). Hyper-converged Infrastructure implies multiple systems (think two, four, eight, etc.) of these CI nodes with all nodes being tightly-coupled with SDDC to greatly reduce the impact of a single or multiple nodes going offline.