Aligning with the AMD EPYC launch, we have a dual socket system in the lab for testing. We are not going to publish a bevy of performance and power consumption data today because we know there are some performance and power tweaks in a future BIOS revision for the platform. Still, we wanted to share some insights into power consumption we are seeing on the platform.

AMD EPYC 7601 Test Configuration

Our test system was relatively simple, which lets us get directional power consumption data. Here is a quick overview:

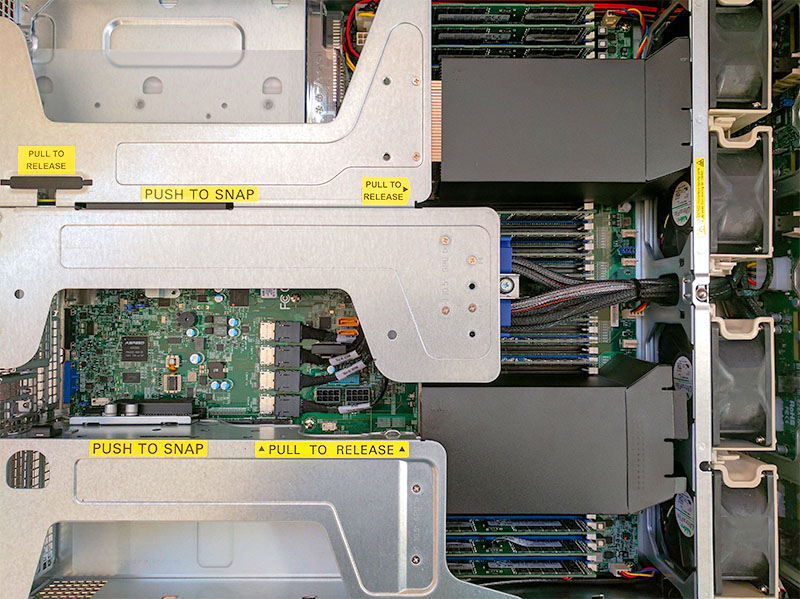

- Platform: Supermicro 2U Ultra Server with NVMe Support

- CPU: 2x AMD EPYC 7601 (32 cores/ 64 threads each)

- RAM: 512GB using 16x 32GB DDR4-2400 ECC RDIMM (eight per CPU)

- Boot SSD: Intel DC S3520 480GB

- NVMe SSD(s): Intel DC P3600 800GB

- Network Adapter: Mellanox ConnectX-3 Pro EN 40GbE (2x QSFP+ 1m DACs)

Overall, AMD is pushing the EPYC processors as part of larger systems. The 2U Ultra platform has a huge number of PCIe 3.0 slots and can accept 4x 2.5″ or 3.5″ NVMe drives. A more heavily populated system could certainly use significantly more power.

We test in our Silicon Valley data center where we use 208V 30A circuits to power machines.

Dual AMD EPYC 7601 Initial Power Consumption Observations

We have been running workloads on the AMD EPYC platform for several days. Although we do not have a full set of data, we did want to publish some preliminary numbers. Aside from the configuration changes, we do expect some power consumption impact from newer AMD BIOS improvements.

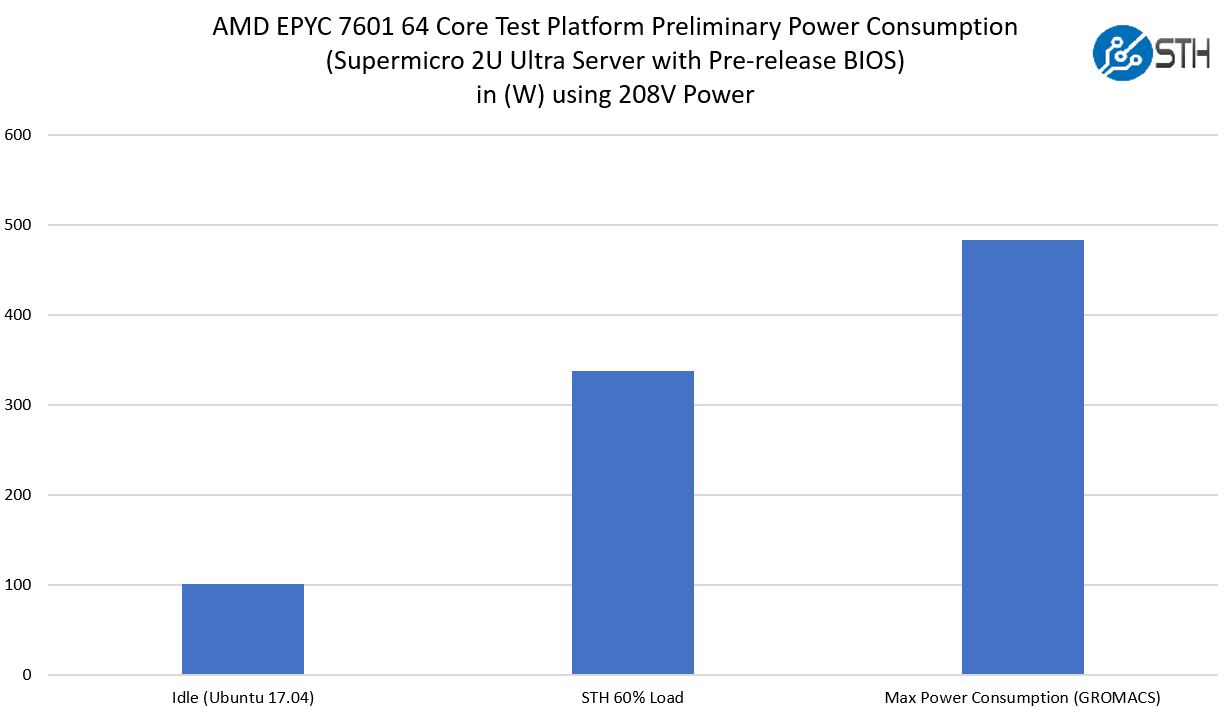

We took three quick data points just to get a sense of how much power the system was using.

- Idle

- Our 60% enterprise static load

- GROMACS AVX2_256 across 128 threads

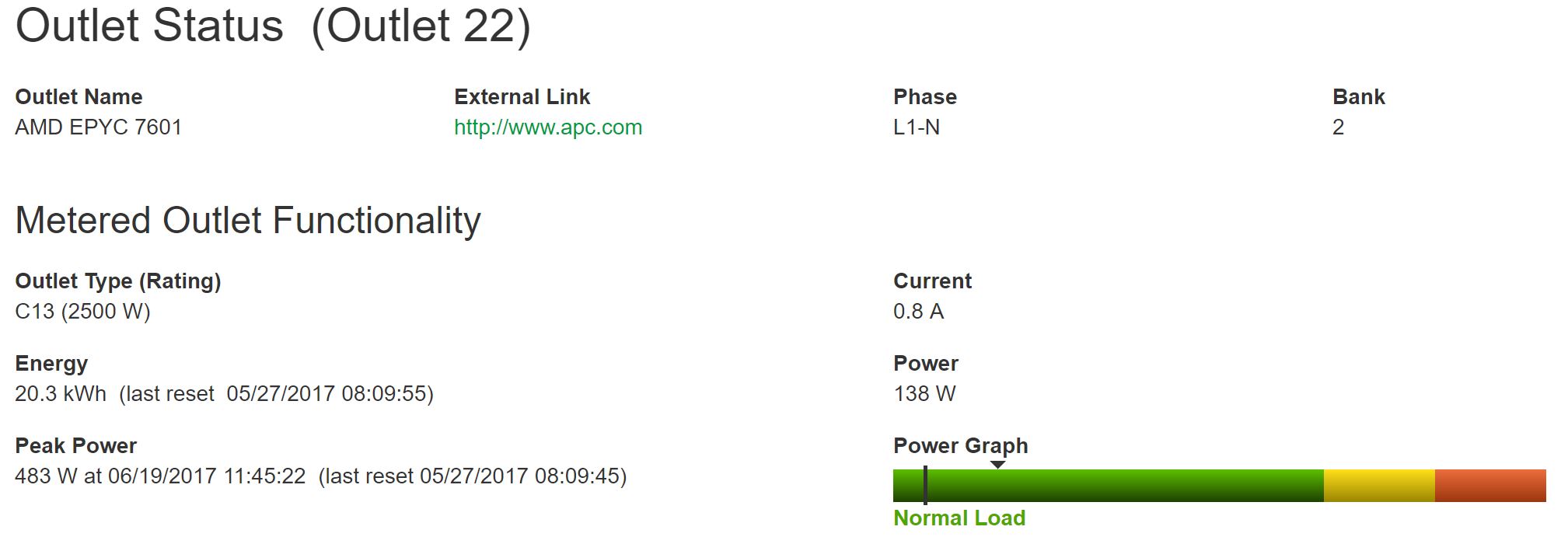

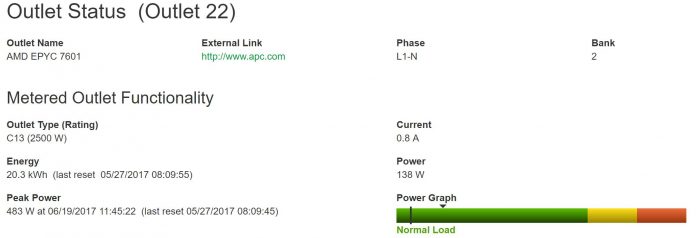

These are being measured on our APC/ Schneider Electric PDUs in the data center lab, similar to how a colocation provider may meter a customer. Here is a quick look at the outlet. If you were wondering why it is using 138W, that is while installing packages from the Ubuntu 17.04 repos.

Overall, these are good numbers for the platform. The idle power consumption is about the same as we would expect on high-core count Broadwell-EP chips configured with 16x DDR4 DIMMs in a 2 DPC configuration. The 60% workload number is great. Although we are not publishing benchmarks, the numbers were very good. In terms of the GROMACS AVX2 workload, we hit 483W, the maximum thus far in our testing.
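To put the 483W peak in the context of the 208V 30A circuits mentioned earlier, here is a rough back-of-the-envelope sketch in Python. It is not part of our test methodology. It assumes a single-phase circuit, treats watts and volt-amps as equal (power factor near 1), and applies the commonly used 80% continuous-load derating. The function names and the derating constant are illustrative assumptions, and your facility's rules may differ.

```python
import math

# Figures taken from the article
CIRCUIT_VOLTS = 208       # data center circuit voltage
CIRCUIT_AMPS = 30         # data center circuit breaker rating
PEAK_SERVER_WATTS = 483   # highest draw observed so far (GROMACS AVX2)

# Assumption: plan for 80% of the breaker rating under continuous load.
CONTINUOUS_DERATE = 0.8


def usable_watts(volts, amps, derate=CONTINUOUS_DERATE):
    """Approximate usable power on a single-phase circuit (power factor ~1 assumed)."""
    return volts * amps * derate


def servers_per_circuit(peak_watts, volts=CIRCUIT_VOLTS, amps=CIRCUIT_AMPS,
                        derate=CONTINUOUS_DERATE):
    """Whole number of servers that fit if every one runs at its peak draw."""
    return math.floor(usable_watts(volts, amps, derate) / peak_watts)


if __name__ == "__main__":
    budget = usable_watts(CIRCUIT_VOLTS, CIRCUIT_AMPS)
    count = servers_per_circuit(PEAK_SERVER_WATTS)
    print(f"Usable circuit budget: {budget:.0f} W")
    print(f"Servers at {PEAK_SERVER_WATTS} W peak: {count}")
```

Sizing against the observed peak rather than idle is the conservative choice here, since a rack is usually budgeted for the case where every node is busy at once.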

Final Words

In systems that leverage large numbers of power hungry devices, for example, GPU compute servers and NVMe storage servers, the CPU is a relatively low power consumption line item. We were surprised to see how low the actual power consumption is on the platform. Running an AVX2 workload, we were expecting much higher power consumption, but at under 500W for 128 threads, this is excellent.

With the level of power/ performance of the new systems, you can essentially replace four Intel Xeon E5-2600 (V1) servers with a single dual socket EPYC node and get more performance (in most cases) in a single node that uses half the power. That is absolutely stellar. The AMD EPYC platform is still seeing major updates to BIOS for power and performance which is why we are calling these preliminary results. At the same time, we are already seeing some impressive figures.

More AMD EPYC Launch Day Coverage From STH

- AMD EPYC 7000 Series Architecture Overview for Non-CE or EE Majors

- AMD EPYC 7000 Series Platform-Level Features PCIe and Storage

- AMD EPYC 7000 Series Key Security Virtualization and Performance Features

- AMD EPYC 7000 Series SKU Lists for Launch

We will have more information on AMD EPYC including benchmarks once we are allowed to release the information.

For that GROMACS benchmark, I know you are under NDA for anything Ryzen centric, but could you at least put the power consumption number into context for a similarly specced Broadwell with 22 cores and maybe even a Xeon Phi since you’ve tested one?

I know you can’t publish the actual performance of Zen just yet (and the performance number is absolutely critical to understanding what 483 watts means) but you could provide some context as to what older systems do. Of course Skylake SP would be another interesting datapoint when it becomes available.

You could do the same thing for the “STH 60%” workload too.

Sorry, we agreed to wait for final production firmware for details on benchmarks. There are a lot of understandable concerns about publishing numbers on pre-production firmware. Not ideal, but we can at least put some bounds around how the system may perform. For example, given what we have seen thus far, there are likely workloads that can generate more power consumption by 10% or so, but it is unlikely you will see 1kW from this configuration. Likewise, we were able to provide an idle view as another approximate goalpost.

We are under embargo for Skylake-SP. We will have more on all of this in future launches. Stay tuned.

It is equally hard having tons of data and not being able to share/ use it.

I’m sure SuperMicro would appreciate you don’t spell their product as Supermciro. Seriously.

Interesting results. I was going to spec out an E5-2630v4 system for a Plex media server capable of 4K transcoding, but with EPYC, it may make more sense. Would it be safe to assume (beta firmware, etc. aside) that a single socket EPYC 7601 + 16GB of RAM (2 sticks) and no 40GbE adapter might draw ~40-50 watts at idle (given the same server, etc.)? A 32 core/64 thread machine that idles under 60 watts would be insane

meant to say 2GB sticks

Evan – usually these things do not scale linearly. There are cooling fans, BMCs, PSUs, network adapters, etc. that all take some power. We will have a single socket option to test soon.

Interesting, is there any reason to run the machine on 208V and not 110V? Thanks.

Andor – 208V is common in North American data centers.