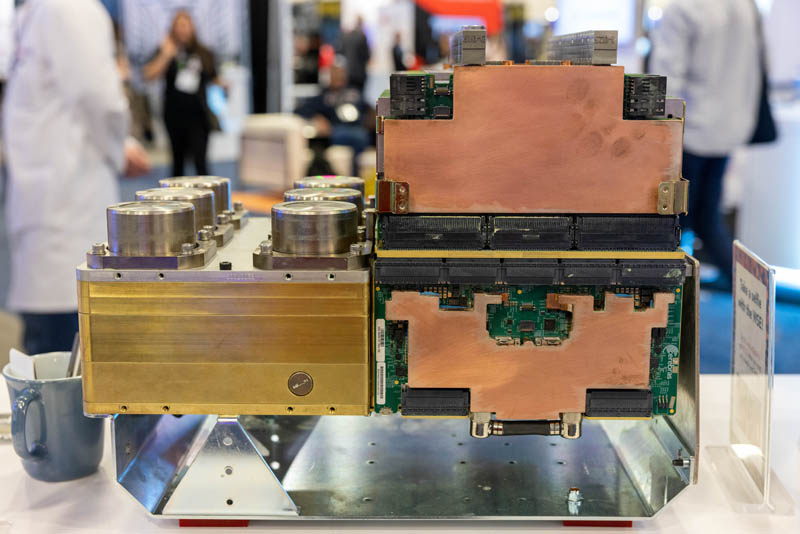

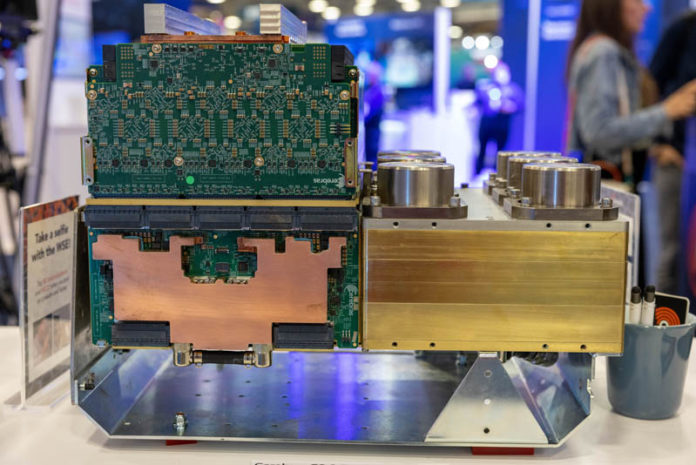

At SC22, Cerebras showed off something that we rarely get to see: the engine block at the heart of its CS-2 computing platform. By that, we do not just mean the company’s giant WSE-2 chip that we have seen many times before. Instead, it was the stuff that goes around the giant chip and makes it tick.

A Cerebras CS-2 Heart Bare on the SC22 Show Floor

When we discuss the Cerebras product, we usually take one of two views. The first is the CS-2 system that the company sells.

The second way we typically discuss the Cerebras product is in terms of its massive chip, or its Wafer-Scale Engine-2.

Still, going from an enormous AI chip to a system is not an easy feat. That is what was shown at SC22.

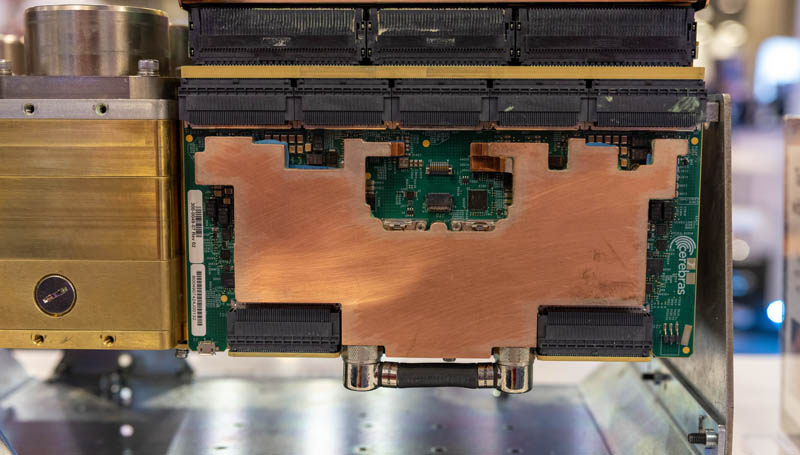

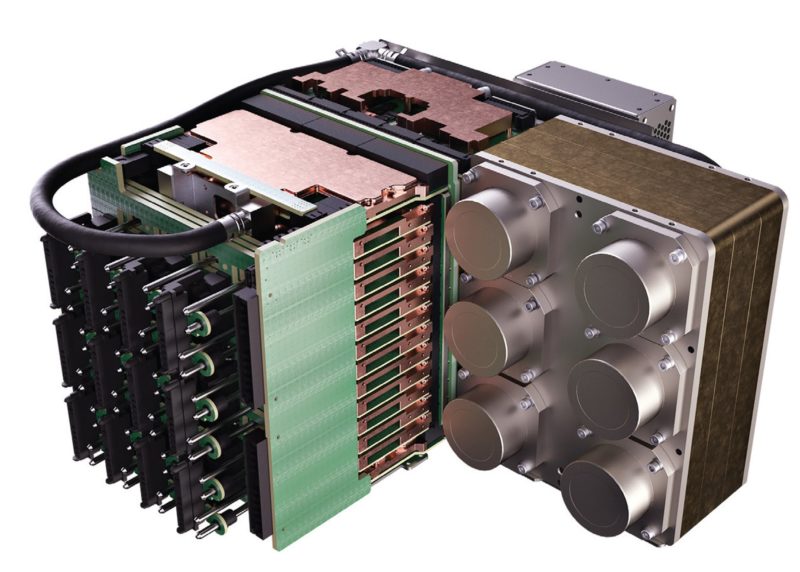

At the show, the company displayed what looked like a bunch of metal with a few PCBs poking out. The company calls this its engine block. In our previous discussions with Cerebras, building it came across as a huge engineering feat: figuring out how to package, power, and cool the massive chip was a key challenge. It is one thing to have a foundry create a special wafer. It is another to get that wafer to turn on, not overheat, and do useful work.

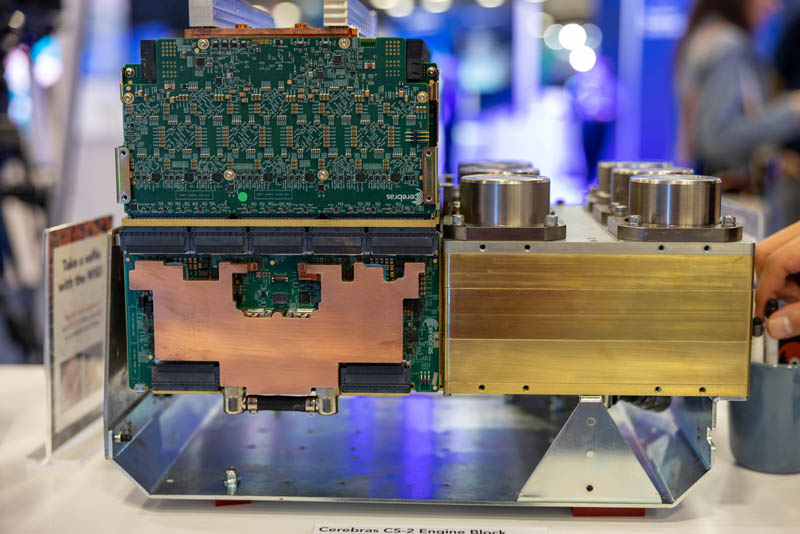

This is a view of the other side:

While we talk about servers having to move to liquid cooling because of density, we are talking about 2kW/U servers or perhaps accelerator trays with 8x 800W or 8x 1kW parts. For the WSE/WSE-2, all of that power and cooling needs to be delivered to a single large wafer, meaning that even things like the thermal expansion rates of different materials matter. The other implication is that virtually everything on this assembly appears liquid-cooled.

Some of our readers may see writing on the fittings on the bottom boards. For those who are interested, that is a Koolance label.

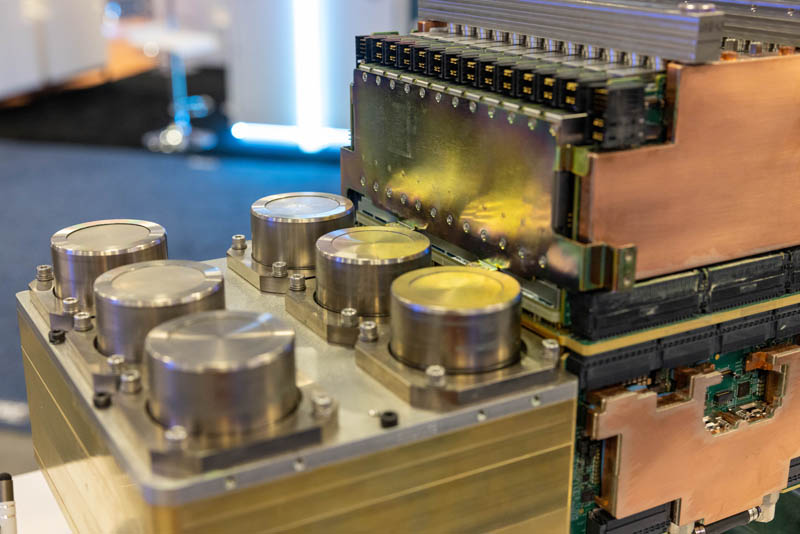

The top row of boards is very dense. The Cerebras rep at the booth told me those were power supplies, which makes sense given that we see a relatively low connector density on them.

The way that the CS-2’s engine block was shown at SC22 may have seemed strange to some. This is how the engine block would be situated in the rear of the system (the CS-2 is a “rear engine supercomputer”?):

Overall this was great to see at the show.

Final Words

At SC22, I was able to sit down with Andrew Feldman. We discussed the company’s new Andromeda announcement, which scales work almost linearly across 16 CS-2s. We also discussed new areas of research that are underway utilizing the compute and memory capabilities of the platform. From what I would imagine, more systems are deployed today than when I stopped by for a pre-pandemic shutdown interview in 2019. That is leading to more opportunities for teams to use the hardware in new domain areas. My big takeaway, and hopefully that of our readers, is that Cerebras is not focusing on the jobs that take eight GPUs an hour or a day to do. The company is instead focusing on problems so large that if you used GPUs, the inter-accelerator traffic would become too great.

Update: We just published our video with more views and footage of the engine block here:

Folks may know I have been critical of Graphcore’s approach of trying to build a similar-sized but less expensive accelerator to compete with NVIDIA. Perhaps the key to why Cerebras is doing something different is that its “engine block” replaces all of the power, cooling, and nests of interconnect wiring found in a GPU cluster and packages it all into something set on a table on the SC22 show floor.

The Cerebras CS 2 WSE 2 Engine Block looks the part.

Are they pistons? asked the candid reader :))

It obviously can’t deliver 100 FPS in Crysis. But I bet within 100 seconds it can train an appropriate DNN capable of concurrently playing 100 games of Crysis each running at 100 FPS.