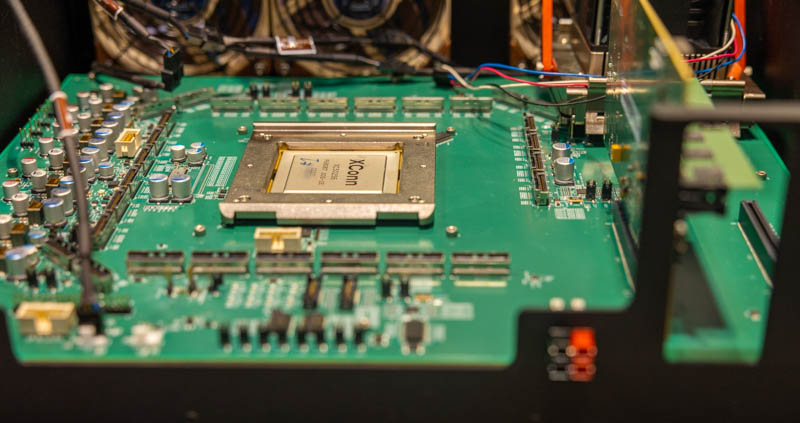

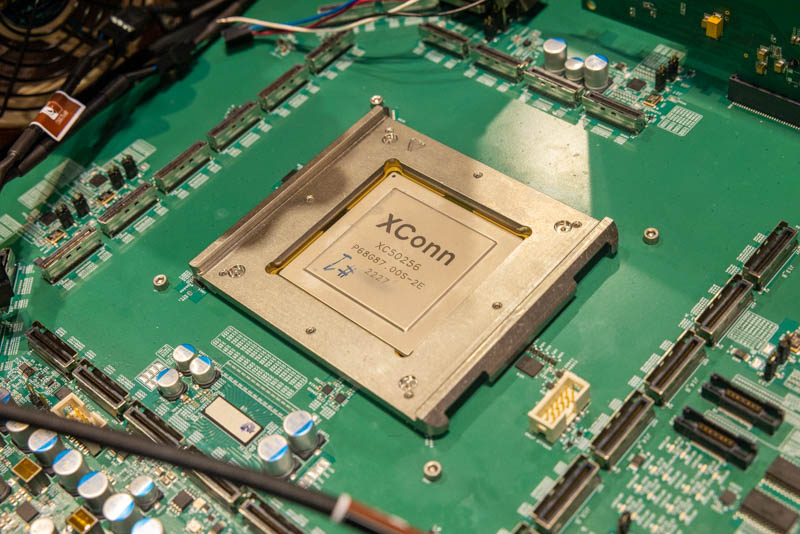

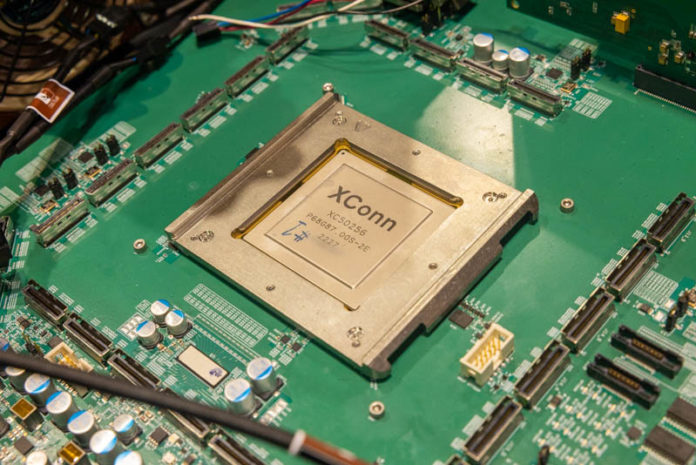

At FMS 2022, we saw a piece of technology that is straight from the future. We were told that there were only two of these platforms available at this time. The chip shown is the XConn XC50256 CXL 2.0 switch chip. This is a massive chip designed for next-gen switched CXL 2.0 infrastructure.

XConn XC50256 CXL 2.0 Switch Chip Shown at FMS 2022

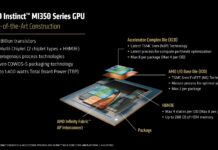

For a quick recap, CXL, or Compute Express Link, is a protocol that shares the PCIe physical layer (PCIe Gen5 for CXL 1.1 and 2.0; CXL 3.0 can use PCIe Gen6) in chips that we will see later in 2022 and into 2023. If you want to learn more about why CXL will be a game changer, check out our taco primer.

CXL 2.0 adds a number of new features over CXL 1.1. Perhaps the biggest is switching. CXL switching is somewhat like PCIe switching, and it gets a major push towards a fabric in CXL 3.0. That said, not all PCIe Gen5 switches will also be CXL switches. This shift means we will see a new generation of switching technologies and perhaps even new vendors. XConn aims to be one of the new solution providers in this space with its CXL 2.0 switch.
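One way to picture the difference: CXL 2.0 permits a single layer of switching between a host and its devices, while CXL 3.0 adds multi-level (cascaded) switching. The toy Python model below checks that rule, assuming a simplified tree view of one host's hierarchy. The `Node` class and the `switch_levels` and `fits_cxl2` helpers are hypothetical illustrations, not part of any CXL or XConn software interface.

```python
from dataclasses import dataclass, field

# Hypothetical toy model: each Node is a host, a switch, or an end device,
# and one host's view of the topology is treated as a simple tree.
@dataclass
class Node:
    name: str
    kind: str  # "host", "switch", or "device"
    children: list["Node"] = field(default_factory=list)


def switch_levels(node: Node) -> int:
    """Count the most switches crossed on any path from node down to a leaf."""
    deepest_below = max((switch_levels(child) for child in node.children), default=0)
    return deepest_below + (1 if node.kind == "switch" else 0)


def fits_cxl2(host: Node) -> bool:
    """True if no path from this host crosses more than one switch (CXL 2.0 limit)."""
    return switch_levels(host) <= 1


if __name__ == "__main__":
    # One host behind a single switch with two memory devices: allowed in CXL 2.0.
    sw = Node("sw0", "switch", [Node("mem0", "device"), Node("mem1", "device")])
    single = Node("cpu0", "host", [sw])
    print(fits_cxl2(single))  # True

    # Two cascaded switches: needs CXL 3.0-style multi-level switching.
    cascaded = Node("cpu1", "host", [
        Node("sw-a", "switch", [Node("sw-b", "switch", [Node("mem2", "device")])]),
    ])
    print(fits_cxl2(cascaded))  # False
```

A real CXL 2.0 switch can also be shared by several hosts, each with its own virtual hierarchy; this sketch models only a single host's view.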

If you count the connectors and PCIe x16 slots and look at the XC50256 model number, you arrive at the same figure we were told in the booth: this is a 256-lane CXL 2.0 switch chip. We were also told that this is one of only two working demos of this platform in the world.
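The 256-lane figure also gives a rough sense of scale. The sketch below is back-of-envelope arithmetic only, assuming raw PCIe Gen5 signaling (32 GT/s per lane per direction with 128b/130b encoding) and ignoring CXL flit, link-layer, and protocol overhead. It shows how the lanes divide into ports of a given width, not which port configurations the XC50256 actually supports. The constant and function names are hypothetical.

```python
# Back-of-envelope lane math under stated assumptions (hypothetical names).
TOTAL_LANES = 256       # lane count implied by the XC50256 model number
GT_PER_LANE = 32        # PCIe 5.0 signaling rate, GT/s per lane, per direction
ENCODING = 128 / 130    # PCIe 5.0 128b/130b line-encoding efficiency


def ports(width: int, lanes: int = TOTAL_LANES) -> int:
    """How many ports of a given lane width fit into the lane budget."""
    return lanes // width


def gbytes_per_s(lanes: int) -> float:
    """Approximate GB/s per direction after line encoding, before CXL protocol overhead."""
    return lanes * GT_PER_LANE * ENCODING / 8  # 8 bits per byte


print(f"{ports(16)} x16 ports or {ports(8)} x8 ports")  # 16 x16 ports or 32 x8 ports
print(f"x16 link: {gbytes_per_s(16):.0f} GB/s per direction")  # x16 link: 63 GB/s per direction
print(f"all lanes: {gbytes_per_s(TOTAL_LANES):.0f} GB/s per direction")  # all lanes: 1008 GB/s per direction
```

Under these assumptions, 256 lanes works out to 16 x16 ports (or 32 x8 ports), with each x16 link carrying roughly 63 GB/s per direction and the whole chip about 1 TB/s per direction.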

This chip is absolutely massive and sits under a huge cooler.

Final Words

What we saw at FMS 2022 is clearly a development platform. It is designed to have many devices plugged in, and the single-level switching topology of CXL 2.0 is a starting point for the technology. Still, this demo at FMS 2022 was the first time we saw a working CXL 2.0 switch. Over the next few years, CXL will revolutionize the data center, and a big part of that is having CXL switches as fundamental building blocks. The XConn XC50256 was perhaps one of the most important pieces of technology shown at FMS 2022, if not the most important.

…So in the CloudX of the near(ish) future: Dockerfiles / VM definitions, Ansible playbooks / Terraform, etc., plus VFe (Virtual Iron) configs for said app stack // As in: this app works best with 4 fast cores per server (so take chunks of a 16-core fast spin EPYC, for example), 16GB of RAM, a 100Gb network, and 64GB of fast local SSD storage.

Ah, perhaps the VFe definition/creation tool could be called “IronForm”, or we could just add a complex VFe type definition to replace “instance_type” in Terraform, for when one wants to “bend their own metal” for a given app.

New tech job ad?: “Linux SA, blah, blah, with farrier experience” // farrier being the metaphor for the added job skill

Can we get some discussion of CXL latency? If the numbers limit CXL to just a PCIe fabric, it won’t change anything – memory is really the main question. 50ns?

Mark – I have been told by a few folks that it is roughly on par with a NUMA node hop to memory.

Something very nice is the lack of traditional x16 and x8 PCIe slots for the physical layer, and the use of SFF-type connectors instead. This change should come to the entire industry, including desktops, IMO.

Mark Hahn, memory architecture will eventually become just like processors: a block that will “run” memory instructions and move data blocks to be processed. Then switch architecture will change how shared cloud servers really work.