Now that both Intel and AMD have launched new server platforms, the industry is firmly on the path of its DDR5 transition. We have already discussed the speed increase a bit, but a lot more has changed in this generation. For example, each DDR5 DIMM is now split into two independent memory channels. Also, even an ECC DDR5 UDIMM is no longer compatible with platforms that support RDIMMs. It was time to do a deep dive on why DDR5 memory is different.
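A quick way to see why DDR5 splits each DIMM into two channels is to count the bytes each burst delivers. This is a minimal sketch assuming the standard JEDEC data widths and burst lengths (a 64-bit DDR4 channel with BL8, a 32-bit DDR5 subchannel with BL16) and ignoring ECC bits; the function name is ours, for illustration only.

```python
def burst_bytes(data_bus_bits: int, burst_length: int) -> int:
    """Bytes delivered by one read/write burst: bus width in bytes times burst length."""
    return data_bus_bits // 8 * burst_length

# DDR4: one 64-bit data channel per DIMM, burst length 8 (BL8)
ddr4_burst = burst_bytes(64, 8)
# DDR5: two independent 32-bit subchannels per DIMM, burst length 16 (BL16)
ddr5_subchannel_burst = burst_bytes(32, 16)

print(f"DDR4 64-bit channel, BL8:     {ddr4_burst} bytes per burst")
print(f"DDR5 32-bit subchannel, BL16: {ddr5_subchannel_burst} bytes per burst")
```

Both come out to 64 bytes, the size of an x86 cache line, so doubling the burst length lets each half of a DDR5 DIMM return a full cache line on its own while the other half services a different request.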

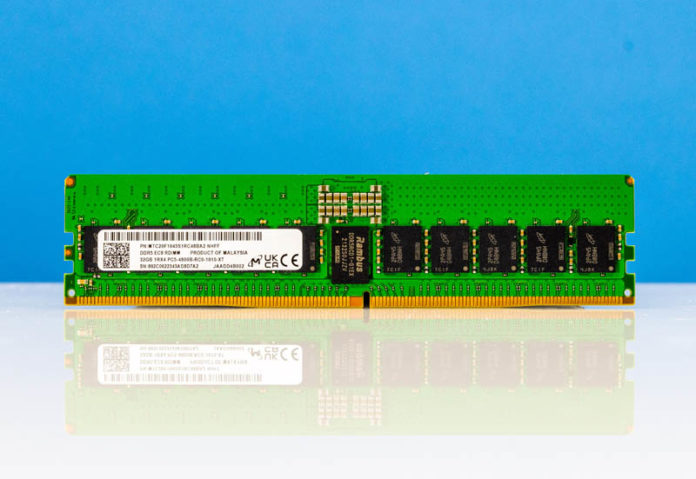

As a quick note here, I wanted to do a piece on the DDR4 to DDR5 transition. We worked with Micron, and the company is sponsoring this article and video, sending DDR5 RDIMMs to use in this article. We just wanted to say thank you to Micron for this help and also explain why our readers will see only Micron memory in this article.

Since this is a big topic, we also have a video for this one that you can find here:

As always, we suggest opening this in its own tab, window, or app for a better viewing experience.

Why DDR5 is Absolutely Necessary in Modern Servers: Core Count Growth

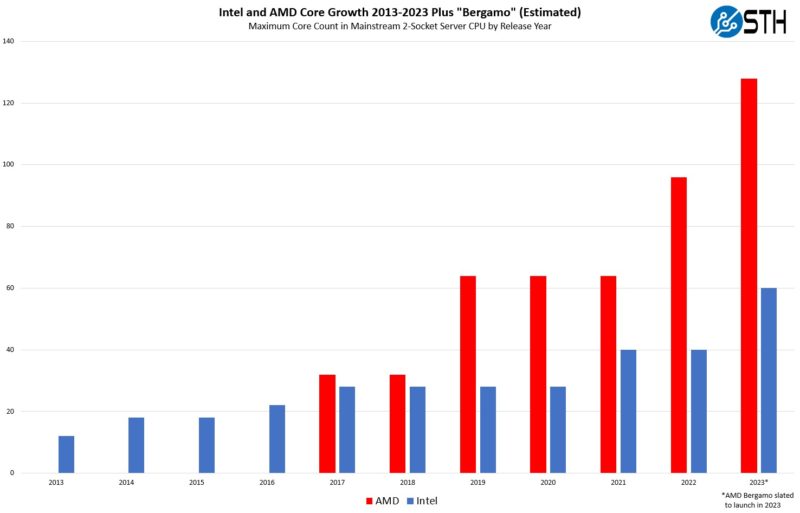

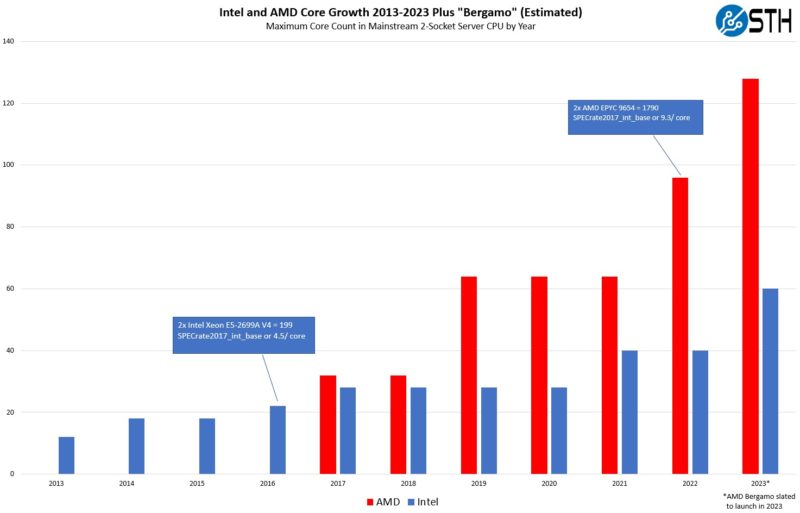

Here is the setup from our earlier piece, Updated AMD EPYC and Intel Xeon Core Counts Over Time. Over the last ten years, the server market has evolved from 12 cores/socket to 96 cores/socket today, with 128 cores/socket coming relatively soon on the same platforms already in the market.

What is clear is that servers are consolidating what used to be in racks into single nodes. This trend will accelerate in the future with chiplets and CXL. One of the biggest challenges with system scaling is memory bandwidth. Having more cores and more PCIe devices is great, but if portions of the system are sitting idle waiting for data, then they are being wasted.

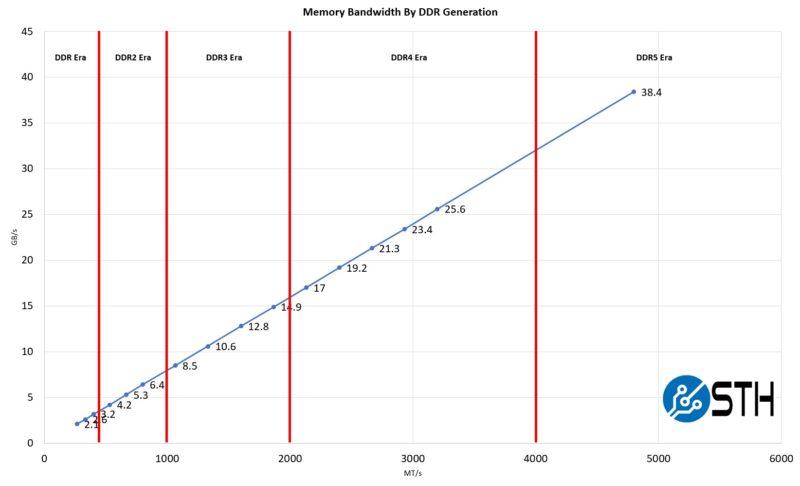

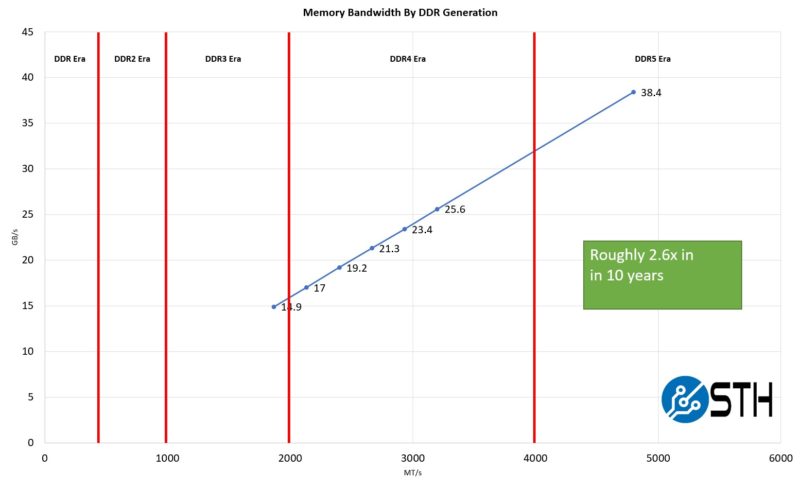

The jump from DDR4-3200 to DDR5-4800 is enormous: a 50% increase in data rate per channel.
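The theoretical peak per channel is just the transfer rate times the bytes moved per transfer. A minimal sketch, counting a DDR5 channel as 64 data bits (both 32-bit subchannels of one DIMM) and ignoring ECC bits; these are peak figures, and sustained bandwidth in real systems is lower.

```python
def peak_gbps(mt_per_s: int, bus_bits: int = 64) -> float:
    """Theoretical peak GB/s for one channel: transfers per second times bytes per transfer."""
    return mt_per_s * (bus_bits / 8) / 1000

ddr4 = peak_gbps(3200)  # one 64-bit DDR4 channel
ddr5 = peak_gbps(4800)  # two 32-bit DDR5 subchannels = 64 data bits per DIMM

print(f"DDR4-3200: {ddr4:.1f} GB/s per channel")
print(f"DDR5-4800: {ddr5:.1f} GB/s per channel")
print(f"Increase:  {ddr5 / ddr4 - 1:.0%}")
```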

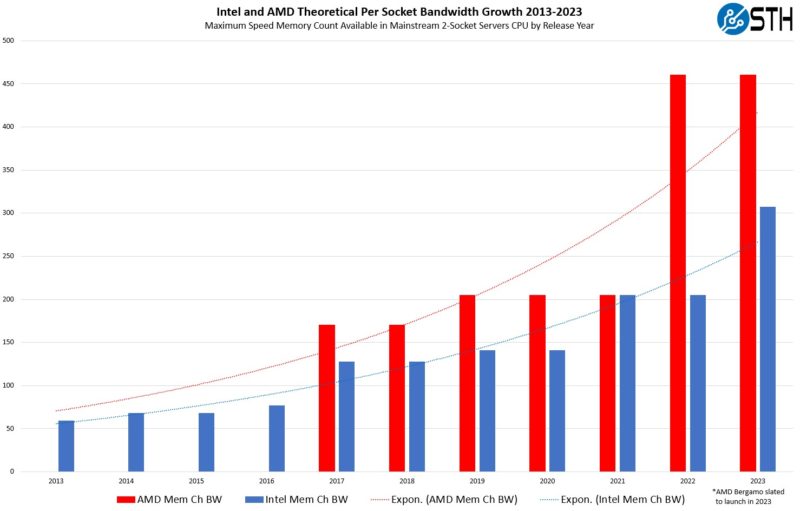

Since memory speeds are scaling more slowly than core counts, server CPU vendors have turned to a simple solution: scaling out the number of memory channels along with using faster memory.

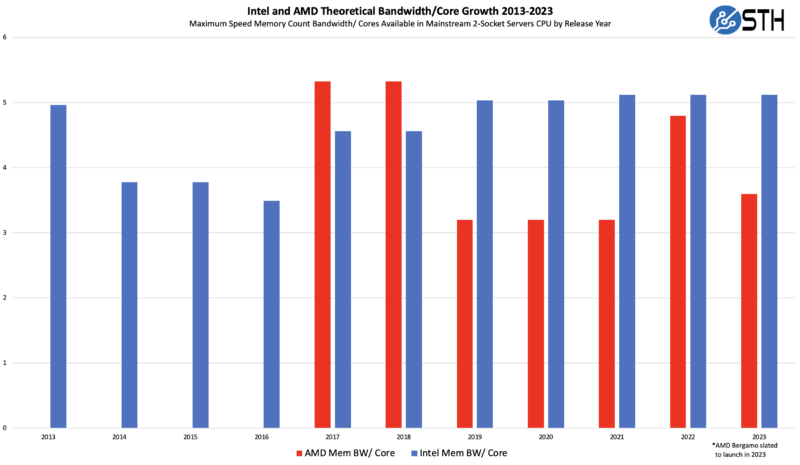

Increasing the number of memory channels while also increasing memory speed means that the industry has been able to at least keep feeding cores with a similar amount of bandwidth over time. Note that we are using the maximum core counts here; if one keeps core counts constant, for example at 32 cores, then memory bandwidth per core increases.
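The per-core figure is channels times transfer rate times 8 bytes, divided by cores. A minimal sketch; the configurations below are illustrative assumptions (they resemble a 64-core, 8-channel DDR4-3200 socket and a 96-core, 12-channel DDR5-4800 socket), not values taken from our charts, and the results are theoretical peaks.

```python
def gb_per_core(channels: int, mt_per_s: int, cores: int) -> float:
    """Theoretical peak memory bandwidth per core in GB/s (64 data bits per channel)."""
    return channels * mt_per_s * 8 / 1000 / cores

# Hypothetical socket configurations: (channels, MT/s, cores)
configs = {
    "8ch DDR4-3200, 64 cores": (8, 3200, 64),
    "12ch DDR5-4800, 96 cores": (12, 4800, 96),
    "8ch DDR4-3200, 32 cores": (8, 3200, 32),
    "12ch DDR5-4800, 32 cores": (12, 4800, 32),
}
for name, (ch, speed, cores) in configs.items():
    print(f"{name}: {gb_per_core(ch, speed, cores):.1f} GB/s per core")
```

At maximum core counts the per-core number moves modestly (3.2 to 4.8 GB/s here), while holding the core count at 32 more than doubles it (6.4 to 14.4 GB/s).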

With that, many wonder why people talk about systems being memory bandwidth limited. There is another side to the equation: performance per core. Performance per core has increased by roughly 2-3x over the past decade on high core count SKUs. We would expect an AMD EPYC Bergamo core to perform more in line with a Zen 3 Milan core than a full Zen 4 Genoa core, but then again, Bergamo has 33% more cores.

With that backdrop, memory bandwidth has only scaled 2.6x over the last 10 years, and that is with DDR5. Without DDR5, it would be less than double.

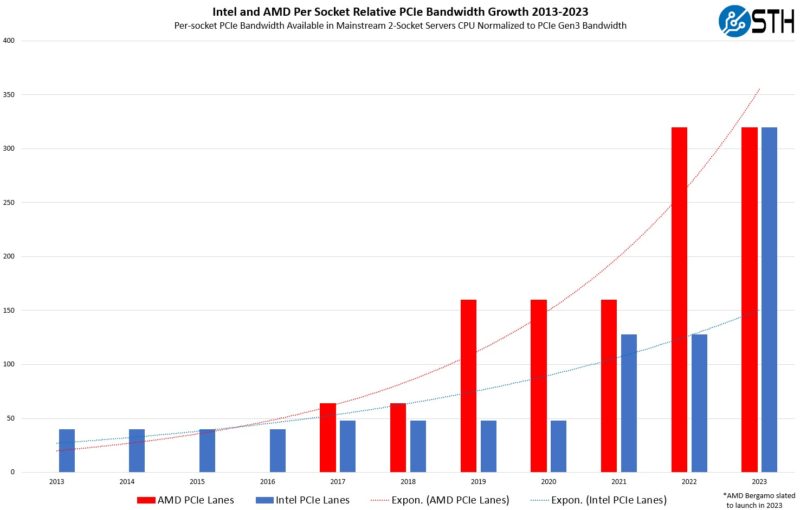

On a system level, we also showed the PCIe Lanes and Bandwidth Increase Over a Decade for Intel Xeon and AMD EPYC.

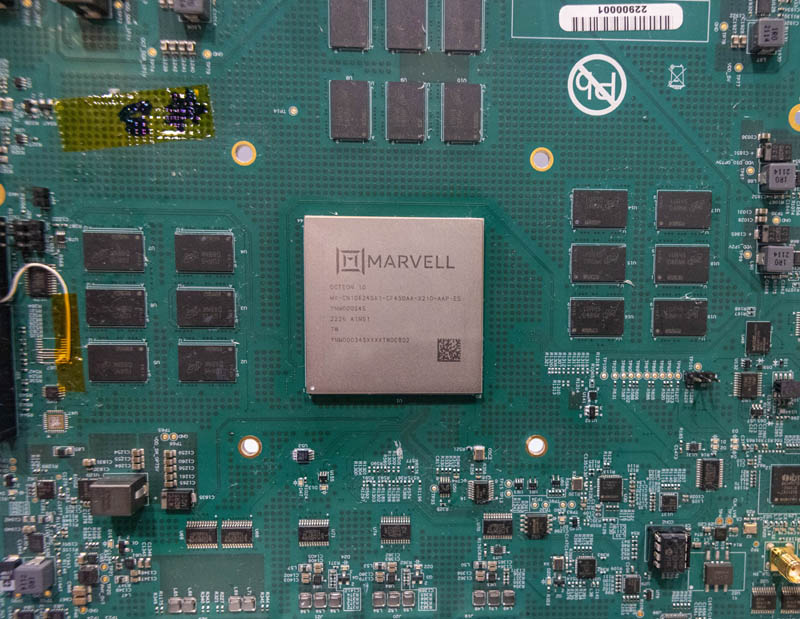

PCIe devices like high-speed RDMA NICs put further pressure on memory subsystems beyond what cores do alone. Likewise, Intel’s Sapphire Rapids Xeon has up to 800Gbps of QAT acceleration onboard along with other accelerators. These accelerators can access memory and thus can put pressure on the memory subsystem. We are even seeing accelerators like DPUs use multiple channels of DDR5.

So while memory bandwidth per core as a metric seems to be satiated by increasing memory channels and moving to DDR5, the increased performance per core is causing pressure on memory subsystems.

One thing is for sure: feeding modern CPUs with eight channels of DDR4-3200 would be very challenging. As a result, it seemed like a good opportunity to explain more about the DDR5 transition and what is different about DDR5 memory.

Next, let us get into why servers no longer support unbuffered ECC memory as we look at the module differences between DDR5 UDIMMs and DDR5 RDIMMs.

If you’re reading this, page 2 is where it’s at. I learned more in 5 minutes reading through that than from everything I’ve seen on Reg DIMMs before.

Thanks to STH for this article +1

This is sooooooooooooo gooood. I’d +1 Uzman77’s rep on the 2nd page. That’s the best explanation I’ve ever seen. I’m usually only on STH to troll comments, but that was useful

I find it disappointing that a new higher-bandwidth higher-capacity higher-latency memory standard has been developed and people are still considering non-ECC DIMMs.

At the frequencies and densities where DDR5 makes sense, I think allowing the CPU to verify RAM integrity using ECC is important. Even if rowhammer and other ways of inducing memory errors through code didn’t exist, the tradeoff between reliability, the associated costs of memory corruption and adding two more chips per DIMM favours ECC, at least in my opinion.

It would be nice to explore the ECC DDR5 options available for desktop computers.

TL;DR

1. We need DDR5 over DDR4 because capacity, more memory channels on server CPUs for DDR5 and higher bandwidth

2. U can’t use UDIMM anymore BC… you can’t use UDIMM anymore.

3. 2-channel DDR5 hack is in no way fundamental. It’s just a hack. But for some reason you have to have it.

Yes, DDR5 has a bigger burst step, but that was the case at every generation change…

4. On chip ECC – another bullshit fudge, heavily used by marketing. In reality, DDR5 RAM cell shrinkage has dropped its reliability too low, so that had to be countered on-chip. So, it’s not an improvement but a patch for a cell that can’t be shrunk without a compromise.

TIL – That you can’t use ECC UDIMMs in an RDIMM server with DDR5

… I have to ask, how much is that half tray of RAM worth in the second to last picture?!?!

Nice little AMD fluff piece.

Is the latest AMD even shipping? I have 13 dual socket Supermicro 1U servers – each with SPR and 2TB DDR5 ECC – not to mention 16 Supermicro dual socket workstations – each with a single SPR and 1TB DDR5 ECC – they will go great with the 16 GPU DGX H100…

“4. On chip ECC – another bullshit fudge, heavilly used by marketing. In reality, DDR5 RAM cell shrinkage has dropped its reliability too low, so that had to be countered on-chip. So, it’s not an improvement but a patch for a cell, that can’t be shrunk without a compromise.”

DDR5 has on chip ECC. It is from Engineering, not Marketing. What is your basis for claiming that reliability is too low? The Gnome living in your nightstand does not count as a source.

So many Desktop kiddies thinking they know something about servers.

How about Dr. Ian Cutress? https://www.youtube.com/watch?v=XGwcPzBJCh0&t=3m33s

Minie was talking about the cell reliability without this correction. The point is that on-die ECC exists because with increasing density the factors causing bit-flips have increased to the point that it’s impossible to get a low enough defect rate without some form of built-in correction.

Ummm….way to ignore CAS latency pretty much entirely. It’s why, to this day, top end DDR4 kits will outperform even up to mid-range DDR5 kits. Hell, they’ll even outperform some of the lower top-end DDR5 kits. CAS latency is supreme. In desktop environments and ESPECIALLY server environments.

Also, this supposed “dual channel” thing they talk about, which is a grossly incorrect term, is reminiscent of the AMD Bulldozer days where they’d claim they were splitting up the load between “cores”, but it was still only one pipe going out of the processor, thus negating any real world benefit.

The main reason that DDR5 systems perform better is because the platforms and CPU architectures are better. It’s got very little to do with the RAM itself.

Another great article by STH. Bravo lads.

Joe, I’m seeing like $168 per DIMM, so $4k.

Dissident Aggressor. I don’t see how it’s an AMD fluff piece. It is nice that you’ve only got a few servers. The DRAM vendors Samsung, Hynix, and Micron all talk about the bit flips in technical conferences. I can tell you from experience that even DDR4 had massive issues. We’ve got hundreds of thousands of DDR4 modules installed in just the data center I’m responsible for. The newer 1x modules saw an increase in errors. Samsung’s are much worse than they used to be. That’s why they’re doing on chip ECC with DDR5. 29 systems is less than a quarter of a rack for us and we’ve got many thousands of racks. I’m not sure who you’re talking about with the “desktop kiddies” but you sound like one based on your comments. I’ve worked at three different hyperscalers and one large social network over the last 10 years and my colleagues all are on STH because there’s good info here. Anandtech used to be good 7+ years ago.

ChipBoundary, with CAS 40 it’s like 92-93ns on DDR5-4800 and like 90ns on DDR4-3200 IIRC. So it’s like 50% more BW, and the dual channel helps a small amount (we’ve measured it, so that’ll make it into a paper). Between those two, it’s much better than DDR4.
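For reference, the CAS component on its own can be converted from clock cycles to nanoseconds. A minimal sketch: DDR memory transfers twice per clock, so one clock period is 2000 / (MT/s) ns. The DDR4-3200 CL22 value is an assumption (the JEDEC DDR4-3200AA timing), not a figure from this thread, and CAS is only one part of the roughly 90ns load-to-use latency quoted above.

```python
def cas_ns(cl_cycles: int, mt_per_s: int) -> float:
    """First-word CAS latency in ns. The I/O clock runs at half the data rate (DDR),
    so one clock period is 2000 / (MT/s) nanoseconds."""
    return cl_cycles * 2000 / mt_per_s

print(f"DDR5-4800 CL40: {cas_ns(40, 4800):.2f} ns")
print(f"DDR4-3200 CL22: {cas_ns(22, 3200):.2f} ns")  # assumed JEDEC 3200AA timing
```

That works out to about 16.7 ns versus 13.75 ns, a gap of roughly 3 ns, which is in the same range as the difference between the end-to-end figures.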

Even as far back as Sandy Bridge, memory bandwidth was starting to impact some applications. It became particularly noticeable with Haswell’s AVX2 and FMA3: 4 cores were starved by 2 channels of DDR3-1600 memory, and it began to make sense to underclock the CPUs.

It would be nice to have a consumer system with 16 cores and 8 sticks and not 2 to restore some balance. AVX512 needs it.

@Minie Marimba, totally agree on your point 2. Why isn’t the voltage the same? AFAIC, stepping down from a higher voltage increases efficiency. Why are desktop DIMMs designed to be less efficient? Maybe they save 10-20 cents on the PMIC, but they butcher any chance of compatibility.

This is especially strange because the industry has been moving towards 12V for everything. So the MB will probably need to have a 12V->5V VR to feed the DIMMs, whereas it could just pass through the 12V it receives from the PSU. I must be wrong somewhere because this makes no sense whatsoever. Unless one is inclined to entertain the possibility that this is done specifically for market segmentation.
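The efficiency intuition can be put in rough numbers. A simplified sketch: for the same power, current scales as 1/V, so resistive (I²R) loss in traces and connectors scales as 1/V². It assumes a 12V PMIC input on DDR5 RDIMMs and a 5V input on client UDIMMs, uses a hypothetical 10 W per-DIMM load, and ignores PMIC conversion efficiency, which can matter as much as or more than trace loss in practice.

```python
def current_amps(power_w: float, volts: float) -> float:
    """Current needed to deliver a given power at a given supply voltage (I = P / V)."""
    return power_w / volts

def relative_conduction_loss(v_low: float, v_high: float) -> float:
    """I^2 * R loss when feeding at v_high, relative to feeding the same power at v_low
    through the same resistance: (I_high / I_low)^2 = (v_low / v_high)^2."""
    return (v_low / v_high) ** 2

POWER_W = 10.0  # hypothetical per-DIMM power draw, for illustration only
print(f"5 V input:  {current_amps(POWER_W, 5.0):.2f} A")
print(f"12 V input: {current_amps(POWER_W, 12.0):.2f} A")
print(f"12 V conduction loss vs 5 V: {relative_conduction_loss(5.0, 12.0):.1%}")
```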

On point 3 – the dual channel nature is actually counterproductive. It increases the cost of ECC because you are moving from 12.5% redundancy to 25% redundancy (e.g. from 8+1 to 8+2 chips). WTF? DDR5 (as DDR4, by the way) supports in-band ECC. Sure, without a place to store the actual ECC data, this can only protect the data in transit over the DDR bus but not at rest in the capacitor array. However, coupled with the internal ECC, which is necessary anyway at current semiconductor densities, this could have offered full ECC almost for free. Yes, almost, because (1) it introduces an additional cycle in the burst sequence for the transfer of ECC data and (2) because of the internal ECC overhead. However, the overhead from (1) is not more than 10% (pulled out of my ass – it’s slightly higher than 10%, but you can never utilize the bus completely, so it will not make as much difference; I would argue it’s more like 7-8% in real life situations). And (2) is already used anyway. And when you have the ECC over an entire internal row, instead of 32 bits only, you can have much lower overhead and/or better protection, e.g. correct several erroneous bits.
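The 12.5% versus 25% figures follow directly from the DIMM layouts. A minimal sketch, assuming x8 DRAM devices and standard side-band ECC widths (a 72-bit DDR4 channel, and two 40-bit DDR5 subchannels per DIMM):

```python
def ecc_overhead(data_bits: int, ecc_bits: int) -> float:
    """Fraction of extra bits (and, with x8 devices, extra chips) spent on side-band ECC."""
    return ecc_bits / data_bits

# DDR4 ECC DIMM: one 72-bit channel = 64 data + 8 ECC bits (8+1 x8 chips per rank)
ddr4 = ecc_overhead(64, 8)
# DDR5 ECC DIMM: two 40-bit subchannels, each 32 data + 8 ECC bits (2 x (4+1) x8 chips per rank)
ddr5 = ecc_overhead(2 * 32, 2 * 8)

print(f"DDR4 side-band ECC overhead: {ddr4:.1%}")
print(f"DDR5 side-band ECC overhead: {ddr5:.1%}")
```

Each DDR5 subchannel needs its own 8 ECC bits (one extra x8 chip) to protect only 32 data bits, which is where the doubling comes from.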

Again, I’m probably wrong somewhere because the state of affairs does not jibe with what the technologies can provide. One reason might be that memory chips are designed to be as simple as possible because you need many of them and any overhead can hit hard. But, I would argue, not as hard as an additional chip for every rank in a module.

Just my 22 cents

@Nikolay Mihaylov

“Unless one is inclined to entertain the possibility that this is done specifically for market segmentation..”

Looking at it in any other way indicates a lack of comprehension of how the modern economy works. Interoperability between server and desktop platforms is anathema to the manufacturers/chaebols/cartels whoever you want to blame, and it is specifically allowed to be artificially implemented [through software/firmware/unnecessary physical incompatibilities] to drive margins on “Server” gear and to keep a layer of obfuscation between consumer and pro product lines, even if the difference between a Gaming CPU and a Server CPU doesn’t necessarily warrant it.

It’s only going to get worse as Intel’s scheme to pay to enable hardware features that ship complete on the chip isn’t really getting any serious pushback.

A few corrections on the CXL section:

1.

“Latency is roughly the same as accessing memory across a coherent processor-to-processor link”

Should be

“Latency is roughly the same as accessing memory across a coherent socket-to-socket link”

2.

The aim of the CXL consortium is to bring the latency of directly attached CXL memory into the same ballpark as a socket-to-socket NUMA hop; however, we are not there yet.

3.

CXL/PCIe bandwidth is bidirectional; therefore, the equivalent raw bandwidth of the suggested card is that of *four* DDR5 channels.

Too much e-peen competition in these comments.

Some of you sound like amazing folks to have to put up with; I feel bad for your co-workers.

You didn’t explain why server platforms no longer support consumer-level DIMMs. This is very upsetting, it will make those platforms prohibitively expensive to home enthusiasts even down the line when they flood the second-hand market.

They did explain it. Different operating voltage with the power management onboard. They’re now physically keyed differently.

Hello NLST

Worth reading indeed. Thanks for sharing.