The Supermicro 2049P-TN8R (technically the SYS-2049P-TN8R) is a 4-socket server that is designed differently from many of the other models we have tested, like the Supermicro SYS-2049U-TR4. This server is built for scale-out applications. That may seem like a slight nuance, but it shapes the whole design: the goal is to deliver maximum compute and memory while minimizing extraneous costs. In our review, we are going to see how this was accomplished.

Supermicro 2049P-TN8R Overview

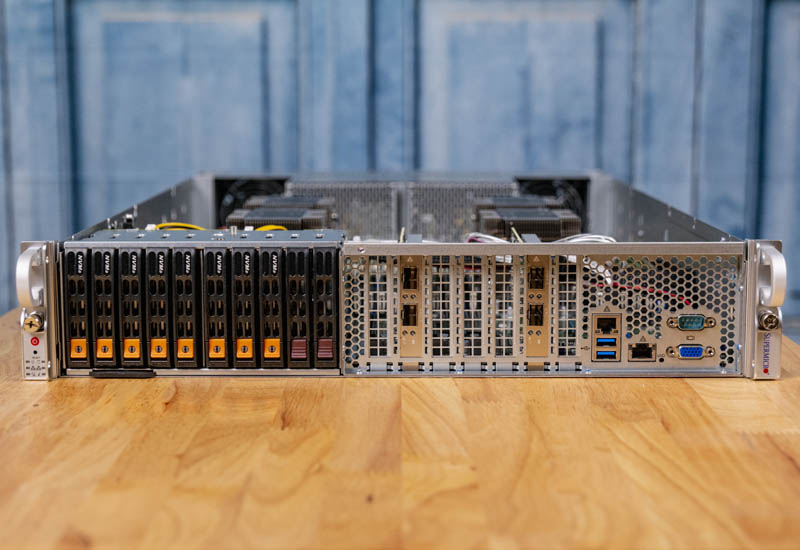

The unit itself is a 2U form factor, with a twist. Instead of filling the front panel with storage and placing the I/O in the rear, we see a significantly different layout that helps keep the chassis short. The chassis itself is only 30.2″ or 767mm deep, which means it fits in denser environments.

Starting with the front panel storage, there are eight 2.5″ U.2 SSD bays. Next to those are two SATA III hot-swap bays. This configuration is usually set up to use lower-cost SATA boot drives and higher-end NVMe drives for storage.

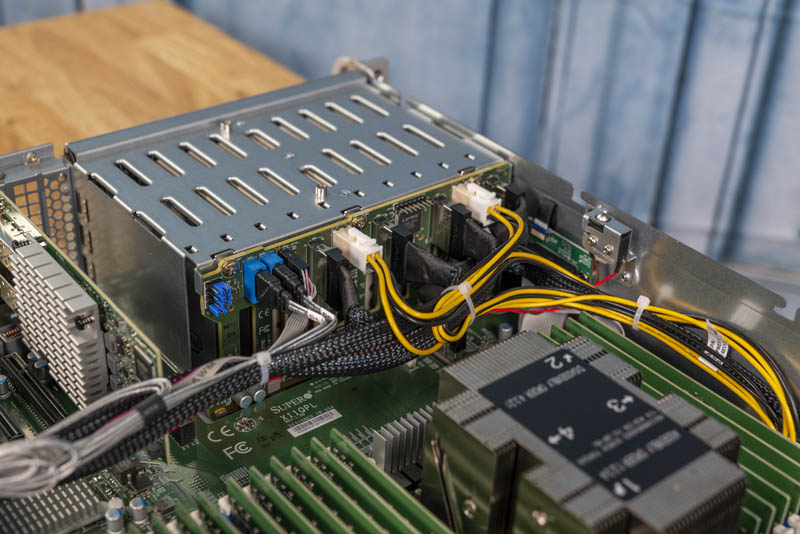

Taking a quick look at the storage cage from the other side, one can see high-density Molex cabling for the NVMe drives along with standard SATA cabling for the additional two bays.

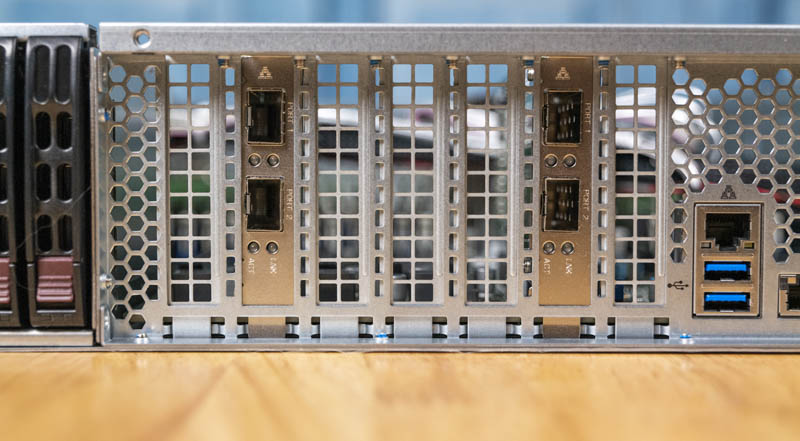

The seven expansion slots are all low-profile. Many larger servers utilize risers with full-height options. The SYS-2049P-TN8R instead offers only room for low-profile cards.

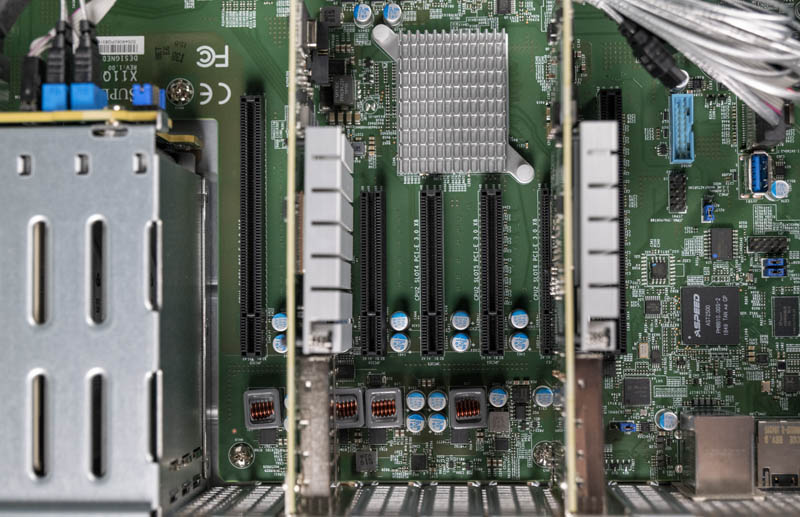

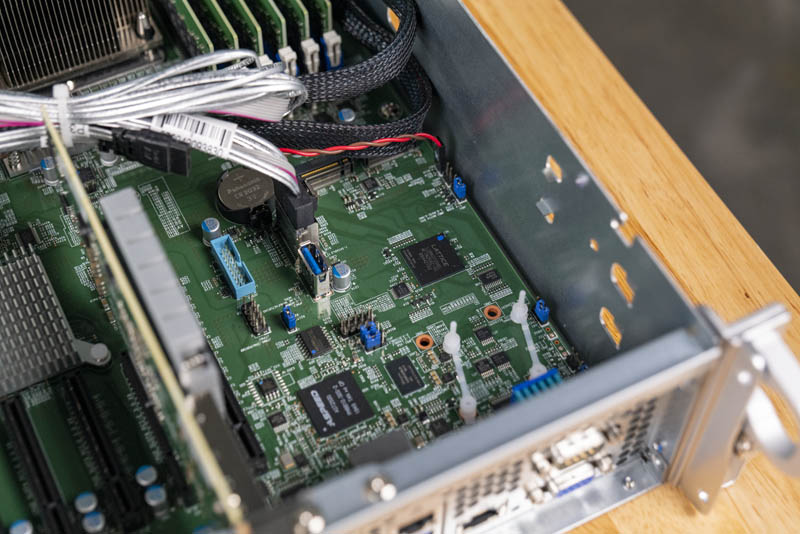

Taking a look inside, we see why. The seven PCIe 3.0 expansion slots are all directly on the PCB, not on risers. As a result, they are limited to the 2U chassis height. There are five PCIe 3.0 x8 slots and two PCIe 3.0 x16 slots that flank those five.
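As a rough illustration of what that slot mix adds up to, here is a minimal sketch that totals the slot lanes and a theoretical throughput ceiling. The slot counts come from the description above; the per-lane figure is the generic PCIe 3.0 rate (8 GT/s with 128b/130b encoding), not something we measured on this system, and the function names are our own.

```python
# Slot counts are from the review text; the per-lane rate is the theoretical
# PCIe 3.0 figure (8 GT/s, 128b/130b encoding), not a measured result.
PCIE3_GT_PER_S = 8.0
ENCODING_EFFICIENCY = 128 / 130

# Lane width -> number of slots on the motherboard.
SLOTS = {8: 5, 16: 2}


def lane_gb_per_s():
    """Theoretical one-direction throughput of one PCIe 3.0 lane in GB/s."""
    return PCIE3_GT_PER_S * ENCODING_EFFICIENCY / 8  # 8 bits per byte


def total_lanes(slots):
    """Sum of lanes across all expansion slots."""
    return sum(width * count for width, count in slots.items())


def total_gb_per_s(slots):
    """Theoretical aggregate one-direction throughput across all slots."""
    return total_lanes(slots) * lane_gb_per_s()


if __name__ == "__main__":
    print(f"Expansion slot lanes: {total_lanes(SLOTS)}")
    print(f"Theoretical ceiling: {total_gb_per_s(SLOTS):.1f} GB/s per direction")
```

This only counts the expansion slots. The U.2 bays, M.2 slots, and onboard devices use additional lanes that are not modeled here.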

On the right side of the front, we again see something different. The management NIC port, 1GbE basic networking port, two USB 3.0 ports, serial console port, and VGA port are all located on the front of the chassis. Normally these are found at the rear of the chassis, so this is a considerable departure that has implications for data center operations.

Behind those ports we can see two SATA III / NVMe M.2 slots. These two slots support M.2 2280 (80mm) or M.2 22110 (110mm) SSDs, adding to the storage diversity. Also located in this area is a USB 3.0 Type-A header.

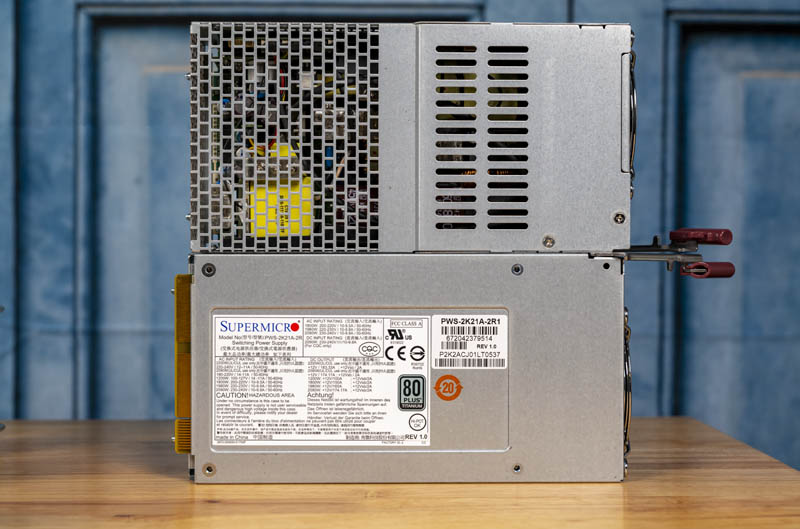

With the I/O on the front of the chassis, the rear is significantly different. We find large San Ace fans on both sides and two power supplies. This server is designed for simplified hot aisle deployments.

The two power supplies are 2.2kW 80Plus Titanium units designed for 96% efficiency.
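To put the efficiency figure in context, here is a small sketch of the arithmetic relating DC output, wall input, and heat lost in the power supply. It assumes the 96% figure holds at the load being modeled, which is a simplification since efficiency varies with load. The 1100W example load is hypothetical and the function names are our own.

```python
# Assumption: the 96% efficiency quoted for the PSU applies at the modeled load.
# Real efficiency varies with load, so treat the results as approximate.
PSU_EFFICIENCY = 0.96


def input_watts(output_watts, efficiency=PSU_EFFICIENCY):
    """AC power drawn from the wall to deliver a given DC output."""
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return output_watts / efficiency


def loss_watts(output_watts, efficiency=PSU_EFFICIENCY):
    """Power dissipated as heat inside the power supply."""
    return input_watts(output_watts, efficiency) - output_watts


if __name__ == "__main__":
    load = 1100.0  # hypothetical DC load in watts, not a measured value
    print(f"Input: {input_watts(load):.1f} W, loss: {loss_watts(load):.1f} W")
```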

The two fans are on assemblies with simple and efficient hot-swap mechanisms.

This is a very simplified design that keeps the chassis more compact than some of the other 4-socket designs we have seen. The simplification also reduces production costs and operational overhead.

The airflow guide snaps in place and ensures that air pressure is maintained throughout the chassis.

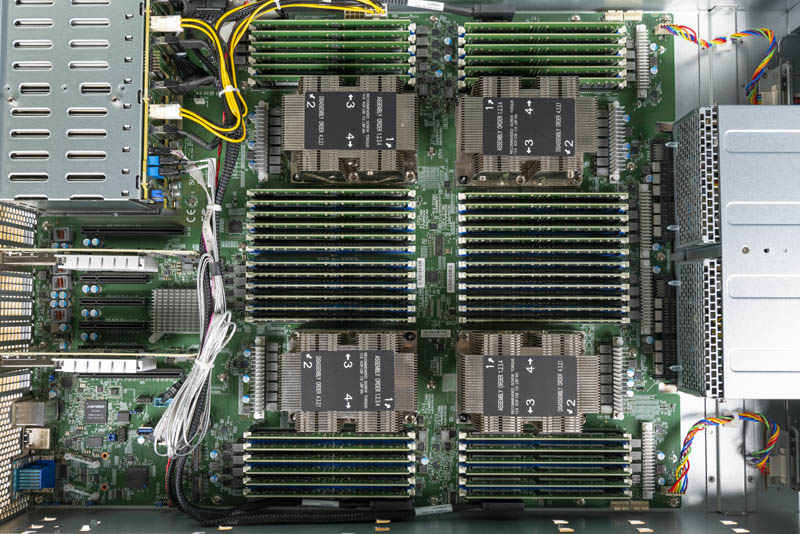

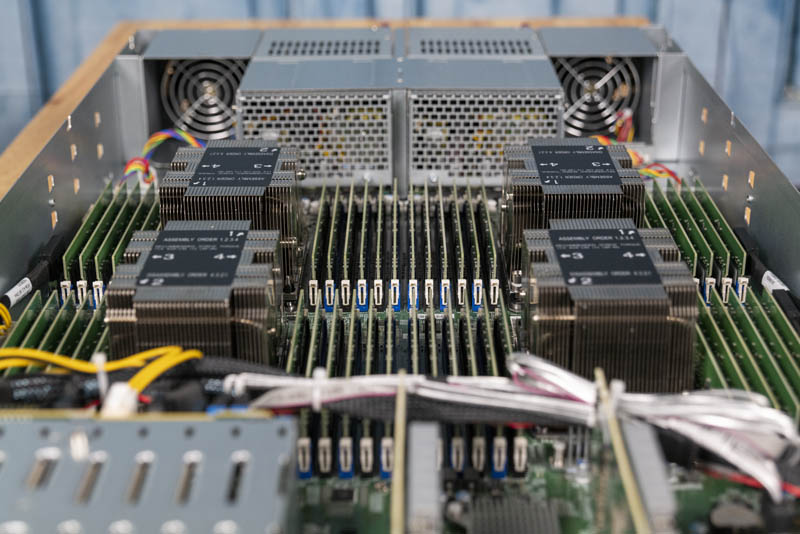

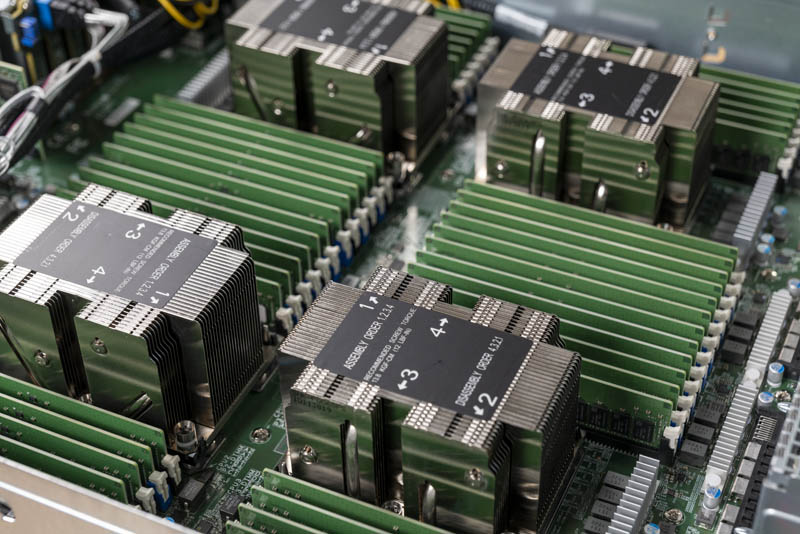

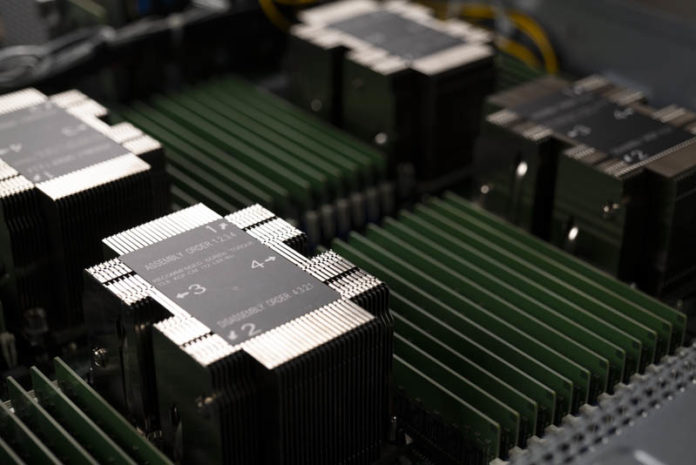

Here is a look at the chassis from above. One can see this is a quad Intel Xeon Scalable server with a full set of 12 DIMMs per socket for 48 DDR4 DIMM slots total.

These four sockets each support CPUs with up to 205W TDP such as the Intel Xeon Platinum 8280.

The DIMM slots support 24 DIMMs total in 1 DIMM per channel mode or 48 DIMMs in 2 DPC mode. The system also supports Intel Optane DCPMM when used with 2nd generation Intel Xeon Scalable processors that support the Optane modules.
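The DIMM counts above follow directly from the platform layout. As a quick sketch, assuming the six memory channels per socket of the Intel Xeon Scalable platform, the totals can be reproduced as follows. The 32GB module size in the usage line is purely a hypothetical example, not a tested configuration.

```python
SOCKETS = 4
CHANNELS_PER_SOCKET = 6  # Intel Xeon Scalable: six memory channels per socket


def dimm_count(dimms_per_channel, sockets=SOCKETS, channels=CHANNELS_PER_SOCKET):
    """Number of DIMMs populated at a given DIMMs-per-channel (DPC) setting."""
    return sockets * channels * dimms_per_channel


def capacity_gb(dimms_per_channel, module_gb):
    """Total memory capacity for a uniform population of identical modules."""
    return dimm_count(dimms_per_channel) * module_gb


if __name__ == "__main__":
    for dpc in (1, 2):
        print(f"{dpc} DPC: {dimm_count(dpc)} DIMMs")
    # Hypothetical example: 32GB modules in every slot.
    print(f"2 DPC with 32GB modules: {capacity_gb(2, 32)} GB")
```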

One other small physical detail of the system that we saw during our teardown involved the cables. Although the 2049P-TN8R is a Xeon Scalable system and therefore PCIe Gen3, Supermicro is actually using higher-end PCIe 4.0 cabling within.

In our teardown, it was readily apparent how Supermicro designed this server to deliver four Intel Xeon Scalable processors with full memory capabilities at a minimal system cost. Instead of paying a premium for flexibility as with many 4-socket servers, the Supermicro 2049P-TN8R is a refreshingly simplified way to deliver compute for 4-socket scale-out deployments.

Next, we are going to look at the topology, management, and test configuration before getting to our performance testing.

Can Supermicro get STH a JBOF to connect to this to test? That’d be a neat combo.

Any tests planned vs. a 2P EPYC Rome system? Would be good to see the performance and power comparisons.

Hi MoreServersPlease. In these reviews, we focus on options that one can configure in the platform being reviewed. You may be looking for the CPU review which we published here: Quad Intel Xeon Gold 6252 Benchmarks and Review

Why exactly would anyone even think of using Xeon these days?

It has more security holes than Swiss cheese, it’s relatively highly priced, and it has an uncertain upgrade path.

What’s the point?

Brane2: you probably can’t get the number of DIMMs available in this system in any Epyc system, even a dual-CPU one. Otherwise you are absolutely right.

Compatibility with existing environment

Certain applications are certified only for Intel (e.g. SAP HANA, NFV apps using Intel DPDK)

4P 6230 are more cost effective than 2P 7742 – so if rack space, electricity & licensing are not an issue then Intel is still viable

Anyway – thank you very much Patrick for this test.

This is a really clever design, turning some things around in order to make the platform more compact.

We bought Dell R840s and we had to buy deeper racks to accommodate them.

@Zibi

“Certain applications are certified only for Intel (e.g. SAP HANA, NFV apps using Intel DPDK)”

Both the software and the Intel hardware have proven to have more holes than Swiss cheese.

Both have certified leaks. Some are by accident, others are forced by the US government (it’s hard to spy on Huawei, ZTE, etc. hardware without US certified leaks).