In the STH/ DemoEval lab we have a lot of hardware that is continually cycled. The hardware in our lab is generally run at high utilization, with our CPU load/ chassis ratio hovering above 76%. Suffice it to say, there is a significant load on the components. At any given time we are likely to have several machines running pre-release hardware, so we do experience a decent number of component failures. By far the most common type of failure is due to issues with pre-release firmware/ BIOS. Every so often we have a more “exciting” failure, and that is what we wanted to highlight. Here is a quick look at our most spectacular hardware failure in the STH/ DemoEval lab for 2016.

The Spectacular Hardware Failure

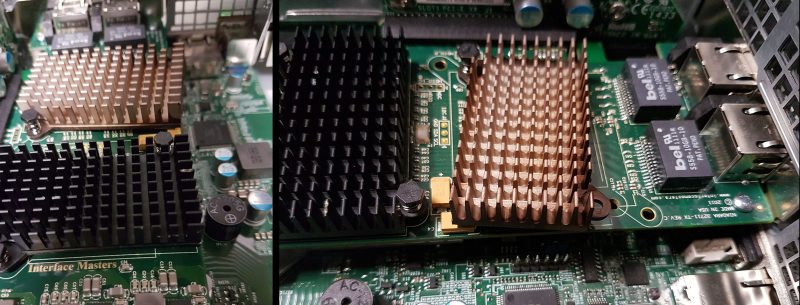

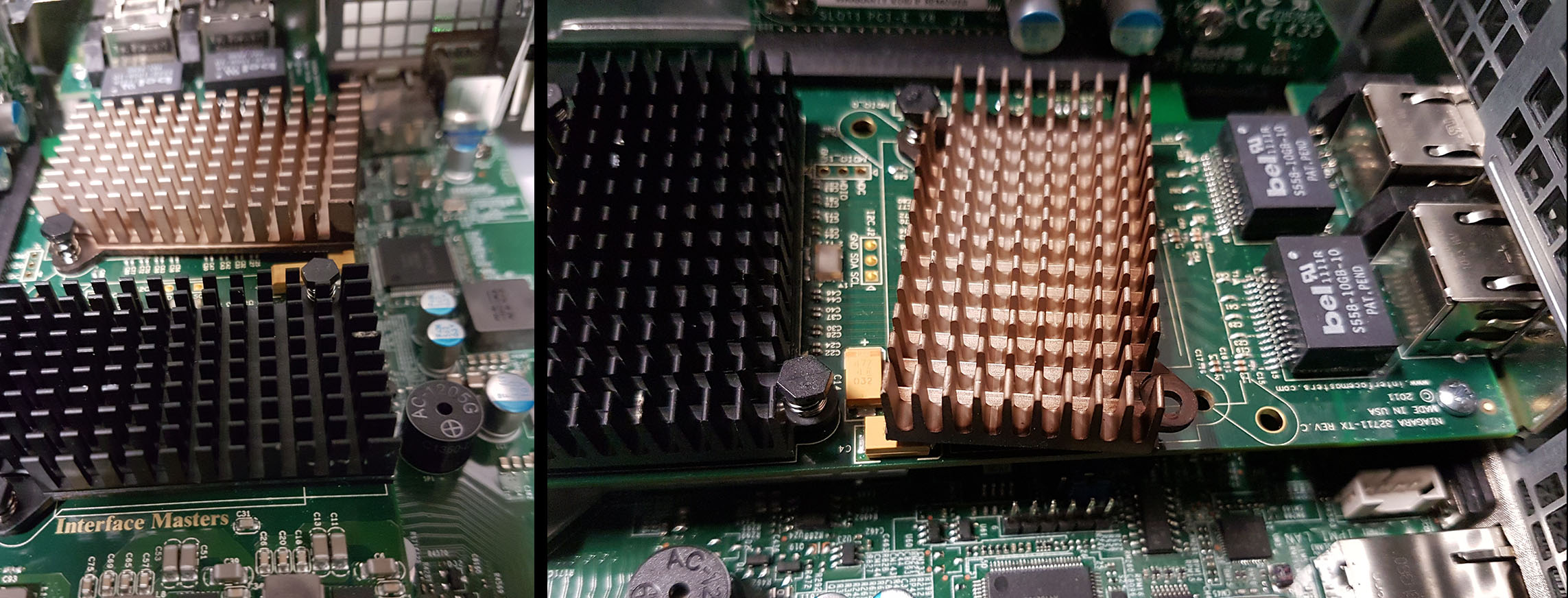

We originally posted this in the STH Forums. In September 2016, we were experiencing slow performance from a NIC in our lab’s then-main Hyper-V server. The dual-port 10Gbase-T Interface Masters NIC was dropping to speeds below what we normally see from 10Gbase-T. We waited for a maintenance window, opened the server up, and got a shock.

To say that we were lucky would be an understatement. It appears as though one of the plastic clips failed. That led to the spring decompressing and the heatsink popping off. You can see the burnt discoloration of the heatsink. We checked the other plastic clips on both heatsinks and they were brittle enough to crack under slight pressure. This was likely a freak accident as we later checked our other cards and they were working fine. We also checked airflow and temps to ensure that the chassis was working as intended.

On the positive side, this happened just as we started converting all of our base infrastructure to 40GbE, so it was an opportune time to check for damage in the server and swap to a new network controller. We were worried about damage to the motherboard, but we appear to have been very lucky regarding where the spring ended up. 40GbE NICs run cooler and at significantly lower power consumption (more on this soon) than 10Gbase-T NICs, so that is another benefit we obtained from the transition.

The lesson from this anecdote is that hardware failures do still occur. We have heard of failures that result in fire, setting off fire suppression systems and causing extensive and costly damage. It also reminds us to be mindful of how we treat clip and spring fasteners in the data center.

Just ran into this article. Interesting how the failure was just on this nic and not others of the same model. Perhaps a quality control issue? Or the clip manufacturer cheating the manufacturer with a batch of inferior quality clips? Maybe a fake nic?

Glad to see no fires or any other damage occurred and that the nic was thermal throttling as it should–otherwise that could have been a big disaster. :o