My Challenge to Intel

At the event I gave our PR contact some simple feedback: we need a better story. Here is why. VNNI, as a “new” instruction set for the mainstream, is going to take some time to gain broad software adoption. Furthermore, most expect the majority of inferencing to happen at the edge, so putting VNNI in AWS, GCP, Azure, Ali Cloud, and others will not necessarily help inferencing adoption across all of the applications that NVIDIA is already targeting with CUDA. Remember, NVIDIA now has Tensor Cores in its Xavier platform for robots and self-driving cars, Voltas for the data center, and RTX cards for consumer desktops. That is a fairly wide range of application scenarios compared to a Cascade Lake Xeon by Q4 2018.

Just about everyone in the industry thinks that Intel Optane Persistent Memory (OPM) is going to be big. If I can get 128GB OPM DIMMs for $400-500, STH will be running on OPMoF by next year without question. Intel has not released pricing, but we also do not expect every Cascade Lake-SP server to ship with OPM. As a result, OPM is only a solution for a portion of the market that will be early adopters. For those with large in-memory database and analytics workloads, we expect this to be a game changer. For others, it may take some time for software to catch up with hardware. This is normal.

For those who are doing inferencing on GPUs or other accelerators, or for those who are not going to adopt OPM widely in this generation of deployments, the real performance benefits are likely to be a small MHz improvement on SKUs, potentially some SKU-levels moving to higher core counts below the 28 core maximum, and then performance more in line with the pre-side channel attack world.

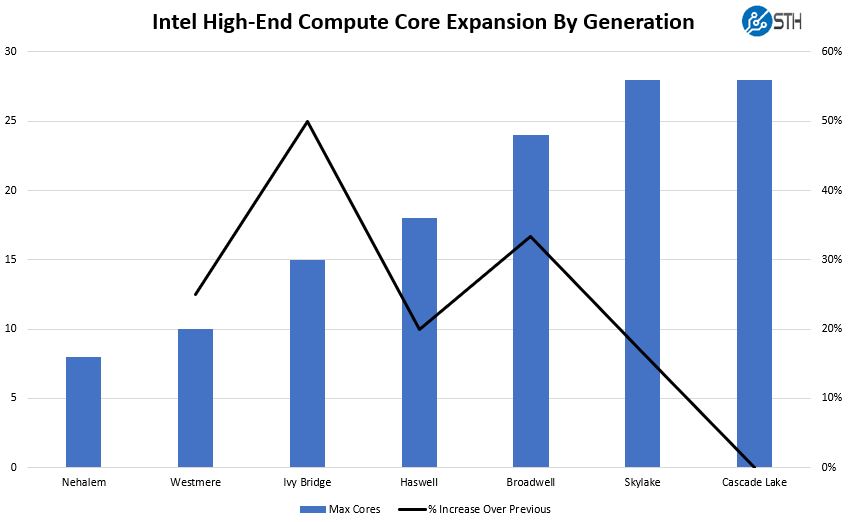

Security is undoubtedly important. There is also a chance that Cascade Lake-SP has fixes for as yet undisclosed vulnerabilities. If we are going to put a stake in the ground and say “this is where Moore’s law died”, Cascade Lake-SP will be it. Here are two charts. The first is the real generational comparison between maximum core counts of each generation. We are leaving Sandy Bridge out as there was no real “Beckton” successor until Ivy Bridge.

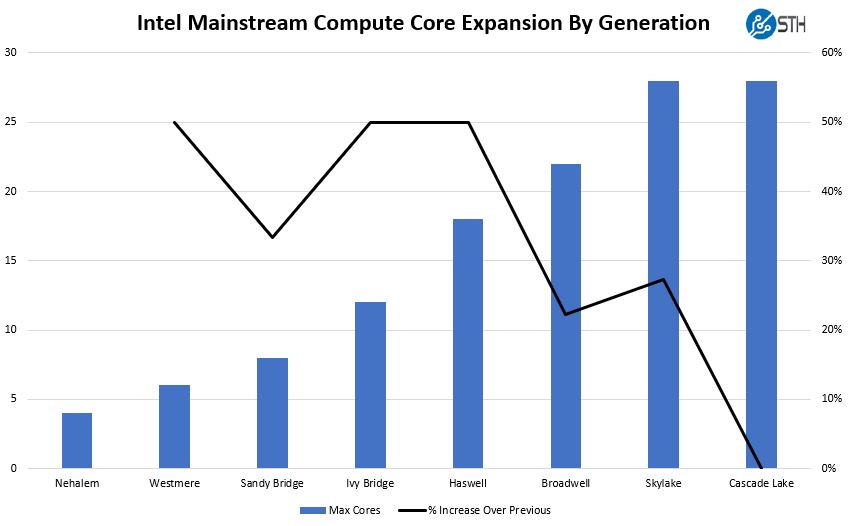

The second is the real generational comparison between maximum core counts of mainstream CPUs, assuming the 28-core Xeon Platinum parts are “mainstream.”

As you can see, that black bar tracking the rate of change has hit zero for Cascade Lake-SP. We have been accustomed to a nominal performance increase plus core increases in each generation. Now we are getting the nominal performance increase (save for the scenarios with hardware fixes for side channel) and no core count increase.
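For readers who want to reproduce the rate-of-change bar, here is a minimal sketch of the arithmetic. Only the 28-core figure for Skylake-SP and Cascade Lake-SP comes from this article; the earlier core counts are assumed from public spec sheets for the top-bin parts rather than read off the charts, and the `generational_change` helper is purely illustrative.

```python
# Maximum core counts per generation.
# Assumption: values before Skylake-SP are taken from public spec sheets,
# not from the charts in this article.
max_cores = [
    ("Westmere-EX", 10),
    ("Ivy Bridge-EX", 15),
    ("Haswell-EX", 18),
    ("Broadwell-EX", 24),
    ("Skylake-SP", 28),
    ("Cascade Lake-SP", 28),
]


def generational_change(series):
    """Return (name, percent change vs. the previous generation) pairs."""
    changes = []
    for (_, prev), (name, cur) in zip(series, series[1:]):
        changes.append((name, 100.0 * (cur - prev) / prev))
    return changes


for name, pct in generational_change(max_cores):
    print(f"{name}: {pct:+.1f}%")
```

With these inputs the last entry works out to exactly zero, which is the flat bar discussed above.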

Either way, the narrative we need from Intel is more than just bugfix plus bleeding edge features. Most Intel Xeon shops will continue to buy Intel Xeon, getting VNNI in the process. We need a narrative for the 10-30% of customers who will contemplate AMD EPYC and its second generation, much improved, and bigger 2019 “Rome” offering. To those folks, Intel is at risk of having no good answer until Cooper.

To Intel, VNNI, OPM, and security fixes are engineering feats. Intel’s channel partner community will need a better story in 2019 to extol their benefits.

Final Words

In the context of Hot Chips, Akhilesh Kumar did a valiant job of getting out the new Cascade Lake-SP disclosures. For the marketing teams at Intel, in the coming months, the less server-focused press will come up with the charts shown above and proclaim the death of Moore’s law and of Intel’s ability to drive more than nominal improvement every 18 months. The reality is somewhat different, as hardware side channel fixes that mitigate performance impacts may have a huge influence on performance, as we saw in “Intel Publishes L1TF and Foreshadow Performance Impacts” and “Intel Circles Back on Meltdown and Spectre Initial Fixes Pushed.”

OPMoF ???

Optane Persistent Memory over Fabrics. Need to be extra trendy!

This kind of sounds like goodbye Xeon, hello EPYC2.

I thought this was going to be an Intel bashing piece but it really isn’t. You did a good job presenting the new parts first. I’ll agree that this isn’t going to keep everyone who is going EPYC on Intel. Intel knows that too, unless they know of some major security issue that’s not public yet, one that Cascade Lake fixes but AMD can’t handle.

The Meltdown mitigation alone might be worth quite a lot in performance for I/O heavy applications, and this is an area where the MCM design of AMD is problematic. It’s going to be interesting to see if AMD manages to put more cores on a die and perhaps also attach more memory channels and PCIe lanes. This would seriously diminish the last of Intel’s advantages.

Do I need a new motherboard when upgrading from Skylake Xeons to Cascade Lake Xeons? I would like to get a new Skylake Xeon SP motherboard (Supermicro, Tyan?) and upgrade approximately a year from now to Cascade Lake. The only thing keeping me from going EPYC is the Optane Persistent Memory feature… it looks great for a fast OLTP SQL database that fits within 4-8 TB…

Cascade Lake will use the same motherboards and chipsets with a BIOS update.

It is going to take a while for IT departments to adopt AMD EPYC, especially for mission critical 24x7x365 operations to switch to a relatively new platform from a company that has a less than stellar data center reputation. Core counts aren’t everything, especially given that certain software is licensed per core. So Intel pushing forward and integrating new features and fixes that will filter in as IT department refresh cycles hit is a good thing. It would have been bad had they not introduced a refresh this year.

Totally agree with you Matthew, Intel has a stellar reputation these days. The leaks are all over the place, and there have never been more hacks on mission critical operations than in the last year and a half.

There has never been more of a speed decrease in a processor because of all the patches.

8 socket 28 cores is no problem with licenses per core, but 2 socket 32 cores is becoming a real problem, and why do people need all those PCIe lanes anyway.

AMD are no saints but doing a pretty good job lately.

As EPYC has been mentioned a couple of times: while I am interested in it, depending on your environment it may not be an option. EPYC may be cheaper and faster, but it also has higher power demands, which could be a limitation for some.

“Just about everyone in the industry thinks that Intel Optane Persistent Memory (OPM) is going to be big.”

This is absolutely HILARIOUS with years of hindsight.