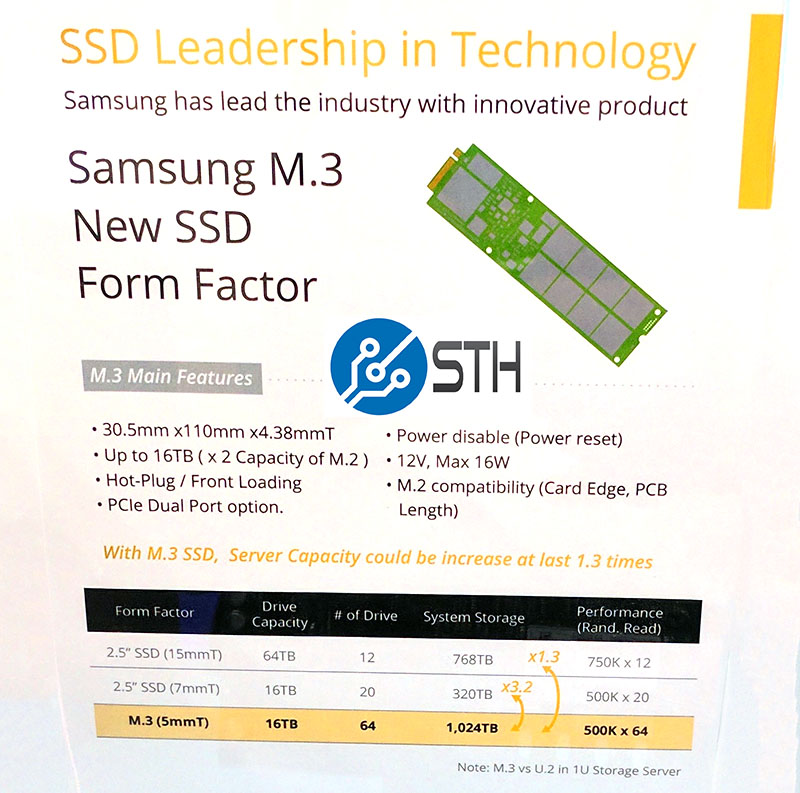

At Computex 2017 in Taipei, Taiwan, we saw Samsung championing a new data center SSD form factor: M.3. For the past few years, M.2 SSDs have been gaining significant market share. While visiting with server vendors at the show, we saw a clear, continuing trend toward supporting M.2 SSDs, either on the motherboard PCB or via risers. Without going into this summer’s new platforms in great depth, “gum stick” SSDs are set to make a splash. Samsung is championing a modified server form factor, M.3, which it expects will allow 1 petabyte (PB) of storage per U.

The Challenge With M.2 SSDs in the Data Center

M.2 SSDs were primarily designed for consumer devices. Eschewing the traditional 2.5″ form factor in favor of 22 x 80mm (or smaller) M.2 sticks helped usher in thinner, lighter laptops than 2.5″ drives allowed.

Because the 2280 M.2 form factor was largely driven by the notebook market, capacities were targeted at that PCB size. On the server side, this presents challenges. Data center SSDs typically need additional PCB space for the capacitors that provide power loss protection (PLP). While vendors have greatly reduced the footprint of PLP components compared with just five years ago, these components still take up considerable space.

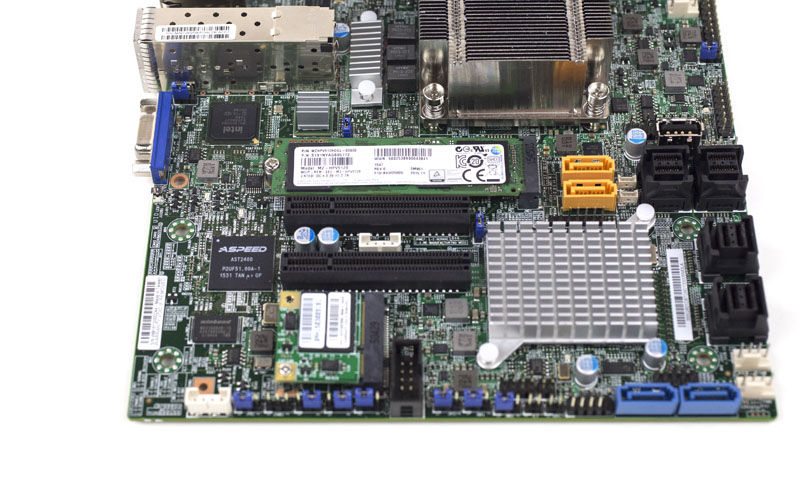

One way server SSD manufacturers are solving this challenge is with 22110 (110mm long) M.2 SSDs. The extra 30mm over 2280 drives gives SSD vendors room for PLP components that are uncommon in notebook drives. With next-generation platforms sporting more DIMM slots (e.g. AMD EPYC with up to 16 DIMMs per CPU / 32 DIMMs per system), motherboard PCBs are growing and space is again at a premium. That makes 110mm-long M.2 drives too large to fit in many current designs built around 80mm slots.
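M.2 size codes pack the width and length into one number: the first two digits are the width in millimetres and the rest are the length. A short Python sketch makes the 2280 vs 22110 comparison concrete. It uses the nominal outline area only (width x length), which is an assumption: it ignores the connector region and any keep-out areas, so usable component area is somewhat smaller.

```python
def parse_m2_size(code: str) -> tuple[int, int]:
    """Split an M.2 size code such as "2280" into (width_mm, length_mm).

    Convention: the first two digits are the width, the remaining digits the length.
    """
    if not code.isdigit() or len(code) < 4:
        raise ValueError(f"not an M.2 size code: {code!r}")
    return int(code[:2]), int(code[2:])


def board_area_mm2(code: str) -> int:
    """Nominal board outline area (width x length) in square millimetres."""
    width, length = parse_m2_size(code)
    return width * length


if __name__ == "__main__":
    for code in ("2280", "22110"):
        width, length = parse_m2_size(code)
        print(f"M.2 {code}: {width} x {length}mm, {board_area_mm2(code)} mm^2")
    extra = board_area_mm2("22110") - board_area_mm2("2280")
    print(f"22110 adds {extra} mm^2 ({extra / board_area_mm2('2280'):.1%})")
```

On these nominal figures, the 30mm of extra length adds 660 mm², or 37.5% more outline area, which is the room vendors use for PLP capacitors.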

A Larger Drive: the “Up To” 16TB M.3 SSD and 1PB per U

When you are looking to expand server storage capacity, you need to fit more NAND packages and PLP components. PCIe lanes in servers are valuable commodities, with next-generation servers maxing out at around 88-128 PCIe lanes in dual-socket configurations. At the same time, we are seeing more designs that use PCIe switches to build 1U shelves holding 64 gum stick SSDs.
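A rough lane count shows why those 64-drive shelves need PCIe switches. This sketch assumes every drive gets a dedicated x4 link. The drive count and the 88-128 host lane range come from the text. Treating all host lanes as available for storage is a simplifying assumption; in practice NICs and other devices consume some of them.

```python
DRIVES_PER_SHELF = 64  # from the text: 1U shelves with 64 gum stick SSDs
LANES_PER_DRIVE = 4    # each M.2 / M.3 slot is a PCIe x4 link


def lanes_needed(drives: int, lanes_per_drive: int = LANES_PER_DRIVE) -> int:
    """Downstream PCIe lanes required if every drive gets its own x4 link."""
    return drives * lanes_per_drive


def oversubscription(downstream_lanes: int, host_lanes: int) -> float:
    """Ratio of drive-side lanes to host lanes (assumes all host lanes go to storage)."""
    return downstream_lanes / host_lanes


if __name__ == "__main__":
    need = lanes_needed(DRIVES_PER_SHELF)
    print(f"{DRIVES_PER_SHELF} drives x{LANES_PER_DRIVE} = {need} lanes")
    for host in (88, 128):
        print(f"vs {host} host lanes: {oversubscription(need, host):.2f}:1")
```

Even with every host lane dedicated to storage, a 64-drive shelf needs 256 lanes, two to roughly three times what a dual-socket server offers, so a switch has to fan a narrower uplink out to the drives.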

Samsung’s answer: the 16TB M.3 SSD.

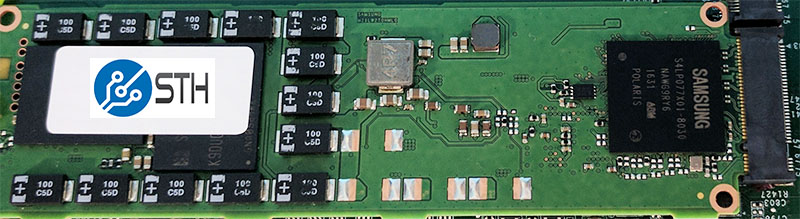

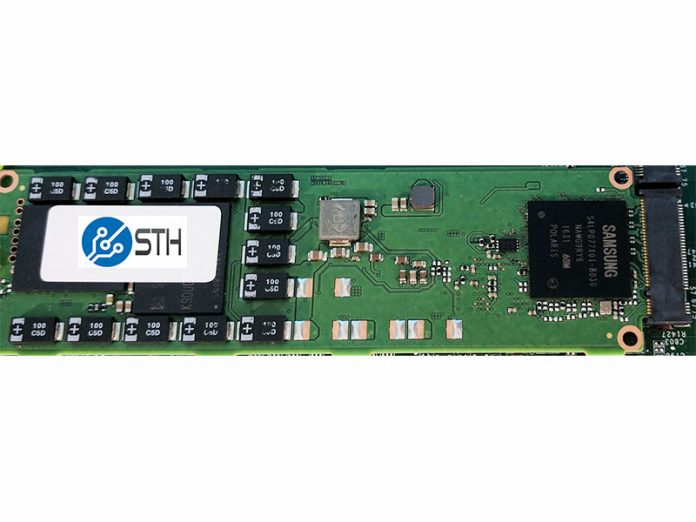

As you can see, the M.3 SSD uses the standard M.2 PCIe 3.0 x4 connector. The actual PCB is much wider, leaving more room for PLP circuits and NAND packages.

Samsung is claiming that with 16TB M.3 SSDs it will be possible to hit ~1PB of storage in a 1U form factor! Each drive is also capable of 500K read IOPS, so you will be limited by the PCIe switch back-haul links to the servers. At 16W each, 1PB of raw storage in 64 drives will consume only about 1kW.
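The shelf-level figures follow directly from the per-drive numbers quoted above. This Python sketch multiplies them out. The totals cover the drives only; switches, fans and controllers are not included, and the IOPS total is an ideal sum that, as noted, the back-haul links will not deliver in practice.

```python
DRIVES = 64
TB_PER_DRIVE = 16              # "up to" 16TB per M.3 SSD
WATTS_PER_DRIVE = 16           # per-drive power figure quoted above
READ_IOPS_PER_DRIVE = 500_000  # per-drive read IOPS quoted above


def shelf_totals(drives: int = DRIVES) -> dict[str, int]:
    """Sum raw capacity, drive power and ideal read IOPS across a shelf."""
    return {
        "raw_tb": drives * TB_PER_DRIVE,
        "watts": drives * WATTS_PER_DRIVE,
        "read_iops": drives * READ_IOPS_PER_DRIVE,
    }


if __name__ == "__main__":
    t = shelf_totals()
    print(f"{t['raw_tb']} TB raw, {t['watts']} W, {t['read_iops']:,} read IOPS")
```

That works out to 1024TB (the "~1PB"), 1024W (the "about 1kW") and an ideal 32 million read IOPS for the drives alone.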

What this means is that we are going to see “shelves” of M.3 SSDs connected via PCIe switches, each providing bandwidth equal to its PCIe connection and up to 1PB per U. An M.3 version of the OCP Lightning JBOF platform is one example of what this could look like.
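To see why the shelf's bandwidth is set by its PCIe connection rather than by the drives, here is a rough comparison of drive-side link bandwidth against a single uplink. The x16 uplink width is a hypothetical choice for illustration. The PCIe 3.0 figures (8 GT/s per lane, 128b/130b encoding) are standard, but the results ignore packet and protocol overhead, and each drive's NAND may not saturate its x4 link.

```python
GEN3_GT_PER_S = 8.0        # PCIe 3.0 signalling rate per lane, GT/s
GEN3_ENCODING = 128 / 130  # 128b/130b line encoding efficiency


def gen3_gbytes_per_s(lanes: int) -> float:
    """PCIe 3.0 link bandwidth in GB/s per direction, before protocol overhead."""
    return lanes * GEN3_GT_PER_S * GEN3_ENCODING / 8


if __name__ == "__main__":
    drives = 64
    drive_side = drives * gen3_gbytes_per_s(4)  # every drive on its own x4 link
    uplink = gen3_gbytes_per_s(16)              # hypothetical x16 uplink to one server
    print(f"drive side: {drive_side:.1f} GB/s, x16 uplink: {uplink:.2f} GB/s")
    print(f"uplink is the bottleneck by ~{drive_side / uplink:.0f}x")
```

Under these assumptions, the drive links add up to about 252 GB/s while an x16 uplink carries about 15.75 GB/s, a 16:1 gap, so uplink width and count determine what a shelf can actually deliver.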

Of course, there is one major obstacle: the NAND shortage. We are still hearing of SSD allocation situations due to the global NAND shortage, and some very large, well-known vendors are struggling to keep up with demand at given prices. For these 1PB 1U systems to make sense, achieving this density needs to cost less than simply adding more rack space.

Final Words

Even at $0.25/GB, we would expect 1PB per U of storage using M.3 to cost more than $250,000. Still, it is clearly the direction the industry is heading. While not all applications need 1PB per U, the storage industry is finding ways to squeeze more density into each server. By comparison, 4U 102-drive (and larger) hard drive chassis, which can weigh upwards of 300lbs, are still less than a third as dense as the first iteration of the M.3 form factor will allow.
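Both numbers in the paragraph above can be checked with a little arithmetic. This sketch computes the raw drive cost at the hypothetical $0.25/GB price using decimal units (1TB = 1000GB). For the density comparison, it does not assume a hard drive capacity. Instead, it works backward to the largest per-drive capacity at which a 4U, 102-drive chassis stays at or below one third of the M.3 shelf's density.

```python
PRICE_PER_GB = 0.25  # $/GB, the hypothetical price used in the text
SHELF_TB = 64 * 16   # 64 x 16TB M.3 drives = 1024TB in a 1U shelf


def raw_flash_cost(tb: float, price_per_gb: float = PRICE_PER_GB) -> float:
    """Drive cost only (no chassis, switches or servers), with 1TB = 1000GB."""
    return tb * 1000 * price_per_gb


def max_hdd_tb_for_ratio(ratio: float = 3.0, drives: int = 102, rack_units: int = 4) -> float:
    """Largest per-HDD capacity (TB) keeping the HDD chassis at most 1/ratio as dense per U."""
    m3_tb_per_u = SHELF_TB  # the M.3 shelf occupies 1U
    hdds_per_u = drives / rack_units
    return m3_tb_per_u / ratio / hdds_per_u


if __name__ == "__main__":
    print(f"1PB at ${PRICE_PER_GB}/GB: ${raw_flash_cost(1000):,.0f}")
    print(f"1024TB shelf: ${raw_flash_cost(SHELF_TB):,.0f}")
    print(f"'less than a third' holds below ~{max_hdd_tb_for_ratio():.1f}TB per HDD")
```

At $0.25/GB, the drives alone come to $250,000 for an even 1PB, or $256,000 for a full 1024TB shelf. The "less than a third as dense" comparison holds as long as each hard drive in the 102-bay chassis stores less than about 13.4TB.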

There are not many details about the M.3 form factor yet, but the new 30.5mm-wide SSD form factor may be coming to a server near you.

Patrick,

Does this use NVMe protocol?

Yes. It can also be dual ported.

Very rough around the edges. For very large deployments it can work, but for company servers, “hot swap bays with blinking LEDs” are still much more convenient and serviceable.

Aside from M.3, can we also get a better SATA link so that the 2.5 inch form factor can last another decade?

This is weird. I don’t quite get why they needed to make ‘M.3’. The M.2 spec has provisions for a 30 x 110mm package, so it should have been fine to just call it M.2 30110 and be done. It seems like some marketing people wanted to differentiate and call it M.3 by changing it to 30.5mm or something like that. I guess that rubbed me the wrong way.

Friedrich, I can see it being important once end users see this huge SSD and try to stick it in their laptop. Labeling it M.3 signals “not for laptops”… plus the marketing hype always helps.

Two things that were not considered, or only indirectly mentioned: first, support contracts. Companies like to charge per device plus a support contract level, depending on how your contract with them is set up. In cases like that it’s quite beneficial to have 1 device vs 3, not to mention the rack savings. Which leads into the second part: EHS would much rather you have a single 1U box that’s under 50lbs than three 100+ lb devices, as it allows for one-person lifting.

If they found a way to make a clamshell connector for M.3 drives that let the cage around the drive lock/unlock it before removal for hot swapping, that would make M.3 very hard to resist afterwards.

Those mounting holes at the corners of the “M.3” PCB are for mounting to a metal cage/case/slide to aid hot-swap use. There is also a half-circle cutout in the middle of the end to support M.2-style single-screw mounting.

I think they couldn’t call these SSDs M.2 30110-D5-M, as Key-M does not provide a 12V supply. Then again, the article says it also supports M.2 usage. Does it work from a 3.3V supply in that case?

Also, since many manufacturers seem to use those same corner mounting holes, and AFAIK M.2 30110 doesn’t standardize them, that might have given birth to the M.3 name.

Also, the M.2 socket doesn’t (officially) support straight slide-in insertion, which is more or less a requirement for hot-swap bays. The M.2 connector also has a very limited life of up to 60 mating cycles. These two issues require a new socket (and name).

So does anyone know if these SSDs will really work in an M.2 Key-M socket with a 3.3V supply?

Hi,

As far as I know, it is really not just M.2 with other dimensions. NGSFF should use N/C pins of the M.2 connector, and the socket also supports 12V.

You can check this link: https://www.flashmemorysummit.com/English/Collaterals/Proceedings/2017/20170810_FC32_Wang.pdf

Has the M.3 standard been ratified yet? Is any server or storage vendor offering it yet? What is the capacity of M.2 vs M.3 vs U.?

I meant U.2 – bad keyboard